Science & Technology Funding Uncertainty Impacts Regular People, Too

The Federation of American Scientists urges the U.S. government to release holds on congressionally appropriated funding for scientific research, education, and critical activities at the earliest possible time. This includes removing new administrative processes that add layers of review and approval for federal funding opportunities, consistent with the Administration’s stated commitment to reduce administrative overhead in science and technology.

Funding disruptions and delays can create additional uncertainty for scientific programs, forcing individuals to change plans, cancel work, or seek other opportunities. According to a recent survey published by STAT:

- 23% of laboratories funded by NIH have laid off staff and rescinded offers made to graduate students as a result of funding changes, threatening the viability of the country’s talent pipeline (especially affecting women scientists, scientists from marginalized communities, and early-career researchers);

- 68% reported their institutions increased administrative processes for spending and hiring as a result of federal policy changes, despite the government’s commitment to relieve administrative burden;

- 43% of researchers canceled planned research;

- 54% of researchers reduced the scope of their research, diminishing the impact and efficiency of their work;

- 56% redirected funding from other projects; and

- 53% of researchers advised their students to consider opportunities outside the United States.

When the economic dynamics of any industry change, those with the talent and ability to change direction are often the first to do so. We are already seeing increasing competition for international funding opportunities from American scientists. The prestigious European Research Council, which awards grants on the condition that researchers establish their teams in Europe, has seen a fourfold increase in applications from Americans for the program’s Advanced Grants. Last year, a survey by Nature suggested that 75% of American researchers were considering moving overseas.

When funding delays and lengthy approval processes change the viability of proposals, they also waste the government’s time and money, forcing program officers to reevaluate proposals, often under new direction, once projects under consideration for funding are no longer viable.

What may seem like an esoteric consideration affecting only a small fraction of the population is, in fact, a much larger concern: historically, funding for science and technology has had significant ripple effects that boost the American economy and offer real solutions that improve people’s daily lives. If National Institutes of Health spending is limited to the level provided in the Fiscal Year 2026 President’s Budget Request, the U.S. economy is projected to lose approximately 46 billion dollars and over 200,000 jobs (not including NIH jobs already terminated). Cuts to research-performing agencies can lead to losses of capacity, reduced ability to develop and deploy critical and emerging technologies, and diminished capability to maintain datasets that are essential for the functioning of the American economy, as noted in FAS’s ongoing “Dearly Departed Datasets” project.

“Recapturing the urgency that propelled us so far in the last century”, as the President’s letter to OSTP Director Michael Kratsios directs, requires approaching our changing competitive landscape, including federal support for science and technology, with the same level of urgency.

At FAS, we believe that collaboration produces the strongest policy solutions in pursuit of a government that delivers real results for the American people. This includes engaging directly with key stakeholders across the S&T ecosystem who are deeply connected to, impacted by, or implicated in federal policymaking decisions around S&T funding and infrastructure. We also engage directly with members of the public who may have ideas or input about the impact of changes to the structure and funding level of the federal S&T ecosystem, but who may not be in a position to directly influence it.

If you are interested in this topic, want to offer your perspective, or have ideas for policy solutions to this challenge, we invite you to connect with our team by responding to our open call.

Increasing the Value of Federal Investigator-Initiated Research through Agency Impact Goals

American investment in science is incredibly productive. Yet science is losing the public’s trust, increasingly seen as very expensive and misaligned with American priorities. To increase the real and perceived benefit of research funding, funding agencies should develop challenge goals for their extramural research programs focused on the impact portion of their mission. For example, the NIH could adopt one goal per institute or center “to enhance health, lengthen life, and reduce illness and disability”; NSF could adopt one goal per directorate “to advance the national health, prosperity and welfare; [or] to secure the national defense”. Asking research agencies to consider person-level or economic impacts in advance helps the American people see the value of federal research funding, and encourages funders to approach each problem holistically, from basic to applied research. For almost every problem, there are different scientific questions that will yield benefits over multiple time scales and draw on insights from multiple disciplines.

This plan has three elements:

- Focus some agency funding on measurable mission impacts

- Fund multiple timescales as part of a single plan

- Institutionalize the impact funding process across science funders

For example, if NIH wanted to reduce the burden of Major Depression, it could invest on a shorter time frame to learn how to better deliver evidence-based care to everyone who needs it. At the same time, it could invest in midrange work to develop and test new models and medications, and in the decades-long work required to understand how the exposome influences mood disorders. A simple way to implement this approach would be to build on the processes developed under the Government Performance and Results Act (GPRA), which already requires goal setting and reporting, though proposals could be worked into any strategic planning process through a variety of administrative mechanisms.

Challenge and Opportunity

In 1945, Vannevar Bush called science the ‘endless frontier’, and argued that funding scientific research is fundamental to the obligations of American government. He wrote “without scientific progress no amount of achievement in other directions can insure our health, prosperity, and security as a nation in the modern world”. The legacy of his report is that health, prosperity, and security feature prominently in the missions of most federal research agencies (see Table 1). However, in this century we have begun to drift from his focus on the impacts of science. We face the strange situation of a research enterprise that is both incredibly productive and losing the public’s trust, viewed as out of touch or misaligned with American priorities. This memo proposes a simple solution to this problem for federal funding agencies, like NIH and NSF, that largely focus on extramural investigator-initiated research. In these research programs, the funding agency signals interest in specific topics, and teams of scientists submit research plans addressing those topics. The agency then funds a subset of those plans with input from external scientific reviewers.

This funding approach is incredibly productive. For example, NIH funds most of the pipeline for the emerging bioeconomy, which accounts for 5.1% of our GDP. From 2010 to 2016, every one of the 210 new molecular entities approved by the FDA had some NIH funding. And yet there appears to be a disconnect between our funding strategy for investigator-initiated research and the public-interest focus of the Endless Frontier, as operationalized in our federal science agencies’ missions.

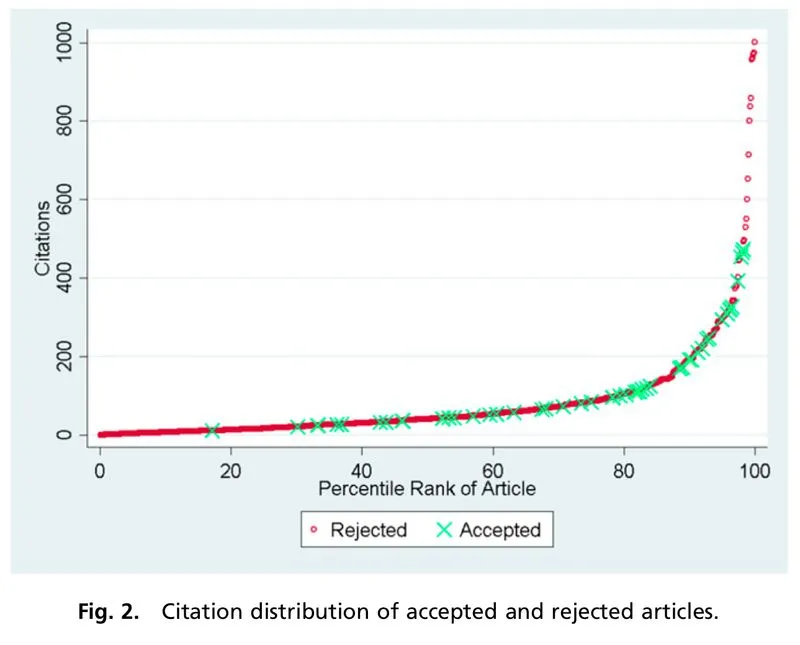

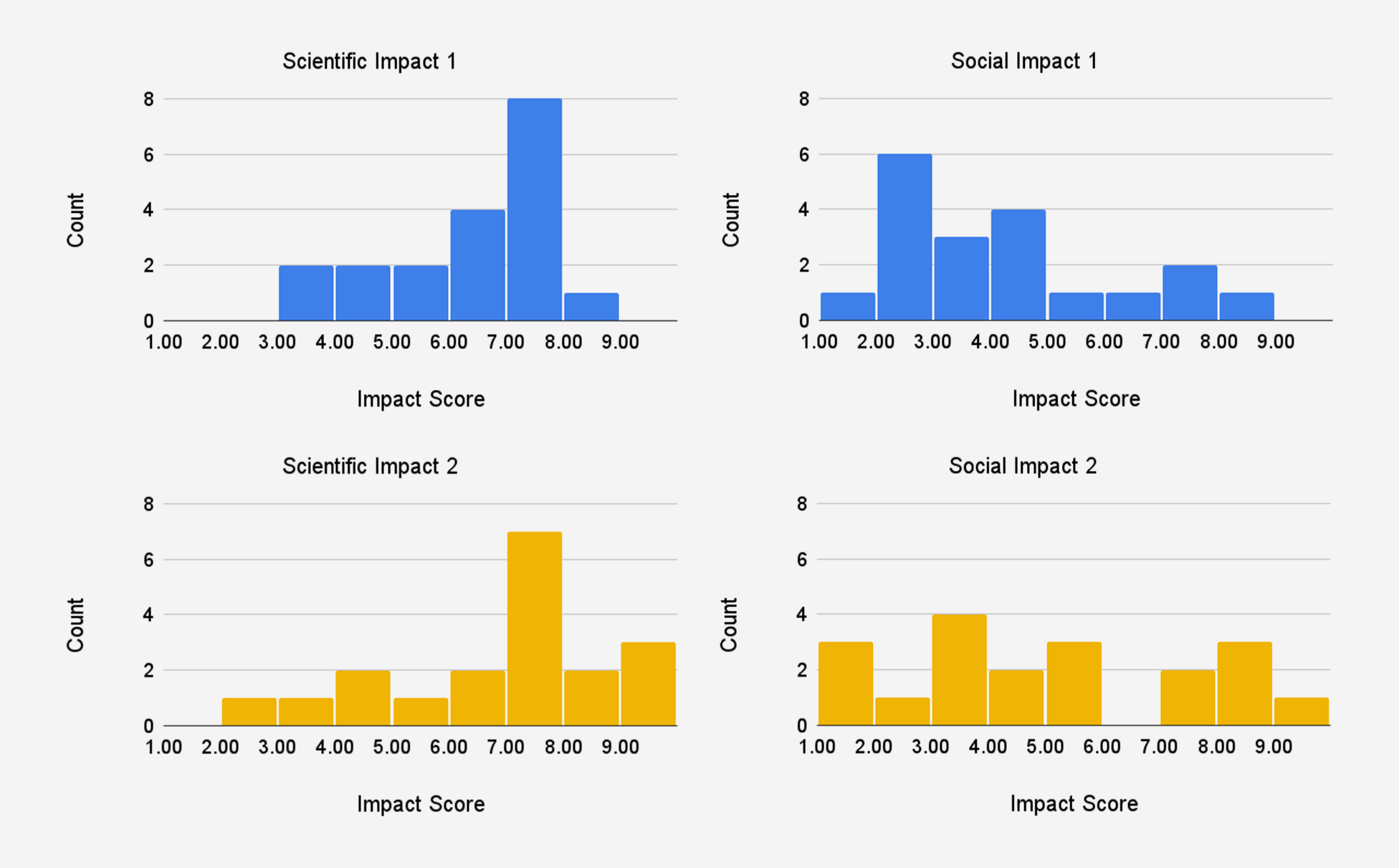

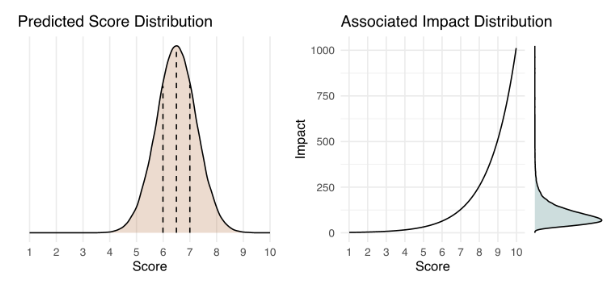

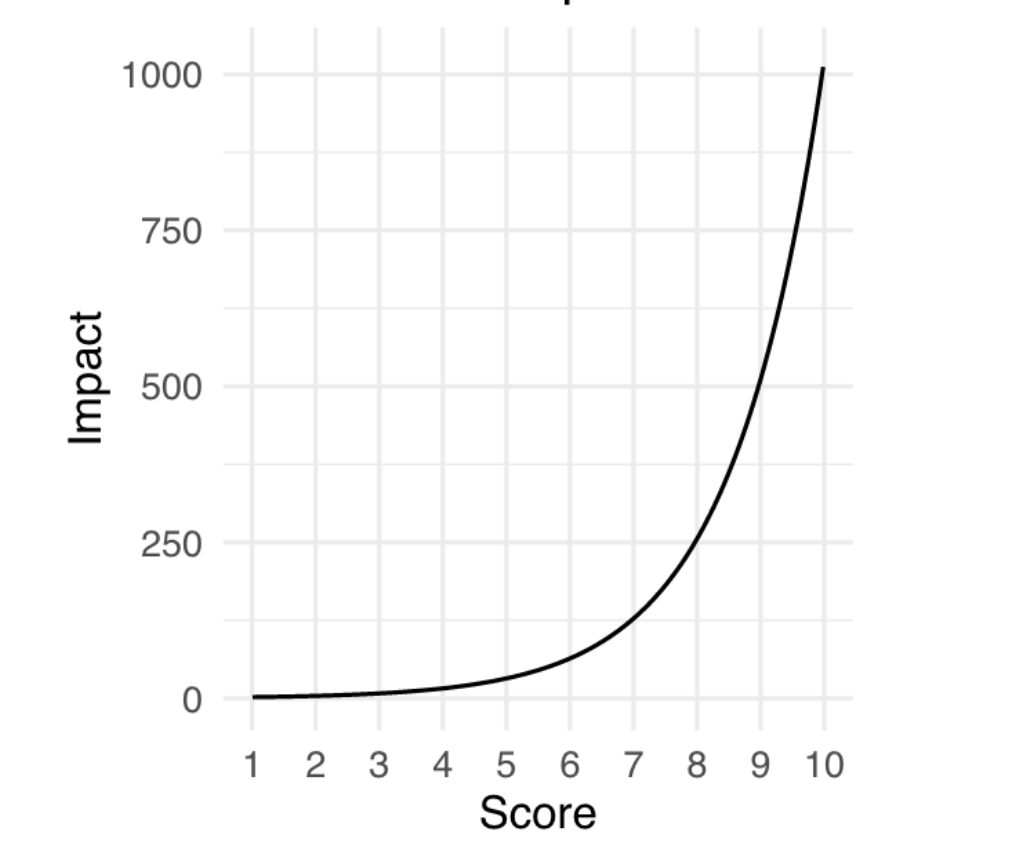

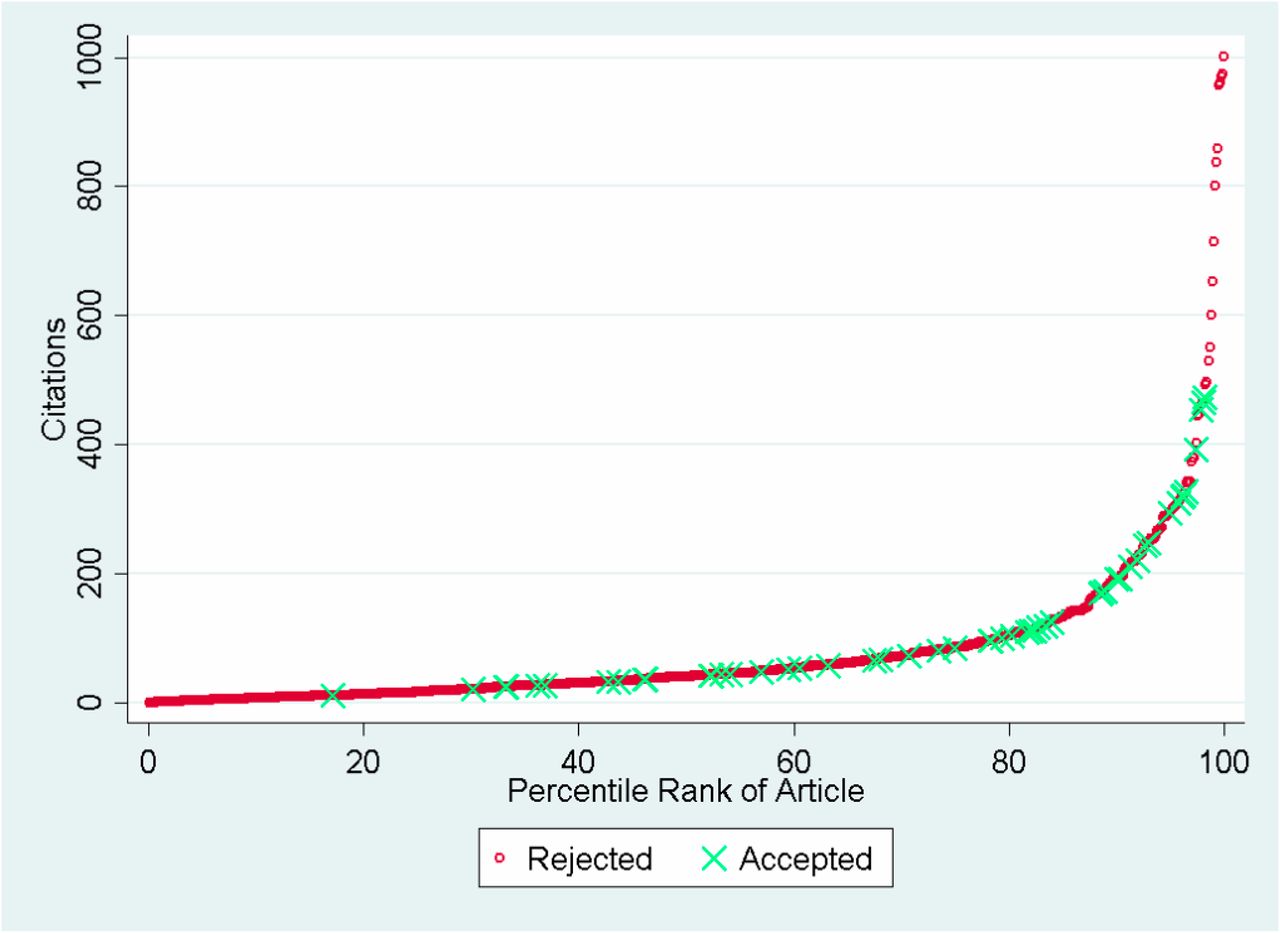

A fundamental driver of this disconnect might be a slight misalignment between the incentives of academic scientists, who are rewarded for novelty and scientific impact, and the broader public interest. Our federal agencies are highly attuned to scientific leaders, and place equal or even greater weight on innovation (novelty plus scientific impact) than on real-world impact. For example, NSF review criteria place equal weight on intellectual merit (‘advance knowledge’) and broader impacts (‘benefit society and contribute to the achievement of specific, desired societal outcomes’). NIH’s impact score for new applications is an ‘assessment of the likelihood for the project to exert a sustained, powerful influence on the research field(s) involved’ [emphasis mine], which reflects only part of the agency’s mission. The practical implications of this sustained focus away from the impact portion of agencies’ missions become apparent in Figure 1, which shows tremendous spending on health research unrelated to a key public-interest measure like lifespan, especially when compared with other nations’ health research spending.

Perhaps the realization that federal research investment is not strongly linked to agencies’ mission impact is one reason why American science has been slowly losing public trust over time. Among people in 68 nations rating scientists, Americans ranked 7th highest in their rating of scientists’ integrity, but only 16th highest in their estimation of scientists acting in the public interest. This is despite the fact that American investment in science is many times higher than that of the 15 nations whose people rated scientists more highly on public interest. A more accurate description of our 21st-century federal science enterprise might be the ‘timeless frontier’, where our science agencies pursue cycles of funding year in and year out, with scientific change as their functional goal and projects funded as their primary measure of success. Advancing the economy, health, national defense, and other public goods becomes almost incidental to these process measures.

We can do better. In 2024, the National Academy of Medicine called out the lack of high-level coordination in research funding. In 2025, the administration has made drastic cuts and dramatic changes to the goals and processes of federal research funding, and the ultimate outcome of these changes is unclear. In the face of this change, Drs. Victor Dzau and Keith Yamamoto, staunch champions of our federal science programs, are calling for “a coherent strategy […] to sustain and coordinate the unrivaled strengths of government-funded research and ensure that its benefits reach all Americans”.

We can build on the incredible success of the federal science enterprise, inarguably the most productive science enterprise in all history. The primary source of American scientific strength is scale: American funding agencies are usually the largest funders in their space. I will highlight some challenges of the current approach and suggest improvements that would make it even more impactful and more closely aligned with the public interest.

The primary federal funding strategy is broad diversification, where our agencies fund every high-scoring application in a topic space (see FAQs). Further, federal science agencies pay little attention to when they expect to see a fundamental impact arising from their research portfolio. For example, a centrally directed program like the Human Genome Project can lead to breakthrough treatments decades later, but in the meantime, other research that generates improvements on faster timescales, such as developing conventional drug treatments or optimizing the quality and delivery of existing treatments, could have been coordinated alongside it.

And yet, the breadth and complexity of broad diversification make it easy to cherry-pick successes. This is a strategic issue, and it is bigger than the project selection issues highlighted in the earlier discussion of review criteria. When research funding agencies make their pitch for federal dollars, they highlight a handful of successes out of tens of thousands of projects funded over many years. They ignore failures, when the investments were made, and the time to benefit. With the goals and metrics we have in place, it is simply too hard to summarize progress in any other way.

Overly diversified science funding supports both good Congressional testimony and bad strategy. If your problem happens to fall into a unicorn space of success, there is a lot to celebrate. But most problems do not, and we experience inconsistent returns. We need to define the success of research funding more precisely, in advance, and in ways that more obviously align with the public interest.

Plan of Action

If we tweak our funding strategy to focus on societal impacts, we can move to a more impactful science enterprise and help regain public support for science funding. We can focus federal research funding both on near-term improvements to urgent, difficult problems and on fundamental discoveries that may take decades to realize. My solution and implementation actions for agencies, and potentially Congress, are described below.

Recommendation 1. Focus some agency funding on measurable mission impacts.

We should empower our science agencies to step away from broad diversification as the predominant funding strategy, and pursue measurable mission impacts with specific time horizons. It can be a challenge for funders to step away from process measures (e.g. projects or consortia funded) and focus on actual changes in mission impact.

Ideally, these specific impacts would be broken into measurable goals selected through a participatory process that includes scientific experts, people with lived experience of the issue, and potential partner agencies. I recommend that each agency division (e.g., an NSF Directorate) allocate a percentage of its budget to these mission impact strategies. Further, to avoid strategic errors that can arise from the overwhelming power of federal funding to shape the direction of scientific fields, these high-level funding plans should be as impact-focused as possible, and should avoid steering funding toward one scientific theory or discipline over another.

Recommendation 2. Fund multiple timescales as part of a single plan.

Research funders need to balance their investment portfolios not only across problem areas, but over time. Complex challenges will often require funding different aspects of the solution on different timelines in parallel as part of a larger plan. Balancing time as well as spending allows for a more robust portfolio of funding that draws from a broader array of scientific disciplines and institutions.

Note that this approach means starting lines of research that may not lead to ultimate impact for decades. This might seem strange given our relatively short budget cycles, but it is very common in science: projects like the Human Genome Project, the BRAIN Initiative, and the National Nanotechnology Initiative have all exceeded a single budget cycle and will take years to realize their full impact. These kinds of efforts require milestones to ensure they stay on track over time.

Recommendation 3. Institutionalize the impact funding process across science funders.

Our research enterprise has become oriented around investigator-initiated, project-based awards. Alternative funding strategies, such as the DARPA model, are viewed as anomalies that require completely different governance and procedures. These differences are unnecessary. A consistent focus on impacts and strategy in funding across agencies will help the scientific community become more aware of the time to benefit of research, help underscore the value of research investment to the American public, and help research agencies collaborate among themselves and with their partner agencies (e.g., NIH with CMS, FDA, etc.).

In short, institutionalizing this process can lead to greater accountability and recognition for our science enterprise. This structure allows our funders to report progress to the public on specific goals, on predetermined and preannounced timelines, rather than having to comb through tens of thousands of independent funding decisions and competing strategies to find case studies to highlight. In this way, expected and unexpected scientific results, and even operational challenges, can be discussed within an impact framework that clearly ties to the agency mission and the public interest.

Example of Planning using an Impact Focus

Here is an example of a mission impact goal, Reducing the Burden of Major Depressive Disorder, that could be put forth by the National Institute of Mental Health (NIMH), and of the process to develop it.

Commence Inclusive Planning: NIMH brings together experts from academia, clinical care, industry, people impacted by depression, and FDA and CMS to develop measures, timelines and funding strategies.

Develop Specific Impact Measures: These should reflect the impact portion of the agency’s mission. For example, NIH’s mission impact to “enhance health, lengthen life, and reduce illness and disability” requires measuring impact on human beings. Example measurement targets could include:

- Reduced incidence of Major Depressive Disorder

- Increased productivity (e.g. days worked) of people living with Major Depressive Disorder

- Reduced suicide rates

Fund Multiple Time Scales: Designate time scales in parallel as part of a comprehensive strategy. These different plans would involve different disciplines, funding mechanisms, and private sector and government partners. Examples of plans working at different timescales to support the same goal and measures could include:

- 10-year plan: Increase utilization of evidence-based care

- 15-year plan: Develop and implement new treatments

- 30-year plan: Determine how the exposome causes and prevents depression, and how it can be changed

It is likely that NIMH has already obligated funds to projects that support one of these plans, though those projects may need additional work to ensure that they tie directly to the specific plan measures.

Implementation Strategies for Impact Goals

Each federal funding agency could allocate a percentage of its budget to these and other impact goals. The exact amount would depend on the current funding approach of each agency. Because this proposal calls for a more direct focus on the agency mission, not a change in mission, it is likely that a significant percentage of each agency’s current budget already supports an impact goal on one or more of its time scales.

For an agency heavily weighted towards project-based funding of small investigator teams, like NIH, I would recommend starting with a goal of setting aside 20% of its budget for impact spending and considering increases over time. Other agencies with different funding models may want to start in a different place. Further, I would recommend different goals and targeted funds for each major administrative unit, such as an institute or directorate.

All federal funders already engage in some form of strategic and budget planning, and most also have formal structures for engaging stakeholders in those planning decisions. Therefore, each agency already has sufficient authorities and structures to implement this proposal. However, these impact goals will likely require collaboration across agencies, which could be difficult for agencies to conduct efficiently by themselves.

Additional support to make this change could come from Congressional report language as part of the budget process, through interagency leadership from the White House Office of Science and Technology Policy, or through the Office of Management and Budget. For example, the Government Performance and Results Act (GPRA) already requires agency goal setting and reporting, and supports cross-agency priority goals. That planning process could easily be adapted to this more specific impact focus for research funding agencies, and reporting on those goals could be incorporated into routine reporting of agency activities.

Conclusion

We are living through a massive disruption in federal research funding, and as of the fall of 2025, it is not clear what future federal research funding will look like. We have an opportunity to focus the incredibly productive federal research enterprise around the central reasons why Americans invest in it. We can meet Bush’s challenge of the Endless Frontier simply by clearly defining the benefits the American people want to see, and explicitly setting plans, timing and money to make that happen.

We can call our shots and focus our science funding around impacts, not spending. And we can set our goals with enough emotional resonance and depth to capture both the interests of the average American and the needs of scientists from different disciplines and types of institutions. We already have the legal authorities in place to adopt these techniques; we just need the will.

Inadvertently, the huge scale of federal funding could lead to a monopsonistic effect. In other words, NIH’s buying power is so large that if NIH does not fund a specific type of research, people may stop studying it. This risk is highest within a narrow scientific field if there is a bias in grant selection. A well-publicized example is NIH’s strong funding preference for one theory of Alzheimer’s Disease at the expense of competing theories, which in turn shaped careers and publication patterns in ways that reinforced that bias.

Creating A Vision and Setting Course for the Science and Technology Ecosystem of 2050

The science and technology (S&T) ecosystem is a complex network that enables innovation, scientific research, and technology development. The researchers, technologists, investors, educators, policy makers, and businesses that make up this ecosystem have changed and evolved over centuries. Now, we find ourselves at an inflection point. We are experiencing long-standing crises such as climate change and inequities in healthcare and education, and new ones are emerging, including the defunding of federal and private sector efforts to foster diverse, inclusive, and accessible communities, learning, and work environments.

As a Senior Fellow at the Federation of American Scientists (FAS), I am focused on setting a vision for the future of the S&T ecosystem. This is not about making predictions; rather, it is about articulating and moving toward our collective preferred future. It includes being clear about how discoveries from the S&T ecosystem can be quickly and equitably distributed, and why the ecosystem matters.

The future I’m focused on isn’t next year, or the next presidential election – or even the one after that; many others are already having those discussions. I have my sights set on the year 2050, a future so far out that none of us can predict or forecast its details with much confidence.

This project presents an opportunity to bring together stakeholders across different backgrounds to work towards a common future state of the S&T ecosystem.

To better understand what might drive the way we live, learn, and work in 2050, I’m asking the community to share their expertise and thoughts about how key factors like research and development infrastructure and automation will shape the trajectory of the ecosystem. Specifically, we are looking at the role of automation, including robotics, computing, and artificial intelligence, in shaping how we live, learn, and work. We are examining both the transformative potential and the ethical, social, and economic implications of increasingly automated systems. We are also looking at the future of research and development infrastructure, which includes the physical and digital systems that support innovation: state-of-the-art facilities, specialized equipment, a skilled workforce, and data that enables discovery and collaboration.

To date, we’ve talked to dozens of experts in workforce development, national security, R&D facilities, forecasting, AI policy, automation, climate policy, and S&T policy to better understand what their hopes are, and what it might take to realize our preferred future. They have shared perspectives on what excites and worries them, trends they are watching, and thoughts on why science and technology matter to the U.S. My work is just beginning, and I want your help.

So, I invite you to share your vision for science and technology in 2050 through our survey.

The information shared will be used to develop a report with answers to questions like:

- What’s the closest we can get to a shared “north star” to guide the S&T ecosystem?

- What are the best mechanisms to unite S&T ecosystem stakeholders towards that “north star”?

- What is a potential roadmap for the policy, education, and workforce strategies that will move us forward together?

We know that the S&T epicenter moves around the world as empires, dynasties, and governments rise and fall. The United States has enjoyed the privilege of being the engine of this global ecosystem, fueled by public and private investments and directed by aspirational visions to address our nation’s pressing issues. As a nation, we’ve always challenged ourselves to aspire to greater heights. We must re-commit to this ambition in the face of global competition with clarity, confidence, and speed.

As we stand at this inflection point, it is imperative we ask ourselves – as scientists, and as a nation – what is the purpose of the S&T ecosystem today? Who, or what, should benefit from the risks, capital, and effort poured into this work? Whether you are deeply steeped in the science and technology community, or a concerned citizen who recognizes how your life can be improved by ongoing innovation, please share your thoughts by August 31.

In addition to the survey, we’ll be exploring these questions with subject matter experts, and there will be other ways to engage – to learn more, reach out to me at QBrown@fas.org.

It’s become acutely clear to me that the ecosystem we live in will be shaped by those who speak up, whether it be few or many, and I welcome you to make your voice heard.

Bringing Transparency to Federal R&D Infrastructure Costs

There is an urgent need to manage the escalating costs of federal R&D infrastructure and the increasing risk that failing facilities pose to the scientific missions of the federal research enterprise. Many of the laboratories and research support facilities operating under the federal research umbrella are near or beyond their life expectancy, creating significant safety hazards for federal workers and local communities. Unfortunately, the nature of the federal budget process forces agencies into a position where the actual cost of operations is not transparent in agency budget requests to OMB, and it becomes further obscured by the time those requests reach appropriators. This can lead to appropriations disasters, including an approximately 60% cut to National Institute of Standards and Technology (NIST) facilities in 2024 after the agency’s challenges became newsworthy. Providing both Congress and OMB with a complete accounting of the actual costs of agency facilities may break the gamification of budget requests and help the government prioritize infrastructure investments.

Challenge and Opportunity

Recent reports by the National Research Council and the National Science and Technology Council, including the congressionally mandated Quadrennial Science and Technology Review, have highlighted the dire state of federal facilities. Maintenance backlogs have ballooned in recent years, forcing some agencies to shut down research activities in strategic R&D domains, including Antarctic research and standards development. At NIST, outages caused by failing steam pipes, failing electrical systems, and black mold have reduced research productivity by 10 to 40 percent. NASA and NIST have both reported that their maintenance backlogs have grown to exceed 3 billion dollars. The Department of Defense forecasts that bringing its buildings up to modern standards would cost approximately 7 billion dollars, “putting the military at risk of losing its technological superiority.” The shutdown of many Antarctic science operations and the collapse of the Arecibo Observatory stand in stark contrast with the People’s Republic of China opening rival, more capable facilities in both research domains. In the late 2010s, Senate staffers were often forced to call national laboratories directly to ask what it would actually cost for the country to fully fund a particular large science activity.

This memo does not suggest that the government should continue to fund old or outdated facilities, only that there is a significant opportunity for appropriators to understand the actual cost of our legacy research and development ecosystem, much of which was initially built up during the Cold War. Agencies should be able to give Congress a straight answer about what it would cost to operate their inventory of facilities. Likewise, Congress should be able to decide which facilities should be kept open, where placing a facility on life support is acceptable, and which facilities should be shut down. The cost of maintaining facilities should also be transparent to the Office of Management and Budget so that examiners can help the President make prudent decisions about the direction of the federal budget.

The National Science and Technology Council’s mandated research and development infrastructure report to Congress is a poor vehicle for delivering this information. As coauthors of the 2024 research infrastructure report, we can attest to the pressure within the White House to provide a positive narrative about the current state of play, as well as OMB’s reluctance to suggest, outside the budget process, that additional funding is needed to maintain our inventory of facilities. It would be much easier for agencies, which already have a sense of what it costs to maintain their operations, to provide that information directly to appropriators, rather than through a sanitized White House report to an authorizing committee that may or may not have jurisdiction over all the agencies covered in the report. That report also assumes there is an Assistant Director for Research Infrastructure serving in OSTP to complete the America COMPETES mandate. Current government employees suggest that the Trump Administration intends to discontinue the Research and Development Infrastructure Subcommittee.

Agencies may be concerned that providing such cost transparency to Congress could result in greater micromanagement over which facilities receive which investments. Given the relevance of these facilities to their localities (including both economic benefits and environmental and safety concerns) and the role that legacy facilities can play in training new generations of scientists, this is a matter that deserves public debate. In our experience, the wider range of factors that appropriations staff consider is relevant to investment decisions. Further, accountability for macro-level budget decisions should ultimately fall on the decisionmakers who choose whether or not to prioritize investments in both our scientific leadership and the health and safety of the federal workforce and nearby communities. Currently, facilities managers forced to make agonizing choices in extremely resource-constrained environments bear most of that burden.

Plan of Action

Recommendation 1: Appropriations committees should require from agencies annual reports on the actual cost of completed facilities modernization, operations, and maintenance, including utility distribution systems.

Transparency is the only way that Congress and OMB can get a grip on the actual cost of running our legacy research infrastructure. Agencies should report the actual cost of facilities operations and maintenance to the relevant appropriators every year. These reports should also account for obligations to international facilities (such as ITER) and for facilities and collections that are paid for by grants (such as scientific collections that support the bioeconomy). Transparent accounting of facilities costs, set against what an administration chooses to prioritize in the annual President’s Budget Request, may help foster meaningful dialogue between agencies, OMB examiners, and appropriations staff.

The reports from agencies should describe the work done in each building and impact of disruption. Using the NIST as an example, the Radiation Physics Building (still without the funding to complete its renovation) is crucial to national security and the medical community. If it were to go down (or away), every medical device in the United States that uses radiation would be decertified within 6 months, creating a significant single point of failure that cannot be quickly mitigated. The identification of such functions may also enable identification of duplicate efforts across agencies.

The costs of utility systems should be included because failures of supporting infrastructure can have broad impacts on facility operations. At NIST’s headquarters campus in Maryland, the entire underground utility distribution system is beyond its design lifespan and suffers constant problems. The Central Utility Plant (CUP), which produces steam and chilled water for the campus, is in a similar state. Forensic testing of failed pipes and components indicates that the CUP’s steam distribution system will reach the complete end of its life in less than a decade, and potentially as soon as 2030. If work doesn’t start within the next year (by early 2026), the system is at serious risk of going down. That would mean a complete loss of heating and temperature control on the campus, which is particularly concerning given how sensitive modern experiments and calibrations are to changes in temperature and humidity. Less than a decade ago, NASA was forced to delay the launch of a satellite after NIST’s steam system was down for a few weeks and calibrations required for the satellite couldn’t be completed.

Given the varying business models for infrastructure across the federal government, standardizing accounting and costs may be too great a lift, particularly when comparing agencies that own and operate their own facilities (government owned, government operated, or GOGOs) with federally funded research and development centers (FFRDCs) operated by companies and universities (government owned, contractor operated, or GOCOs).

These reports should give priority to modernization efforts, which, according to former federal facilities managers, should account for 80 to 90 percent of facility revitalization while also delivering new capabilities that help our national labs maintain (and often re-establish) their world-leading status. The reports would also serve as a potential facilities inventory, allowing appropriators to de-conflict investments as necessary.

It would be far easier for agencies to simply provide an itemized list of each of their facilities, current maintenance backlog, and projected costs for the next fiscal year to both Congress and OMB at the time of the annual budget submission to OMB. This should include the total cost of operating facilities, projected maintenance costs, and any costs needed to bring a federal facility up to relevant safety and environmental codes (many are not). In order to foster public trust, these reports should include an assessment of systems that are particularly at risk of failure, the risk to the agency’s operations, and their impact on surrounding communities, federal workers, and organizations that use those laboratories. Fatalities and incidents that affect local communities, particularly in laboratories intended to improve public safety, are not an acceptable cost of doing business. These reports should be made public (except for those details necessary to preserve classified activities).
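To make the itemized list concrete, here is a minimal Python sketch of what one record in such a report might hold and how totals and at-risk flags could be rolled up. The field names, the units (millions of dollars), and the two example buildings are hypothetical illustrations, not an existing agency reporting format.

```python
from dataclasses import dataclass


@dataclass
class FacilityReport:
    """One line item in a hypothetical annual facilities report (costs in $M)."""
    name: str
    work_description: str          # work done in the building
    operating_cost: float          # total annual cost of operating the facility
    maintenance_backlog: float     # current deferred-maintenance backlog
    projected_maintenance: float   # projected maintenance for the next fiscal year
    code_compliance_cost: float    # cost to meet safety and environmental codes
    at_risk_of_failure: bool       # flagged by the risk assessment


def summarize(reports):
    """Roll up an agency's itemized list into totals plus at-risk facilities."""
    return {
        "total_backlog": sum(r.maintenance_backlog for r in reports),
        "next_year_cost": sum(
            r.operating_cost + r.projected_maintenance + r.code_compliance_cost
            for r in reports
        ),
        "at_risk": [r.name for r in reports if r.at_risk_of_failure],
    }


# Hypothetical example buildings and figures, for illustration only.
reports = [
    FacilityReport("Building A", "calibration laboratories", 10.0, 40.0, 5.0, 2.0, True),
    FacilityReport("Building B", "administrative offices", 3.0, 5.0, 1.0, 0.0, False),
]
summary = summarize(reports)
print(summary)
```

Keeping the work description next to the cost figures is what would let appropriators see not just what a building costs but what would stop if it failed.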

Recommendation 2: Congress should revisit the idea of a special building fund from the General Services Administration (GSA) from which agencies can draw loans for revitalization.

During the first Trump Administration, Congress considered establishing a special building fund at the GSA from which agencies could draw loans at very low interest (enough to cover the staff time of the GSA officials managing the program). This would allow agencies to address urgent or emergency needs that arise outside the regular appropriations cycle. The Government Accountability Office has already validated this approach for certain facilities, finding that “Access to full, upfront funding for large federal capital projects—whether acquisition, construction, or renovation—could save time and money.” Major international scientific organizations that operate large facilities, including CERN (the European Organization for Nuclear Research), have a similar ability to take out loans to pay for repairs, maintenance, or budget shortfalls, which helps them maintain financial stability and reduces the risk of escalating costs from deferred maintenance.

Up-front funding for major projects, enabled by access to GSA loans, can also reduce expenditures in the long run. In the current budget environment, it is not uncommon for the cost of major investments to double due to inflation and piecemeal execution. In 2010, NIST proposed a renovation of its facilities in Boulder with an expected cost of $76 million. The project, which is still not complete, is now estimated to cost more than $450 million because of a phased approach unsupported by appropriations. Productivity losses from delayed construction (or from waiting for appropriations) can compound for industries that depend on access to certain capabilities, harming American competitiveness, as described in the previous recommendation.
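The Boulder figures imply a steep compounding rate. The short Python sketch below computes the growth implied by the two estimates. It assumes “today” means 2025 (15 years after the 2010 proposal), and because the current estimate is “more than” $450 million, the result should be read as a lower bound.

```python
def implied_annual_growth(start_cost, end_cost, years):
    """Compound annual growth rate implied by two cost estimates."""
    return (end_cost / start_cost) ** (1 / years) - 1


# Figures from the text: $76M (2010 estimate) and $450M (current estimate).
# Assumption: the current estimate is dated 2025, i.e. 15 years after 2010.
ratio = 450 / 76
rate = implied_annual_growth(76, 450, 2025 - 2010)
print(f"{ratio:.1f}x overall, about {rate:.1%} per year")
```

Under that assumption the project cost has grown nearly six-fold, roughly 12.6 percent per year compounded, well above the kind of cost growth a fully funded, single-phase project would face.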

Conclusion

As the 2024 RDI Report points out, “Being a science superpower carries the burden of supporting and maintaining the advanced underlying infrastructure that supports the research and development enterprise.” Without a transparent accounting of costs, it is impossible for Congress to make prudent decisions about the future of that enterprise. Requiring agencies to provide complete information to both Congress and OMB at the beginning of each year’s budget process likely offers the best chance of addressing this challenge.

A National Institute for High-Reward Research

The policy discourse about high-risk, high-reward research has been too narrow. When that term is used, people are usually talking about DARPA-style moonshot initiatives with extremely ambitious goals. Given the overly conservative nature of most scientific funding, there’s a fair appetite (and deservedly so) for creating new agencies like ARPA-H, and other governmental and private analogues.

The “moonshot” definition, however, omits other types of high-risk, high-reward research that are just as important for the government to fund—perhaps even more so, because they are harder for anyone else to support or even to recognize in the first place.

Far too many scientific breakthroughs and even Nobel-winning discoveries had trouble getting funded at the outset. The main reason at the time was that the researcher’s idea seemed irrelevant or fanciful. For example, CRISPR was originally thought to be nothing more than a curiosity about bacterial defense mechanisms.

Perhaps ironically, the highest rewards in science often come from the unlikeliest places. Some of our “high reward” funding should therefore be focused on projects, fields, ideas, theories, etc. that are thought to be irrelevant, including ideas that have gotten turned down elsewhere because they are unlikely to “work.” The “risk” here isn’t necessarily technical risk, but the risk of being ignored.

Traditional funders are unlikely to create funding lines specifically for research that they themselves thought was irrelevant. Thus, we need a new agency that specializes in uncovering funding opportunities that were overlooked elsewhere. Judging from the history of scientific breakthroughs, the benefits could be quite substantial.

Challenge and Opportunity

There are far too many cases where brilliant scientists had trouble getting their ideas funded, or even faced significant opposition at the time. Here are just a few examples (there are many others):

- The team that discovered how to manufacture human insulin applied for an NIH grant for an early stage of their work. The rejection notice said that the project looked “extremely complex and time-consuming,” and “appears as an academic exercise.”

- Katalin Karikó’s early work on mRNA was a key contributor to multiple Covid vaccines and ultimately won the Nobel Prize. But she was repeatedly demoted at the University of Pennsylvania because she couldn’t get NIH funding.

- Carol Greider’s work on telomerase was rejected by an NIH panel on literally the same day that she won the Nobel Prize, on the grounds that she didn’t have enough preliminary data about telomerase.

- Francisco Mojica (who identified CRISPR while studying archaebacteria in the 1990s) has said, “When we didn’t have any idea about the role these systems played, we applied for financial support from the Spanish government for our research. The government received complaints about the application and, subsequently, I was unable to get any financial support for many years.”

- Peyton Rous’s early-20th-century studies on transplanting tumors between purebred chickens were ridiculed at the time, but his work won the Nobel Prize over 50 years later and provided the basis for other breakthroughs involving reverse transcription, retroviruses, oncogenes, and more.

One could fill an entire book with nothing but these kinds of stories.

Why do so many brilliant scientists struggle to get funding and support for their groundbreaking ideas? In many cases, it’s not because of any reason that a typical “high risk, high reward” research program would address. Instead, it’s because their research can be seen as irrelevant, too far removed from any practical application, or too contrary to whatever is currently trendy.

To make matters worse, the temptation for government funders is to opt for large-scale initiatives with a lofty goal like “curing cancer” or some goal that is equally ambitious but also equally unlikely to be accomplished by a top-down mandate. For example, the U.S. government announced a National Plan to Address Alzheimer’s Disease in 2012, and the original webpage promised to “prevent and effectively treat Alzheimer’s by 2025.” Billions have been spent over the past decade on this objective, but U.S. scientists are nowhere near preventing or treating Alzheimer’s yet. (Around October 2024, the webpage was updated and now aims to “address Alzheimer’s and related dementias through 2035.”)

The challenge is whether quirky, creative, seemingly irrelevant, contrarian science—which is where some of the most significant scientific breakthroughs originated—can survive in a world that is increasingly managed by large bureaucracies whose procedures don’t really have a place for that type of science, and by politicians eager to proclaim that they have launched an ambitious goal-driven initiative.

The answer that I propose: Create an agency whose sole raison d’être is to fund scientific research that other agencies won’t fund, not over questions of basic competence, of course, but because the research wasn’t fashionable or relevant.

The benefits of such an approach wouldn’t be seen immediately. The whole point is to allocate money to a broad portfolio of scientific projects, some of which would fail miserably but some of which would have the potential to create the kind of breakthroughs that, by definition, are unpredictable in advance. This plan would therefore require a modicum of patience on the part of policymakers. But over the longer term, it would likely lead to a number of unforeseeable breakthroughs that would make the rest of the program worth it.

Plan of Action

The federal government needs to establish a new National Institute for High-Reward Research (NIHRR) as a stand-alone agency, not tied to the National Institutes of Health or the National Science Foundation. The NIHRR would be empowered to fund the potentially high-reward research that goes overlooked elsewhere. More specifically, the aim would be to cast a wide net for:

- Researchers (akin to Katalin Karikó or Francisco Mojica) who are perfectly well-qualified but have trouble getting funding elsewhere;

- Research projects or larger initiatives that are seen as contrary to whatever is trendy in a given field;

- Research projects or larger initiatives that are seen as irrelevant (e.g., bacterial or animal research that is seen as unrelated to human health).

NIHRR should be funded at, say, $100 million per year as a starting point ($1 billion would be better). This is an admittedly ambitious proposal. It would mean increasing scientific and R&D expenditure by that amount, or else reassigning existing funding (which would be politically unpopular). But it is a worthy objective, and indeed, even that figure should be seen as only a beginning.

Significant stakeholders with an interest in a new NIHRR would obviously include universities and scholars who currently struggle for scientific funding. In a way, that stacks the deck against the idea, because the most politically powerful institutions and individuals might oppose anything that tampers with the status quo of how research funding is allocated. Nonetheless, there may be a number of high-status individuals (e.g., current Nobel winners) who would be willing to support this idea as something that would have aided their earlier work.

A new fund like this would also provide fertile ground for metascience experiments and other types of studies. Consider the striking fact that there is, as yet, virtually no rigorous empirical evidence on the relative strengths and weaknesses of top-down, strategically driven scientific funding versus funding that is more open to seemingly irrelevant, curiosity-driven research. With a new program for the latter, we could begin comparing the results of that funding with those of equally situated researchers funded through the regular pathways.

Moreover, a common metascience proposal in recent years is to use a limited lottery to distribute funding, on the grounds that some funding decisions are fairly random anyway and we might as well make that official. One possibility would be for part of the new program’s funds to be disbursed by lottery among researchers who meet a minimum bar of quality and respectability and who receive a high enough score on “scientific novelty.” One could also imagine developing an algorithm to make an initial assessment. We could then compare the results of lottery-based funding, decisions made by program officers, and algorithmic recommendations.
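A minimal Python sketch of the screened lottery described above: applicants who clear both a quality bar and a novelty bar enter a random draw for a fixed number of awards. The scoring scales, thresholds, and applicant records are hypothetical, and recording the random seed is one assumed way to make the draw auditable after the fact.

```python
import random


def lottery(applicants, quality_bar, novelty_bar, n_awards, seed=None):
    """Draw awardees at random from applicants who clear both screening bars.

    applicants: list of (applicant_id, quality_score, novelty_score) tuples.
    Scores and bars are hypothetical placeholders for a program's real screening.
    """
    eligible = [aid for aid, quality, novelty in applicants
                if quality >= quality_bar and novelty >= novelty_bar]
    if len(eligible) <= n_awards:
        return sorted(eligible)          # everyone eligible can be funded
    rng = random.Random(seed)            # a recorded seed makes the draw reproducible
    return sorted(rng.sample(eligible, n_awards))


# Hypothetical applicants: (id, quality score, novelty score) on a 1-10 scale.
applicants = [("A", 8, 9), ("B", 9, 3), ("C", 6, 7), ("D", 4, 9), ("E", 7, 8)]
winners = lottery(applicants, quality_bar=6, novelty_bar=7, n_awards=2, seed=42)
print(winners)
```

Here B is screened out on novelty and D on quality, so two of A, C, and E are drawn. Because both the eligible pool and the draw are recorded, the same setup could serve as one arm of a comparison against program-officer and algorithmic selection.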

Conclusion

A new line of funding like the National Institute for High-Reward Research (NIHRR) could drive innovation and exploration by funding the potentially high-reward research that goes overlooked elsewhere. This would elevate worthy projects with unknown outcomes so that unfashionable or unpopular ideas can be explored. Funding these projects would have the added benefit of offering many opportunities to build in metascience studies from the outset, which is easier than retrofitting projects later.

This memo was produced as part of the Federation of American Scientists and Good Science Project sprint. Find more ideas at Good Science Project x FAS.

Absolutely, but that is also true for the current top-down approach of announcing lofty initiatives to “cure Alzheimer’s” and the like. Beyond that, the whole point of a true “high-risk, high-reward” research program should be to fund a large number of ideas that don’t pan out. If most research projects succeed, then it wasn’t a “high-risk” program after all.

Again, that would be a sign of potential success. Many of history’s greatest breakthroughs were mocked for those exact reasons at the time. And yes, some of the research will indeed be irrelevant or silly. That’s part of the bargain here. You can’t optimize both Type I and Type II errors at the same time (that is, false positives and false negatives). If we want to open the door to more research that would have been previously rejected on overly stringent grounds, then we also open the door to research that would have been correctly rejected on those grounds. That’s the price of being open to unpredictable breakthroughs.
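The trade-off between the two error types can be seen with a toy example. The Python sketch below uses purely hypothetical reviewer scores paired with outcomes that, in reality, no funder could know in advance. Lowering the funding cutoff cuts false negatives (overlooked successes) at the cost of more false positives (funded projects that don’t pan out).

```python
def error_counts(proposals, threshold):
    """Count false positives and false negatives at a funding cutoff.

    proposals: list of (score, would_succeed) pairs. A false positive is a
    funded proposal that would not succeed; a false negative is a rejected
    proposal that would have succeeded.
    """
    fp = sum(1 for score, ok in proposals if score >= threshold and not ok)
    fn = sum(1 for score, ok in proposals if score < threshold and ok)
    return fp, fn


# Purely hypothetical toy data: reviewer scores paired with known outcomes.
toy = [(9, True), (8, False), (7, True), (6, False),
       (5, True), (4, False), (3, True), (2, False)]

for cutoff in (8, 5, 3):
    print(cutoff, error_counts(toy, cutoff))
```

As the cutoff drops from 8 to 3, false positives rise from 1 to 3 while false negatives fall from 3 to 0; no single cutoff drives both to zero.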

How to evaluate success is a sticking point here, as it is for most of science. The traditional metrics (citations, patents, etc.) would likely be misleading, at least in the short term. Indeed, as discussed above, some enormous breakthroughs took decades to be fully appreciated.

One simple metric in the shorter term would be something like this: “How often do researchers send in progress reports saying that they have been tackling a difficult question, and that they haven’t yet found the answer?” Instead of constantly promising and delivering success (which is often achieved by studying marginal questions and/or exaggerating results), scientists should be incentivized to honestly report on their failures and struggles.

Reduce Administrative Research Burden with ORCID and DOI Persistent Digital Identifiers

There exists a low-effort, low-cost way to reduce administrative burden for our scientists and make it easier for everyone – scientists, funders, legislators, and the public – to document the incredible productivity of federal science agencies. If adopted throughout government research, these tools would maximize interoperability across reporting systems, reduce administrative burden and costs, and increase the accountability of our scientific community. The solution: persistent digital identifiers (Digital Object Identifiers, or DOIs) and Open Researcher and Contributor IDs (ORCIDs) for key personnel. ORCIDs are already used by most federal science agencies. We propose that federal science agencies also adopt digital object identifiers for research awards, an industry-wide standard. A practical and detailed implementation guide for this already exists.

The Opportunity

Tracking the impact and outputs of federal research awards is labor-intensive and expensive. Federally funded scientists spend over 900,000 hours a year writing interim progress reports alone. Despite that tremendous effort, our ability to analyze the productivity of federal research awards is limited. These reports only capture research products created while the award is active, but many exciting papers and data sets are not published until after the award is over, making it hard for the funder to associate them with a particular award or agency initiative. Further, these data are often not structured in ways that support easy analysis or collaboration. When it comes time for the funding agency to examine the impact of an award, a call for applications, or even an entire division, staff rely on a highly manual process that is time-intensive and expensive. Thus, such evaluations are often not done. Deep analysis of federal spending is next to impossible, and simple questions regarding which type of award is better suited for one scientific problem over another, or whether one administrative funding unit is more impactful than a peer organization with the same spending level, are rarely investigated by federal research agencies. These questions are difficult to answer without a simple way to tie award spending to specific research outputs such as papers, patents, and datasets.

To simplify tracking of research outputs, the Office of Science and Technology Policy (OSTP) in 2022 directed federal research agencies to “assign unique digital persistent identifiers to all scientific research and development awards and intramural research protocols […] through their digital persistent identifiers.” This directive builds on 2018 work from the first Trump White House to reduce the burden on researchers, as well as on National Security Strategy guidance. It is a great step forward, but it has yet to be fully implemented, and it allows agencies to implement it in different ways. Agencies are now taking a fragmented, agency-specific approach, which will undermine the full potential of the directive by making it difficult to track impact using the same metrics across federal agencies.

Without a unified federal standard, science publishers, awards management systems, and other disseminators of federal research output will continue to treat award identifiers as unstructured text buried within a long document, or as URLs tucked into acknowledgment sections or other random fields of a research product. These ad hoc methods make it difficult to link research outputs to their federal funding. Scientists and universities trying to meet the requirements of multiple funding agencies are left relying on complex software to translate between different agency nomenclatures and award persistent identifiers or, more realistically, continuing to track and report productivity by hand. It remains too confusing and expensive to provide the level of oversight our federal research enterprise deserves.

There is an existing industry standard for associating digital persistent identifiers with awards that has been adopted by the Department of Energy and other funders such as the ALS Association, the American Heart Association, and the Wellcome Trust. It is a low-effort, low-cost way to reduce administrative burden for our scientists and make it easier for everyone – scientists, federal agencies, legislators, and the public – to document the incredible productivity of federal science expenditures.

Adopting this standard means funders can automate the reporting of most award products (e.g., scientific papers, datasets), reducing administrative burden and allowing research products to be reliably tracked even after the award ends. Funders could maintain their own taxonomy linking award DOIs to specific calls for proposals, study sections, divisions, and other internal structures, making research products much easier to analyze. Further, funders would be able to answer fundamental questions about their programs that are usually too labor-intensive even to ask, such as: Did a particular call for applications result in papers that answered the underlying question laid out in that call? How long should awards for a specific type of research problem last to yield the greatest scientific productivity? In light of rapid advances in artificial intelligence (AI) and other analytic tools, standardizing the links between research funding and products, and making them easy to analyze, opens possibilities for an even more productive and accountable federal research enterprise. In short, assigning DOIs to awards fulfills the 2022 directive’s requirement to maximize interoperability with other funder reporting systems, delivers on the 2018 NSTC report’s promise to reduce burden, and opens new possibilities for a more accountable and effective federal research enterprise.
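As a sketch of the kind of analysis this enables, the Python example below assumes a funder keeps a small table linking each award DOI to its call for proposals and division, and that research products list the award DOIs they cite. Counting products per call or per division then becomes a simple lookup. The DOIs (under a placeholder prefix), calls, and divisions are all hypothetical.

```python
from collections import Counter

# Hypothetical funder taxonomy: each award DOI linked to the call and the
# division that funded it. The DOI prefix 10.99999 is a placeholder.
AWARDS = {
    "10.99999/award-001": {"call": "CALL-A", "division": "DIV-1"},
    "10.99999/award-002": {"call": "CALL-A", "division": "DIV-2"},
    "10.99999/award-003": {"call": "CALL-B", "division": "DIV-1"},
}

# Hypothetical research products, each citing one or more award DOIs.
PRODUCTS = [
    {"id": "paper-1", "award_dois": ["10.99999/award-001"]},
    {"id": "paper-2", "award_dois": ["10.99999/award-001", "10.99999/award-003"]},
    {"id": "dataset-1", "award_dois": ["10.99999/award-002"]},
]


def products_by(field):
    """Count distinct products per call, division, or other award attribute."""
    counts = Counter()
    for product in PRODUCTS:
        # A product citing two awards in the same unit counts once for that unit.
        units = {AWARDS[doi][field] for doi in product["award_dois"] if doi in AWARDS}
        counts.update(units)
    return counts


print(products_by("call"))
print(products_by("division"))
```

The same lookup would keep working after an award ends, because the link lives in the product’s own metadata rather than in the awardee’s progress report.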

Plan of Action

The overall goal is to increase accountability and transparency for federal research funding agencies and dramatically reduce the administrative burden on scientists and staff. Adopting a uniform approach allows for rapid evaluation and improvement across the research enterprise. It also enables the creation of comparable data on agency performance. We propose that federal science agencies adopt the same industry-wide standard – the DOI – for awards. A practical and detailed implementation guide already exists.

These steps support the existing directive and National Security Strategy guidance issued by OSTP, and they build on 2018 work from the NSTC.

Recommendation 1. An interagency committee led by OSTP should coordinate and harmonize implementation to:

- Develop implementation timelines and budgets for each agency that are consistent with existing industry standards;

- Consult with other stakeholders, such as scientific publishers and awardee institutions, while recognizing that guidance and industry standards already exist, so lengthy consultation is unnecessary.

Recommendation 2. Agencies should fully adopt the industry standard persistent identifier infrastructure for research funding—DOIs—for awards. Specifically, funders should:

- Ensure they are listed in the Research Organization Registry at the administrative level (e.g., agency, division) that suits their reporting and analytic needs.

- Require and collect ORCIDs, a digital identifier for researchers widely used by academia and scientific publishers, for the key personnel of an award.

- Issue DOIs for individual awards, and link those awards to the appropriate organizational units and research funding initiatives in the metadata to facilitate evaluation.
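One small, concrete step for agencies that collect ORCIDs is to validate them at entry. ORCID iDs end in a check character computed with the ISO 7064 MOD 11-2 algorithm, which ORCID documents publicly. The Python sketch below implements that check. It only confirms that an iD is well formed, not that it is registered or belongs to the person submitting it, which would require a lookup against ORCID itself.

```python
import re

# 16 characters in four hyphenated groups; the final character may be "X".
ORCID_FORMAT = re.compile(r"^\d{4}-\d{4}-\d{4}-\d{3}[\dX]$")


def orcid_check_digit(base_digits: str) -> str:
    """ISO 7064 MOD 11-2 check character over the first 15 digits of an ORCID iD."""
    total = 0
    for ch in base_digits:
        total = (total + int(ch)) * 2
    result = (12 - total % 11) % 11
    return "X" if result == 10 else str(result)


def is_valid_orcid(orcid: str) -> bool:
    """Check the hyphenated format and the final check character."""
    if not ORCID_FORMAT.match(orcid):
        return False
    digits = orcid.replace("-", "")
    return orcid_check_digit(digits[:15]) == digits[15]


print(is_valid_orcid("0000-0002-1825-0097"))
```

For example, `is_valid_orcid("0000-0002-1825-0097")` returns `True`, while changing its last digit to 8 makes it return `False`, catching a common class of typos before they propagate into award records.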

Recommendation 3. Agencies should require the Principal Investigator (PI) to cite the award DOI in research products (e.g., scientific papers, datasets). This requirement could be included in the terms and conditions of each award. Using DOIs to automate much of progress reporting, as described below, provides a natural incentive for investigators to comply.

Recommendation 4. Agencies should use award persistent identifiers from ORCID and award DOI systems to identify research products associated with an award to reduce PI burden. Awardees would still be required to certify that the product arose directly from their federal research award. After the award and reporting obligation ends, the agency can continue to use these systems to link products to awards based on information provided by the product creators to the product distributors (e.g., authors citing an award DOI when publishing a paper), but without the direct certification of the awardee. This compromise provides the public and the funder with better information about an award’s output, but does not automatically hold the awardee liable if the product conflicts with a federal policy.
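The split between certified and uncertified links in Recommendation 4 can be expressed as a simple rule. This Python sketch is illustrative only: the function name, its inputs, and the assumption that the reporting obligation ends on a single known date are hypothetical, and a real system would draw the citation data from ORCID records and product metadata.

```python
from datetime import date


def classify_link(product_date, reporting_end, cites_award_doi, certified_by_awardee):
    """Classify how a research product is tied to an award (hypothetical rules).

    During the reporting period, a product found through its cited award DOI
    still needs the awardee's certification. After reporting ends, the agency
    records the link from the citation alone and marks it as uncertified.
    """
    if not cites_award_doi:
        return "not linked"
    if product_date <= reporting_end:
        return "certified" if certified_by_awardee else "pending certification"
    return "linked, uncertified (post-award)"


END = date(2025, 1, 31)  # hypothetical end of the award's reporting obligation
print(classify_link(date(2024, 5, 1), END, True, True))
print(classify_link(date(2026, 3, 1), END, True, False))
```

Keeping the uncertified label explicit is what lets the public see the full output of an award without making the awardee answerable for products they never certified.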

Recommendation 5. Agencies should adopt or incorporate award DOIs into their efforts to describe agency productivity and create more efficient and consistent practices for reporting research progress across all federal research funding agencies. Products attributable to the award should be searchable by individual awards, and by larger collections of awards, such as administrative Centers or calls for applications. As an example of this transparency, PubMed, with its publicly available indexing of the biomedical literature, supports the National Institutes of Health (NIH) RePORTER tool and could serve as a model for other fields as persistent identifiers for awards and research products become more widely available.

Recommendation 6. Congress should issue appropriations reporting language to ensure that implementation costs are covered for each agency and that the agencies are adopting a universal standard. Given that the DOI for awards infrastructure works even for small non-profit funders, the greatest costs will be in adapting legacy federal systems, not in utilizing the industry standard itself.

Challenges

We expect the main opposition to come from the agencies themselves, as they face multiple demands on their time and might take shortcuts to implementation that meet the letter of the requirement but do not offer the full benefits of an industry standard. This short-sighted position forgoes both the public transparency needed on research award performance and the massive time and cost savings for agencies and researchers.

A partial implementation of this burden-reducing workflow already exists. Data feeds from ORCID and PubMed populate federal tools such as My Bibliography, and in turn support the biosketch generator in SciENcv or an agency’s Research Performance Progress Report. These systems are feasible because they build on PubMed’s excellent metadata and curation. But PubMed does not index all scientific fields.

Adopting DOIs for awards means that persistent identifiers will provide a higher level of service across all federal research areas. DOIs work for scientific areas not covered by PubMed. And even for the sophisticated existing systems that draw on PubMed, user effort could be reduced and accuracy increased if awards were assigned DOIs. Systems such as NIH RePORTER and PubMed currently have to extract award numbers cited in the acknowledgment sections of research papers, which is more difficult and error-prone.
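To illustrate why free-text acknowledgments are harder to mine than a structured award DOI, the Python sketch below pulls NIH-style award numbers out of acknowledgment text with a regular expression. The pattern is a deliberately simplified assumption, not NIH’s own parsing logic. The third acknowledgment refers to the same grant in an abbreviated format and is silently missed, and the NSF award number is not captured either; a cited award DOI would avoid both problems.

```python
import re

# Simplified, hypothetical pattern for NIH-style award numbers such as
# "R01 GM123456" or "5R01GM123456-03": optional application-type digit,
# activity code (one letter, two digits), two-letter institute code, and a
# six-digit serial number. Real award numbers come in more variants.
NIH_STYLE = re.compile(r"\b\d?([A-Z]\d{2})\s?([A-Z]{2})\s?-?(\d{6})\b")


def award_numbers(acknowledgment: str) -> set:
    """Extract and normalize award numbers from free-text acknowledgments."""
    return {"".join(m.groups()) for m in NIH_STYLE.finditer(acknowledgment)}


acks = [
    "This work was supported by NIH grant R01 GM123456.",
    "Funded by 5R01GM123456-03 and NSF award 2012345.",
    "Support from grant GM-123456 is gratefully acknowledged.",
]
for text in acks:
    print(award_numbers(text))
```

Every new citation style needs another pattern, and each pattern risks both misses and false matches; a DOI field in the product metadata sidesteps that maintenance entirely.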

Conclusion

OSTP and the science agencies have put forth a sound directive to make American science funding even more accountable and impactful, and they are on the cusp of implementation. It is part of a long-standing effort to reduce burden and make the federal research enterprise more accountable and effective. Federal research funding agencies are susceptible to falling into bureaucratic fragmentation and inertia by adopting competing approaches that meet the minimum requirements set forth by OSTP, but offer minimal benefit. If these agencies instead adopt the industry standard that is being used by many other funders around the world, there will be a marked reduction in the burden on awardees and federal agencies, and it will facilitate greater transparency, accountability, and innovation in science funding. Adopting the standard is the obvious choice and well within America’s grasp, but avoiding bureaucratic fragmentation is not simple. It takes leadership from each agency, the White House, and Congress.

This memo was produced as part of the Federation of American Scientists and Good Science Project sprint. Find more ideas at Good Science Project x FAS.

Use Artificial Intelligence to Analyze Government Grant Data to Reveal Science Frontiers and Opportunities

President Trump challenged the Director of the Office of Science and Technology Policy (OSTP), Michael Kratsios, to “ensure that scientific progress and technological innovation fuel economic growth and better the lives of all Americans”. Much of this progress and innovation arises from federal research grants. Federal research grant applications include detailed plans for cutting-edge scientific research. They describe the hypothesis, data collection, experiments, and methods that will ultimately produce discoveries, inventions, knowledge, data, patents, and advances. They collectively represent a blueprint for future innovations.

AI now makes it possible to use these resources to create extraordinary tools for refining how we award research dollars. Further, AI can provide unprecedented insight into future discoveries and needs, shaping both public and private investment into new research and speeding the application of federal research results.

We recommend that OSTP oversee a multiagency development effort to subject grant applications to comprehensive AI analysis in order to predict the future of science, enhance peer review, and encourage better research investment decisions by both the public and the private sector. The federal agencies involved should include all member agencies of the National Science and Technology Council (NSTC).

Challenge and Opportunity

The federal government funds approximately 100,000 research awards each year across all areas of science. The sheer human effort required to analyze this volume of records remains a barrier, and thus, agencies have not mined applications for deep future insight. If agencies spent just 10 minutes of employee time on each funded award, it would take 16,667 hours in total—or more than eight years of full-time work—to simply review the projects funded in one year. For each funded award, there are usually 4–12 additional applications that were reviewed and rejected. Analyzing all these applications for trends is untenable. Fortunately, emerging AI can analyze these documents at scale. Furthermore, AI systems can work with confidential data and provide summaries that conform to standards that protect confidentiality and trade secrets. In the course of developing these public-facing data summaries, the same AI tools could be used to support a research funder’s review process.
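The arithmetic behind these figures, as a short Python check. The 2,080-hour work year (40 hours a week for 52 weeks) is an assumption; the award count, minutes per award, and the 4 to 12 rejected applications per funded award come from the text above.

```python
AWARDS_PER_YEAR = 100_000
MINUTES_PER_AWARD = 10
HOURS_PER_WORK_YEAR = 2_080       # assumption: 40 hours/week x 52 weeks

hours = AWARDS_PER_YEAR * MINUTES_PER_AWARD / 60
work_years = hours / HOURS_PER_WORK_YEAR

# Each funded award comes with 4 to 12 reviewed-and-rejected applications.
applications_low = AWARDS_PER_YEAR * (1 + 4)
applications_high = AWARDS_PER_YEAR * (1 + 12)

print(f"{hours:,.0f} hours, about {work_years:.1f} work-years")
print(f"{applications_low:,} to {applications_high:,} applications per year")
```

Under these assumptions, giving every application the same 10 minutes, not just the funded ones, would multiply the workload by 5 to 13.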

There is a long precedent for this approach. In 2009, the National Institutes of Health (NIH) debuted its Research, Condition, and Disease Categorization (RCDC) system, a program that automatically and reproducibly assigns NIH-funded projects to their appropriate spending categories. The automated RCDC system replaced a manual data call, which resulted in savings of approximately $30 million per year in staff time, and has been evolving ever since. To create the RCDC system, the NIH pioneered digital fingerprints of every scientific grant application using sophisticated text-mining software that assembled a list of terms and their frequencies found in the title, abstract, and specific aims of an application. Applications for which the fingerprints match the list of scientific terms used to describe a category are included in that category; once an application is funded, it is assigned to categorical spending reports.
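A minimal Python sketch can illustrate the fingerprint-and-match idea. It is not RCDC's actual method. The stopword list, the category term lists, and the rule that an application joins a category when it shares at least two terms with that category's list (`MIN_SHARED_TERMS`) are all hypothetical simplifications.

```python
import re
from collections import Counter

# HYPOTHETICAL simplification of a term-frequency "fingerprint"; RCDC's real
# thesaurus, term weighting, and thresholds are not reproduced here.
STOPWORDS = {"the", "of", "and", "in", "to", "for", "with", "on", "we", "will"}
MIN_SHARED_TERMS = 2  # assumed rule for category membership


def fingerprint(*sections: str) -> Counter:
    """Count lowercase word terms across sections such as title, abstract, and aims."""
    words = re.findall(r"[a-z][a-z0-9]+", " ".join(sections).lower())
    return Counter(w for w in words if w not in STOPWORDS)


def assign_categories(fp: Counter, categories: dict[str, set[str]]) -> list[str]:
    """Return the categories whose term list shares at least MIN_SHARED_TERMS terms with fp."""
    present = set(fp)
    return sorted(name for name, terms in categories.items()
                  if len(terms & present) >= MIN_SHARED_TERMS)


# Invented category term lists and application text, for illustration only.
categories = {
    "Diabetes": {"insulin", "glucose", "diabetes", "pancreatic"},
    "Cardiovascular": {"cardiac", "heart", "artery", "hypertension"},
}
app_fp = fingerprint(
    "Insulin signaling in pancreatic beta cells",
    "We study glucose-stimulated insulin secretion.",
    "Aim 1: map insulin receptor variants.",
)
print(app_fp["insulin"])                       # 3
print(assign_categories(app_fp, categories))  # ['Diabetes']
```

A production system would likely weight terms (for example, by how rare they are across all applications) rather than rely on raw counts and a fixed overlap rule.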

NIH staff soon found it easy to construct digital fingerprints for other things, such as research products or even scientists. They did so either by scanning the title and abstract of a public document (such as a research paper) or by combining all the terms found in the existing grant application fingerprints associated with a person.
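A person-level fingerprint of the second kind can be sketched by adding up term counts across that person's application fingerprints. This is an illustrative assumption about how such aggregation might work, and the term counts below are invented.

```python
from collections import Counter

# HYPOTHETICAL sketch: a person's fingerprint is the sum of the term counts in the
# application fingerprints associated with that person.


def person_fingerprint(application_fps: list[Counter]) -> Counter:
    """Combine several application fingerprints into one person-level fingerprint."""
    total = Counter()
    for fp in application_fps:
        total.update(fp)  # adds counts term by term
    return total


# Invented fingerprints for two applications by the same (hypothetical) scientist.
dr_x_apps = [
    Counter({"insulin": 3, "pancreatic": 1}),
    Counter({"insulin": 1, "obesity": 2}),
]
print(person_fingerprint(dr_x_apps).most_common(2))  # [('insulin', 4), ('obesity', 2)]
```

Summing raw counts weights older applications as heavily as recent ones. A real system might normalize the counts or down-weight older work.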

NIH review staff can now match the digital fingerprints of peer reviewers to the fingerprints of the applications to be reviewed and ensure there is sufficient reviewer expertise. For NIH applicants, the RePORTER webpage provides the Matchmaker tool to create digital fingerprints of title, abstract, and specific aims sections, and match them to funded grant applications and the study sections in which they were reviewed. We advocate that all agencies work together to take the next logical step and use all the data at their disposal for deeper and broader analyses.
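One common way to compare fingerprints is cosine similarity between term-count vectors. The sketch below assumes that measure, since the memo does not say which one NIH uses. It also assumes a hypothetical `MIN_EXPERTISE` cutoff for flagging an application that no reviewer matches well. Reviewer names and terms are invented.

```python
import math
from collections import Counter

MIN_EXPERTISE = 0.3  # HYPOTHETICAL cutoff for "sufficient" reviewer expertise


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def rank_reviewers(app_fp: Counter, reviewer_fps: dict[str, Counter]) -> list[tuple[str, float]]:
    """Reviewers sorted from most to least similar to the application."""
    scores = [(name, cosine(app_fp, fp)) for name, fp in reviewer_fps.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


def has_sufficient_expertise(app_fp: Counter, reviewer_fps: dict[str, Counter]) -> bool:
    """True if at least one reviewer's fingerprint clears MIN_EXPERTISE."""
    ranked = rank_reviewers(app_fp, reviewer_fps)
    return bool(ranked) and ranked[0][1] >= MIN_EXPERTISE


# Invented fingerprints for one application and three reviewers.
application = Counter({"insulin": 3, "pancreatic": 1, "glucose": 1})
reviewers = {
    "Reviewer A": Counter({"insulin": 2, "glucose": 2}),
    "Reviewer B": Counter({"cardiac": 3, "artery": 1}),
    "Reviewer C": Counter({"pancreatic": 1, "tumor": 2}),
}
print([name for name, _ in rank_reviewers(application, reviewers)])
# ['Reviewer A', 'Reviewer C', 'Reviewer B']
print(has_sufficient_expertise(application, reviewers))  # True
```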

We offer five specific use cases below:

Use Case 1: Funder support. Federal staff could use AI analytics to identify areas of opportunity and support administrative pushes for funding.

When making a funding decision, agencies need to consider not only the absolute merit of an application but also how it complements the existing funded awards and agency goals. There are some common challenges in managing portfolios. One is that an underlying scientific question can be common to multiple problems that are addressed in different portfolios. For example, one protein may have a role in multiple organ systems. Staff are rarely aware of all the studies and methods related to that protein if their research portfolio is restricted to a single organ system or disease. Another challenge is to ensure proper distribution of investments across a research pipeline, so that science progresses efficiently. Tools that can rapidly and consistently contextualize applications across a variety of measures, including topic, methodology, agency priorities, etc., can identify underserved areas and support agencies in making final funding decisions. They can also help funders deliberately replicate some studies while reducing the risk of unintentional duplication.
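As a rough illustration of the cross-portfolio problem, the following Python sketch indexes which portfolios mention each term. A protein studied in several organ-system portfolios then surfaces in a single view. The award IDs, portfolio names, and the placeholder term `proteinX` are invented, and a real tool would work from richer fingerprints.

```python
from collections import defaultdict

# Invented awards: (award ID, portfolio, terms from its fingerprint).
# "proteinX" is a placeholder for a protein studied in several organ systems.
awards = [
    ("A-001", "Kidney", {"proteinX", "fibrosis"}),
    ("A-002", "Heart", {"proteinX", "arrhythmia"}),
    ("A-003", "Brain", {"proteinX", "cognition"}),
    ("A-004", "Heart", {"arrhythmia", "ablation"}),
]


def cross_portfolio_terms(awards, min_portfolios=2):
    """Map each term that appears in at least `min_portfolios` distinct portfolios
    to (sorted portfolio names, award IDs that mention it)."""
    portfolios = defaultdict(set)
    award_ids = defaultdict(list)
    for award_id, portfolio, terms in awards:
        for term in terms:
            portfolios[term].add(portfolio)
            award_ids[term].append(award_id)
    return {term: (sorted(portfolios[term]), award_ids[term])
            for term in portfolios if len(portfolios[term]) >= min_portfolios}


print(cross_portfolio_terms(awards))
# {'proteinX': (['Brain', 'Heart', 'Kidney'], ['A-001', 'A-002', 'A-003'])}
```

The term "arrhythmia" is excluded because both awards that mention it sit in the same Heart portfolio.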

Use Case 2: Reviewer support. Application reviewers could use AI analytics to understand how an application is similar to or different from currently funded federal research projects, providing reviewers with contextualization for the applications they are rating.

Reviewers are selected in part for their knowledge of the field, but when they compare applications with existing projects, they rely on their own memory, which is subjective. AI tools can provide more objective, accurate, and consistent contextualization to help ensure that the most promising ideas receive funding.

Use Case 3: Grant applicant support. Research funding applicants could be offered contextualization of their ideas among funded projects and failed applications in ways that protect the confidentiality of federal data.

NIH has already made admirable progress in this direction with its Matchmaker tool: one can enter many lines of text describing a proposal (such as an abstract), and the tool will provide lists of similar funded projects, with links to their abstracts. New AI tools can build on this model in two important ways. First, they can help provide summary text and visualization to guide the user to the most useful information. Second, they can broaden the contextual data being viewed. Currently, the results are based only on funded applications. This makes it impossible to tell whether an idea is absent from a funded portfolio because it is novel or because the agency consistently rejects it. Private sector attempts to analyze award information (e.g., Dimensions) are similarly limited by their inability to access full applications, including those that are not funded. AI tools could provide high-level summaries of failed or ‘in process’ grant applications that protect confidentiality but provide context about the likelihood of funding for an applicant’s project.
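The confidentiality constraint can be illustrated with small-cell suppression, a common statistical disclosure-control rule. Under this rule, aggregate outcomes for similar applications are reported only when enough applications fall into the group. In this Python sketch, the cosine similarity measure, the 0.3 similarity cutoff, and the `MIN_CELL_SIZE` of 10 are all hypothetical choices, not agency policy.

```python
import math
from collections import Counter

MIN_SIMILARITY = 0.3  # HYPOTHETICAL threshold for "similar" applications
MIN_CELL_SIZE = 10    # HYPOTHETICAL minimum group size before any statistic is shown


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def similar_outcome_summary(query_fp: Counter, history: list[tuple[Counter, bool]]) -> dict:
    """Summarize funding outcomes of past applications (funded and unfunded) that
    resemble the query, suppressing the result when the group is too small."""
    outcomes = [funded for fp, funded in history if cosine(query_fp, fp) >= MIN_SIMILARITY]
    if len(outcomes) < MIN_CELL_SIZE:
        return {"similar_applications": f"fewer than {MIN_CELL_SIZE}", "funding_rate": None}
    return {"similar_applications": len(outcomes),
            "funding_rate": round(sum(outcomes) / len(outcomes), 2)}


# Invented history: 12 similar applications (3 funded) and 5 unrelated ones.
query = Counter({"insulin": 2, "pancreatic": 1})
history = [(Counter({"insulin": 1, "pancreatic": 1}), i < 3) for i in range(12)]
history += [(Counter({"cardiac": 1}), True)] * 5
print(similar_outcome_summary(query, history))
# {'similar_applications': 12, 'funding_rate': 0.25}
print(similar_outcome_summary(query, history[:5]))
# {'similar_applications': 'fewer than 10', 'funding_rate': None}
```

Because the rate is computed over funded and unfunded applications together, it speaks to the question the Matchmaker results cannot answer on their own: whether similar ideas tend to be rejected.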

Use Case 4: Trend mapping. AI analyses could help everyone (scientists, biotech, pharma, investors) understand emerging funding trends in their innovation space in ways that protect the confidentiality of federal data.

The federal science agencies have made remarkable progress in making their funding decisions transparent, even to the point of offering lay summaries of funded awards. However, the sheer volume of individual awards makes summarizing these funding decisions a daunting task that will always be out of date by the time it is completed. Thoughtful application of AI could produce practical, easy-to-digest summaries of U.S. federal grants in close to real time and could help to identify areas of overlap, redundancy, and opportunity. If unfunded projects were included, the public would get a sense of the direction in which federal funders are moving and where the government might be underinvested. This could herald a new era of transparency and effectiveness in science investment.
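A minimal form of trend mapping is to count awards per topic per fiscal year and fit a least-squares slope to each topic's counts. The topics and counts in this Python sketch are invented. A plain linear slope is only one of many possible trend measures.

```python
from collections import Counter, defaultdict


def topic_trends(awards: list[tuple[int, str]]) -> dict[str, float]:
    """Least-squares slope of annual award counts for each topic (change in awards
    per year). Years with no awards for a topic count as zero."""
    if not awards:
        return {}
    counts = defaultdict(Counter)
    for year, topic in awards:
        counts[topic][year] += 1
    years = sorted({year for year, _ in awards})
    x_mean = sum(years) / len(years)
    denom = sum((x - x_mean) ** 2 for x in years)
    trends = {}
    for topic, by_year in counts.items():
        ys = [by_year[x] for x in years]
        y_mean = sum(ys) / len(ys)
        num = sum((x - x_mean) * (y - y_mean) for x, y in zip(years, ys))
        trends[topic] = num / denom if denom else 0.0
    return trends


# Invented award counts for two solar-cell topics.
awards = ([(2021, "perovskite")] * 2 + [(2022, "perovskite")] * 4 + [(2023, "perovskite")] * 6
          + [(2021, "silicon")] * 5 + [(2022, "silicon")] * 5 + [(2023, "silicon")] * 5)
print(topic_trends(awards))  # {'perovskite': 2.0, 'silicon': 0.0}
```

Running the same count over unfunded applications would show where demand is growing faster than funding.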

Use Case 5: Results prediction tools. Analytical AI tools could help everyone (scientists, biotech, pharma, investors) predict the topics and timing of future research results and neglected areas of science in ways that protect the confidentiality of federal data.

It is standard practice in pharmaceutical development to predict the timing of clinical trial results based on public information. This approach can work in other research areas, but it is labor-intensive. AI analytics could be applied at scale to specific scientific areas, such as predictions about the timing of results for materials being tested for solar cells or of new technologies in disease diagnosis. AI approaches are especially well suited to technologies that cross disciplines, such as applications of one health technology to multiple organ systems, or one material applied to multiple engineering applications. These models would be even richer if the negative cases—the unfunded research applications—were included in analyses in ways that protect the confidentiality of the failed application. Failed applications may signal where the science is struggling and where definitive results are less likely to appear, or where there are underinvested opportunities.
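The timing-prediction idea can be sketched with an empirical distribution of lags between award start and the first reported result in comparable past awards. The history below is invented. Using the median lag and the empirical cumulative share is a deliberately simple illustrative assumption, not an established forecasting method for grants.

```python
from statistics import median

# Invented history: (award start year, year of first reported result) for completed
# awards in one hypothetical research area.
history = [(2015, 2018), (2016, 2019), (2016, 2020), (2017, 2019), (2018, 2021)]


def lags(history: list[tuple[int, int]]) -> list[int]:
    """Years from award start to first reported result."""
    return [result - start for start, result in history]


def predicted_result_year(start_year: int, history: list[tuple[int, int]]) -> float:
    """Start year plus the median historical lag (a deliberately simple assumption)."""
    return start_year + median(lags(history))


def share_with_result_by(start_year: int, target_year: int,
                         history: list[tuple[int, int]]) -> float:
    """Empirical share of past awards whose first result arrived within the same gap."""
    past = lags(history)
    return sum(lag <= target_year - start_year for lag in past) / len(past)


print(predicted_result_year(2024, history))       # 2027
print(share_with_result_by(2024, 2026, history))  # 0.2
print(share_with_result_by(2024, 2027, history))  # 0.8
```

The same lag distribution could be computed separately for funded and unfunded lines of work to flag areas where results rarely appear.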

Plan of Action

Leadership

We recommend that OSTP oversee a multiagency development effort to achieve the overarching goal of fully subjecting grant applications to AI analysis to predict the future of science, enhance peer review, and encourage better research investment decisions by both the public and the private sector. The federal agencies involved should include all the member agencies of the NSTC. A broad array of stakeholders should be engaged because much of the AI expertise exists in the private sector, the data are owned and protected by the government, and the beneficiaries of the tools would be both public and private. We anticipate four stages to this effort.

Recommendation 1. Agency Development

Pilot: Each agency should develop pilots of one or more use cases to test and optimize training sets and output tools for each user group. We recommend this initial approach because each funding agency has different baseline capabilities to make application data available to AI tools and may also have different scientific considerations. Despite these differences, all federal science funding agencies have large archives of applications in digital formats, along with records of the publications and research data attributed to those awards.

These use cases are relatively new applications for AI and should be empirically tested before broad implementation. Trend mapping and predictive models can be built with a subset of historical data and validated with the remaining data. Decision support tools for funders, applicants, and reviewers need to be tested not only for their accuracy but also for their impact on users. Therefore, these decision support tools should be considered as a part of larger empirical efforts to improve the peer review process.
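Validating on "the remaining data" is safest when the data are split by time, so the model never sees awards from the period it is tested on. This Python sketch assumes a simple median-lag model (hypothetical) and invented (start year, result year) records. It trains on awards that started before a cutoff year and reports the mean absolute error on the later awards.

```python
from statistics import median

# Invented records: (award start year, year of first reported result).
records = [(2012, 2015), (2013, 2016), (2014, 2018), (2015, 2018), (2016, 2019), (2017, 2021)]


def temporal_split(records, cutoff_year):
    """Train on awards that started before the cutoff; test on awards from the cutoff onward."""
    train = [r for r in records if r[0] < cutoff_year]
    test = [r for r in records if r[0] >= cutoff_year]
    return train, test


def evaluate_median_lag(records, cutoff_year):
    """Fit a median-lag model on the training period; return (lag, mean absolute error on test)."""
    train, test = temporal_split(records, cutoff_year)
    if not train or not test:
        raise ValueError("cutoff_year leaves the training or test set empty")
    lag = median(result - start for start, result in train)
    errors = [abs(start + lag - result) for start, result in test]
    return lag, sum(errors) / len(errors)


print(evaluate_median_lag(records, cutoff_year=2016))  # (3.0, 0.5)
```

A random split would let the model learn from awards that started after some of the awards it is scored on. Such a split tends to overstate how well it would forecast genuinely future results.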

Solidify source data: Agencies may need to enhance their data systems to support the new functions for full implementation. OSTP would need to coordinate the development of data standards to ensure all agencies can combine data sets for related fields of research. Agencies may need to make changes to the structure and processing of applications, such as ensuring that sections to be used by the AI are machine-readable.
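One way to picture a shared data standard is a common record type that every agency can serialize to and read from. The field names in this Python sketch are hypothetical, not an existing federal standard. JSON Lines is used only as a convenient machine-readable interchange format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ApplicationRecord:
    """HYPOTHETICAL cross-agency application record; field names are illustrative only."""
    agency: str
    application_id: str
    fiscal_year: int
    title: str
    abstract: str
    funded: bool
    specific_aims: str = ""  # some agencies' applications may have no such section


def to_json_lines(records: list[ApplicationRecord]) -> str:
    """One JSON object per line, with keys sorted so output is stable."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


def from_json_lines(text: str) -> list[ApplicationRecord]:
    """Parse JSON Lines text back into records, skipping blank lines."""
    return [ApplicationRecord(**json.loads(line)) for line in text.splitlines() if line.strip()]


# Invented records from two agencies, merged into one machine-readable file.
records = [
    ApplicationRecord("NIH", "NIH-0001", 2024, "Insulin signaling", "Abstract text.", True,
                      "Aim 1: map receptor variants."),
    ApplicationRecord("NSF", "NSF-0001", 2024, "Perovskite stability", "Abstract text.", False),
]
serialized = to_json_lines(records)
assert from_json_lines(serialized) == records  # round trip preserves every field
print(serialized.splitlines()[1])
```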

Recommendation 2. Prizes and Public–Private Partnerships

OSTP should coordinate the convening of private sector organizations to develop a clear vision for the profound implications of opening funded and failed research award applications to AI, including predicting the topics and timing of future research outputs. How will this technology support innovation and more effective investments?

Research agencies should collaborate with private sector partners to sponsor prizes for developing the most useful and accurate tools and user interfaces for each use case refined through agency development work. Prize submissions could use test data drawn from existing full-text applications and the research outputs arising from those applications. Top candidates would be subject to standard selection criteria.

Conclusion

Research applications are an untapped and tremendously valuable resource. They describe work plans and are clearly linked to specific research products, many of which, like research articles, are already rigorously indexed and machine-readable. These applications are data that can be used for optimizing research funding decisions and for developing insight into future innovations. With these data and emerging AI technologies, we will be able to understand the trajectory of our science with unprecedented breadth and insight. We may even approach the accuracy with which human experts foresee changes within a narrow area of study. However, maximizing the benefit of this information is not inevitable, because the source data are currently closed to AI innovation. It will take vision and resources to build effectively from these closed systems. Our federal science agencies have both, and with some leadership, they can realize the full potential of these applications.

This memo was produced as part of the Federation of American Scientists and Good Science Project sprint. Find more ideas at Good Science Project x FAS.

Bold Goals Require Bold Funding Levels. The FY25 Requests for the U.S. Bioeconomy Fall Short

Over the past year, there has been tremendous momentum in policy for the U.S. bioeconomy – the collection of advanced industry sectors, like pharmaceuticals, biomanufacturing, and others, with biology at their core. This momentum began in part with the Bioeconomy Executive Order (EO) and the programs authorized in CHIPS and Science. It continued with the Office of Science and Technology Policy (OSTP) release of the Bold Goals for U.S. Biotechnology and Biomanufacturing (Bold Goals) report. The report highlighted ambitious goals that the Department of Energy (DOE), Department of Commerce (DOC), Department of Health and Human Services (HHS), National Science Foundation (NSF), and Department of Agriculture (USDA) have committed to in order to further the U.S. bioeconomy.

However, the ambitious goals set by various agencies in the Bold Goals report will also require directed and appropriate funding, and this is where we have been falling short. Multiple bioeconomy-related programs were authorized through the bipartisan CHIPS & Science legislation but have yet to receive anywhere near their funding targets. Underfunding and the resulting lack of capacity have also delayed tasks under the Bioeconomy EO. For the bold goals outlined in the report to be realized, the U.S. must properly direct and fund the many different endeavors that make up the U.S. bioeconomy.

Despite this need for bioeconomy funding, the recently completed FY2024 (FY24) appropriations were modest for some science agencies but abysmal for others, with decreases across many scientific endeavors. The DOC, and specifically the National Institute of Standards and Technology (NIST), saw massive cuts in base program funding, with earmarks swamping core activities in some accounts.

There remains some hope that the FY2025 (FY25) budget will alleviate some of the cuts that have been seen to science endeavors, and in turn, to programs related to the bioeconomy. But the strictures of the Fiscal Responsibility Act, which contributed to the difficult outcomes in FY24, remain in place for FY25 as well.

Bioeconomy in the FY25 Request

It was in this difficult context that the President’s FY25 Budget was released, along with the FY25 budget requests for DOE, DOC, HHS, NSF, and USDA.

The President’s Budget makes strides toward enabling a strong bioeconomy by prioritizing synthetic biology metrology and standards within NIST and by directing OSTP to establish the Initiative Coordination Office to support the National Engineering Biology Research and Development Initiative. However, beyond these two instances, the President’s budget offers only limited progress for the bioeconomy because of mediocre funding levels.