Federation of American Scientists Welcomes Dr. Yong-Bee Lim as Associate Director of the Global Risk Team

Washington, D.C. – March 7, 2025 – The Federation of American Scientists (FAS) is pleased to welcome Dr. Yong-Bee Lim as the new Associate Director of Global Risk. In this role, Dr. Lim will help develop, organize, and implement FAS’s growing contribution to catastrophic risk prevention, including in the core areas of nuclear weapons, AI and national security, space, and other emerging technologies.

“The role of informed, credible, and engaging organizations in support of sound public policy is more important than ever,” said Jon Wolfsthal, FAS Director of Global Risk. “Yong-Bee embodies what it means to be an effective policy entrepreneur and to make meaningful contributions to US and global security. We are really excited that he is now part of the FAS team.”

Dr. Lim is a recognized expert in biosecurity, emerging technologies, and converging risks. His expertise draws on his former roles as Deputy Director of both the Converging Risks Lab and the Janne E. Nolan Center at the Council on Strategic Risks, on his research and leadership roles in academia, and on his work at key U.S. agencies (DoD, HHS/ASPR, and DoE). He earned his Ph.D. in Biodefense from George Mason University’s Biodefense program, where he conducted critical work on the safety, security, and cultural dimensions of the U.S.-based Do-It-Yourself Biology (DIYBio) community. His recent accolades include membership in the inaugural class of the Bulletin of the Atomic Scientists’ Editorial Fellows program. He was also selected for, and took part in, the Emerging Leaders in Biosecurity Initiative hosted by the Johns Hopkins Center for Health Security.

“As emerging capabilities change the very contours of safety, security, and innovation, FAS has positioned itself to both highlight the global opportunities we must seize and address the global risks we must mitigate,” Lim said. “Founded in 1945, FAS continues to display thought leadership and impact because it has not forgotten its core mission: to ensure that scientific and technical expertise continue to have a seat at the policymaking table. I am honored to be part of an organization with a legacy and mission like FAS.”

ABOUT FAS

The Federation of American Scientists (FAS) works to advance progress on a broad suite of issues where science, technology, and innovation policy can deliver transformative impact, and seeks to ensure that scientific and technical expertise have a seat at the policymaking table. Established in 1945 by scientists in response to the atomic bomb, FAS continues to bring scientific rigor and analysis to address contemporary challenges. More information about FAS’s work is available at fas.org, and about Global Risk here.

The Federation of American Scientists Calls on OMB to Maintain the Agency AI Use Case Inventories at Their Current Level of Detail

The federal government’s approach to deploying AI systems is a defining force in shaping industry standards, academic research, and public perception of these technologies. Public sentiment toward AI remains mixed, with many Americans expressing a lack of trust in AI systems. To fully harness the benefits of AI, the public must have confidence that these systems are deployed responsibly and enhance their lives and livelihoods.

The first Trump Administration’s AI policies clearly recognized the opportunity to promote AI adoption through transparency and public trust. President Trump’s Executive Order 13859 explicitly stated that agencies must design, develop, acquire, and use “AI in a manner that fosters public trust and confidence while protecting privacy, civil rights, civil liberties, and American values.” This commitment laid the foundation for increasing government accountability in AI use.

A major step in this direction was the AI Use Case Inventory, established under President Trump’s Executive Order 13960 and later codified in the 2023 Advancing American AI Act. The agency inventories have since become a crucial tool for fostering public trust and innovation in government AI use. Recent OMB guidance (M-24-10) expanded the inventories’ scope, standardized AI definitions, and began collecting information on potential adverse impacts. The detailed inventory serves three purposes. It enhances accountability by making AI deployments transparent, and it tracks AI successes and risks to improve government services. It also supports AI vendors by giving them visibility into public-sector AI needs, thereby driving industry innovation.

The end of 2024 marked a major leap in government transparency regarding AI use. Agency reporting on AI systems improved dramatically, with federal AI inventories capturing more than 1,700 AI use cases, a 200% increase in reported use cases over the previous year. The Department of Homeland Security (DHS) alone reported 158 active AI use cases. Of these, 29 were identified as high-risk, with detailed documentation of how 24 of them are mitigating potential risks. This level of disclosure is essential for maintaining public trust and ensuring responsible AI deployment.

OMB is set to release revisions to its AI guidance (M-24-10) in mid-March, presenting an opportunity to ensure that transparency remains a top priority.

To support continued transparency and accountability in government AI use, the Federation of American Scientists has written a letter urging OMB to maintain its detailed guidance on AI inventories. We believe that sustained transparency is crucial to ensuring responsible AI governance, fostering public trust, and enabling industry innovation.

A Federal Center of Excellence to Expand State and Local Government Capacity for AI Procurement and Use

The administration should create a federal center of excellence for state and local artificial intelligence (AI) procurement and use. This center would be a hub for expertise and resources on public sector AI procurement and use at the state, local, tribal, and territorial (SLTT) government levels. The center could be created by expanding the General Services Administration’s (GSA) existing Artificial Intelligence Center of Excellence (AI CoE). New waves of AI technologies are entering the market, shifting both practice and policy. Such a center of excellence would help bridge the gap between existing federal resources on responsible AI and the specific, grounded challenges that individual agencies face. In the decades ahead, new AI technologies will touch an expanding range of government services vital to the wellbeing of the American people, including public health, child welfare, and housing. A federal AI center of excellence would equip public sector agencies with sustainable expertise and set a consistent standard for responsible AI procurement and use. This resource would help ensure that AI truly enhances services, protects the public interest, and builds public trust in AI-integrated state and local government services.

Challenge and Opportunity

State, local, tribal, and territorial (SLTT) governments provide services that are critical to the welfare of our society, including housing, child support, healthcare, credit lending, and education. SLTT governments are increasingly interested in using AI to assist with providing these services. However, they face immense challenges in responsibly procuring and using new AI technologies. These agencies already grapple with limited technical expertise and budget constraints. On top of that, SLTT agencies considering or deploying AI must navigate data privacy concerns, anticipate and mitigate biased model outputs, and ensure model outputs are interpretable to workers. They must also comply with sector-specific regulatory requirements, among other responsibilities.

The emergence of foundation models (large AI systems adaptable to many different tasks) for public sector use exacerbates these existing challenges. Technology companies are now rapidly developing new generative AI services marketed to public sector organizations. For example, earlier this year, Microsoft announced that Azure OpenAI Service would be added to Azure Government, its set of cloud services for government customers. Such services are not created for specific public sector applications and use contexts. Instead, they are meant to serve as a foundation for developing specific applications.

For SLTT government agencies, these generative AI services blur the line between procurement and development. Beyond procuring specific AI services, we anticipate that agencies will increasingly be tasked with responsibly using general-purpose AI services to develop specific AI applications. Moreover, recent AI regulations suggest that responsibility and liability for the use and impacts of procured AI technologies will be shared by the public sector agencies that deploy them, rather than resting solely with the vendors that supply them.

Given these shifts across products, practice, and policy, SLTT agencies must be well-equipped with responsible procurement practices and accountability mechanisms. Federal agencies have started to provide guidelines for responsible AI procurement (e.g., Executive Order 13960, OMB-M-21-06, NIST RMF), but research shows that SLTT governments need additional support to apply these resources. Existing federal resources provide high-level, general guidance. SLTT government agencies, by contrast, must navigate a host of context-specific challenges (e.g., those arising from regional laws or agency practices). SLTT government agency leaders have voiced a need for individualized support in accounting for these context-specific considerations when making procurement decisions.

Today, private companies are promising state and local government agencies that their AI services can transform the public sector. They describe diverse potential applications, from supporting complex decision-making to automating administrative tasks. However, there is minimal evidence that these new AI technologies can improve the quality and efficiency of public services. There is evidence, on the other hand, that AI in public services can have unintended consequences. When these technologies go wrong, they often worsen the problems they are meant to solve, for example by increasing disparities in decision-making when they were intended to reduce them.

Challenges to responsible technology procurement follow a historical trend: government technology has frequently been criticized for failures over the past several decades. Because public services such as healthcare, social work, and credit lending carry such high stakes, failures in these areas can have far-reaching consequences. They also entail significant financial costs, with millions of dollars wasted on technologies that are ultimately abandoned. Even when subpar solutions remain in use, agency staff may be forced to work with them for extended periods despite their poor performance.

The new administration has a critical opportunity to redirect these trends. Training every relevant individual within SLTT government agencies, or hiring new experts within each agency, is neither cost- nor resource-effective. Without appropriate training and support from the federal government, AI adoption is likely to be concentrated in well-resourced SLTT agencies. Agencies with fewer resources, which may serve more low-income communities, would be left behind. This could lead to disparate AI adoption and practices among SLTT agencies, further exacerbating existing inequalities. The administration urgently needs a plan that helps SLTT agencies learn to handle responsible AI procurement and use. The plan should build sustainable knowledge about navigating these processes over time, without requiring that every relevant individual in the public sector be trained. It must also ensure that, over time, the public sector workforce becomes able to navigate complicated AI procurement processes and relationships without constant retraining of each new wave of workers.

In the context of federal and SLTT governments, a federal center of excellence for state and local AI procurement would accomplish these goals through a “hub and spoke” model. The center of excellence would serve as the “hub,” housing a small number of selected experts from academia, non-profit organizations, and government. These experts would then train the “spokes” (existing state and local public sector agency workers) in responsible procurement practices. To help public sector agencies learn from each other’s practices and challenges, the center could also create channels for information- and resource-sharing across state and local agencies.

Procured AI technologies in government will serve as the backbone of local public services for decades to come. Upskilling government agencies to make smart decisions about which AI technologies to procure (and which are best avoided) would not only protect the public from harmful AI systems but would also save the government money by decreasing the likelihood of adopting expensive AI technologies that end up getting dropped.

Plan of Action

A federal center of excellence for state and local AI procurement would ensure that procured AI technologies are responsibly selected and used, so that they serve as a strong and reliable backbone for public sector services. This federal center of excellence can support both intra-agency and inter-agency capacity-building and learning about AI procurement and use. In other words, it would provide mechanisms to develop expertise within a given public sector agency and across multiple public sector agencies. The center would be advisory rather than regulatory: SLTT governments would receive guidance and support but would not have to seek approval for their practices. Its goal would be to upskill SLTT agencies so they are better equipped to navigate their own AI procurement and use efforts.

To upskill SLTT agencies through intra-agency capacity-building, the federal center of excellence would house experts in relevant domain areas (e.g., responsible AI, public interest technology, and related topics). These experts would serve as fellows, working with cohorts of public sector agencies to provide training and consultation services. Drawn from government, academia, and civil society, the fellows would build on their existing expertise and experience with responsible AI procurement. They would also integrate new considerations proposed by federal standards for responsible AI (e.g., Executive Order 13960, OMB-M-21-06, NIST RMF). As advisors, they would help operationalize these guidelines into practical steps and strategies, setting a consistent bar for responsible AI procurement and use practices along the way.

Cohorts of SLTT government agency workers, including existing agency leaders, data officers, and procurement experts, would work with an assigned advisor. The advisor would provide consultation and training support on specific tasks the agency currently faces. Some agencies or programs have low AI maturity or familiarity (e.g., departments beginning to explore the adoption of new AI tools). For them, the advisor can help navigate the procurement decision-making process, understand agency-specific technology needs, draft procurement contracts, select among proposals, and negotiate plans for maintenance. For agencies and programs with high AI maturity or familiarity, the advisor can train staff to recognize unexpected AI behaviors as they arise and to apply mitigation strategies. These communication pathways would also help federal agencies better understand the challenges state and local governments face in AI procurement and maintenance. That understanding can seed ideas for improving existing resources and creating new resources for AI procurement support.

To scaffold inter-agency capacity-building, the center of excellence can build the foundations for cross-agency knowledge-sharing. In particular, it would provide a communication platform and an online hub of procurement resources, both shared among agencies. On the communication platform, state and local government agency leaders navigating AI procurement could share challenges, lessons learned, and tacit knowledge to support each other. Both the center of excellence and SLTT government agencies could contribute resources to the online hub. Through the hub, agencies could upload and learn about new responsible AI resources and toolkits (e.g., those created by government and the research community). They could also share examples of procurement contracts they have used.

To implement this vision, the new administration should expand the U.S. General Services Administration’s (GSA) existing Artificial Intelligence Center of Excellence (AI CoE), which provides resources and infrastructural support for AI adoption across the federal government. We propose expanding this existing AI CoE to include the components of our proposed center of excellence for state and local AI procurement and use. This would direct support, through our proposed capacity-building model, toward SLTT government agencies, which the existing AI CoE does not currently serve.

Over the next 12 months, the goals of expanding the AI CoE would be three-fold:

1. Develop the core components of our proposed center of excellence within the AI CoE.

- Recruit a core set of fellows with expertise in responsible AI, public interest technology, and related topics from government, academia, and civil society for a 1-2 year placement;

- Develop a centralized onboarding and training program for the fellows to set standards for responsible AI procurement and use guidelines and goals;

- Create a research strategy to streamline documentation of SLTT agencies’ on-the-ground practices and challenges for procuring new AI technologies, which could help prepare future fellows.

2. Launch collaborations for the first sample of SLTT government agencies. Focus on building a path for successful collaborations:

- Identify a small set of state and local government agencies that want federal support in navigating AI procurement and use (e.g., deciding which AI use cases to adopt, how to evaluate AI deployments effectively over time, and what organizational policies to create to help govern AI use);

- Ensure there is a clear communication pathway between the agency and their assigned fellow;

- Have each fellow and agency pair create a customized plan of action to ensure the agency becomes able to navigate AI procurement and use independently over time.

3. Build a path for our proposed center of excellence to grow and gain experience. If the first few collaborations receive strong reviews, design a scaling strategy that will:

- Incorporate the center of excellence’s core budget into future budget planning;

- Identify additional fellows for the program;

- Roll out the program to additional state and local government agencies.

Conclusion

Expanding the existing AI CoE to include our proposed federal center of excellence for AI procurement and use can help ensure that SLTT governments are equipped to make informed, responsible decisions about integrating AI technologies into public services. This body would provide necessary guidance and training, helping to bridge the gap between high-level federal resources and the context-specific needs of SLTT agencies. By fostering both intra-agency and inter-agency capacity-building for responsible AI procurement and use, this approach builds sustainable expertise, promotes equitable AI adoption, and protects the public interest. This helps ensure that AI enhances, rather than harms, the efficiency and quality of public services. New waves of AI technologies will continue to enter the public sector, touching a range of services critical to the welfare of the American people. As they do, this center of excellence will help maintain high standards for responsible public sector AI for decades to come.

This action-ready policy memo is part of Day One 2025 — our effort to bring forward bold policy ideas, grounded in science and evidence, that can tackle the country’s biggest challenges and bring us closer to the prosperous, equitable and safe future that we all hope for whoever takes office in 2025 and beyond.

PLEASE NOTE (February 2025): Since publication, several government websites have been taken offline. We apologize for any broken links to once accessible public data.

Federal agencies have published numerous resources to support responsible AI procurement, including Executive Order 13960, OMB-M-21-06, and the NIST RMF. Some of these resources provide guidance on responsible AI development in organizations broadly, across the public, private, and non-profit sectors. For example, the NIST RMF provides organizations with guidelines to identify, assess, and manage risks in AI systems to promote the deployment of more trustworthy and fair AI systems. Others focus on public sector AI applications. For instance, a memorandum published by the Office of Management and Budget (OMB) describes strategies for federal agencies to follow responsible AI procurement and use practices.

Research shows that these resources often require additional skills and knowledge that make them challenging for agencies to use effectively on their own. Adapting these guidelines to specific SLTT agency contexts requires careful interpretation, which may in turn require specialized expertise or resources. A federal center of excellence that guides responsible SLTT procurement on the ground could help agencies learn to use these resources and bridge this critical gap. Fellows in the center of excellence and staff in SLTT procurement agencies can draw on this existing pool of guidance to build a strong theoretical foundation for their practices.

The hub and spoke model has been used across a range of applications to support efficient management of resources and services. For instance, in healthcare, providers have used the hub and spoke model to organize their network of services. Specialized, intensive services are located in “hub” healthcare establishments, whereas secondary services are provided in “spoke” establishments, making healthcare services more efficient and accessible. Similar organizational networks have been used in transportation, retail, and cybersecurity. Microsoft follows a hub and spoke model to govern responsible AI practices and disseminate relevant resources. Microsoft has a single centralized “hub” within the company that houses responsible AI experts, meaning those with expertise in implementing the company’s responsible AI goals. These experts then train “spokes”: workers in product and sales teams across the company who learn about best practices and support their teams in implementing them.

During the training, fellows would build a stronger foundation in two areas. The first is the on-the-ground challenges and practices that public sector agencies grapple with when developing, procuring, and using AI technologies. The second is existing AI procurement and use guidelines provided by federal agencies. The training content would draw on syntheses of prior research on public sector AI procurement and use challenges, as well as on existing federal resources for guiding responsible AI development. For example, prior research has explored public sector challenges in supporting algorithmic fairness and accountability and in making responsible AI design and adoption decisions, among other topics.

The experts who would serve as fellows for the federal center of excellence would have expertise and experience studying the impacts of AI technologies and designing interventions to support more responsible AI development, procurement, and use. Given the interdisciplinary nature of the role, fellows should have an applied, socio-technical background in responsible AI practices, ideally (but not necessarily) in the public sector. Fellows would be expected to have the skills needed to share emerging responsible AI practices, strategies, and tacit knowledge with public sector employees developing or procuring AI technologies. This covers a broad range of potential backgrounds.

For example, a professor in academia who studies how to develop public sector AI systems that are more fair and aligned with community needs may be a good fit. A socio-technical researcher in civil society with direct experience studying or developing new tools to support more responsible AI development, who has intuition over which tools and practices may be more or less effective, may also be a good candidate. A data officer in a state government agency who has direct experience procuring and governing AI technologies in their department, with an ability to readily anticipate AI-related challenges other agencies may face, may also be a good fit. The cohort of fellows should include a balanced mix of individuals coming from government, academia, and civil society.

Strengthening Information Integrity with Provenance for AI-Generated Text Using ‘Fuzzy Provenance’ Solutions

Synthetic text generated by artificial intelligence (AI) can pose significant threats to information integrity. When users accept deceptive AI-generated content—such as large-scale false social media posts by malign foreign actors—as factual, national security is put at risk. One way to help mitigate this danger is by giving users a clear understanding of the provenance of the information they encounter online.

Here, provenance refers to any verifiable indication of whether text was generated by a human or by AI, such as a watermark. However, given the limitations of watermarking AI-generated text, this memo also introduces the concept of fuzzy provenance, which involves identifying exact matches of a text elsewhere on the internet. The descriptor “fuzzy” reflects two limitations: such matches will not always be available, and when they are, they do not necessarily reveal where the text originated. While this information will not always establish authenticity with certainty, it offers users additional clues about the origins of a piece of text.

To ensure platforms can effectively provide this information to users, the AI Safety Institute at the National Institute of Standards and Technology (NIST) should develop guidance on how to display both provenance and fuzzy provenance (where available) to users within one click. To expand the utility of fuzzy provenance, NIST could also issue guidance on how generative AI companies could allow the records of their free AI models to be crawled and indexed by search engines. Doing so would make potential matches to AI-generated text easier to discover. Tradeoffs surrounding this approach are explored further in the FAQ section.

By creating a reliable, user-friendly framework for surfacing these details, NIST would empower readers to better discern the trustworthiness of the text they encounter, thereby helping to counteract the risks posed by deceptive AI-generated content.

Challenge and Opportunity

Synthetic Text and Information Integrity

In the past two years, generative AI models have become widely accessible, allowing users to produce customized text simply by providing prompts. As a result, there has been a rapid proliferation of “synthetic” text—AI-generated content—across the internet. As NIST’s Generative Artificial Intelligence Profile notes, this means that there is a “[l]owered barrier of entry to generated text that may not distinguish fact from opinion or fiction or acknowledge uncertainties, or could be leveraged for large scale dis- and mis-information campaigns.”

Information integrity risks stemming from synthetic text—particularly when generated for non-creative purposes—can pose a serious threat to national security. For example, in July 2024 the Justice Department disrupted Russian generative-AI-enabled disinformation bot farms. These Russian bots produced synthetic text, including in the form of social media posts by fake personas, meant to promote messages aligned with the interests of the Russian government.

Provenance Methods For Reducing Information Integrity Risks

NIST has an opportunity to provide community guidance to reduce the information integrity risks posed by all types of synthetic content. The main solution currently being considered by NIST for reducing the risks of synthetic content in general is provenance, which refers to whether a piece of content was generated by AI or a human. As described by NIST, provenance is often ascertained by creating a non-fungible watermark, or a cryptographic signature for a piece of content. The watermark is permanently associated with the piece of content. Where available, provenance information is helpful because knowing the origin of text can help a user know whether to rely on the facts it contains. For example, an AI-generated news report may currently be less trustworthy than a human news report because the former is more prone to fabrications.

However, there are currently no methods widely accepted as effective for determining the provenance of synthetic text. As NIST’s report, Reducing Risks Posed by Synthetic Content, details, “[t]he effectiveness of synthetic text detection is subject to ongoing debate” (Sec. 3.2.2.4). A piece of text may originally be AI-generated with a watermark (e.g., by choosing words that follow a distinctive statistical pattern). Even so, people can easily reproduce its content by paraphrasing it (especially with AI), which does not carry over the original watermark. Text watermarks are also vulnerable to adversarial attacks, with malicious actors able to mimic the watermark signature and make text appear watermarked when it is not.
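To make the “unique statistical pattern” idea and its fragility more concrete, here is a simplified Python sketch in the spirit of the “green list” watermark described by Kirchenbauer et al. (2023). A generator that knows a secret key prefers words whose (previous word, word) pair hashes onto a green list. A detector with the same key then counts green pairs and computes a z-score against the count expected from unwatermarked text. Everything here is an illustrative assumption rather than NIST guidance or any vendor’s method, including:

- word-level hashing with SHA-256,
- the hypothetical `KEY`,
- the toy vocabularies,
- treating word-for-word substitution as a stand-in for paraphrasing.

```python
import hashlib
import math

GAMMA = 0.5  # assumed share of (previous word, word) pairs that land on the "green list"
KEY = "demo-secret-key"  # hypothetical secret shared by the generator and the detector


def is_green(prev_word: str, word: str, key: str = KEY) -> bool:
    """Pseudo-randomly assign the pair (prev_word, word) to the green list via a keyed hash."""
    digest = hashlib.sha256(f"{key}|{prev_word}|{word}".encode("utf-8")).digest()
    return digest[0] < 256 * GAMMA


def watermark_z_score(words: list[str], key: str = KEY) -> float:
    """One-proportion z-test: how far the green-pair count exceeds chance (GAMMA)."""
    t = len(words) - 1  # number of scored (previous word, word) pairs
    if t <= 0:
        return 0.0
    greens = sum(is_green(words[i - 1], words[i], key) for i in range(1, len(words)))
    return (greens - GAMMA * t) / math.sqrt(t * GAMMA * (1 - GAMMA))


def generate_watermarked(vocab: list[str], length: int, key: str = KEY) -> list[str]:
    """Toy 'model': at each step, prefer a word whose pair with the previous word is green."""
    words = ["<start>"]
    for step in range(length):
        offset = step % len(vocab)
        candidates = vocab[offset:] + vocab[:offset]  # rotate so the output is not one word
        choice = next((w for w in candidates if is_green(words[-1], w, key)), candidates[0])
        words.append(choice)
    return words


VOCAB = ["the", "report", "says", "officials", "confirmed", "new", "data", "shows",
         "rates", "rose", "sharply", "across", "several", "regions", "this", "year",
         "according", "to", "local", "experts"]
# Crude stand-in for paraphrasing: each word is swapped for a rough synonym.
ALT_VOCAB = ["a", "study", "states", "authorities", "verified", "fresh", "figures", "indicates",
             "levels", "climbed", "steeply", "throughout", "multiple", "areas", "that", "season",
             "per", "for", "regional", "specialists"]


def paraphrase(words: list[str]) -> list[str]:
    """Replace every generated word with its counterpart in ALT_VOCAB."""
    return [words[0]] + [ALT_VOCAB[VOCAB.index(w)] for w in words[1:]]


if __name__ == "__main__":
    original = generate_watermarked(VOCAB, 100)
    print(f"watermarked z = {watermark_z_score(original):.1f}")
    print(f"paraphrased z = {watermark_z_score(paraphrase(original)):.1f}")
```

The watermarked sequence scores far above chance because almost every pair is green. The “paraphrased” version carries the same rough meaning in different words, so its pairs are no longer biased toward the green list, and its score typically falls back toward zero. This mirrors the weakness described above. A real scheme would operate on model tokens and logits, not whole words.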

Plan of Action

To capture the benefits of provenance while mitigating some of its weaknesses, NIST should issue guidance on how platforms can make both the provenance and the “fuzzy provenance” of text available to users. Fuzzy provenance is coined here to refer to exact text matches on the internet, which can sometimes reflect provenance but do not necessarily do so (thus “fuzzy”). Optionally, NIST could also consider issuing guidance on how generative AI companies can make their free models’ records available to be crawled and indexed by search engines. Fuzzy provenance results would then include text matches with generative AI model records. There are tradeoffs to this recommendation, which is why it is optional; see FAQs for further discussion. Making both provenance and fuzzy provenance information available (within one click) will give users more information to evaluate how trustworthy a piece of text is and reduce information integrity risks.

Combined Provenance and Fuzzy Provenance Approach

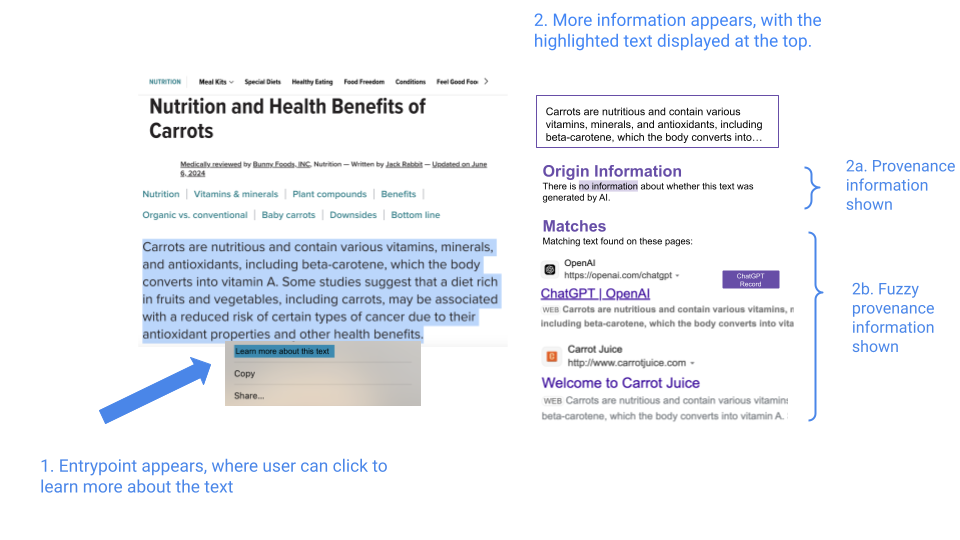

Figure 1. Mock implementation of combined provenance and fuzzy provenance

The above image captures what an implementation of the combined provenance and fuzzy provenance guidance might include. When a user highlights a piece of text that is sufficiently long, they can click “learn more about this text” to find more information.

There are ways to communicate provenance and fuzzy provenance so that they are both useful and easy to understand. In this concept showing the provenance of text, for example:

- Provenance information is shown under the heading “Origin Information”. This includes whether there is conclusive metadata (like watermarks) showing the text was generated by AI, according to C2PA standards, where available.

- Fuzzy provenance information is shown under the heading “Matches,” and includes other websites that have an exact text match to the highlighted text, similar to the results that come up when using a search engine. Furthermore, depending on NIST guidance, generative AI companies could have their free models’ records available to be crawled and indexed by search engines. This would enable these records to appear in the results if they contain an exact text match. These records would be clearly labeled (e.g., “ChatGPT Record”) and ranked at the top.

- See the “user journeys” video for what this might look like for a user.

Benefits of the Combined Approach

Showing both provenance and fuzzy provenance information provides users with critical context to evaluate the trustworthiness of a piece of text. Between provenance and fuzzy provenance, users would have access to information about many pieces of high-impact text, especially claims that could be particularly harmful for individuals, groups, or society at large. Making all this information immediately available also reduces friction for users so that they can get this information right where they encounter text.

Provenance information can be helpful to provide to users when it is available. For instance, knowing that a tech support company’s website description was AI-generated may encourage users to check other sources (like reviews) to see if the company is a real entity (and AI was used just to generate the description) or a fake entity entirely, before giving a deposit to hire the company (see user journey 1 in this video for an example).

Where clear provenance information is not available, fuzzy provenance can help fill the gap by providing valuable context to users in several ways:

- First, if there is no exact text match on the internet, it may indicate that the content is original—whether AI-generated or human-created—which can be especially relevant for assessing the trustworthiness of certain documents (see user journey 2 in this video for an example).

- Second, when presented with a misleading claim like “ginger is 10000x more effective than chemotherapy”, seeing that the text matches are from fact-check sites and unreliable sources such as other social media posts can encourage users to further investigate that claim (see user journey 3 in this video for an example).

- Third, absent any text matches from reliable sources, knowing that a claim (e.g., about carrots and cancer) matched an AI model’s record may cause a user to be skeptical, as they may realize that the text has the potential to be false content generated by AI (see user journey 4 in this video for an example).

Fuzzy provenance is also effective because it shows context and gives users the autonomy to decide how to interpret that context. Academic studies have found that users tend to be more receptive when presented with further information they can use for their own critical thinking than when shown a conclusion directly (like a label), which can even backfire or be misinterpreted. This may be why users can trust contextual methods, like crowdsourced information, more than provenance labels.

Finally, fuzzy provenance methods are generally feasible at scale, since they can be easily implemented with existing search engine capabilities (via an exact text match search). Furthermore, since fuzzy provenance only relies on exact text matching with other sources on the internet, it works without needing coordination among text-producers or compliance from bad actors.
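Because fuzzy provenance relies only on exact text matching, its core logic is small. The sketch below is hypothetical and is not drawn from any existing search engine API: an in-memory `pages` dictionary stands in for a search index, the AI-record domains and labels in `AI_RECORD_LABELS` are assumptions, and whitespace and letter case are normalized before matching (a choice a real implementation might make differently). Following the mock implementation in Figure 1, labeled AI records are ranked first.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# HYPOTHETICAL mapping from AI-record domains to display labels (illustrative only).
AI_RECORD_LABELS = {
    "chatgpt.com": "ChatGPT Record",
    "perplexity.ai": "Perplexity Record",
}


@dataclass
class Match:
    url: str
    label: str          # e.g. "ChatGPT Record" or "Web page"
    is_ai_record: bool


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial formatting differences don't block a match."""
    return " ".join(text.split()).lower()


def fuzzy_provenance(highlight: str, pages: dict[str, str], min_words: int = 5) -> list[Match]:
    """Return every indexed page containing the highlighted text verbatim.

    `pages` maps URL -> page text and stands in for a search engine index.
    Highlights shorter than `min_words` are ignored, since very short
    phrases match almost everywhere.
    """
    query = normalize(highlight)
    if len(query.split()) < min_words:
        return []
    matches = []
    for url, body in pages.items():
        if query in normalize(body):
            label = AI_RECORD_LABELS.get(urlparse(url).hostname or "")
            matches.append(Match(url, label or "Web page", label is not None))
    # Clearly labeled AI records are ranked first; sorted() is stable, so
    # the remaining results keep their original order.
    return sorted(matches, key=lambda m: not m.is_ai_record)
```

Highlighting the ginger claim from user journey 3 against an index containing a fact-check page and a matching ChatGPT record would return the labeled ChatGPT record first, followed by the fact-check page. The `min_words` threshold reflects the mock interface’s requirement that highlighted text be “sufficiently long”; its value here is an arbitrary assumption.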

Conclusion

To reduce the information integrity risks posed by synthetic text in a scalable and effective way, the National Institute of Standards and Technology (NIST) should develop community guidance on how platforms hosting text-based digital content can make the provenance and “fuzzy provenance” of a piece of text accessible (in no more than one click), when available. NIST should also consider issuing guidance on how AI companies could make their free generative AI models’ records available to be crawled by search engines, to amplify the effectiveness of fuzzy provenance.

This action-ready policy memo is part of Day One 2025 — our effort to bring forward bold policy ideas, grounded in science and evidence, that can tackle the country’s biggest challenges and bring us closer to the prosperous, equitable and safe future that we all hope for whoever takes office in 2025 and beyond.

PLEASE NOTE (February 2025): Since publication several government websites have been taken offline. We apologize for any broken links to once accessible public data.

Making free generative AI records available to be crawled by search engines involves tradeoffs, which is why it is an optional recommendation to consider. Below are some questions regarding implementation guidance and tradeoffs, including privacy and proprietary information.

Guidance could instruct AI model companies how to make their free generative AI conversation records available to be crawled and indexed by search engines. Similar to ChatGPT logs or Perplexity threads, a unique URL would be created for each conversation, capturing the date it occurred. The key difference is that all free model conversation records would be made available, but only with the AI outputs of the conversation, after removing personally identifiable information (PII) (see “Privacy considerations” section below). Because users can already choose to share conversations with each other (meaning the conversation logs are retained), and conversation logs for major model providers do not currently appear to have an expiration date, this requirement shouldn’t impose an additional storage burden for AI model companies.
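The record format described above could be sketched as follows. This is a hypothetical illustration, not any AI company’s actual sharing mechanism: the base URL `https://records.example.com/r/`, the `(role, text)` turn format, and the `redact_pii` helper are all assumptions, and the email-only redaction is a toy placeholder for the much broader PII filtering the guidance would require.

```python
import re
import uuid
from dataclasses import dataclass
from datetime import date

# Toy placeholder: masks email addresses only. A real PII filter would need
# to cover names, phone numbers, addresses, and much more.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_pii(text: str) -> str:
    return EMAIL_PATTERN.sub("[REDACTED]", text)


@dataclass(frozen=True)
class PublicRecord:
    url: str                     # unique, crawlable URL for this conversation
    created: date                # date the conversation occurred
    ai_outputs: tuple[str, ...]  # model responses only; user prompts are dropped


def to_public_record(turns: list[tuple[str, str]], created: date,
                     base_url: str = "https://records.example.com/r/") -> PublicRecord:
    """Build a crawlable record from (role, text) turns, keeping only AI outputs."""
    outputs = tuple(redact_pii(text) for role, text in turns if role == "assistant")
    return PublicRecord(url=base_url + uuid.uuid4().hex, created=created, ai_outputs=outputs)
```

Dropping user turns entirely, rather than redacting them, mirrors the memo’s proposal that only AI outputs be published.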

Guidance could instruct search engines on how to crawl and index these model logs so that queries with exact text matches to the AI outputs would surface the appropriate model logs. This would not be very different from search engines crawling and indexing other types of new URLs and should be well within existing search engine capabilities. Storage should also not be a concern, since only free model logs would be crawled and indexed, and most free models rate-limit the number of user messages allowed. For instance, even with 200 million weekly active users for ChatGPT, the number of conversations in a year would only be on the order of billions, well within the scale at which existing search engines already operate to let users “search the web”.
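The storage estimate can be sanity-checked with back-of-envelope arithmetic. The sketch below assumes, purely for illustration, that each weekly active user starts one free-model conversation per week; that rate is a hypothetical parameter, not a figure from this memo.

```python
# Back-of-envelope estimate of yearly free-model conversation records.
# ASSUMPTION: conversations_per_user_per_week is hypothetical, for illustration only.
WEEKLY_ACTIVE_USERS = 200_000_000  # figure cited above for ChatGPT
WEEKS_PER_YEAR = 52


def yearly_conversations(weekly_active_users: int,
                         conversations_per_user_per_week: float) -> int:
    """Total conversation records created in one year."""
    return round(weekly_active_users * conversations_per_user_per_week * WEEKS_PER_YEAR)


estimate = yearly_conversations(WEEKLY_ACTIVE_USERS, 1)
print(f"{estimate:,} conversations per year")
```

Under this assumption the total is roughly 10.4 billion records per year. The result scales linearly with the assumed rate, so halving or doubling it halves or doubles the estimate.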

- Output filtering should be applied to the AI outputs to remove any personally identifiable information (PII) present in the model’s responses. However, it might still be possible to identify the original user just by looking at the AI outputs taken together and inferring some of the user prompts. This privacy concern should be investigated further. Possible mitigations include additionally removing location references below a certain granularity (e.g., removing mentions of neighborhoods but retaining mentions of states) and presenting the AI responses in a conversation in randomized order.

- Removals should be made possible by a user-initiated process demonstrating privacy concerns, similar to existing search engine removal protocols.

- User consent would also be an important consideration here. NIST could propose that free model users must opt in, or that users be able to opt out of free model record crawling and indexing, though either approach may greatly compromise the reliability of fuzzy provenance.

- Training on AI-generated text: AI companies are concerned about accidentally picking up too much AI-generated text on the web and training on it instead of higher-quality human-generated text, thus degrading the quality of their own generative models. However, because these records would sit on identifiable domains (e.g., chatgpt.com, perplexity.ai), it would be easy to exclude them from training data if desired. Indeed, provenance and fuzzy provenance may help AI companies avoid unintentionally training on AI-generated text.

- Sharing model outputs: On the flip side, AI companies might be concerned that making so many AI-generated outputs available to competitors may help those competitors improve their own models. This is a fair concern, though it is partially mitigated because a) only the AI outputs, not the specific user inputs, would be available; and b) only free model outputs would be logged, not outputs from premium models, providing some proprietary protection. However, competitors may still be able to enhance their own responses by training on the structure of other models’ AI outputs at scale.
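Excluding conversation records from a training corpus by domain, as suggested in the point on training on AI-generated text, could be as simple as the hypothetical filter below. The domain list is an assumption, and because these domains also host pages other than conversation records, a real filter might instead key on a record-specific URL path.

```python
from urllib.parse import urlparse

# HYPOTHETICAL list of domains hosting public AI conversation records.
AI_RECORD_DOMAINS = ("chatgpt.com", "perplexity.ai")


def is_ai_record(url: str) -> bool:
    """True if the URL's host is an AI-record domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in AI_RECORD_DOMAINS)


def filter_training_corpus(docs: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Drop (url, text) pairs whose URL points at an AI conversation record."""
    return [(url, text) for url, text in docs if not is_ai_record(url)]
```

Matching on the exact host or a dot-prefixed suffix avoids accidentally excluding look-alike domains such as `notchatgpt.com`.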

Blank Checks for Black Boxes: Bring AI Governance to Competitive Grants

The misuse of AI in federally-funded projects can risk public safety and waste taxpayer dollars.

The Trump administration has a pivotal opportunity to spot wasteful spending, promote public trust in AI, and safeguard Americans from unchecked AI decisions. To tackle AI risks in grant spending, grant-making agencies should adopt trustworthy AI practices in their grant competitions and start enforcing them against reckless grantees.

Federal AI spending could soon skyrocket. One ambitious legislative plan from a Senate AI Working Group calls for doubling non-defense AI spending to $32 billion a year by 2026. That funding would grow AI across R&D, cybersecurity, testing infrastructure, and small business support.

Yet as federal AI investment accelerates, safeguards against snake oil lag behind. Grants can be wasted on AI that doesn’t work. Grants can pay for untested AI with unknown risks. Grants can blur the lines of who is accountable for fixing AI’s mistakes. And grants offer little recourse to those affected by an AI system’s flawed decisions. Such failures risk exacerbating public distrust of AI, discouraging possible beneficial uses.

Oversight for federal grant spending is lacking, with:

- No AI-specific policies vetting discretionary grants

- Varying AI expertise on grant judging panels

- Unclear AI quality standards set by grantmaking agencies

- Little-to-no pre- or post-award safeguards that identify and monitor high-risk AI deployments.

Watchdogs, meanwhile, play a losing game, chasing after errant programs one by one only after harm has been done. Luckily, momentum is building for reform. Policymakers recognize that investing in untrustworthy AI erodes public trust and stifles genuine innovation. Steps policymakers could take include setting clear AI quality standards, training grant judges, monitoring grantees’ AI usage, and evaluating outcomes to ensure projects achieve their potential. By establishing oversight practices, agencies can foster high-potential projects for economic competitiveness while protecting the public from harm.

Challenge and Opportunity

Poor AI Oversight Jeopardizes Innovation and Civil Rights

The U.S. government advances public goals in areas like healthcare, research, and social programs by providing various types of federal assistance. This funding can go to state and local governments or directly to organizations, nonprofits, and individuals. When federal agencies award grants, they typically do so expecting less routine involvement than they would with other funding mechanisms, for example cooperative agreements. Not all federal grants look the same—agencies administer mandatory grants, where the authorizing statute determines who receives funding, and competitive grants (or “discretionary grants”), where the agency selects award winners. In competitive grants, agencies have more flexibility to set program-specific conditions and award criteria, which opens opportunities for policymakers to structure how best to direct dollars to innovative projects and mitigate emerging risks.

These competitive grants fall short on AI oversight. Programmatic policy is set in cross-cutting laws, agency-wide policies, and grant-specific rules; a lack of AI oversight mars all three. To date, no government-wide AI regulation extends to AI grantmaking. Even when President Biden’s 2023 AI Executive Order directed agencies to implement responsible AI practices, the order’s implementing policies exempted grant spending (see footnote 25) entirely from the new safeguards. In this vacuum, the 26 grantmaking agencies are on their own to set agency-wide policies. Few have. Agencies can also set AI rules just for specific funding opportunities. They rarely do. In fact, in a review of a large set of agency discretionary grant programs, only a handful of funding notices announced a standard for AI quality in a proposed program. (See: One Bad NOFO?) The net result? A policy and implementation gap for the use of AI in grant-funded programs.

Funding mistakes damage agency credibility, stifle innovation, and undermine the support that financial assistance aims to provide to people and communities. Recent controversies highlight how today’s lax measures, particularly in setting clear rules for federal financial assistance, monitoring how funds are used, and responding to public feedback, have led to inefficient and rights-trampling results. In just the last few years, some of the problems we have seen include:

- The Department of Housing and Urban Development set few rules on a grant to protect public housing residents, letting officials off the hook when they bought facial recognition cameras to surveil and evict residents.

- Senators called for a pause on predictive policing grants, finding the Department of Justice failed to check that their grantees’ use of AI complied with civil rights laws—the same laws on which the grant awards were conditioned.

- The National Institute of Justice issued a recidivism forecasting challenge which researchers argued “incentivized exploiting the metrics used to judge entrants, leading to the development of trivial solutions that could not realistically work in practice.”

Any grant can attract controversy, and these grants are no exception. But the cases above spotlight transparency, monitoring, and participation deficits—the same kinds of AI oversight problems weakening trust in government that policymakers aim to fix in other contexts.

Smart spending depends on careful planning. Without it, programs may struggle to drive innovation or end up funding AI that infringes people’s rights. OMB, agency Inspectors General, and grant managers will need guidance to evaluate how much money is going toward AI and how to implement effective oversight. Government will face tradeoffs and challenges in promoting AI innovation through federal grants, particularly due to:

1) The AI screening problem. When reviewing applications, agencies might fail to screen out candidates that exaggerate their AI capabilities, or that fail to report bunk AI use altogether. Grantmaking requires calculated risks on ideas that might fail. But grant judges who are not experts in AI can make bad bets. Applicants will pitch AI solutions directly to these non-experts, and grant winners, regardless of their original proposals, will likely purchase and deploy AI, creating additional oversight challenges.

2) The grant-procurement divide. When planning a grant, agencies might set overly burdensome restrictions that dissuade qualified applicants from applying or otherwise take up too much time, getting in the way of grant goals. Grants are meant to be hands-off; fostering breakthroughs while preventing negligence will be a challenging needle to thread.

3) Limited agency capacity. Agencies may be ill-equipped to monitor grant recipients’ use of AI. After awarding funding, agencies can miss when vetted AI breaks down on launch. While agencies audit grantees, those audits typically focus on fraud and financial missteps. In some cases, agencies may not be measuring grantee performance well at all (slides 12-13). Yet regular monitoring, similar to the oversight used in procurement, will be necessary to catch emergent problems that affect AI outcomes. Enforcement, too, could be cause for concern; agencies claw back funds for procedural issues, but “almost never withhold federal funds when grantees are out of compliance with the substantive requirements of their grant statutes.” Even as the funding agency steps away, an inaccurate AI system can persist, embedding risks over a longer period of time.

Plan of Action

Recommendation 1. OMB and agencies should bake in pre-award scrutiny through uniform requirements and clearer guidelines

- Agencies should revise funding notices to require applicants to disclose plans to use AI, with a greater level of disclosure required for funding in a foreseeably high-risk context. Agencies should take care not to overburden applicants with disclosures on routine AI uses, particularly as the tools grow in popularity. States may be a laboratory to watch for policy innovation; Illinois, for example, has proposed AI disclosure policies and penalties for its state grants.

- Agencies should make AI-related grant policies clearer to prospective applicants. Such a change would be consistent with OMB policy and the latest Uniform Guidance, the set of rules OMB sets for agencies to manage their grants. For example, in grant notices, any AI-related review criteria should be plainly stated, rather than inferred from a project’s description. Any AI restrictions should be spelled out too, not merely incorporated by reference. More generally, agencies should consider simplifying grant notices, publishing yearly AI grant priorities, hosting information sessions, and/or extending public comment periods for significant AI-related discretionary spending.

- Agencies could consider generally applicable metrics with which to evaluate applicants’ proposed AI uses. For example, agencies may require applicants to demonstrate they have searched for less discriminatory algorithms in the development of an automated system.

- OMB could formally codify pre-award AI risk assessments in the Uniform Guidance. OMB updates its guidance periodically (with the most recent updates in 2024) and can also issue smaller revisions.

- Agencies could also provide resources targeted at less established AI developers, who might otherwise struggle to meet auditing and compliance standards.
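The memo does not prescribe a specific metric. As one hypothetical illustration of a generally applicable check, the sketch below compares candidate models by their adverse impact ratio (the lowest group selection rate divided by the highest), the quantity behind the EEOC’s “four-fifths” rule of thumb in employment selection, and picks the least discriminatory model among those that meet an accuracy floor. The model names, groups, data format, and threshold are all assumptions.

```python
from typing import Optional


def selection_rate(decisions: list[int]) -> float:
    """Share of favorable outcomes (1s) in a list of 0/1 decisions."""
    return sum(decisions) / len(decisions)


def adverse_impact_ratio(decisions_by_group: dict[str, list[int]]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity).

    Assumes at least one group has a nonzero selection rate.
    """
    rates = [selection_rate(d) for d in decisions_by_group.values()]
    return min(rates) / max(rates)


def least_discriminatory(candidates: dict[str, tuple[float, dict[str, list[int]]]],
                         min_accuracy: float) -> Optional[str]:
    """Among candidates meeting the accuracy floor, return the name with the
    highest adverse impact ratio, or None if no candidate qualifies.

    `candidates` maps a model name to (accuracy, decisions_by_group).
    """
    eligible = {name: adverse_impact_ratio(groups)
                for name, (accuracy, groups) in candidates.items()
                if accuracy >= min_accuracy}
    if not eligible:
        return None
    return max(eligible, key=eligible.get)
```

Requiring a performance floor before comparing disparities reflects the idea of searching for a less discriminatory alternative that still serves the program’s purpose; where to set that floor would be a policy judgment for the agency.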

Recommendation 2. OMB and grant marketplaces should coordinate information sharing between agencies

To support review of AI-related grants, OMB and grantmaking agency staff should pool knowledge on AI’s tricky legal, policy, and technical matters.

- OMB, through its Council on Federal Financial Assistance, should coordinate information-sharing between grantmaking agencies on AI risks.

- The White House Office of Science and Technology Policy, the National Institute of Standards and Technology, and the Administrative Conference of the United States (ACUS) should support agencies by determining what information agencies should collect on their grantees’ use of AI, so as to limit the administrative burden on grantees.

- OMB can share expertise on monitoring and managing grant risks, best practices and guides, and tradeoffs; other relevant interagency councils can share grant evaluation criteria and past performance templates across agencies.

- Online grants marketplaces, such as the Grants Quality Service Management Office Marketplace operated by the Department of Health and Human Services, should improve grantmakers’ decisions by sharing AI-specific information, such as an applicant’s AI quality and audit and enforcement history, where applicable.

Recommendation 3. Agencies should embrace targeted hiring and talent exchanges for grant review boards

Agencies should have experts in a given AI topic judging grant competitions. To do so requires overcoming talent acquisition challenges. To that end:

- Agency Chief AI Officers should assess grant office needs as part of their OMB-required assessments on AI talent. Those officers should also include grant staff in any AI trainings and, when prudent, in agency-wide risk assessment meetings.

- Agencies should staff review boards with technical experts through talent exchanges and targeted hiring.

- OMB should coordinate drop-in technical experts who can sit in as consultants across agencies.

- OMB should support the training of federal grants staff on matters that touch AI, particularly as surveyed grant managers see training as an area of need.

Recommendation 4. Agencies should step up post-award monitoring and enforcement

You can’t improve what you don’t measure—especially when it comes to AI. Quantifying, documenting, and enforcing against careless AI uses can be a new task for grantmaking agencies. Incident reporting will improve the chances that existing cross-cutting regulations, including civil rights laws, can reel back AI gone awry.

- Congress can delegate investigative authority to agencies with AI audit expertise. Such an effort might mirror the cross-agency approach taken by the Department of Justice’s Procurement Collusion Strike Force, which investigates antitrust crimes in procurement and grantmaking.

- Congress can require agencies to cut off funds when grantees show repeated or egregious violations of grant terms pertaining to AI use. Agencies, where authorized, should voluntarily enforce against repeat bad players through spending clawbacks, cutoffs or ban lists.

- Agencies should consider introducing dispute resolution procedures that give redress to enforced-upon grantees.

Recommendation 5. Agencies should encourage and fund efforts to investigate and measure AI harms

- Agencies should invest in establishing measurement and standards within their topic areas on which to evaluate prospective applicants. For example, the National Institute of Justice recently opened funding to research evaluating the use of AI in the criminal legal system.

- Agencies should follow through on longstanding calls to encourage public whistleblowing on grantee missteps, particularly around AI.

- Agencies should solicit feedback from the public through RFIs on grants.gov on how to innovate in AI in their specific research or topic area.

Conclusion

Little limits how grant winners can spend federal dollars on AI. With the government poised to massively expand its spending on AI, that should change.

The federal failure to oversee AI use in grants erodes public trust, civil rights, effective service delivery, and the promise of government-backed innovation. Congressional efforts to remedy these problems, such as starting probes and drafting letters, are important oversight measures, but they only come after the damage is done.

Both the Trump and Biden administrations have recognized that AI is exceptional and needs exceptional scrutiny. Many of the lessons learned from scrutinizing federal agency AI procurement apply to grant competitions. Today’s confluence of public will, interest, and urgency is a rare opportunity to widen the aperture of AI governance to include grantmaking.

Agencies’ enabling statutes are often the authority for grant competitions, and the statutory language leaves it to agencies to set further specific policies for each competition. Additionally, laws like the DATA Act and the Federal Grant and Cooperative Agreement Act offer definitions and guidance to agencies on the use of federal funds.

Agencies already conduct a great deal of pre-award planning to align grantmaking with Executive Orders. For example, in one survey of grantmakers, a little over half of respondents updated their pre-award processes, such as applications and organization information, to comply with an Executive Order. Grantmakers aligning grant planning with the Trump administration’s future Executive Orders will likely follow similar steps.

A wide range of states, local governments, companies, and individuals receive grant competition funds. Spending records, available on USASpending.gov, give some insight into where grant funding goes, though these records, too, can be incomplete.

Fighting Fakes and Liars’ Dividends: We Need To Build a National Digital Content Authentication Technologies Research Ecosystem

The U.S. faces mounting challenges posed by increasingly sophisticated synthetic content: digital media (images, audio, video, and text) that is increasingly produced or manipulated by generative artificial intelligence (AI). There has already been a proliferation of generative AI abuse that weaponizes synthetic content for harmful purposes, such as financial fraud, political deepfakes, and the non-consensual creation of intimate materials featuring adults or children. As people become less able to distinguish between what is real and what is fake, it has become easier than ever to be misled by synthetic content, whether by accident or through malicious intent. This makes advancing alternative countermeasures, such as technical solutions, more vital than ever. To address the growing risks arising from synthetic content misuse, the National Institute of Standards and Technology (NIST) should take the following steps to create and cultivate a robust digital content authentication technologies research ecosystem: 1) establish dedicated university-led national research centers, 2) develop a national synthetic content database, and 3) run and coordinate prize competitions to strengthen technical countermeasures. In turn, these initiatives will require 4) dedicated and sustained Congressional funding. This will enable technical countermeasures to keep closer pace with the rapidly evolving synthetic content threat landscape, maintaining the U.S.’s role as a global leader in responsible, safe, and secure AI.

Challenge and Opportunity

While generative AI clearly offers tremendous benefits, such as for scientific research, healthcare, and economic innovation, the technology also poses an accelerating threat to U.S. national interests. Generative AI’s ability to produce highly realistic synthetic content has increasingly enabled its harmful abuse and undermined public trust in digital information. Threat actors have already begun to weaponize synthetic content across a widening scope of damaging activities to growing effect. Losses from AI-enabled fraud are projected to reach up to $40 billion by 2027, while experts estimate that millions of adults and children have already been targeted by AI-generated or manipulated nonconsensual intimate media or child sexual abuse materials, a figure that is anticipated to grow rapidly in the future. While widespread fears that manipulative synthetic content would compromise the integrity of the 2024 U.S. election did not ultimately materialize, malicious AI-generated content was nonetheless found to have shaped election discourse and bolstered damaging narratives. Equally concerning is the cumulative effect this increasingly widespread abuse is having on the broader erosion of public trust in the authenticity of all digital information. This degradation of trust has not only led to an alarming trend of authentic content being increasingly dismissed as ‘AI-generated’, but has also empowered those seeking to discredit the truth, a phenomenon known as the “liar’s dividend”.

A. In March 2023, a humorous synthetic image of Pope Francis wearing a Balenciaga coat, first posted on Reddit by creator Pablo Xavier, quickly went viral across social media.

B. In May 2023, a synthetic image was deceptively published on X as an authentic photograph of an explosion near the Pentagon. Before being debunked by authorities, the image’s widespread circulation online caused significant confusion and even led to a temporary dip in the U.S. stock market.

Research has demonstrated that current generative AI technology can produce synthetic content realistic enough that people can no longer reliably distinguish between AI-generated and authentic media. It is no longer feasible to continue, as we currently do, to rely predominantly on human perception to protect against the threat arising from increasingly widespread synthetic content misuse. This new reality only increases the urgency of deploying robust alternative countermeasures to protect the integrity of the information ecosystem. The suite of digital content authentication technologies (DCAT), meaning the techniques, tools, and methods that seek to make the legitimacy of digital media transparent to the observer, offers a promising avenue for addressing this challenge. These technologies encompass a range of solutions, from identification techniques such as machine detection and digital forensics to classification and labeling methods like watermarking or cryptographic signatures. DCAT also encompasses technical approaches that aim to record and preserve the origin of digital media, including content provenance, blockchain, and hashing.
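Of the origin-preserving approaches listed above, hashing is the simplest to illustrate. The sketch below is a hypothetical, minimal registry that records the origin of a media file under its SHA-256 digest; `ProvenanceRegistry` and its methods are illustrative names, not a NIST tool or DCAT standard. It also shows one source of brittleness: because the digest covers the exact bytes, any edit or re-encoding breaks the link to the recorded origin.

```python
import hashlib
from typing import Optional


def fingerprint(content: bytes) -> str:
    """SHA-256 digest of the exact bytes of a media file."""
    return hashlib.sha256(content).hexdigest()


class ProvenanceRegistry:
    """HYPOTHETICAL registry mapping content fingerprints to origin information."""

    def __init__(self) -> None:
        self._origins: dict[str, str] = {}

    def register(self, content: bytes, origin: str) -> None:
        self._origins[fingerprint(content)] = origin

    def lookup(self, content: bytes) -> Optional[str]:
        # Any change to the bytes (an edit, a crop, even re-encoding) produces a
        # different digest, so altered copies are not found.
        return self._origins.get(fingerprint(content))
```

Registering a newsroom photo and later looking up an identical copy returns its recorded origin, while a copy differing by a single byte returns `None`. Perceptual hashing and watermarking aim to survive such transformations, at the cost of other tradeoffs.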

Evolution of Synthetic Media

Published in 2018, this now infamous PSA sought to illustrate the dangers of synthetic content. It shows an AI-manipulated video of President Obama, using narration from a comedy sketch by comedian Jordan Peele.

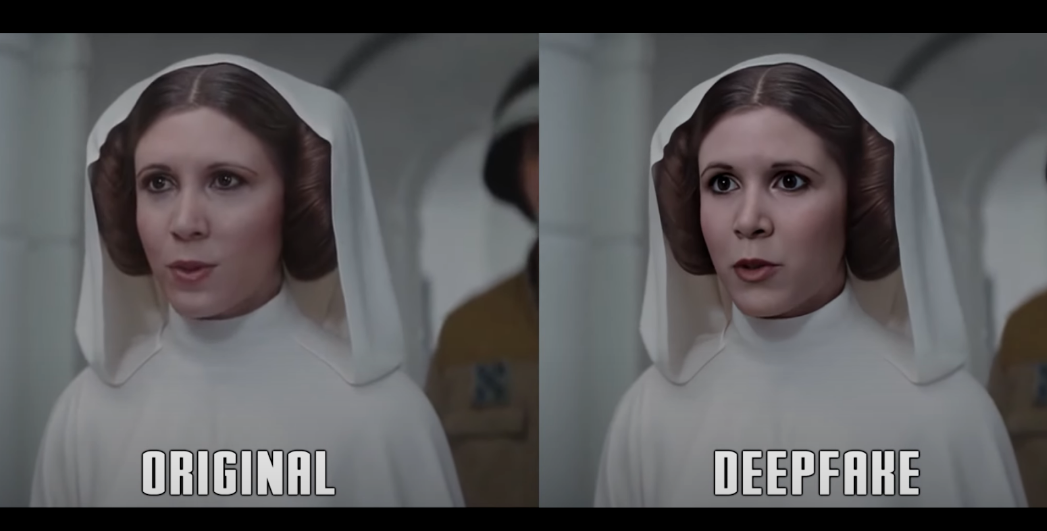

In 2020, a hobbyist creator employed an open-source generative AI model to ‘enhance’ the Hollywood CGI version of Princess Leia in the film Rogue One.

The hugely popular TikTok account @deeptomcruise posts parody videos featuring a Tom Cruise impersonator face-swapped with the real Tom Cruise’s face, including this 2022 video, which racked up millions of views.

The 2024 film Here relied extensively on generative AI technology to de-age and face-swap actors in real-time as they were being filmed.

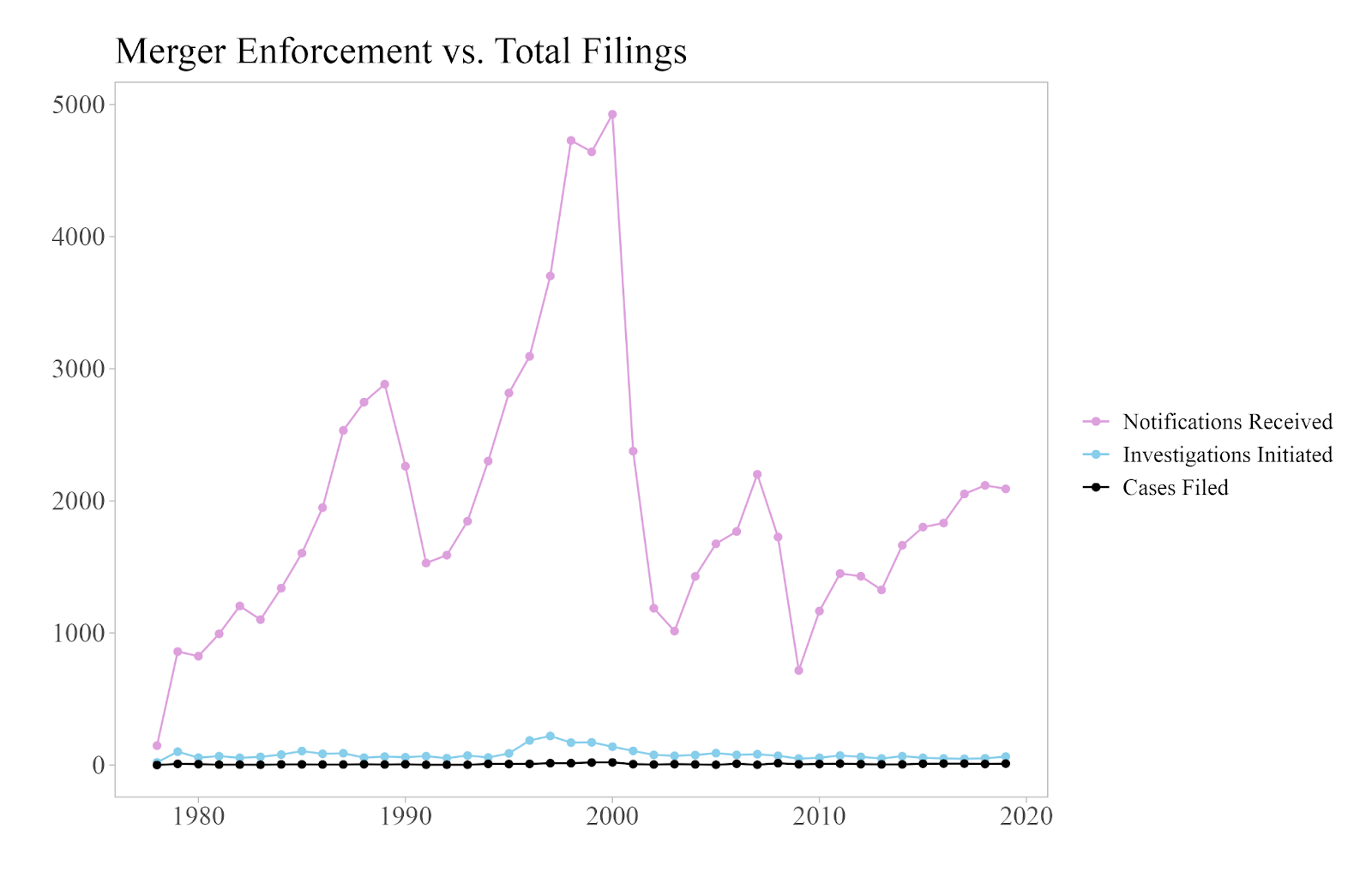

Robust DCAT capabilities will be indispensable for defending against the harms posed by synthetic content misuse, as well as bolstering public trust in both information systems and AI development. These technical countermeasures will be critical for alleviating the growing burden on citizens, online platforms, and law enforcement to manually authenticate digital content. Moreover, DCAT will be vital for enforcing emerging legislation, including AI labeling requirements and prohibitions on illegal synthetic content. The importance of developing these capabilities is underscored by the ten bills (see Fig 1) currently under Congressional consideration that, if passed, would require the employment of DCAT-relevant tools, techniques, and methods.

However, significant challenges remain. Many DCAT capabilities still have weaknesses or limitations, such as brittleness or security gaps, and need to be improved. Moreover, implementing these countermeasures must be carefully managed to avoid unintended consequences in the information ecosystem, like deploying confusing or ineffective labeling to denote the presence of real or fake digital media. As a result, substantial investment is needed in DCAT R&D to develop these technical countermeasures into an effective and reliable defense against synthetic content threats.

The U.S. government has demonstrated its commitment to advancing DCAT to reduce synthetic content risks through recent executive actions and agency initiatives. The 2023 Executive Order on AI (EO 14110) mandated the development of content authentication and tracking tools. Charged by EO 14110 with addressing these challenges, NIST has taken several steps towards advancing DCAT capabilities. For example, NIST’s recently established AI Safety Institute (AISI) leads this work in partnership with NIST’s AI Innovation Lab (NAIIL). Key developments include: the dedication of one of the U.S. Artificial Intelligence Safety Institute Consortium’s (AISIC) working groups to identifying and advancing DCAT R&D; the publication of NIST AI 100-4, which “examines the existing standards, tools, methods, and practices, as well as the potential development of further science-backed standards and techniques” regarding current and prospective DCAT capabilities; and the $11 million dedicated to international research on addressing dangers arising from synthetic content announced at the first convening of the International Network of AI Safety Institutes. Additionally, NIST’s Information Technology Laboratory (ITL) has launched the GenAI Challenge Program to evaluate and advance DCAT capabilities. Meanwhile, two pending bills in Congress, the Artificial Intelligence Research, Innovation, and Accountability Act (S. 3312) and the Future of Artificial Intelligence Innovation Act (S. 4178), include provisions for DCAT R&D.

Although these are critical first steps, an ambitious and sustained federal effort is still needed to advance technical countermeasures such as DCAT. Such an effort would let the U.S. combat the risks posed by synthetic content more successfully, both now and in the long term. To gain and maintain a competitive edge in the ongoing race between deception and detection, it is vital to establish a robust national research ecosystem that fosters agile, comprehensive, and sustained DCAT R&D.

Plan of Action

NIST should pursue three initiatives:

1) establishing dedicated university-based DCAT research centers;
2) curating and maintaining a shared national database of synthetic content for training and evaluation; and
3) running and overseeing regular federal prize competitions to drive innovation on critical DCAT challenges.

These programs, which should be spearheaded by AISI and NAIIL, are critical to creating a robust and resilient U.S. DCAT research ecosystem. In addition, the 118th Congress should 4) allocate dedicated funding to support these initiatives.

These recommendations are designed not only to accelerate DCAT capabilities in the immediate future but also to build a strong foundation for long-term DCAT R&D. As generative AI capabilities expand, authentication technologies must keep pace. Developing and deploying effective technical countermeasures will therefore require ongoing, iterative work. Success demands extensive collaboration across the technology and research sectors to expand problem coverage, maximize resources, avoid duplication, and accelerate the development of effective solutions. This coordinated approach is essential given the diverse range of technologies and methodologies that must be considered when addressing synthetic content risks.

Recommendation 1. Establish DCAT Research Institutes

NIST should establish a network of dedicated university-based research centers to scale up and foster long-term, fundamental DCAT R&D. These centers would be headquartered at leading universities but would collaborate with academic, civil society, industry, and government partners. They would serve as nationwide focal points for DCAT research, bringing together cross-sector expertise. The centers would complement existing NIST initiatives such as the GenAI Challenge. AISI and NAIIL would guide their research priorities, with expert input from the AISIC, the International Network of AI Safety Institutes, and other key stakeholders.

A distributed research network offers several strategic advantages. It draws on leading expertise from industry and academia. Permanent institutions dedicated to DCAT R&D also enable sustained, iterative development, helping authentication technologies keep pace with advancing generative AI capabilities. Meanwhile, central coordination by AISI and NAIIL would ensure comprehensive coverage of research priorities while minimizing redundant efforts. Such a structure provides the foundation for the robust, long-term research ecosystem needed to develop effective countermeasures against synthetic content threats.

There are multiple pathways through which dedicated DCAT research centers could be stood up. One approach is direct NIST funding and oversight, following the model of Carnegie Mellon University's AI Cooperative Research Center. Alternatively, centers could be established through the National AI Research Institutes Program, similar to the University of Maryland's Institute for Trustworthy AI in Law & Society. This route would leverage NSF's existing partnership with NIST.

The DCAT research agenda could be structured in two ways.

In a vertical approach informed by NIST AI 100-4, each center would be assigned a specific technology, such as digital watermarking, metadata recording, provenance data tracking, or synthetic content detection. Each center would then cover all aspects of its assigned capability:
- improving the robustness and security of existing countermeasures;
- developing new techniques to address current limitations;
- conducting real-world testing and evaluation, especially in cross-platform environments; and
- studying interactions with other technical safeguards and with non-technical countermeasures such as regulations or educational initiatives.

Alternatively, a horizontal approach would divide research agendas across areas such as:
- advancing multiple established DCAT techniques, tools, and methods;
- developing novel techniques, tools, and methods;
- testing and evaluating combined technical approaches in real-world settings; and
- examining how multiple technical countermeasures interact with human factors, such as label perception, and with non-technical countermeasures.

Either framework provides a strong foundation for advancing DCAT capabilities. Given institutional expertise and practical considerations, however, a hybrid model combining both approaches is likely the most feasible option.

Recommendation 2. Build and Maintain a National Synthetic Content Database

NIST should also build and maintain a national synthetic content database to advance and accelerate DCAT R&D. It would resemble existing federal initiatives such as NIST's National Software Reference Library and NSF's National AI Research Resource (NAIRR) pilot. Current DCAT R&D is severely constrained by limited access to diverse, verified, and up-to-date training and testing data. Many researchers, especially in academia, lack the resources to build and maintain their own datasets, even though academia is where a significant portion of DCAT research takes place. The result is authentication tools that are less accurate and more narrowly applicable, and that struggle to keep pace with rapidly advancing AI capabilities.

A centralized database of synthetic and authentic content would accelerate DCAT R&D in several critical ways.

First, it would significantly reduce the burden on research teams of generating or collecting synthetic data for training and evaluation. This would enable less well-resourced groups to conduct research and allow researchers to focus on other aspects of R&D. It would also provide much-needed resources for the NIST-facilitated university-based research centers and prize competitions proposed here.

Second, a shared database could provide more comprehensive coverage of the increasingly varied synthetic content being created today, permitting the development of more effective and robust authentication capabilities.

Third, the database would be useful for establishing standardized evaluation metrics for DCAT capabilities, one of NIST's critical aims for addressing the risks posed by AI technology.

A national database would need to be comprehensive, encompassing samples of both early and state-of-the-art synthetic content. It should include both controlled, laboratory-generated datasets and verified "in the wild" (real-world) synthetic content, covering benign as well as potentially harmful examples. Diversity is also critical to the database's utility. Its synthetic content should span multiple individual and combined modalities (text, image, audio, video). It should also feature varied human populations as well as a range of non-human subject matter. Finally, generative AI capabilities will continue to evolve. To stay relevant, the database will need to routinely incorporate novel synthetic content that reflects the latest advances in generation techniques.

Initially, the database could build on NIST's GenAI Challenge project work, which includes "evolving benchmark dataset creation." As it scales up, however, it should operate as a standalone program with dedicated resources. The database could be grown and maintained through dataset contributions from AISIC members, industry partners, and academic institutions. Suitable contributors would be those that have generated synthetic content datasets themselves. They would also include generative AI technology providers that have the ability to create the large-scale and diverse datasets required. NIST would also direct targeted dataset acquisition to address specific gaps and evaluation needs.

Recommendation 3. Run Public Prize Competitions on DCAT Challenges

Third, NIST should set up and run a coordinated prize competition program. It should also serve as the federal oversight lead for prize competitions run by other agencies. The competitions would build on existing models such as DARPA SemaFor's AI FORCE and the FTC's Voice Cloning Challenge. They would address expert-identified priorities as informed by the AISIC, the International Network of AI Safety Institutes, and the proposed national DCAT research centers. Competitions are a proven approach to spurring innovation on complex technical challenges, enabling the rapid identification of solutions through diverse engagement. Monetary prizes are especially effective at driving engagement. For example, the 2019 Kaggle Deepfake Detection competition offered a $1 million prize and drew twice as many participants as the 2024 competition, which offered no cash prize.

By providing structured challenges and meaningful incentives, public competitions can accelerate the development of critical DCAT capabilities while building a more robust and diverse research community. Such competitions encourage novel technical approaches and rapid testing of new methods. They also facilitate the inclusion of new or non-traditional participants and foster collaboration. Their rapid cycles and narrow scope would complement the longer-term, broader research conducted by the national DCAT research centers.

In addition, centralized federal oversight would prevent the kind of implementation gaps that have affected previously authorized federal prize competitions. For instance, the 2020 National Defense Authorization Act (NDAA) authorized a $5 million machine detection/deepfakes prize competition (Sec. 5724). The 2024 NDAA authorized a "Generative AI Detection and Watermark Competition" (Sec. 1543). However, neither competition has been carried out, and the Watermark Competition has now been delayed to 2025. Such oversight would further ensure that prize competitions are run consistently and target specific technical challenges raised by expert stakeholders, encouraging more rapid development of relevant technical countermeasures.

Possible prize competitions might include:
- machine detection and digital forensic methods to identify partially or fully AI-generated content, in single-modality or multimodal content;
- assessments of the robustness, interoperability, and security of watermarking and other labeling methods across modalities; and
- tests of innovations in tamper-evident or tamper-proof content provenance tools and other data origin techniques.

Regular assessment and refinement of competition categories will ensure continued relevance as synthetic content capabilities evolve.

Recommendation 4. Congressional Funding of DCAT Research and Activities

Finally, the 118th Congress should allocate funding for these three NIST initiatives to lay the foundations of a strong national DCAT research infrastructure more effectively. Technical countermeasures are widely acknowledged to play a vital role in addressing synthetic content risks, yet the DCAT research field remains severely underfunded. Recent initiatives, such as the $11 million allocated to the International Network of AI Safety Institutes, are a welcome step in the right direction, but substantially more investment is needed. Thus far, overall DCAT R&D funding has been a drop in the bucket compared with the many billions of dollars that industry alone is dedicating to improving generative AI technology.

This stark disparity between investment in generative AI and investment in DCAT capabilities presents an immediate opportunity for Congressional action. Pending legislation will rely on technical countermeasures such as DCAT. To close the widening capability gap and support that legislation, the 118th Congress should establish multi-year appropriations with matching fund requirements. This would encourage private sector investment and permit flexible funding mechanisms that can address emerging challenges. Such funding should be accompanied by regular reporting requirements to track progress and impact.

One specific action Congress could take to jumpstart DCAT R&D investment would be to reauthorize the unexecuted machine detection competition it approved in 2020. Congress could then appropriate the budget that was earmarked for it. Although the 2020 NDAA authorized $5 million for the competition, no SAC-D funding was allocated, and the competition never took place. Another action would be for Congress to explicitly allocate prize money for the watermarking competition authorized by the 2024 NDAA. That competition currently has no monetary prize attached. A prize would encourage higher participation when it takes place this year.

Conclusion

The risks posed by synthetic content present an undeniable danger to U.S. national interests and security. Advancing DCAT capabilities is vital to protecting U.S. citizens against both the direct and the more diffuse harms resulting from the proliferating misuse of synthetic content. A robust national DCAT research ecosystem is required to accomplish this. Critically, this challenge cannot be addressed through one-time solutions or limited investment. It will require continuous work and dedicated resources to ensure that technical countermeasures keep pace with increasingly sophisticated synthetic content threats. By implementing these recommendations with sustained federal support and investment, the U.S. will be better able to address current and anticipated synthetic content risks, further reinforcing its role as a global leader in responsible AI use.