Public Participation IS the Ingenuity We Need

Building Blocks To Make Public Participation Solutions Work

Participation is not a distraction from governing — it is how government governs well. When treated as compliance, it comes too late and excludes those most affected, weakening legitimacy. Designed as a strategic asset, it builds trust, eases implementation, and supports more durable decisions.

Implications for democratic governance

- Participation is how decisions are made. Engagement should focus on clearly defined choices, constraints, and tradeoffs so decisions can move forward.

- Who participates, and when, shapes what government hears. Participation must reflect the full scope of impacts, not just the most visible or organized voices.

- Legitimacy depends on follow-through. Agencies should explain how input was considered so people can make sense of the outcome, even when they disagree.

Capacity needs

- Learn and adapt throughout the process. Track who is participating, what input is generated, and how it is used — then adjust the approach as needed.

- Engage early enough to matter — and continuously where possible. Bring people in while options are still open, and stay engaged so feedback informs both decisions and implementation.

- Structure input for decisions. Ask targeted questions and use formats that help compare impacts, priorities, and implementation considerations.

- Train for real-world engagement. Equip staff to facilitate conversations, synthesize input into key themes, and navigate disagreement.

- Make participation feasible. Offer enough lead time, plain-language materials, and multiple ways to engage (e.g., virtual, in-person, asynchronous) that account for different access needs.

Government, at its best, is democracy’s promise made real: the mechanism through which a society turns values into action and public voice into policy. But that mechanism has corroded. Rebuilding it starts with something simple — treating the public not as a problem to manage, but as a source of ingenuity government cannot function without.

Public participation, as the federal government executes it today, rarely builds trust: the public hearing held after decisions are already made, the comment period that produces thousands of responses with no visible impact, the listening session where officials take notes but never engage. The other version, where a highly organized few monopolize public ears and distort public response for their niche interests, is equally demoralizing. Critics are right to call out this failure. Across the political spectrum, there is a shared diagnosis: the current system of public engagement too often functions as a series of veto points, rewarding obstruction over problem-solving and delay over delivery.

That erosion matters enormously right now. Americans have grown deeply skeptical that government can solve hard problems, and climate change, with its complexity and its demands on every sector of the economy, may be the hardest challenge it has ever faced. As the authors ask in the opening argument of the Center for Regulatory Ingenuity, if government can’t effectively address challenges it deems an “existential threat,” what good is it, and can democracy overcome this downward spiral of mutually reinforcing cynicism?

We believe it can. Today, we are living through a mid-transition moment in climate policy, in which the technologies we need exist, the economics are increasingly favorable, and many obstacles are governmental: slow processes, fragile coalitions, and policies that get built and then litigated into irrelevance. In that context, the instinct to streamline is understandable. But a government that privileges artificially weighted listening, or avoids listening because it didn’t plan well, doesn’t move faster. It moves blindly or with bias. It builds the wrong things, in the wrong places, for the wrong reasons, and then wonders why nothing sticks. The argument here is not for more or less participation but for better participation, treated as a strategic asset rather than a box to check, and designed with the same rigor as any other policy instrument. Done well, public engagement demonstrates that government can listen, adapt, and earn trust. At a moment when democratic institutions are fragile, that demonstration is not incidental to the work of governing; it is central to it.

Climate change is not just a technical problem; it is a governance problem and, increasingly, a democratic one. It reaches into every community, every economy, and every aspect of daily life, from how people power their homes to how they move through their cities to whether their communities remain livable at all. There is no technocratic solution that can bypass the public. The choices climate demands (where to build, what to prioritize, who bears costs, and who benefits) are fundamentally democratic choices. If democratic government cannot make those choices in ways that are effective and legitimate, it will not just fail on climate. It will fail at its most essential purpose: helping people shape the conditions of their shared future.

Definitions and the Role in Governance

Before making the case for better participation, it helps to be precise about what we mean in a space where a range of practices often get conflated.

In January 2025, the White House Office of Management and Budget issued a memorandum that the authors of this paper helped develop, laying out a federal framework for broadening public participation and community engagement. The memo itself was built through the practices it recommends: a public request for information drew input from hundreds of participants and nearly 300 written comments, documented in a public summary that informed the final guidance. It offers definitions worth building on. Public participation is any process that engages the public in government decision-making — helping shape policies, regulations, or research, or soliciting new ideas and innovations. It is inherently transactional: it seeks to inform and obtain input from those interested in or affected by agency action. Community engagement, by contrast, is primarily relational — the consistent building of relationships with communities over time, informed by the history those communities have with an agency, and transparent about the real opportunities and real limitations of that relationship.

Public participation without community engagement can produce processes that feel hollow — technically open, but not genuinely accessible to the people most affected. Community engagement without public participation can build trust and goodwill that never translates into actual influence over decisions. Together, they reinforce each other: relationships make meaningful input possible, and meaningful input, when reflected in decisions, strengthens those relationships over time. But they are not interchangeable, and not always needed in equal measure. One of the memo’s most important contributions is helping government actors think more carefully about which tool fits which moment; a public comment period serves a different purpose than a years-long relationship with a frontline community, and conflating them produces both bad process and bad outcomes. Resources like EPA’s Public Involvement Spectrum make this concrete, mapping participation options from basic outreach through information exchange, recommendations, agreements, and stakeholder action, each carrying a different promise to the public and requiring a different level of agency commitment and design.

The OMB memo does not add new mandates: federal statutes from the Administrative Procedure Act to the National Environmental Policy Act already require public participation across a wide range of agency functions. Instead, it strengthens and clarifies what good practice looks like within those existing requirements while making that guidance accessible to anyone in government who wants to apply it beyond those requirements.

That the memo exists at all reflects a recognition that the statutory floor was never enough and democratic practice does not end on election day. The case for meaningful participation rests on the idea that government derives its legitimacy from the people it serves, and that legitimacy has to be earned continuously.

Why Engagement Often Fails

If the case for participation is so strong, why does it so often fail to deliver? Not because the theory is wrong, but because the dominant models were built for a different era, and even well-intentioned efforts, when filtered through broken processes, can deepen the cynicism they were meant to address.

Every bad process reinforces the conviction that participation is theater (or illegitimate, or corruption sanitized) and makes it harder to do the real thing next time. These failures matter in any policy domain, but for climate they are something closer to existential, not just for policy outcomes but for faith in democratic governance itself.

The Design Failures Are Structural, Not Incidental

The most common problems with public engagement are baked into the dominant models. Traditional notice-and-comment processes, for instance, were designed for transparency, judicial review, and weighting technical and legal expertise over lived experience — and they achieve those aims in a narrow procedural sense. But transparency is not the same as accessibility, and access is not the same as influence. As Nicholas Bagley has argued, procedural rules now actively exacerbate the very problems they were designed to solve. Notice-and-comment tends to reward the organized, the well-resourced, or the professionally represented, producing voluminous records that reflect the priorities of those who could afford to engage, not necessarily the communities most affected by the decision. A single well-funded group can submit thousands of pages of technical comments; a frontline community facing the same decision may have no idea the comment period exists, and no capacity to respond to it even if they did.

Formal public hearings share similar pathologies. They are designed to create a record, not a dialogue, and they tend to produce exactly what their format invites. Participants deliver prepared statements into a microphone. Agency officials listen without responding. The atmosphere is frequently adversarial, structured in ways that entrench “us versus them” dynamics rather than creating any genuine opportunity for exchange, learning, or compromise. People leave feeling unheard not because their words weren’t recorded, but because nothing about the process suggested anyone was actually listening.

One-way communication runs across many engagement formats. As the IAP2 Public Participation Toolbox makes clear, even well-designed information-sharing tools — fact sheets, websites, press releases — have significant limitations when they substitute for genuine dialogue rather than supporting it. Listening sessions, informational webinars, and town halls designed primarily to transmit information leave the public as a passive audience (or unheard correspondent) rather than active participants. When people cannot ask questions, push back, or engage in real exchange with the decision-makers who affect their lives, the engagement reinforces rather than reduces the distance between government and community.

Timing compounds all of these design problems. Engagement that happens too late in the decision-making process (after the key choices have been made, after alternatives have narrowed, after political and financial commitments are in place) is unlikely to meaningfully influence outcomes no matter how well it is conducted. Collaboration works best when it begins early, when there is still genuine room to shape the purpose, alternatives, and design of a proposed action. By the time a formal public comment period opens on many major decisions, the substantive work is essentially done. Community input at that stage can affect the margins but rarely the fundamentals, and communities know it. When people show up, offer input, and watch nothing change, they stop showing up.

The scale and complexity of engagement materials create their own barrier. Technical documents running hundreds of pages, written in regulatory language accessible only to specialists, are not a neutral feature of the process — they are a filter. Effective strategic communication requires understanding who the audience is, what they already know, and how the issue connects to their lives, none of which is served by dense regulatory documents distributed through official channels. When the baseline requirement for participation is fluency in administrative law and agency-specific jargon, the people best positioned to engage are lawyers and lobbyists, not the residents of a community downstream from a proposed facility. Simplifying materials is not dumbing them down. It is recognizing that the expertise of affected communities is just as relevant as the expertise of credentialed professionals — and that accessing it requires meeting people where they are, not where the agency finds it convenient.

Perhaps most corrosively, some procedural tools originally designed to protect communities have been repurposed as obstruction. When participation requirements become veto points, and when the primary function of an engagement process is to create grounds for litigation rather than to genuinely improve decisions, they undermine both the efficiency that critics of government rightly demand and the democratic accountability that participation is supposed to deliver. Small, organized, well-resourced groups can exploit procedural requirements to delay or block decisions that broader communities support, effectively capturing processes intended for the many and wielding them on behalf of the few. This is the participation failure that most directly drives the “just build it” impulse, and it is a legitimate grievance. A system that was designed to give communities a voice has, in too many cases, been captured, producing neither democratic legitimacy nor efficient delivery. At the same time, when communities are left out of upstream planning, these veto points often become one of the only avenues available to influence decisions.

The Barriers to Inclusion Run Just as Deep

Even when processes are reasonably well-designed, they often fail to reach the communities that matter most. The gap between who participates and who is affected often follows the contours of existing inequality with uncomfortable precision.

Awareness is the first and most basic barrier. Many people simply don’t know that an opportunity to engage exists. Believe it or not, federal agency websites are not where most Americans spend their time, and the Federal Register is not how most communities learn about decisions that will affect them (even for experienced users). When engagement opportunities are announced through official channels that already skew toward the educated, the connected, and the English-proficient, the resulting participant pool reflects those skews. Compounding this is a lack of clarity about what participation is even for: many people who are aware of an opportunity don’t understand how their input could make a difference, or whether it ever has. That uncertainty is itself a barrier, and it is one that agencies rarely address directly.

The materials and communications that agencies use to invite and support participation often create their own exclusions. Technical documents written for specialists, notices distributed through unfamiliar channels, comment periods with deadlines that give working families no realistic time to respond, engagement formats that assume reliable broadband and digital literacy: none of these are neutral design choices. A sound public engagement plan starts by understanding audience needs and building authentic, reciprocal relationships, the opposite of defaulting to formats that are convenient for the agency. Each design choice narrows the pool of who can meaningfully participate, and the cumulative effect is systematic. When engagement materials are only available in English in communities where many residents primarily speak other languages, the process has already decided who counts. When comment periods close before community organizations have had time to mobilize their members, the timeline has already decided who counts. These are decisions that agencies make, often without fully recognizing them as decisions at all.

Physical access barriers operate similarly. Transportation costs, distance to venues, the inaccessibility of meeting spaces for people with disabilities, the difficulty of attending a weekday hearing while working multiple jobs — these are all practical obstacles that might seem minor in isolation but compound into systematic exclusion for communities that are often most directly exposed to the environmental and infrastructure decisions being made. Virtual engagement has opened some of these doors, but it has closed others: digital access gaps, limited bandwidth in rural and low-income communities, and the particular challenges of meaningful online participation for older adults or those with limited technology experience mean that remote options solve some access problems while creating new ones.

Trust may be the most intractable barrier of all, because it cannot be addressed by logistical improvements alone. Communities that have experienced past harms from government (broken promises, extractive processes, decisions that hurt them and were made without them) carry a rational skepticism about whether this time will be any different. That skepticism is not ignorance or apathy. It is an accurate reading of a track record. Overcoming it requires more than a well-designed meeting or a plain-language summary. It requires demonstrated consistency over time: showing up when there is no immediate decision to be made, acknowledging historical harms directly rather than implicitly, and following through on commitments in ways that are visible and verifiable. This is precisely what Hollie Gilman and Sabeel Rahman mean when they argue in Civic Power that meaningful participation isn’t ultimately about better meetings — it is about redistributing power so that those closest to the problem are genuinely part of the solution.

Privacy concerns add another dimension that is easy to overlook from a position of power or privilege. In communities with historically complex or adversarial relationships with government, such as overpoliced communities, immigrant communities, or other underserved groups, the act of identifying oneself in public participation can seem risky. Showing up on the record or speaking at a public hearing can feel less like civic engagement and more like exposure. These are not irrational fears; they reflect lived experience of how government has used information, access, interests, and related levers against communities. Genuine participation in these contexts will not occur just by invitation. Agencies must actively and continuously address the conditions that make showing up feel unsafe (e.g., by using intermediaries, anonymous or aggregated input, or other protective measures) and acknowledge the prior harm.

One additional factor shapes who participates and whether their participation endures: organization. Even when agencies improve conditions for participation, durable community voice does not emerge automatically; it depends on collective capacity, especially in communities historically marginalized by race or class. Without organizational support, individuals often lack the connections, information, and trust to translate lived experience into effective engagement. Organization helps aggregate that experience, sustain engagement, and convert it into usable input for government. In its absence, participation remains fragmented, reinforcing the advantage of those already organized and resourced.

The Benefits of Effective Engagement as a Strategic Asset

In the early 2000s, a proposed bridge crossing the St. Croix River (a National Scenic Riverway on the Minnesota-Wisconsin border) had been stuck in gridlock for five decades. When serious planning kicked off in the 1980s and 1990s, the existing 1931 structure was already fracture-critical. Stakeholder groups and a disparate range of public institutions, with sharply conflicting interests and mutual distrust, had reliably blocked every attempt to move forward.

What finally broke the logjam wasn’t a better technical study, a more powerful agency directive, a least-common-denominator solution, or a decision to simply ignore the interests of a set of stakeholders. It was a deliberate shift to structured collaboration, away from the usual method of talking at one another about concerns with no means of finding points of alignment, resolution, or tradeoffs. Twenty-eight stakeholder groups shaped the bridge’s location and design, with a comprehensive mitigation package addressing the natural, social, and cultural impacts of the new bridge.

Moreover, relationships and communication among the stakeholders improved remarkably during the problem-solving process. In the words of one stakeholder, “We were able to spend the time necessary to get over our natural inclination to not trust people from the other side. […] We had enough time and enough space to come to a conclusion that everybody could feel comfortable with.”

That outcome wasn’t the result of exhaustion, but of deliberate design and an emphasis on negotiation rather than government serving as an answering machine that never calls back.

Before making that case, it is worth naming what good engagement is not. Smart, well-designed participation is not a mechanism for local communities to veto decisions that serve broader public interests. When a neighborhood is asked whether it wants new apartments, or a community facing a new transmission line is simply asked whether it approves, the answer is predictably no, and designing processes that guarantee that outcome is not good participation. It is a design failure that produces exactly the NIMBY dynamics that rightly frustrate those who want to build. The problem is not that people oppose things. It is that poorly designed processes over-sample the most proximate opposition, structure engagement queries without a sense of purpose or audience, start with technicalities rather than longstanding trust, avoid the potential for early negotiation, or systematically exclude the regional, national, and future stakeholders who have just as much at stake in the outcome. These failures aren’t inevitable.

Good engagement produces better decisions.

Agencies have technical expertise, legal authority, and institutional knowledge, but they routinely lack on-the-ground understanding of how policies will actually land in specific communities, what tradeoffs matter most to the people affected, and what solutions might work that no one in a headquarters office has thought of yet. Meaningful participation fills those gaps. Well-designed engagement generates solutions that are more effective, empowers people from different backgrounds, and builds the local networks that make implementation actually work. It brings in lived experience that data alone cannot capture, surfaces local knowledge that improves policy design, and produces decisions that are more responsive to the full range of affected interests rather than the loudest or most proximate ones.

Research on structured deliberation consistently shows that when people are given good information and genuine opportunity to reason together — rather than just reacting to proposals that feel threatening — they reach more nuanced, durable conclusions than either polarized public opinion or top-down expert judgment alone produces. Deliberation, in this sense, is not just a democratic value. It is a technical tool for overcoming the polarization that makes hard policy decisions feel impossible. It is also, critically, a tool for helping people reason past their immediate self-interest toward a broader understanding of tradeoffs, which is precisely what is missing when participation processes sample only those with the most to lose from a particular change, rather than those with the most at stake in the outcome. Done well, it changes which voices dominate and acknowledges the role of power. That shift is the difference between a participation process that ratifies the preferences of whoever showed up and one that actually informs a decision. This is not an argument for endless input or process without limits. As James Goodwin argues in this collection, the most effective participation is targeted rather than open-ended, focused on the core disputes that actually need resolving, rather than generating voluminous records that obscure more than they illuminate. The goal is engagement that is both more inclusive and more purposeful: asking the right questions of the right people at the right time (which means going well beyond the immediate neighborhood to find the people whose lives will be shaped by a decision).

But asking the right questions requires doing the work before the room fills up. Good engagement doesn’t begin with an open-ended invitation to say whatever comes to mind. It begins with agencies doing enough homework (with experts and communities) to frame the problem clearly: what is actually being decided, what constraints are real, what tradeoffs exist, and where there is genuine room for public input to influence the outcome. That frame is one of mutual respect.

This is where standards matter, as explored later in this essay. The difference between engagement that produces insight and engagement that produces noise is largely a design question.

Good engagement builds the trust that makes government function.

Scholars have examined the consequences of low trust through many lenses, but the core point is simple: the legitimacy of democracies relies on trust. Lower trust means less engagement with the functions that government uniquely performs or leads, whether disaster response, weather warnings, federal benefits, security functions, public health, or independent data collection and analysis, and less engagement means those functions work less well for everyone. The effect cascades: democratic institutions weaken when government cannot, does not, or is not believed to deliver on the expectations of its citizens.

When people experience decision-making as transparent, accessible, and genuinely responsive to their input, trust builds. When they show up and feel unheard, or never show up at all because no one made it possible, trust erodes, most quickly and most consequentially for those communities that can least afford to lose it. This isn’t incidental: trust is the medium through which every other benefit of engagement operates. When it erodes, the downstream consequences reach far beyond any single process, the pattern described above in the discussion of why engagement so often fails.

While study after study shows that fewer than half of Americans trust the federal government, far fewer (21%) believe it listens to the public, and just 15% believe it is transparent, both according to a 2024 national survey. This underscores a deep perception that government is neither responsive nor accountable to the people it serves. Every engagement process is a small test of whether democracy is meaningful, especially when government is asking people to accept changes as visible and consequential as those required by climate policy.

Good engagement reduces conflict and can prevent litigation.

As Andy Gordon has argued at FAS, listening is a prerequisite for discovery and a requirement for success on any ambitious public goal where stakes are high. The ARPA-I national listening tour demonstrated this concretely: starting with questions rather than answers, and drawing on distributed expertise from every layer of the transportation system, produced an agenda-setting process that no small group behind closed doors could have replicated. The same principle holds for climate and environmental policy. The instinct to streamline participation (to save time, to avoid change, to avoid the NIMBY trap) often backfires in the most concrete terms. Decisions made without adequate input tend to generate opposition downstream, when it is far more costly to address: through litigation, organized resistance, implementation failures, and the kind of sustained community distrust that shadows projects for years. The veto-point problem, in other words, is not a feature of too much participation. It is what participation looks like when it arrives too late, is structured too adversarially, and samples too narrowly. Fix the design (broaden who is heard, start earlier, frame the problem honestly) and participation stops functioning as a veto mechanism and starts functioning as the evidence base that allows government to make hard calls with confidence. An agency that has genuinely sought broad, representative input is in a far stronger position to defend a difficult decision. When that engagement runs through organized, representative groups, it also enables more effective deal-making — where tradeoffs can be negotiated and outcomes reflect a broader, more representative set of community interests.

Collaborative approaches improve the quality of decision-making and increase public trust precisely because they bring the right stakeholders in early (engaging more upstream), before positions have hardened and before the public record has closed. Agreements get built earlier, key voices feel heard rather than steamrolled, impacts are better understood across the most widely affected groups and not only the loudest, and mitigation is transparent. And with that, the potential for litigation drops, compliance improves, and implementation becomes something communities feel invested in rather than something done to them.

A Framework for Doing It Right

Moving with the public is how government earns trust and makes decisions people can understand, accept, and stand behind.

Participation doesn’t eliminate conflict, and isn’t meant to. The challenge is structuring disagreement so decisions can still be made — and hold up to scrutiny — by clarifying tradeoffs, surfacing impacts, and narrowing options. Climate policy makes this especially clear; decisions about energy, land use, and infrastructure must move quickly while navigating disagreement about costs, risks, and local impacts.

Participation must reflect not just who shows up, but the full set of people affected, including those whose interests are less visible but equally consequential. Engagement can overrepresent highly organized or locally affected groups, even when decisions carry broader regional or national benefits. Designing participation to reflect those broader impacts — and the perspectives not in the room — helps avoid decisions that are responsive but not well-balanced.

The OMB memo advances a shift from participation as a single event (a hearing or listening session) to a practice agencies must design intentionally, tailor to context, and improve over time. The memo’s five principles — Purposeful, Respectful, Transparent and Accountable, Accessible, and Learning-Focused — offer a practical framework aligned with the decision, the stakes, and the people affected.

At their best, these principles help government:

- reach the right people (not just the easiest to reach)

- ask questions tied to real choices and constraints (not just general opinions)

- use input to shape decisions and follow-through (not just record it)

The goal isn’t consensus; it’s decisions that can move forward. Climate urgency makes getting this right non-negotiable. This framework is designed for exactly the conditions climate policy creates: high stakes, contested choices, deep skepticism, and no margin for processes that consume time without building trust.

Five Guiding Principles for Meaningful Engagement

Is it purposeful?

Purposeful engagement starts with clarity. What decision is being made? What is open to input at this stage? What is constrained by law, budget, or timing? Who is affected, and when can input still affect outcomes? It also means asking participants to respond to specific, decision-relevant questions. Engagement begins early enough for communities to help shape options, not simply react to them.

Why it matters

Without a clear purpose tied to a decision, engagement captures reactions to incomplete information or comes after key choices are already set. When choices are complex, participants may rely on partial or misleading assumptions rather than the factors shaping them. Framing questions around real constraints and tradeoffs produces more informed, actionable input. Inviting input outside an agency’s authority or capacity can also overwhelm staff and create unmet expectations.

What it looks like

- Engage before major choices are locked in.

- Present a limited set of realistic options (e.g., 2–3 alternatives).

- Ask targeted questions tied to specific considerations (e.g., cost, siting, mitigation, local impacts), not general preferences (e.g., “do you support this project?”).

- Define what input is in scope, and how out-of-scope input will be routed (e.g., partners, interagency coordination, future tracking).

Is it respectful?

Respectful engagement treats communities as knowledgeable partners and acknowledges the costs of participation. It reduces barriers where possible and ensures participation is worthwhile and relevant.

Why it matters

When engagement feels extractive, one-sided, or not worth the time required, participation drops and trust erodes, especially in communities already bearing environmental and infrastructure burdens. This affects not just who participates, but the relevance of the input received. People are more likely to engage — and stay engaged — when the process is clearly connected to decisions, provides enough context, and is worth their effort.

What it looks like

- Partner with trusted intermediaries to co-design or co-host engagement.

- Provide support (e.g., stipends, childcare, travel reimbursement) where feasible (see, for example, community compensation guidelines from the Colorado Department of Human Services and Washington State Office of Equity).

- Set clear norms for dialogue and how input will be documented.

- Train staff in facilitation, cultural competence, and navigating disagreement.

- Explain how input will be considered by decision-makers.

Is it transparent and accountable?

Transparent and accountable engagement sets clear expectations about what is being decided, how public input will be used, and how decisions will be communicated.

Why it matters

People don’t need to agree with outcomes to see them as legitimate, but they do need to understand how decisions were made. Transparency clarifies how input connects to decisions — including what can and cannot change — and helps maintain trust even when alignment is difficult. Accountability comes from closing the loop: showing what was heard, and what followed. Not all input will change outcomes, but its role should be visible.

What it looks like

- Communicate decision criteria, constraints, and timelines up front.

- Explain how input will be used before engagement begins.

- Share examples of how prior feedback influenced agency decisions or plans.

- After the engagement phase, show how input was considered by decision-makers (e.g., agency response to comments, draft revisions).

Is it accessible?

Accessible engagement removes practical barriers and proactively invites participation from those most affected but least likely to show up by default.

Why it matters

Barriers determine who participates and whose perspectives are heard. When participation depends on time, resources, technical fluency, or familiarity with government, input skews toward those advantages and may not reflect the full scope of public impacts. As a result, decisions rely on a narrower — and potentially distorted — set of inputs. Broadening access improves both representation and the quality of information decisions rely on.

What it looks like

- Offer multiple ways to participate (e.g., in-person, virtual, evenings, weekends) and provide input (e.g., written, audio, mapping tools).

- Use accessibility-by-default design principles (e.g., plain language, compatibility with assistive technologies, screen reader-friendly materials).

- Include stakeholders beyond those most visible or organized (e.g., future residents, regional beneficiaries).

- Conduct outreach through trusted community channels (e.g., local organizations, faith groups, libraries, community centers).

- Provide captioning, translation, interpretation, and other accessibility supports as standard practice — not only upon request.

Is it learning-focused?

Learning-focused engagement is iterative and adaptive. Agencies assess whether engagement is reaching the right people, producing helpful input, and informing decisions — and adjust accordingly.

Why it matters

Without iteration, engagement repeats the same gaps in participation and input. Learning in real time allows agencies to adjust how engagement is designed and delivered. It also prevents wasted effort by enabling course correction, saving resources and community goodwill — essential in fast-moving environmental and infrastructure contexts.

What it looks like

- Collect participant feedback (e.g., on clarity, accessibility, usefulness).

- Conduct internal debriefs to identify what worked and what didn’t.

- Monitor who is participating and who is missing.

- Adjust outreach, format, or framing mid-process.

- Use clear measures to assess the effectiveness of participation.

- Purposeful: Is this engagement tied to a decision that is still open, and are participants being asked to respond to clearly defined choices, constraints, or tradeoffs?

- Respectful: Is this engagement worth people’s time, and are participants equipped to provide informed, relevant input?

- Transparent and Accountable: Is it clear how input will be used, and how participants will see how it was considered?

- Accessible: Are we reaching and enabling participation beyond those most visible or organized, and who may still be missing?

- Learning-Focused: Are we using what we learn to adjust this process in real time, and to improve future engagement?

Matching Methods to the Moment

No single method fits every situation. Effective participation matches the approach to the decision, based on who is affected, what is open to input, and what participation is feasible. Different stages of the policy lifecycle call for different forms of engagement:

- Early stages: define problems and surface lived experience.

- Mid-stage decisions: compare options and test tradeoffs.

- Implementation: troubleshoot and adapt.

The five principles set the standard for how to engage. The next step is choosing methods that apply them — fitting the decision, the audience, and the agency’s constraints (e.g., timeline, resources, legal requirements). Frameworks like the IAP2 Spectrum of Participation, the T.I.E.R.S. Public Engagement Framework, and the National Coalition for Dialogue and Deliberation’s Engagement Streams Framework can help avoid a common trap: defaulting to the same level or type of participation regardless of context.

Effective participation isn’t about asking more people more questions. It’s about selecting approaches that produce usable input.

That alignment also applies to who is included. Decisions with regional or national consequences require engagement that includes those who will benefit or bear indirect impacts. For example, housing or transmission projects often draw input primarily from current residents, even when benefits accrue to future residents or regional users.

This is not about giving any group veto power, but ensuring decision-making reflects the full distribution of impacts and interests.

Evidence from the Field

Well-designed participation isn’t just a process — it’s a governance tool. Examples from environmental and infrastructure policy show it can inform decisions, improve design, clarify contested evidence, and build the capacity for better engagement over time.

Participation that changes decisions

A 2025 study analyzing 108 Environmental Impact Statements under the National Environmental Policy Act (NEPA) found that public comments led to substantive changes in agency decisions in the majority of cases:

- 62% involved meaningful changes to decisions,

- 64% modified project alternatives,

- 42% changed mitigation plans, and

- when preferred alternatives shifted, agencies directly credited public input as the reason.

While the study did not assess outcome quality, longstanding NEPA success stories suggest these changes often strengthen project design, e.g., by identifying overlooked impacts, informing mitigation strategies, or incorporating local knowledge into technical analysis.

Place-sensitive design

Infrastructure decisions highlight the importance of place-sensitive engagement because impacts vary by location, history, and lived experience.

The Bipartisan Policy Center’s examination of a U.S. Department of Energy-funded carbon storage demonstration project in Illinois shows how early, sustained engagement helped build understanding and trust around geologic carbon storage. Engagement began years before site selection and relied on trusted local experts, multiple outreach strategies, and two-way communication to familiarize communities with the technology and its potential impacts. These efforts contributed to broad-based support and community willingness to host the project, illustrating how early engagement can shape perceptions of risk and benefit and improve the conditions under which projects move forward.

Similarly, analysis by Acadia Center and Clean Air Task Force found that opposition and delays were reduced, and public support for infrastructure grew, when clean energy planners took local siting and environmental concerns seriously and equipped communities to participate meaningfully.

These examples underscore an important balance. Place-sensitive engagement works best when local input is considered alongside broader system needs, so place-based concerns inform — but do not override — decisions with wider public benefits.

Joint fact-finding and shared inquiry

In disputes involving scientific uncertainty and contested values, joint fact-finding — where agencies, experts, and stakeholders collaboratively define questions, gather evidence, and interpret findings — produces more credible, usable information. Rather than positioning agencies and communities as adversaries, these approaches shift focus from competing claims to shared inquiry, helping participants develop a common understanding of facts and tradeoffs.

Environmental dispute cases show that joint fact-finding can narrow disagreements and reduce mistrust even when consensus is not possible. In practice, these processes help participants clarify what is known, what remains uncertain, and where value-based disagreements persist — allowing decisions to move forward on a more transparent and informed basis.

Environmental justice case studies documented by the U.S. Environmental Protection Agency (EPA) likewise illustrate how collaborative inquiry can enhance participation and buy-in from affected communities. In several cases, involving community members directly in data collection and interpretation improved the relevance of findings, increased confidence in the results, and fostered more constructive dialogue between agencies and communities — strengthening both the substance of decisions and their implementation.

Community-led research and data governance

Community-based participatory research offers another pathway to stronger decisions by changing who controls the production of knowledge. Analysis from the Brookings Institution shows that when communities help set research priorities and interpret findings, the results better reflect local context and needs. Traditional research models often reflect externally defined agendas that lack community-specific knowledge, limiting their usefulness for decision-making.

Community-led approaches, by contrast, redistribute control over research and data governance, enabling communities to shape how information is generated and used. While joint fact-finding focuses on how agencies, experts, and stakeholders collaboratively interpret evidence in decision-making contexts, community-led research changes who sets the agenda in the first place. In practice, this can produce more relevant inputs for policy and planning, strengthen the connection between data and lived experience, and support ongoing partnerships that extend beyond a single engagement process.

Evaluation

Effective engagement improves through feedback. For example, the U.S. Army Corps of Engineers’ evaluation of public involvement in flood risk management pilots found that engagement tied to clear decision points and structured activities (e.g., working groups, facilitated discussions) strengthened agency capacity for public involvement and improved two-way dialogue with communities. Participating staff reported that these efforts helped teams better understand community concerns, identify information gaps, and structure engagement more systematically.

Internal debriefs and participant feedback informed adjustments across project phases, helping teams refine outreach, coordination, and how input is organized and applied.

These findings spotlight another benefit of well-designed engagement: not just contributing to individual decisions, but building the knowledge, relationships, and processes that make more informed and collaborative decision-making possible.

What These Examples Show

Taken together, these examples point to a clear pattern: engagement works best when tied to decisions still being shaped and structured to produce usable input from those affected. In these conditions, participation does more than gather input — it improves decisions and delivery.

As noted earlier, participation doesn’t remove disagreement. It makes it more manageable by clarifying what is known and uncertain, surfacing tradeoffs, and reflecting a more balanced set of perspectives. This better equips decision-makers to explain and defend their choices.

Building Capacity to Deliver

Well-designed participation doesn’t substitute for agency capacity — it sharpens it, especially at the state and local levels where timelines are tight, staff are limited, and decisions are high stakes.

Participation is often treated as an added burden on already stretched institutions. But when targeted and structured, it helps agencies use existing capacity more effectively by identifying concerns early and reducing downstream conflict, redesign, and delay. This isn’t just an equity argument; it’s a speed and delivery argument.

What matters isn’t whether agencies “have capacity,” but whether participation is designed to support decisions that can be explained and sustained.

Strengthening State Capacity

Next, let’s look at the specific elements necessary to improve public participation.

Leadership and Governance

Why it matters

Engagement succeeds when it is treated as core governance, not a communications add-on. When leaders treat participation as part of decision-making, it affects how processes are designed, how staff are incentivized, and how tradeoffs are handled.

What it looks like

- Designate engagement leads to coordinate across programs and align input with decisions that cut across issues or policy areas.

- Embed clear ownership within program teams.

- Build engagement milestones into project timelines.

- Have senior leaders review engagement summaries alongside legal, technical, and budget analyses.

- Reflect participation goals in agency strategic plans, implementation plans, and performance reviews.

Skills and Culture

Why it matters

Engagement failures are often organizational, not technical. Without the right skills and norms, staff may struggle to use public input or navigate conflict, and even well-intentioned engagement can break down.

What it looks like

- Train staff to interpret and weigh public input alongside technical and operational constraints.

- Synthesize input into themes and areas of agreement or tension so it can be compared and shared without revisiting individual comments.

- Design engagement plans across functions (policy, legal, technical, communications).

- Set expectations that engagement is part of policy development and implementation, not a parallel process.

- Reinforce these norms through staffing, timelines, and accountability.

Tools and Resources

Why it matters

Without the right tools and resources, engagement can generate more input than agencies can realistically analyze or respond to. The issue isn’t just volume, but whether input can be organized, interpreted, and applied to decisions.

What it looks like

- Use practical checklists and templates for planning, documentation, and follow-up.

- Use formats that produce comparable, decision-relevant input (e.g., facilitated discussions, guided prompts, prioritization exercises).

- Use analysis approaches that account for different types of input and perspectives (e.g., written comments vs. oral input, surveys vs. community discussions), not just the easiest responses to process.

- Establish pre-approved contracting mechanisms for facilitators, translators, and interpreters.

- Track commitments and follow-through internally (e.g., via dashboards).

Learning and Improvement Systems

Why it matters

Adaptation requires more than intent. Learning at the project level isn’t sufficient — without shared systems, agencies tend to apply the same approaches across teams and over time, regardless of effectiveness.

What it looks like

- Standardize internal reporting that connects public input to decisions and next steps (e.g., “what we heard / what we’re doing” summaries).

- Establish criteria for adjusting outreach or formats based on participation patterns (e.g., extend timelines if turnout is low, add targeted outreach to missing groups).

- Create shared repositories so lessons learned carry across teams and inform future projects.

- Incorporate outcome checks over time (e.g., whether engagement reduced conflict or improved implementation).

Strengthening Public Capacity

This challenge extends beyond agencies themselves. Participation design should account for both agency capacity and who can realistically participate. When engagement skews toward people who face fewer barriers to participation, it raises equity concerns and weakens the quality of information and problem-solving.

Reducing Participation Barriers

Why it matters

Reducing barriers isn’t about paying for feedback. It’s about making participation feasible, informed, and reflective of those most affected, including those who will experience the long-term impacts.

What it looks like

- Provide context in advance so participants can engage without technical expertise.

- Provide information in multiple languages and accessible formats.

- Share clear examples of useful input (e.g., specific impacts, leading practices).

- Present side-by-side comparisons of relevant options and tradeoffs (e.g., anticipated impacts, costs, timelines).

- Offer opportunities for the public to ask clarifying questions before providing input (e.g., Q&A sessions, office hours).

Equipping Communities to Engage

Why it matters

Meaningful engagement often requires skills, time, and capacity that some communities — especially smaller or under-resourced ones — may not have. Without intentional outreach and resourcing, agencies hear repeatedly from the same well-resourced groups. Just as important, without support for organized participation, engagement struggles to translate into representative voice, particularly for communities historically marginalized by race or class.

What it looks like

- Provide practical tools to support effective participation (e.g., how-to videos, comment templates, guides to agency processes).

- Share meeting materials early so communities have time to review, discuss, and respond.

- Provide small grants or stipends so trusted partners can convene discussions, synthesize input, and support representative participation.

- Designate clear agency points of contact to help communities navigate participation processes.

Developing Long-Term Relationships

Why it matters

Engagement is faster and more collaborative when relationships already exist. When agencies engage only during moments of controversy, participation is more likely to feel reactive and transactional.

What it looks like

- Maintain engagement beyond one-off interactions, including through light-touch channels (e.g., periodic newsletters, community listservs).

- Maintain continuity in points of contact through clear handoffs and shared records so relationships and institutional knowledge persist as staff change.

- Communicate regularly and follow through outside active decision processes.

- Acknowledge past harms or broken trust.

- Return to communities after implementation to share outcomes.

Return on Investment

Trust, as this paper has argued, is the load-bearing wall. Investing in state and public capacity builds it — so participation helps decisions progress rather than stall. Well-designed engagement enables agencies to use input and respond credibly. When communities can participate fully, decisions are better grounded, easier to explain, and more likely to hold.

Looking Ahead

As engagement tools evolve, the same principles apply. Digital participation, civic technology, and AI-assisted analysis are already being used to help governments reach more people and make sense of large volumes of input. But without deliberate design, they risk introducing new exclusions and harms, such as unequal access, privacy concerns, and bias.

Internationally, several examples illustrate both the promise and the tradeoffs. France used AI tools to analyze millions of contributions submitted during the Grand Débat National, grouping input by theme to make citizen insights more accessible to policymakers; the process highlighted tensions between scale and nuance, and raised questions about how input is aggregated and whose perspectives are preserved. The UK government uses an AI consultation analysis tool to analyze consultation responses at scale, reducing manual work and improving the speed and consistency of analysis. Its evaluation documents practical challenges, including the need for human review and concerns regarding bias, accuracy across groups, public trust, and transparency in interpretation.

These examples point to real potential: making participation more scalable, accessible, and usable in decision-making. But they also reinforce the same lesson as the rest of this paper — the impact of these tools depends less on the technology itself than on how they are designed and governed.

The path forward is not to adopt emerging tools wholesale, but to hold them to the same standards.

- Purposeful: Use tools to help answer specific questions or synthesize input, not simply because they are new or project “modernization.”

- Respectful: Handle community data, stories, and participation with care, including clear consent and appropriate privacy protections, especially when considering AI tools subject to terms-of-use agreements.

- Transparent and Accountable: Explain how input is collected, analyzed, and used, especially when automation or AI is involved.

- Accessible: Design for inclusion rather than assume technology access or digital fluency.

- Learning-Focused: Assess whether tools improve engagement and decision-making, and adjust or retire tools that do not.

Used this way, technology doesn’t replace judgment or governance; it strengthens both.

As agencies adopt new tools, they can also build on existing human infrastructure — such as promotores and other community-based messengers — that has long been used in public health and service delivery to support outreach, build trust, and connect agencies with communities.

When participation is designed to clarify tradeoffs, surface real impacts, and support accountable decisions, it becomes what democratic governance needs most right now, and what this collection argues is still within reach: a government that listens well enough to be worth believing in.

Strengthening the Federal Cycle of Learning and Adaptation by Closing the Loops

The federal government has a feedback-loop problem.

Regularly generated information, including evidence, performance information, and qualitative insights from implementation, too often fails to shape decisions. Evidence may be reviewed without changing priorities; performance data may be tracked without clarifying what it informs; and implementation feedback may reach leadership without surfacing what works for whom and why, or suggesting next steps. The components of a cyclical learning system linking priorities, questions, evidence, decisions, and implementation information exist in theory and on paper, but the connective tissue that turns all of these components into a functioning cycle of learning and adjustment is lacking. Information and artifacts alone don’t necessarily facilitate learning and adaptation; strengthening federal feedback loops requires embedding translation and use into decision-making from the start.

This memo is not a case for new infrastructure. The Evidence Act, learning agendas, evaluation plans, performance frameworks, and customer experience authorities already exist; what they do not yet add up to is a learning system. The translation this memo proposes is turning the infrastructure we have into the learning system we need, and it’s addressed to federal program leaders, policy officials, evaluation and evidence staff, performance officers, and strategic planning teams who already sit inside it and are best positioned to make it function as intended.

Challenge and Opportunity

The federal government already operates within a broad cycle of goal-setting, evidence generation, performance review, implementation, and reporting. On paper and in principle, this cycle should allow for learning, adjustment, and improvement to federal programs over time. In practice, however, agencies vary in how consistently they translate such information into planning, decision-making, or course-correction. Federal agencies have made progress in building and using evidence, but translating that information into timely operational or policy revisions remains uneven.

The core problem isn’t production; it’s translation, and the translation failure shows up as “so what” gaps on both sides of the information pipeline. On the input side, receivers of information are often left asking what they’re supposed to do with it; on the output side, a second question appears: “Is it my job to act on this, and if so, how?” Research findings are often too slow, too caveated, or too disconnected from immediate policy and management questions. Performance data may show quantitative changes in outputs, costs, or enrollment without revealing the mechanisms behind them or the practical implications for implementation, or cueing the design apparatus that could apply these insights. Feedback from frontline service providers and affected users might reach leadership mainly through quantitative indicators, dashboards, or status updates, which don’t always capture lived experience, causal explanation, or informed suggestions for course correction. Without named owners and defined next steps, even the most actionable information tends to circulate rather than convert.

Three gaps sit behind this pattern. First, a context gap: decision-makers often lack the full picture because qualitative indicators and customer experience research arrive separately from, or later than, quantitative evidence, leaving them with only a partial view of what’s working well or what’s driving implementation problems. Second, an action gap: even with a complete picture, it’s not always obvious which lever applies, on what timeline, or with what tradeoff. Third, an ownership gap: it’s often unclear who is responsible for translating any given signal into a decision, and this ambiguity means that insights can be observed without being acted on. Together, these three gaps leave evidence and feedback insufficiently integrated into decision-making routines.

The problem is also structural. Decision-makers face turnover, competing priorities, time limits, and management pressures, so evidence needs a more robust pathway to reach them. Without clear translation, trusted messengers, and defined or mandated use points, even the most relevant information can arrive too late, at the wrong moment, or in language too jargon-heavy to influence decisions, resulting in missed adaptation opportunities.

The federal government doesn’t need an entirely new learning architecture. It needs to make the one it already has more usable. Agencies can do this in three ways. First, build stronger translation functions by creating space for “knowledge brokers”: people or teams whose core function is to translate evidence into decision-relevant language and to maintain the relationships that make the translation trusted. Second, incorporate the use of evidence, performance, and implementation feedback into policy and program work from the start. Third, create better pathways for implementation and lived-experience feedback to reach leadership in ways that resonate with them and support action.

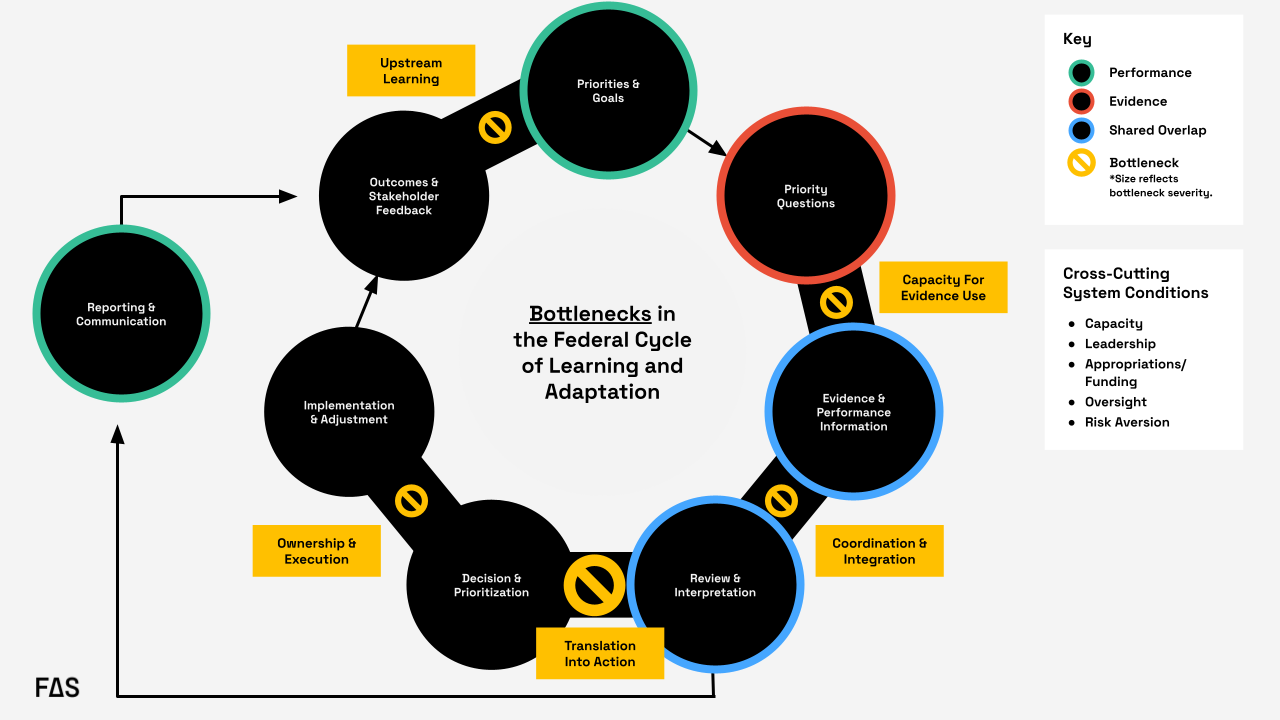

Formal federal guidance envisions a closed “loop” linking goals, priority questions, evidence, and performance information, along with review, decision-making, implementation, reporting, and feedback. In practice, the loop often degrades at key handoffs: evidence-use capacity, coordination and integration, translation into action, ownership and execution, and upstream learning from outcomes. The most significant recurring bottleneck occurs during the transition from review and interpretation to decision and prioritization, where information is generated and reviewed but does not reliably translate into action.

Plan of Action

We need to shift from a system that collects information to a system that uses it.

Agencies should create or strengthen embedded translation functions that connect evidence, performance information, implementation experience, and policy levers at the moment decisions are being made. The key is to move from a dissemination model to a utilization model. Instead of “produce, disseminate, and hope for uptake”, agencies should do the following:

Recommendation 1. Designate a knowledge broker to facilitate regular decision briefs…

or routines that create structured opportunities to clarify what’s known, what’s uncertain, and what actions are available – beginning with a defined set of high priority issues rather than every single decision the agency needs to make.

This recommendation targets the translation into action bottleneck in Figure 1: the handoff from review and interpretation to decision and prioritization, which can be considered the most significant recurring point of failure in the federal cycle of learning and adaptation. In practice, this means assigning this function to a role or small team housed in an existing performance, evaluation, strategy, or program office and requiring that group to support recurring decision points with short decision briefs. Those briefs should identify the decision; synthesize relevant evidence, performance trends, and implementation feedback; and specify available actions, tradeoffs, and owners.

For example, in the rollout of the FAFSA Simplification Act, a knowledge broker tied specifically to this initiative could have translated readiness indicators, beneficiary feedback, and information from financial aid administrators into decision-ready synthesis for the officials with decision authority who were attempting to course correct in real time. Instead, significant delays turned the rollout into a high-profile implementation issue.

Crucially, this function should start narrow. Rather than positioning a knowledge broker as an all-purpose translator for an agency’s full decision load, the initial portfolio could be scoped to a small set of priority issues. Starting narrow lets the broker build the credibility and relationships that make translation trusted, and refine the routine and its outputs before the portfolio scales. This gives agencies a defined mechanism for turning reviewed information into decisions rather than leaving that handoff informal. Within the federal government, the Office of Evaluation Sciences has modeled how an embedded team of evidence translators can work alongside program offices rather than from a silo.

Recommendation 2. Start policy initiatives and evaluation planning with a real question…

or decision, identify the user, specify the lever, and clarify – in advance – what different findings would imply. Incorporating this thinking upstream changes the role of evidence from a retrospective input to an operational tool.

This recommendation mainly addresses the translation-into-action bottleneck and, secondarily, the evidence-use capacity bottleneck. Agencies can operationalize this by building decision framing into existing learning agenda and evaluation planning processes, both of which are already required under the Evidence Act. Before an evidence product is commissioned or a performance indicator is selected, program offices can be required to answer four questions on the record: What decision is this for? Who will use it? What lever would change as a result? What finding would lead to what action?

In a hypothetical example, say that USDA’s Food and Nutrition Service (FNS) wants to commission a new evaluation or analysis regarding SNAP redetermination churn (the pattern of households losing SNAP benefits at recertification and then re-enrolling, often for procedural reasons rather than eligibility). The four questions noted above can be answered on the record before the work begins. The decision is whether to issue new guidance to state agencies on recertification practices and what that guidance should encourage. The user is the FNS administrator and the relevant policy office, with state-level SNAP directors as the implementing audience. The lever is subregulatory guidance. Early work to map findings to actions would specify, in advance, which patterns or insights would trigger which response. This way, when the findings arrive, USDA wouldn’t be starting from scratch with the “what do we do with this” question; the decision architecture would already be in place.

Pre-specifying these conditions in a short decision-framing memo that travels with the work turns evidence from a retrospective deliverable into a tool scoped to a specific decision or policy window. The same logic extends to decision memo templates themselves, which can include standing prompts such as “what evidence informed this decision?” and “how will we learn about this in real time?”, so that utilization is built in.

Recommendation 3. Create pathways for easier access to mixed methods evidence and insights from lived experience.

Recommendation 3 targets the coordination and integration bottleneck and the upstream learning bottleneck: the gap where evaluation, performance, administrative, qualitative, and customer experience data move at different speeds, live in different places, and reach decision-makers as parallel streams. Insights from lived experience – what programs actually look and feel like to the people using them – are particularly likely to be separated from the information that reaches leadership, arriving as anecdotes, if they arrive at all. Because these problems are distinct, the recommendations can be broken down and addressed at the agency level.

Recommendation 4. Standardize decision-ready formats that consolidate quantitative and qualitative evidence.

Agencies should build standardized decision templates and briefs that present quantitative indicator-level data alongside narrative summaries of lived experience and implementation conditions, so decision-makers aren’t expected to synthesize across disparate sources on their own timelines. The resulting artifacts should be tied to recurring decision moments (budgeting, guidance revisions, program reauthorization) so that they can be used in real time.

In the FAFSA Simplification Act rollout, Department of Education leadership faced this problem: application data, technical readiness indicators, information from financial aid administrators, and user feedback existed separately and moved at different speeds. A standardized decision-ready format could have pulled those streams together, pairing completion trend data with brief narratives or exemplary quotes about what applicants and financial aid offices were actually encountering, rather than leaving leaders alone to assemble the picture in real time.

Recommendation 5. Actively use existing generic clearance mechanisms for rapid qualitative and user experience research.

Agencies should make use of standing generic clearance mechanisms that allow them to fast-track small qualitative and user experience studies (for example, up to 100 respondents, completed within a fixed time window) when unexpected findings need rapid explanation. This would let agencies run a tightly scoped evaluation in weeks rather than months, which is the operational timescale at which decisions frequently move. Without it, the qualitative evidence needed to explain a performance anomaly often arrives after the decision or policy window has closed.

For SNAP redetermination churn, this would let FNS turn around a short, scoped evaluation of why participants are dropping off at a specific step in the recertification cycle in weeks rather than months. The insights could then inform the next round of guidance rather than coming in after the fact.

Recommendation 6. Build customer-first indicators into existing federal reporting requirements.

Beneficiary and frontline experience should become part of the evidence base by default rather than by exception. Most federal programs already have reporting infrastructure, and layering in a modest set of customer-first indicators that use the existing infrastructure rather than building new information collection requirements ensures that user perspectives are consistently available as routine inputs.

Within the federal government, the customer experience and life experience work coordinated through OMB and performance.gov has demonstrated that lived experience can be collected and used at scale within existing authorities, which can be considered a foundation to build from rather than reinvent. At the state level, Minnesota’s Story Collective, housed within Minnesota Management and Budget (MN MMB), pairs administrative and performance data with qualitative, lived-experience narratives to give decision-makers a richer view of what their programs are actually producing.

These recommendations also address a common weakness in the federal system: evidence and performance information sit within the same broad ecosystem but move at different speeds, use different tools, and often reach different audiences. This is all the more reason to create a translation layer that can synthesize across them. Agencies need staff and routines that can connect evaluation, administrative data, performance indicators, qualitative input, and implementation realities into decision-relevant guidance. Without that connective tissue, agencies are left with parallel streams of information that don’t consistently converge at the point where action occurs.

Conclusion

The federal government already generates a great deal of information about what it’s doing and how it’s performing, but information isn’t the same as learning, and learning isn’t the same as adaptation. The gap between them is where the “so what” goes unanswered, and where the federal feedback loop breaks down.

Closing these loops doesn’t require new infrastructure or authority. It requires three shifts in how the existing system is used: designating knowledge brokers to carry translation across the handoff from review to decision-making, building decision framing into policy and evaluation work from the start so that evidence is scoped to the decisions it’s meant to inform, and creating pathways that move mixed-methods evidence and lived experience into decision-makers’ hands in formats and timeframes that match how decisions actually happen in the federal environment. Whether the question is about USDA responding to SNAP redetermination churn or the Department of Education learning from an application rollout in real time, the underlying pattern is the same: the signals exist, but the translation that turns signals into actionable insights doesn’t reliably happen.

If the government wants a system of learning and adaptation that improves results in real time, it has to treat translation, utilization, and adaptation as core functions of governance rather than as afterthoughts.

Why Interagency Policy Coordination Efforts Frequently Fail (And How To Fix Them)

One of the great innovations of the American experiment is the federated organization of government at scale. For all the advantages to science and technology governance that federalism has promoted, the decentralization of responsibilities within the federal government makes speaking with one voice difficult. While differences between government agencies have been a source of strength for the U.S. federal research and development (R&D) ecosystem, those differences can also manifest as tribalism in the governance of programs administered and regulations promulgated by those agencies.

We firmly believe that it’s possible to “stack the deck” in a way that produces better governing outcomes with stronger public impact while maintaining the strength inherent in interagency deliberative processes. To do that, this memo aims to demystify the way that decisions are made inside agency bodies while providing a roadmap that can help decisionmakers and their advisors achieve those better outcomes. Our recommendations encourage senior policymakers and policy influencers alike to maintain a strong teleological focus, to embrace economic impact assessment, and to bring adequate resourcing and troubleshooting gumption to deliver tangible policy outcomes.

Differences in R&D priority implementation between agencies can be subtle, such as a discrepancy in legal interpretation of a federal statute; overt, such as one agency taking a position seemingly at odds with another; or procedural, such as when two agencies require redundant paperwork to collect information. This lack of cohesion can ultimately lead to significantly increased administrative burden for stakeholders, slower deployment of Administration priorities, and increased costs for the R&D ecosystem. To address this, R&D stakeholders frequently ask for more harmonized rulemaking between agencies. Unfortunately, the incentive structures supporting institutional autonomy frustrate harmonization and simplification efforts, further slowing the work of the government and (in some cases) magnifying the administrative burden experienced by stakeholder organizations.

Challenge and Opportunity

Coordination among federal science agencies is essential to ensure government-wide alignment on R&D investment priorities and policy agendas. However, the federal R&D enterprise suffers from some of the most egregious siloization of process and procedure in the administrative state.

To give one specific example, non-governmental space activities are covered by multiple agencies, with the Federal Aviation Administration covering launch and reentry, the Federal Communications Commission and Department of Commerce covering spectrum-related issues, the Office of Space Commerce covering remote sensing technologies, and the Federal Communications Commission (strangely) covering orbital debris. If a company wishes to engage in remote sensing activities, it needs to engage each of these licensing processes separately, and they may operate on different timelines and with different levels of priority. Both the Trump administration’s executive order on commercial spaceflight and the Biden-Harris administration’s novel space activities proposal maintain the separation of licensing processes between crewed and uncrewed space activities. That separation protects the equities of both the Department of Transportation and the Department of Commerce rather than rationalizing regulation, and it places both approaches at odds with Congress’ proposals.

Disharmony in the regulatory system may produce divergent federal grant, contract, and cooperative agreement requirements that organizations must navigate, often requiring them to manage multiple, substantially different layers of expertise and government-service provider relationships. When the government does coordinate, interagency bodies frequently develop recommendations that maximize the flexibility of government institutions but may avoid addressing fundamental ethical issues or the concerns of impacted communities.

To address these complaints and ensure alignment, both formal and informal interagency deliberative bodies are often formed within the Executive Branch. These may take the form of committees, subcommittees, and working groups of congressionally mandated interagency fora, such as the National Science and Technology Council or the Interagency Council on Statistical Policy. Or they may arise from the convening power of components in the Executive Office of the President, in what are known as policy coordination committees in the current administration.