Why Credit Access Makes or Breaks Clean Tech Adoption and What Policy Makers Can Do About It

Building Blocks to Make Solutions Stick

For clean energy to reach everyone, government can’t just regulate behavior. It has to actively shape credit markets in partnership with the private sector.

Implications for democratic governance

- Financing programs need governance that is visibly fair, transparent, and accountable in order to earn trust; without it, low trust drags down their efficacy.

- Build broad constituencies to set and drive the agenda.

- Treat local lenders and communities as active implementers, not passive beneficiaries.

Capacity needs

- Talent, playbooks, and governance structures to run policy-enabled finance (credit, guarantees, revolving funds) with speed and integrity.

- Faster contracting, simpler reporting, and fewer transaction frictions.

- Clear guidance on identifying and resolving tradeoffs, instead of allowing decisions to bog down in case-by-case analysis paralysis.

- Staff who can translate between agencies, investors, and communities.

- Connective tissue to and between states to replicate smart practices and share toolkits for financing mechanisms that move beyond one-time infusions of cash.

- Quasi-public structures that give government agility without sacrificing public interest and accountability.

Access to affordable credit is a necessary condition for an equitable energy transition and an inclusive economy. Markets naturally concentrate capital where risk is low and returns are predictable, leaving low-income communities, rural areas, and smaller projects behind. Well-designed federal policy can change that dynamic by shaping markets: reducing risk, creating incentives, and unlocking private capital so clean technologies reach everyone, everywhere. This paper argues that policy-enabled finance must be part of the toolkit if we are going to drive widespread adoption of clean technologies. The argument can be summarized as follows:

- Problem: Clean technologies require upfront capital; tax incentives alone are insufficient for small, distributed projects and underresourced borrowers. Without targeted credit solutions, the energy transition will deepen existing economic and environmental inequities.

- Opportunity: Policy-enabled financial services (direct investments, tax incentives, and loan guarantees) have a proven track record of expanding access to credit and driving inclusive economic growth. The climate policy playbook should be expanded to incorporate lessons from other sectors and programs that have used these interventions.

- Case study: The Greenhouse Gas Reduction Fund (GGRF) was designed to augment grants and tax incentives contained in the Inflation Reduction Act by seeding revolving capital, leveraging national financing hubs, and mobilizing local lenders to scale clean investments. This program was stopped in its tracks early in the Trump administration, but lessons from its design and early implementation should be leveraged by local, state, and future federal programs.

The critical role of policy-enabled finance to drive widespread economic opportunity

Access to affordable credit is not just a financial tool—it is a cornerstone of economic opportunity. It enables families to buy homes, entrepreneurs to launch businesses, and communities to invest in technologies that reduce costs and improve quality of life. Yet, across the United States, access to credit remains deeply uneven. Nearly one in five Americans and entire regions – particularly rural and Tribal communities – are excluded from the financial mainstream, limiting their ability to thrive.

Private-sector financial institutions—banks, private equity firms, and other lenders—are designed to maximize profit. They concentrate on markets where risk is predictable, transaction costs are low, and deals are easy to close. This business model leaves behind borrowers and communities that fall outside these parameters. Without intervention, capital flows toward the familiar and away from the places that need it most.

Public policy can change this dynamic. By creating incentives or mitigating risk, policy can make lending to or investing in underserved markets viable and attractive. These interventions are not distortions; they are strategic investments that unlock economic potential where the market alone cannot, generating economic value and vitality for the direct recipients while yielding positive externalities and public benefit for local communities. Importantly, these policy interventions also act as a critical complement to regulation. Increasing access to credit is often the carrot that can be paired with, or precede, a regulatory stick, so that people are not only directed toward a particular economic choice but also incentivized and enabled to make it.

For decades, policy-enabled finance has delivered measurable impact through multiple programs and agencies designed to support local financial institutions – regulated and unregulated, depository and non-depository – that are built to drive economic mobility and local growth. These policies and programs have taken multiple forms, but can generally be put in three categories:

- Direct Investments: Programs like the CDFI Fund Financial Assistance awards, which provide enterprise grants to Community Development Financial Institutions (CDFIs) to strengthen their balance sheets and increase lending, and the Emergency Capital Investment Program (ECIP), which made equity investments in community development credit unions and banks.

- Tax Credits and Incentives: The Low-Income Housing Tax Credit (LIHTC), New Markets Tax Credit (NMTC), Opportunity Zones, and renewable energy credits like the Investment Tax Credit and Production Tax Credit have spurred billions in private investment for housing, community development, and clean energy.

- Loan Guarantees: Small Business Administration, U.S. Department of Agriculture, and Department of Energy guarantee programs, among others, reduce risk for the lender, enabling small businesses, rural communities, and earlier stage companies to access credit otherwise unavailable at transparent and affordable rates from participating financial institutions.

These tools enjoy broad recognition and bipartisan support because they work. They increase access, availability, and affordability of credit—fueling job creation, housing stability, and economic resilience. Policy-enabled finance is not charity; it is a proven strategy for broad and inclusive economic growth and a key tool for the policy-maker toolkit to support capital investment, project development, and adoption of beneficial technologies in a market-driven context that can increase the effectiveness of a regulatory agenda.

Most importantly, policy-enabled finance has led to major improvements in wealth-building and quality of life for millions of Americans. The long-term, fully amortizing mortgage, the forerunner of today’s 30-year loan, was introduced through the Federal Housing Administration in the 1930s as a response to the Great Depression. Before this intervention, only the very wealthy could afford to buy a home given the high down payment requirements and short-term loans. Since this policy change, thousands of financial institutions have offered long-term mortgages to millions of Americans who have bought homes that provide safety and security for their families, strong communities, and an opportunity to build wealth through appreciating assets. Broad home ownership is a public good, but until the government created the right policy and regulatory framework for the markets, it was out of reach for the majority of Americans.

Similarly, the Small Business Administration’s loan guarantee programs, started in the 1950s, have supported financial institutions, including banks and non-bank lenders, in extending credit to small businesses that would otherwise be difficult to serve with affordable credit. These programs have collectively helped millions of small businesses access the credit they need to grow, create wealth for their owners and families, provide critical goods and services in their communities, and create a diverse and vibrant local tax base.

The financial markets, without these types of interventions, are not structured to prioritize access and affordability. Well-designed policy and complementary regulatory interventions have been proven to drive different behaviors in the capital markets that yield real benefits for American families and businesses.

The role of access to credit in driving an equitable energy transition

The public and private sectors have spent decades and billions of dollars investing in the development of clean technologies that reduce greenhouse gas emissions, create economic benefits, and deliver a better customer experience. Now that these technologies exist, the challenge is to deploy them for everyone, everywhere.

The barrier to widespread deployment is that most clean technologies – solar and battery storage, electric vehicles, electric HVAC and appliances, and others – require an upfront investment to yield long-term benefits and savings (i.e., an initial capital expense that reduces ongoing operating expenses). As a result, people and companies with cash or access to credit are adopting these better technologies while those without are being left behind. This is widening the divide: economic savings, health benefits, and better technologies go to those who can afford them, while the lowest-income communities are left with dirty, volatile, and increasingly expensive energy sources.

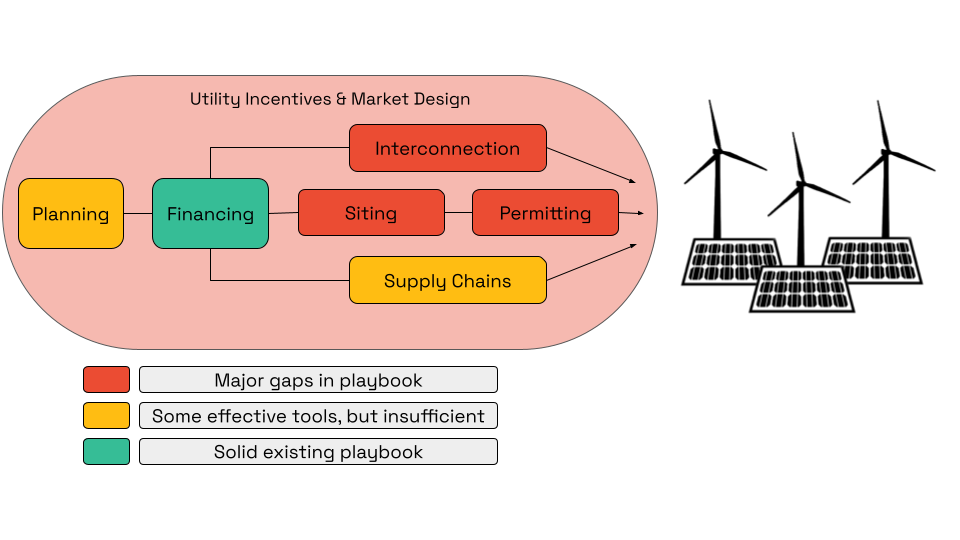

Many of the federal policy interventions to support deployment of these new technologies to date have been through tax credits. These policies have been very popular, but uptake has been limited in rural and lower-income communities because (a) they are complex, (b) they often require working with individuals or businesses with large tax liabilities, and (c) they typically come with high transaction costs, which makes smaller, more distributed projects harder to make viable. The energy transition is a huge wave of change, but it is made up of many small component parts – individual buildings, machines, vehicles, grids – so if our policies fail to enable small projects to get done, we will fail to transition quickly and equitably.

To deploy everywhere, households and businesses need credit to offset capital expenses. To expand access to credit, we need supportive clean energy policies that work within and alongside local financial services ecosystems – just like we’ve seen with housing and small businesses.
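The arithmetic behind this point can be sketched with a standard loan amortization formula. The figures below (a $12,000 upgrade, $150 in monthly energy savings, and the two interest rates) are hypothetical values chosen for illustration, not data from this paper. Under those assumptions, the sketch compares the monthly loan payment with the monthly savings at an affordable rate and at a high-cost rate.

```python
def monthly_payment(principal: float, annual_rate: float, years: int) -> float:
    """Level monthly payment on a fully amortizing loan."""
    n = years * 12
    if annual_rate == 0:
        return principal / n
    r = annual_rate / 12  # monthly interest rate
    return principal * r / (1 - (1 + r) ** -n)


# Hypothetical figures for illustration only (not from the paper).
UPFRONT_COST = 12_000.0   # installed cost of the upgrade, in dollars
MONTHLY_SAVINGS = 150.0   # assumed reduction in monthly energy bills
TERM_YEARS = 10

for label, rate in [("affordable credit", 0.07), ("high-cost credit", 0.18)]:
    payment = monthly_payment(UPFRONT_COST, rate, TERM_YEARS)
    net = MONTHLY_SAVINGS - payment  # positive means savings exceed the payment
    print(f"{label}: payment ${payment:,.2f}/month, net ${net:+,.2f}/month")
```

With these assumed inputs, the same project is cash-flow positive from the first month at the lower rate and cash-flow negative at the higher rate, which is the gap that affordable credit is meant to close.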

Regulation is insufficient to drive widespread adoption

Pursuing a carbon-free economy is a massive undertaking and, understandably, much of the state and federal government’s toolkit has focused on regulation of people and businesses to drive behavior change – policies like fuel economy standards, pollution restrictions, renewable energy standards, and electrification mandates. This is an important piece of the puzzle – but insufficient to drive broad (and willing) adoption.

Take, for example, the goal of electrifying heavy-duty trucks in and around port communities. States like California have attempted to set a date after which all new trucks on the registry must be zero-emission vehicles. Predictably, this mandate was met with a lot of pushback from truck drivers, small operators, and industry associations who struggled to see a path to complying with this regulation without a major increase in cost.

It wasn’t until the regulation was paired with direct incentives for truck purchases and an attractive and feasible financing package for vehicle acquisition and charging infrastructure that the industry actors started to come around. This has helped change behavior of both buyers and incumbent sellers in the market.

Policy-enabled finance creates tools – often used in conjunction with other policy mechanisms – that can more effectively meet people where they are with affordable, appropriate, and tailored solutions and can help demonstrate a feasible path to adoption that can help buyers and sellers in these markets adapt accordingly.

The Greenhouse Gas Reduction Fund as an innovative policy-enabled finance program

The Greenhouse Gas Reduction Fund (GGRF) is more than an emissions initiative—it is a strategic investment in economic equity and market innovation that took lessons in program design from many sectors and programs of the past. Designed with three core objectives, the program aims to:

- Reduce greenhouse gas emissions at scale

- Deliver direct benefits to communities, particularly those that have been historically underserved by the financial markets

- Transform financial markets to accelerate clean energy adoption and resilience

GGRF programs, including the National Clean Investment Fund, the Clean Communities Investment Accelerator, and Solar for All, were built to complement other Inflation Reduction Act (IRA) programs by occupying a critical middle ground between grant programs and tax credits. Grant programs provide direct, one-time support for projects and programs that are not financeable (i.e., not generating revenue). Tax credits are put into the market to incentivize private investment for anyone interested in taking advantage but are not typically targeted to any specific project or population.

GGRF bridges these approaches. It channels capital into markets where funding does not naturally flow in the form of loans and investments, ensuring that clean energy and climate solutions reach every community—but does so in a way that often extends the benefits of the tax credits and incentive programs so that they reach a broader set of projects and communities where the incentive is insufficient to drive adoption. GGRF focuses on increasing access to credit and investment in places that traditional finance overlooks by reducing risk and creating scalable financing structures, empowering local lenders, community organizations, and national financing hubs to deploy resources where they are needed most. Also, because the program makes loans and investments, it recycles capital continuously – akin to a revolving loan fund – so that the work filling gaps in market adoption can continue for decades.
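The recycling mechanic can be illustrated with a toy simulation. This is a simplified sketch, not the GGRF's actual financial model: the function name, the repayment schedule (equal annual principal installments with interest on the outstanding balance), and all input values are assumptions made for illustration. It compares the cumulative dollars deployed by a revolving fund with a one-time grant of the same size.

```python
def simulate_revolving_fund(seed: float, years: int, term_years: int,
                            annual_rate: float, loss_rate: float) -> float:
    """Return cumulative dollars lent over `years`, relending every repayment."""
    cash = seed
    # Scheduled inflows indexed by the year in which they arrive.
    inflows = [0.0] * (years + term_years + 1)
    total_lent = 0.0
    for year in range(years):
        cash += inflows[year]
        lent, cash = cash, 0.0  # lend out everything on hand this year
        total_lent += lent
        recoverable = lent * (1 - loss_rate)  # the rest is written off
        for k in range(term_years):
            outstanding = recoverable * (1 - k / term_years)
            # Equal principal installment plus interest on the balance.
            inflows[year + 1 + k] += recoverable / term_years + outstanding * annual_rate
    return total_lent


# Hypothetical inputs for illustration only.
seed = 100.0  # millions of dollars
deployed = simulate_revolving_fund(seed, years=20, term_years=7,
                                   annual_rate=0.04, loss_rate=0.05)
print(f"One-time grant deploys {seed:.0f}; revolving fund deploys {deployed:.0f}")
```

The point of the comparison is directional only: because repayments are relent, the same seed capital finances several times its face value over the horizon, while a grant is spent once.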

GGRF’s design was built on a strong foundation of successful direct investment programs for local lenders, such as CDFI Fund awards and USDA programs. What makes it unique is its scale—tens of billions of dollars—and its centralized approach, leveraging national financing hubs to drive systemic change with and through new and existing local financial capillaries (i.e., credit unions, community banks, green banks, and loan funds). This program was not built to drive incremental progress; it is a market-shaping intervention designed to accelerate the clean energy transition while promoting widespread economic growth.

Unfortunately, the program was stopped in its tracks when the Trump administration illegally froze funds already disbursed to awardees, leading to multiple lawsuits to restore funding. Without this disruption, awardees and their partners across the country would be driving direct economic benefits for families and communities across all 50 states. In the first six months of the program, awardees had pipelines of projects and investments that were projected to create over 49,000 jobs, drive $866 million in local economic benefits, save families and businesses $2.7 billion in energy costs, and leverage nearly $17 billion in private capital. The intention and mechanics of the program were working – and working fast – to deliver direct economic, health and environmental benefits for millions of Americans.

Moving at the speed of trust: Bringing the public and private sectors together for effective implementation

For a program like the Greenhouse Gas Reduction Fund to succeed, both the private and public sectors need clarity, confidence and accountability. But most importantly, they need a baseline of trust between the parties to support ongoing creative problem solving to implement a new, scaled program with exciting promise and a limited blueprint.

For the private sector, certainty is paramount. Investors and lenders (and importantly, their lawyers) require clear definitions, consistent requirements, and transparency about the availability of funds, requirements of use, and the ability to forward commit capital to projects and businesses. They need mechanisms to leverage public dollars with private capital and assurances that counterparties will be shielded from political, compliance, and policy risk. Flexibility is equally critical, allowing actors to adapt to rapid market shifts and technological innovations without being constrained by rigid program structures. Understanding these requirements – and the needs of the financial market actors involved – is outside the comfort zone of most government agencies and employees and requires significant experience and capacity building to strengthen this muscle. Nimble thinking is not often associated with government agencies, but in policy-driven financial services, it is paramount.

At the same time, the public sector has its own requirements, which call for patience and understanding from the private sector. Policymakers and the EPA, the implementing agency of the GGRF, must ensure that funds are used properly and that Congressional and public oversight is robust. This means designing programs that comply with all laws and regulations while advancing policy priorities. It requires mechanisms for accountability – certifications, reporting, and transparency in how funds flow – along with safeguards against undue influence from purely profit-motivated private actors. Balancing these needs is not optional when managing taxpayer funds; it is the foundation for building trust and ensuring that the program delivers on its promise of reducing emissions, benefiting communities, and transforming markets.

Implementation requires striking the balance between the needs of the private and public actors; this was difficult and time consuming both for the federal employees and for us as private recipients. There was pressure to deploy quickly to demonstrate impact and the value of the program, but it took a long time to get contracts signed and funds in the market because of the many requirements of the public and private parties involved. We speak different languages, are solving for different constraints, and work in drastically different environments – all of which led to complexity and delays.

Internal EPA requirements and federal crosscutters (i.e., federal requirements from other related laws that applied to this program) increased time to market and transaction costs. Many of these requirements came with high-level policy objectives without the ability to get to a level of detail required for capital deployment.

For example, two of the major policy crosscutters were the Davis-Bacon and Related Acts (DBRA) requirements around labor and workforce, and the Build America Buy America (BABA) requirements for equipment manufacturing and component parts. While the agency and private awardees were aligned at a high level on policy intention – good-paying jobs and domestically manufactured goods – passing these requirements down to borrowers and projects required significantly more detail and nuance than was available to the agency, adding weeks and months to implementation and causing frustration among private counterparties.

Clear expectations up front on how to manage the trade-offs – policy priorities versus capital deployment – could have provided a high-level framework for implementation. Instead, implementation proceeded through a one-by-one review of use cases to determine feasibility and applicability, which added complexity and friction to the process without driving outsized results.

More requirements and complexity led to slower, more costly deployment, which meant fewer communities would benefit from the program’s goals of cutting emissions, creating jobs, and cutting household and business costs.

Another key feature of the program for the National Clean Investment Fund and Clean Communities Investment Accelerator was the ability for the federal government to leverage a Financial Agent to administer the funds. This arrangement was developed between the EPA and Treasury, leveraging a long-standing practice of the Treasury Department of contracting with external banks to provide financial services that were hard for the government to provide directly. This was particularly important for the National Clean Investment Fund program because the disbursement of funds into awardee accounts enabled the awardees to meet a core statutory requirement to leverage funds with private capital. Without this function, the cash would not be available on the balance sheet of the awardees and would be difficult to leverage with private investment.

Lastly, the reporting requirements for the program were complex, making it hard to provide clarity on what data collection was required for early transactions. Again, both parties recognized the importance of transparent data collection and dissemination, but implementing that intent in practice was time consuming. A simple, standardized framework to get started that could evolve over time would have helped reduce uncertainty and supported faster deployment.

Altogether, the cross-sector translation – finding common ground between two disparate worlds – added many months onto the process of getting the program to the market which, in the current political climate, was time not spent doing the important work to educate a broad set of stakeholders on the program’s promise, potential, and purpose. A lot of this complexity could have been reduced by developing a baseline of trust between the parties through the application and award process, complemented by a common goal to improve program implementation over time.

Strange bedfellows create weak alliances

In addition to the programmatic elements of translation, the actors involved in implementing direct investment strategies tend to be unknown entities to government agencies and Congress. Even though many of the implementing organizations – the “awardees” – have been around for decades doing similar work, there were weak ties with Congress, federal agencies, and other related stakeholders. Similarly, there was a lack of understanding of the role that nonprofit and community-based financial organizations play in addressing market gaps. This mutual lack of understanding and engagement leaves room for misunderstanding, distrust or generalizations that can hinder the ability to make collective progress.

Within the agency, this was a new program type for the EPA, so the requirements and design process took many months before anything was shared publicly. The Notice of Funding Opportunity was released nearly a year after the legislation was signed.

The unique form and function of the program and limited direct engagement with lawmakers and other stakeholders about the program left a vacuum of information, which led to skepticism and confusion. Because the funds were provided to awardees as grants, many interpreted this as just another grant program – a large federal spending package that would lead to “handouts” – instead of what it was: the federal government seeding a sustainable fund with “equity” that would be lent out, returned, and reinvested in perpetuity. For example, the Wall Street Journal editorial page, and later an EPA press release, conflated investments with “handouts”:

“Imagine if Republicans gave the Trump Administration tens of billions of dollars to dole out to right-wing groups to sprinkle around to favored businesses. That’s what Democrats did in the Inflation Reduction Act (IRA). The Trump team’s effort to break up this spending racket has led to a court brawl, which could be educational.”

The fact that this policy structure and the private sector entities charged with implementing it were relative strangers led to confusion and delay during a period that could have been spent on outreach, engagement, and education. Without that broad base of support, the program unnecessarily became a political punching bag.

To mitigate this risk going forward, there needs to be greater investment in relationship building, education, stakeholder engagement and capacity building within and among the implementing partners across all relevant government actors and their private sector counterparts, especially after award selections are made. This connective tissue would go a long way in creating a baseline of common understanding of the policy objectives, program design, and implementation partners involved so all parties are aligned on strategic intent and path forward.

Making policy-enabled finance programs work in the future

If we agree that policy-enabled finance is essential to drive the energy transition and deliver broad benefits, the next step is asking the right questions about how to design these interventions for success, drawing lessons from the GGRF and other related programs.

First, what mechanisms should we use, and what are the trade-offs for each? Federally supported direct investment programs, such as managed funds, can deploy capital quickly and target underserved markets, but they require strong governance, thoughtful program design, and radical transparency; otherwise they are susceptible to the “slush fund” narrative or similar risks (e.g., conflicts of interest and political favors).

Tax credits and incentives have proven effective in attracting private investment, yet they often favor actors with existing tax liability and can leave smaller players behind. Guarantees reduce risk for lenders and unlock private capital, but they demand careful structuring to avoid moral hazard and can struggle to reach communities that are truly under-resourced.

Despite the many pitfalls of direct investment programs, they address a challenge that has plagued many of the more distributed policies: centralization and market making. Often in an attempt to let a thousand flowers bloom, policymakers underestimate the need for centralized or regional infrastructure to help with asset aggregation, data collection, product standardization, and scaled capital access. This yields local infrastructure that is sub-scale, inefficient, and unable to access the capital markets for private leverage – too small to truly shape markets.

While the GGRF’s future is uncertain given pending litigation, its purpose and role as a set of centralized financial institutions within the broader community-based financial ecosystem is critical – and needs to be more broadly understood as policymakers set future priorities.

Second, should government manage funds and programs internally or partner with external experts? Internal management within an agency offers control and accountability but can strain agency capacity and impede the ability to be an active market participant. It is also difficult to attract the right talent within the government’s pay scale, which leads to recruiting difficulties and high turnover. This model has been attempted through programs like the Department of Energy’s Loan Programs Office (LPO), but even that market-based program has been slow to execute, delaying critical infrastructure and technology investments by months, if not years.

On the other hand, external management brings specialized expertise and market agility, yet it raises questions about oversight and influence. No matter who the private party is, there is skepticism around the use of funds, their personal or professional gain, and their intentions with taxpayer money. In our deeply politicized world, this puts a target on the leaders of these organizations that may limit who is willing to play this role.

Quasi-public structures

Despite the challenges, on balance it seems that internal agency management or a quasi-public structure is the most feasible path. Internal management pushes the boundaries of public agency function but goes a long way to build trust and accountability. Quasi-public structures seem to be a good compromise when feasible. Other countries have figured out how to manage these programs within a government or quasi-government agency (see the Clean Energy Finance Corporation and the National Reconstruction Fund Corporation, both in Australia). We can too.

At the federal level, credit programs should be managed by agencies with the skills and capacities to hold an investment function, like the Department of Energy or the Treasury Department, and leverage lessons learned from programs like DOE’s LPO and EPA’s GGRF to structure new entities. Or – like many of the state and local green banks have done – create quasi-public entities that have public sector governance and appropriations but otherwise operate independently as financial institutions with their own balance sheets, bonding authority, and staffing structure.

Lastly, if public-private partnerships are preferred, who should the government work with to implement policies meant to expand access to capital and credit? Nonprofit financial institutions often prioritize mission and community impact and are willing to arrange complex financings that require a higher-touch approach, but they often lack scale and institutional capital access. For-profit firms bring scale and expertise but often find it hard to manage a government program with a mindset or culture that differs from their typical profit-maximization frameworks.

Depository institutions such as banks offer stability and regulatory oversight, whereas non-depositories can innovate more freely to reach the hardest to serve communities. Regulated entities provide robust and trusted infrastructure and controls, but unregulated actors may move faster and can be more creative in supporting traditionally under-resourced opportunities. Specialty firms bring deep sector or asset-class knowledge, while generalists offer broad reach and experience in managing across asset classes.

To identify the optimal path, it is helpful to look to existing programs for lessons. The U.S. Treasury’s Emergency Capital Investment Program (ECIP) demonstrates how direct investment into regulated depository institutions can mobilize significant capital for underserved communities through an existing financial ecosystem. The Loan Programs Office shows what internal management can achieve for large-scale projects. Tax credit programs like the New Markets Tax Credit (NMTC) and Investment Tax Credit (ITC)/Production Tax Credit (PTC) illustrate how incentives can transform markets, while guarantee programs such as the U.S. Department of the Treasury’s Community Development Financial Institutions (CDFI) Fund Bond Guarantee Program and the SBA 7(a) and 504 guarantees highlight the power of risk mitigation in activating and standardizing products to support secondary market access. These precedents offer valuable insights as we design future policies to accelerate a broadly beneficial energy transition.

Educating policymakers to build trust in the community finance ecosystem

Regardless of the path forward, one thing remains critical: building better relationships between policymakers and the community finance industry, including community banks, credit unions, loan funds, and green banks. These are the boots-on-the-ground organizations that share a mission with many policymakers to expand economic opportunity and broaden access to capital and credit. They are also often the organizations navigating multiple public products and programs to bring affordable, quality financial services to communities.

The challenge is that most advocacy and educational work for these organizations has been siloed – there are groups representing credit unions big and small, those representing housing lenders, loan funds, green banks, and community banks. The disaggregation of these efforts has diluted the potential for policymakers to look at this ecosystem as a whole to determine how best to leverage it for public good. This is not to say that each of these individual groups does not have a role to play for their members – they all have different needs and requirements and deserve representation. But the broader industry would benefit from collaboration across these organizations to create a mechanism for these institutions to help with outreach, advocacy and education around policy-enabled finance overall. This would bring a strong and powerful group of actors together for a higher collective purpose and, ideally, create a large and diverse constituency with common goals.

State and local governments stepping up

In the near term, the absence of federal support for clean technology deployment through policy-enabled finance creates an enormous opportunity for state and local governments to step up and push forward. Hundreds of local financial institutions were preparing to deliver GGRF funds to and through local projects and businesses to drive broader adoption of clean technologies. These organizations continue to have the skillsets, capacity, and pipeline to finance these projects – but need access to flexible and affordable capital to do so.

State funding efforts could mirror the program and product design of the GGRF to get deals done locally, working with one or more of the constellation of financial institutions preparing to deploy federal funds. Just because the GGRF’s programs were cut short, it doesn’t mean that the infrastructure and learnings generated should go to waste – if there are public institutions willing to commit capital, there should be many financial institutions across the country ready to put it to good use.

Conclusion

If our shared goal is an equitable, rapid energy transition, policy must do more than regulate — it must enable finance and focus on deployment, or getting great projects done. The Greenhouse Gas Reduction Fund showed both the promise and the pitfalls of large-scale, policy-enabled finance: when designed and governed well, these tools can unlock private capital, deliver measurable local benefits, and sustain long-term market transformation. When implementation gaps and weak relationships persist, even well-intentioned programs become politically vulnerable and ripe for attack. To make these programs successful within our current political context, future efforts should prioritize clear governance, cross-sector capacity, and sustained stakeholder engagement so public dollars can catalyze private investment that reaches every community.

DOE 4.0: Rethinking Program Design for a Clean Energy Future

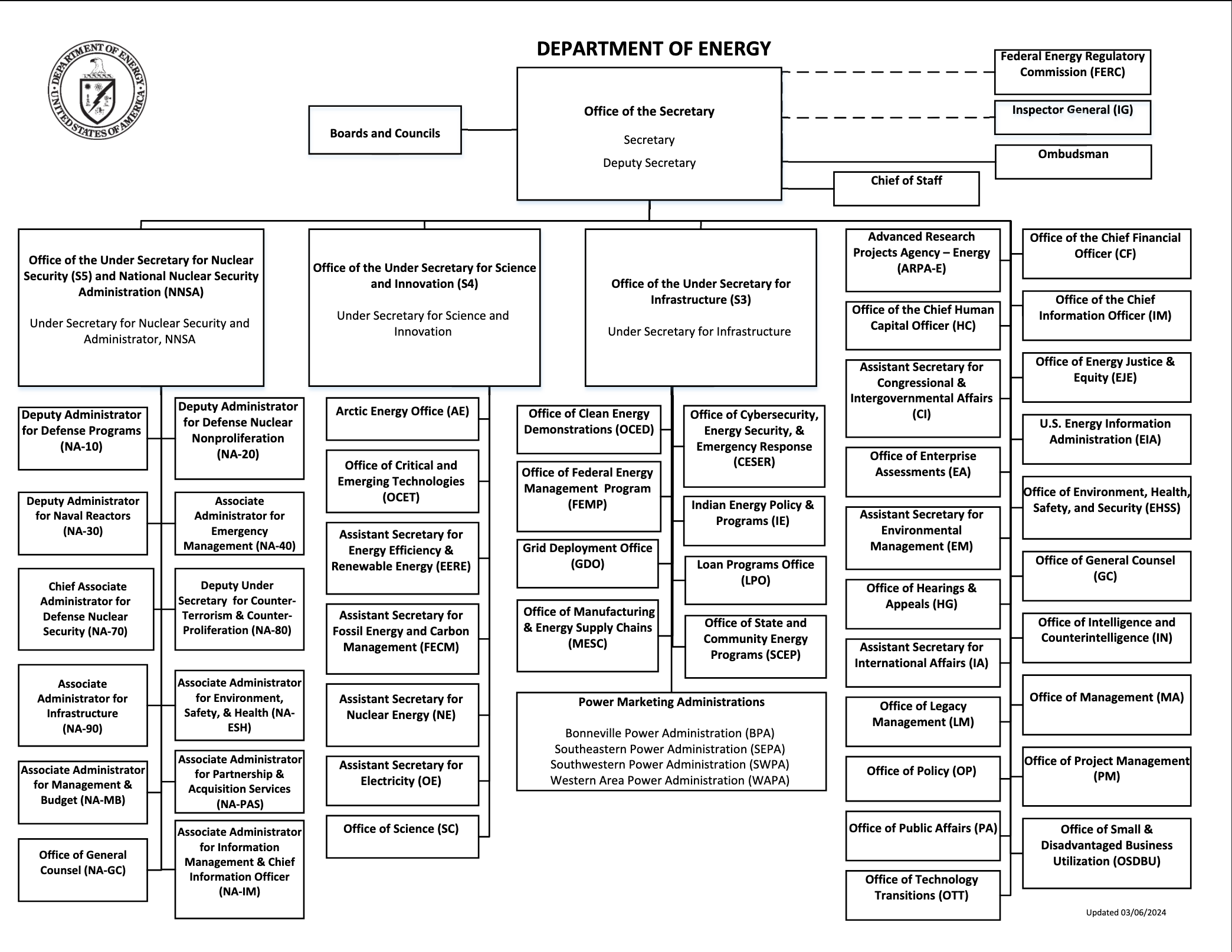

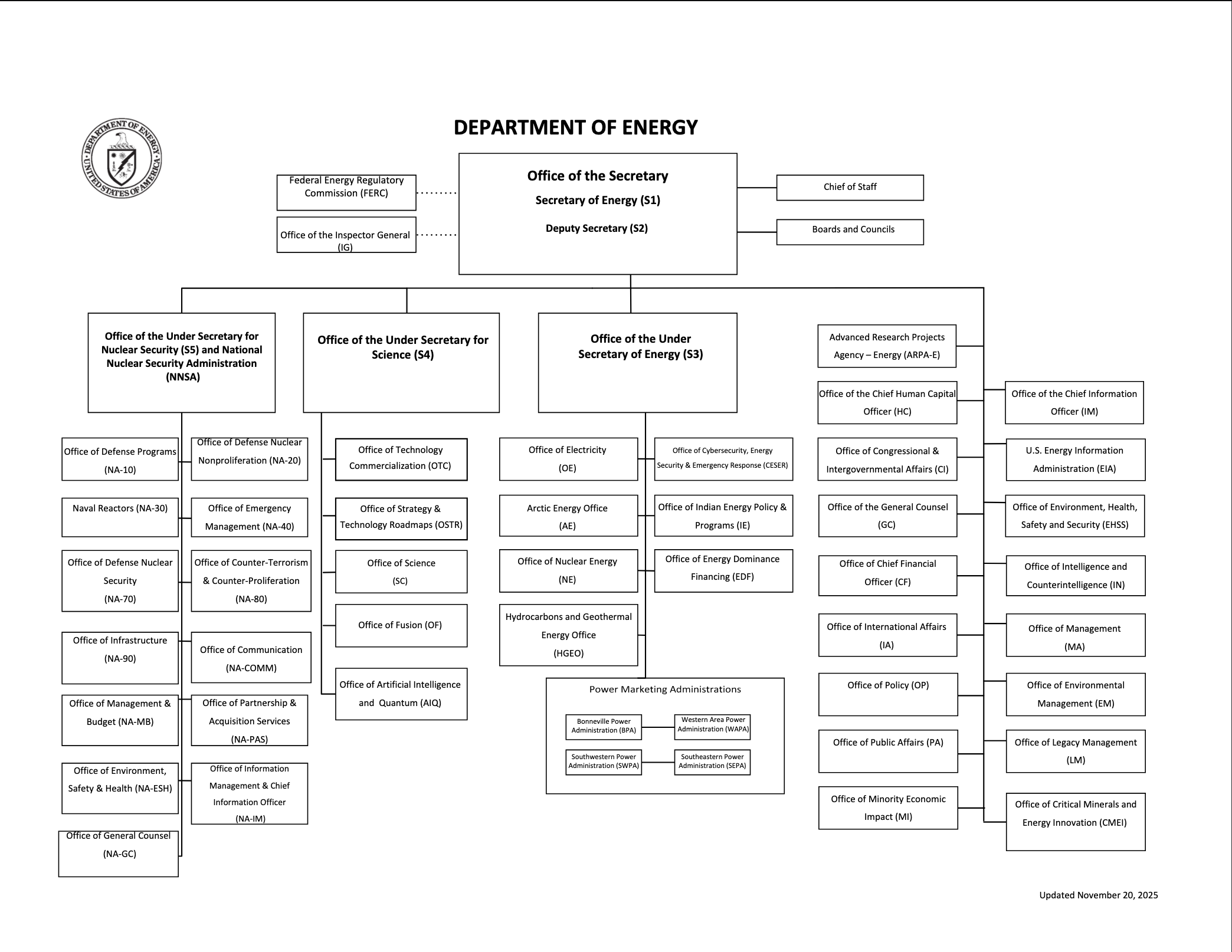

DOE’s mission and operations have undergone at least three iterations: starting as the Atomic Energy Commission after World War II (1.0), evolving into the Department of Energy during the 1970s Energy Crisis to focus on a wider range of energy research & development (2.0), and then expanding into demonstration and deployment over the last 20 years (3.0). The evolution into DOE 3.0 began with the Energy Policy Act of 2005, which authorized the Loan Programs Office (LPO), and accelerated with the infusion of funding from the American Recovery and Reinvestment Act of 2009. Finally, the Bipartisan Infrastructure Law (BIL) and the Inflation Reduction Act (IRA) crystallized DOE 3.0’s dual mandate to not only drive U.S. leadership in science and technology innovation (as under DOE 1.0 and 2.0), but also directly advance U.S. industrial development and decarbonization through project financing and other support for infrastructure deployment.

While DOE continues to support the full spectrum of research, development, demonstration, and deployment (RDD&D) activities under this dual mandate, the agency is now undergoing another transformation under the Trump administration, as a large number of career staff leave the agency and programs and budgets are overhauled. The Federation of American Scientists (FAS) is launching a new initiative to envision the DOE 4.0 that emerges after these upheavals, with the goals of identifying where DOE 3.0 missed opportunities and how DOE 4.0 can achieve the real-world change needed to address the interlocking crises of energy affordability, U.S. competitiveness, and climate change.

Crucial to these goals is rethinking program design and implementation to ensure that DOE’s tools are fit for purpose. BIL and IRA introduced new types of programs and assistance mechanisms, such as Regional Hubs and “anchor customer” capacity contracts, to try to meet the differing needs of demonstration and deployment activities compared to R&D. Some were a clear success, while others faced implementation challenges. At the same time, the majority of funding from these two bills was still implemented using traditional grants and cooperative agreements, which did not always align with the needs of the commercial-scale projects they sought to support. Based on lessons learned from the Biden administration, this report provides recommendations to DOE to improve the implementation of different types of assistance and identifies opportunities to expand the use of flexible and novel approaches. To that end, this report also advises Congress on how to improve the design of legislation for more effective implementation.

The ideas and insights in this report were informed by conversations with former DOE staff who played a role in implementing many of these programs and experts from the broader clean energy policy community.

Distribution of BIL and IRA Funding

Before diving into program design, it’s helpful to first understand the range of technologies and activities that BIL and IRA programs were meant to address, especially where that funding was concentrated and where there may have been gaps, since programs should be tailored to the purpose.

Authorizations and Appropriations

Congress intentionally provided the lion’s share of BIL and IRA funding to demonstration and deployment activities. The table above shows the distribution of BIL and IRA authorizations and appropriations for DOE. The table excludes DOE’s revolving loan programs – the Tribal Energy Financing Program (TEFP), the Advanced Technology Vehicles Manufacturing Loan Program (ATVM), the Title XVII Innovative Energy Loan Guarantee Program (Title 1703), and the Title XVII Energy Infrastructure Reinvestment Financing Program (Title 1706) – which are discussed in the following section.1 The Carbon Dioxide Transportation Infrastructure Finance and Innovation program (CIFIA) was included in the table above because that program’s appropriations could be used for both grants and loan credit subsidies.

The technology areas that received the most DOE funding from BIL and IRA (excluding loans) were building decarbonization, grid infrastructure, clean power (combining solar, wind, water, geothermal, and nuclear power, energy storage systems, and technology-neutral programs), carbon management, and manufacturing and supply chains, each of which received over $10 billion in funding.

Below is a breakdown of the funding distribution for each sector/technology.

Grid Infrastructure received a total of $14.9 billion, second only to building decarbonization. All of the funding went towards demonstration and deployment programs, the majority ($10.5 billion) of which went towards the Grid Resilience and Innovation Partnerships (GRIP) program. The remainder of the funding went towards Grid Resilience State and Tribal formula grants, the Energy Improvement in Rural and Remote Areas program, the Transmission Facilitation Program, and transmission siting and planning programs. No funding went to R&D or workforce programs. Grid infrastructure was also eligible for the Title 17 and Tribal loan programs.

Power Generation received a total of $13.2 billion, with funding unevenly distributed across technologies and stages of innovation. Nuclear power received the largest share, with over $9.2 billion allocated to the Advanced Reactor Demonstration Program and the Civil Nuclear Credit Program, supporting demonstration of advanced reactors and production incentives to maintain existing nuclear plants, respectively. Geothermal energy received the least funding among power generation technologies, with only $84 million allocated to the Enhanced Geothermal Systems (EGS) Demonstration Program and no other support for R&D or deployment.

Modest amounts were provided for RD&D in solar ($80 million), wind ($100 million), and water power technologies ($146 million). For deployment, hydropower also received production and efficiency incentives to support existing facilities ($754 million); wind energy received funding for Interregional and Offshore Wind Electricity Transmission Planning and Development ($100 million); and solar qualified for the Renew America’s Schools program ($500 million). To complement these technologies, $505 million was provided for energy storage demonstration programs to enable reliable deployment of variable renewables.

Power generation was also eligible for the Title 17 and Tribal loan programs.

Manufacturing and Supply Chains received $10.8 billion in funding from BIL and IRA. The majority of that funding, $6.3 billion, went towards battery supply chains, primarily for the Battery Materials Processing Program ($3 billion) and the Battery Manufacturing and Recycling Program ($3 billion). Additional focus areas for funding included EV manufacturing ($2 billion), advanced energy manufacturing and recycling ($750 million), high-assay low-enriched uranium (HALEU) supply chains for nuclear power plants ($700 million), and heat pump manufacturing ($250 million). Energy manufacturing and supply chains are eligible for Title 1703 loans, while EV and battery manufacturing and supply chains are eligible for ATVM loans.

Critical Minerals received a total of $6.9 billion, of which $6 billion was allocated for the Battery Materials Processing Program and the Battery Manufacturing and Recycling Program, which funded demonstration and commercial-scale critical minerals processing and recycling projects. The remainder of the funding went to R&D programs on mining, processing, and recycling technologies; technologies to recover critical minerals from coal-based industry, mining and mine waste, and other industries; and technologies that use fewer critical minerals or replace them with alternatives. Critical minerals were also eligible for all of DOE’s revolving loan programs, except for CIFIA.

Industrial Decarbonization and Efficiency received a total of $7.5 billion. Of this funding, $6.0 billion went towards the Industrial Demonstrations Program (IDP), which was sector and solution agnostic and accepted projects for both new facilities and retrofits, making the money extremely flexible. Much smaller funding amounts were allocated to deployment and workforce programs like rebates for energy efficient technologies and systems, decarbonizing energy manufacturing and recycling facilities, and Industrial Training and Assessment Centers. No funding was allocated to R&D programs.

Hydrogen and Clean Fuels received $8 billion for the Regional Clean Hydrogen Hubs program to support near-term demonstration and commercialization of hydrogen production, transportation, and usage. Hydrogen and clean fuels were also eligible for all of the loan programs, except for CIFIA. Investment across the full research-to-deployment (RDD&D) continuum was lacking. Dedicated funding for clean fuels besides hydrogen was also missing.

EVs and Transportation funding from BIL and IRA was largely focused on light-duty personal EVs. By contrast, investments in medium- and heavy-duty vehicles and urban transportation were limited.

EV manufacturing and supply chains received $8.3 billion in funding. The largest single allocations went to the Battery Materials Processing and Battery Manufacturing & Recycling Programs ($6 billion), strengthening domestic battery supply chains for EVs. Domestic Manufacturing Conversion grants ($2 billion) further supported downstream manufacturing of advanced EV technologies. Additional funding supported R&D for battery recycling and second-life applications. EV and battery manufacturing were also eligible for ATVM loans.

A notable new focus for DOE under BIL was the deployment of EV charging infrastructure. Charging infrastructure was eligible for $1.05 billion in DOE funding through the Renew America’s Schools program and the Energy Efficiency and Conservation Block Grant Program. DOE played a key role in the Joint Office of Energy and Transportation’s implementation of the National Electric Vehicle Infrastructure (NEVI) Formula Program, funded by DOT ($5 billion), and other charging programs. This marks a shift from DOE’s previous focus on developing vehicle technologies and fuels to a broader focus on all of the technology and infrastructure needs for widespread EV adoption.

Building Decarbonization and Efficiency received the most non-loan funding from BIL and IRA at $15.2 billion. The largest share of this funding, $12 billion, went towards deployment and affordability programs such as the Home Energy Efficiency Rebate Program, High-Efficiency Electric Home Rebate Program, and the Weatherization Assistance Program – all of which aim to reduce energy costs for low-income households by increasing the energy efficiency of their homes. Additional funding supported workforce training and the improvement of building codes. Little to no funding went to R&D and demonstration programs, signaling the relative maturity of building decarbonization and efficiency technologies compared to other sectors. District heating and cooling facilities are eligible for TEFP loans.

Carbon Management received a total of $11.6 billion. The majority of the funding went towards demonstration and deployment activities, of which $2.1 billion went towards CIFIA to support the deployment of transportation infrastructure, $2.5 billion went towards carbon storage validation and testing, $3.0 billion went towards carbon capture pilots and demonstrations, and $3.5 billion went towards the development of Regional Direct Air Capture (DAC) Hubs. Carbon management was also eligible for loans from the Title 17 programs.

Loans

DOE’s loan programs operate differently from the way authorizations and appropriations work for traditional assistance programs, which is why they are not included in the chart above. These programs receive both a certain amount of loan authority, which sets limits on the size of their portfolios, and appropriations for program administration and credit subsidies, which allow the office to provide low-cost financing. The IRA appropriated $13.8 billion total for these four programs and provided an additional $310 billion in loan authority for Title 1703, Title 1706, and TEFP. CIFIA was established in the IRA without a cap on its loan authority. The IRA also repealed the cap on ATVM’s loan authority, which remains uncapped.2
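The interaction between loan authority and credit subsidy appropriations can be sketched with simple arithmetic. The example below is a simplified illustration, not DOE's actual budgeting method: it assumes the subsidy cost of a loan is its principal times a single subsidy rate, ignores administrative costs, and uses hypothetical dollar figures and a hypothetical function name.

```python
from typing import Optional


def supportable_lending(
    subsidy_appropriation: float,
    subsidy_rate: float,
    loan_authority: Optional[float] = None,
) -> float:
    """Largest loan volume a program can back under two separate ceilings.

    Assumption: the credit subsidy cost of a loan is principal * subsidy_rate,
    so appropriations support subsidy_appropriation / subsidy_rate of lending.
    Loan authority, when capped, is an independent limit on portfolio size.
    """
    if not 0 < subsidy_rate <= 1:
        raise ValueError("subsidy_rate must be in (0, 1]")
    by_subsidy = subsidy_appropriation / subsidy_rate
    if loan_authority is None:  # uncapped program
        return by_subsidy
    return min(by_subsidy, loan_authority)


# Hypothetical program: $2B in subsidy appropriations at a 10% subsidy rate.
uncapped = supportable_lending(2e9, 0.10)
capped = supportable_lending(2e9, 0.10, loan_authority=15e9)
print(f"uncapped: ${uncapped / 1e9:.1f}B, capped: ${capped / 1e9:.1f}B")
```

In this sketch, an uncapped program is limited only by how far its subsidy appropriations stretch, while a capped program is bound by whichever ceiling is lower.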

During the four years of the Biden administration, the Loan Programs Office (LPO), now renamed the Office of Energy Dominance Financing (EDF), issued a total of 24 loans and 28 conditional commitments, worth over $100 billion in total. Energy storage, battery manufacturing, clean power, and the grid received the greatest number of loans and conditional commitments, while nuclear energy, carbon management, and non-battery, non-EV manufacturing received the least. No loans were issued for CIFIA, which is why that program is not shown in the following figures.

Program Design & Implementation

Flexible Contracting Mechanisms: Grants vs. Other Transactions

The majority of BIL and IRA funding (excluding loans) was implemented in the form of grants and cooperative agreements governed by 2 CFR 200 and 2 CFR 910. Even for programs for which the legislation did not specify the exact type of assistance mechanism that DOE should use (i.e., unspecified or “financial assistance”), the agency largely defaulted to those grants and cooperative agreements. One argument for this approach was that program officers and contracting officers are trained and experienced in using these mechanisms, which may have helped programs deploy faster.

However, these grants were originally designed for R&D programs and faced some drawbacks when used for demonstration and deployment programs. 2 CFR 200 and 2 CFR 910 are almost 200 pages long, requiring extensive compliance that smaller organizations and organizations new to federal applications may not be equipped to navigate. Additionally, some terms and conditions required by those rules (e.g., for intellectual property, real property, and program income) were not compatible with private sector needs for demonstration and commercial-scale projects. Most consequentially, they require a termination for convenience clause, which allows the government to cancel an award without providing a reason. The Trump administration is now using that clause to terminate awards.

Alternatively, DOE could have more frequently used its Other Transaction Authority (OTA) to enter into contracts outside the 2 CFR regulations, allowing the agency to negotiate contracts more like the private sector would, developing terms and conditions as they make sense for the specific purpose of each agreement. This can enable DOE to design and implement more creative arrangements, such as for demand-pull or market-shaping mechanisms. DOE could have also leveraged OTs to make process improvements, rethink the traditional solicitation and evaluation process, and potentially accelerate implementation.3

DOE 3.0 missed a major opportunity to leverage these benefits of OTs. The few exceptions were the Hydrogen Demand Initiative (H2DI), the Advanced Reactor Demonstration Program, and Partnership Intermediary Agreements. Towards the end of the Biden administration, DOE discussed transitioning some awards from the Office of Clean Energy Demonstrations (OCED) to OT agreements, but did not get a chance to follow through before the presidential transition.4

DOE 4.0 should pick up where DOE 3.0 left off and deploy OTs more broadly among demonstration and deployment programs to overcome the challenges of traditional financial assistance regulations and processes. Congress should ensure that future authorizing legislation is designed to enable this flexibility – for example, by not specifying the type of assistance that DOE should use to implement new programs.

Flexible Funding

BIL and IRA authorized and appropriated funding for a wide range of programs, many with very specific goals and eligible uses. That approach allows Congress to provide detailed direction to DOE on legislators’ priorities. However, DOE should also be able to respond dynamically to industries and markets as they develop. For example, when BIL and IRA were being developed, next-generation geothermal technologies were still quite nascent and received very little funding from these bills. Within two years though, the technology rapidly advanced, thanks to the success of the first few demonstration projects, and now shows enormous potential for meeting clean, firm energy demand, but DOE has limited funding available to support the industry.

In future legislation, Congress should consider establishing a few flexible funding programs that would give DOE a greater range of options to support the development of energy technologies and infrastructure as the agency’s experts know best. This could look like a pooled pot of funding with broad authority for DOE to use across technologies and/or activities, such as a single fund for demonstration and deployment activities broadly, or a single fund for grid infrastructure needs. If Congress is wary about this, legislators could start with creating flexible funding programs designed to fit within the scope of a single DOE office, before testing programs that cross multiple offices, which may come with intra-agency coordination challenges.

Program Design: Regional Hubs

The Hydrogen Hubs and Regional Direct Air Capture (DAC) Hubs were a new type of program established by BIL, designed to fund clusters of projects located in different regions rather than individual, unrelated projects. BIL invested $8 billion and $3.5 billion in these programs, respectively, and they made some of the largest awards by dollar amount – on the order of $1 billion per award – out of all of the BIL and IRA programs.

The hub approach aimed to foster an industrial ecosystem, including not only multiple projects aiming to deploy the technology, but also future suppliers, offtakers, labor organizations, academic partners, and state, local, and Tribal governments. Concentrated regional investment and greater coordination would not only accelerate commercialization of hydrogen and DAC technologies but also help distribute the benefits of new clean energy industries across the nation.

Due to the ambitious size and complexity of their goals, the Hydrogen Hubs and DAC Hubs required, and still require, a long timeline to develop. The structure and oversight DOE applied to the hub development process also extended timelines further. When the Trump administration began re-evaluating Biden-era programs and Congress started looking for funds to rescind, these two programs became appealing targets because of the large amount of funding they held and the lack of on-the-ground deployment progress – even though that was to be expected based on the program timeline.5

Project cancellations and funding rescissions are a massive waste of both federal and private sector resources. In the future, before creating any other large-scale programs modeled on the Hydrogen Hubs and DAC Hubs, policymakers should first determine whether there is long-term bipartisan commitment to the program’s goals to avoid the possibility that a change of administration will jeopardize the program. If that commitment isn’t guaranteed, this model may simply be too risky to use; other types of assistance may be easier to implement or more resilient to changes in administration.

Alternative regional hub models that Congress and DOE could consider are the CHIPS and Science Act’s Regional Technology and Innovation Hubs and NSF Engines. These programs had a much lower level of ambition, providing awards – on the order of tens of millions instead of one billion – to seed early-stage innovation, build a research ecosystem, and support workforce development, rather than deploying specific technologies.

Program Design: Demand-Pull

Demand-pull mechanisms have emerged in conversations between FAS and former DOE staff as an underutilized but promising tool for enabling the scaling and deployment of clean energy technologies and large-scale infrastructure projects. Confidence in long-term offtake is a requirement for private lenders to provide financing at a viable rate for projects. DOE can help provide that certainty through a wide range of tools, including purchase commitments and capacity contracts, contracts-for-difference, and other financial arrangements.

By unlocking private sector investment, demand-pull mechanisms can reduce or eliminate the need for DOE to provide additional financing for project construction. However, public sector funding is still useful for pre-construction stages of project development, such as planning, siting, and permitting, which can be hard to get private sector financing for when other risks to a long-term revenue model have not been addressed yet.

There are three primary use cases for demand-pull mechanisms: building shared infrastructure, demonstrating innovative technologies, and expanding industrial capacity.

Shared infrastructure projects require a large number of customers and can sometimes struggle with securing them: customers are afraid to commit without the developer demonstrating that they’ve secured other customers first. DOE can help address this challenge by serving as an anchor customer for these projects and helping attract additional customers. This also makes it easier to finance the project.

A successful example of this from BIL is the Transmission Facilitation Program, which authorized DOE to purchase up to 50% of the planned capacity of large-scale transmission lines for up to 40 years. Once the transmission line is built, DOE can then sell capacity contracts to actual customers who need to use the transmission line and recoup the agency’s investment. This approach could be used for other types of shared infrastructure, such as hydrogen or carbon dioxide transportation, or even large clean, firm power plants (e.g., nuclear) for their generation capacity.
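As a rough illustration of the anchor-customer arithmetic described above, the sketch below caps DOE's purchase at 50% of planned capacity and 40 years, then tracks how much of that position has been resold. The class name, the 1,000 MW line, and the resale amounts are hypothetical; only the 50% and 40-year limits come from the program description.

```python
from dataclasses import dataclass

MAX_CAPACITY_SHARE = 0.50  # program limit described in the text
MAX_TERM_YEARS = 40        # program limit described in the text


@dataclass
class AnchorCapacityContract:
    """Hypothetical model of DOE acting as anchor customer on a shared line."""
    line_capacity_mw: float
    purchased_mw: float
    term_years: int
    resold_mw: float = 0.0

    def __post_init__(self) -> None:
        if self.purchased_mw > MAX_CAPACITY_SHARE * self.line_capacity_mw:
            raise ValueError("purchase exceeds 50% of planned capacity")
        if self.term_years > MAX_TERM_YEARS:
            raise ValueError("term exceeds 40 years")

    @property
    def remaining_mw(self) -> float:
        """Capacity DOE still holds and has not yet resold."""
        return self.purchased_mw - self.resold_mw

    def resell(self, mw: float) -> None:
        """Resell part of DOE's position to a customer that needs the line."""
        if mw <= 0 or mw > self.remaining_mw:
            raise ValueError("resale must be positive and within DOE's position")
        self.resold_mw += mw


contract = AnchorCapacityContract(line_capacity_mw=1000, purchased_mw=400, term_years=30)
contract.resell(150)
contract.resell(100)
print(contract.remaining_mw)  # 150.0 MW still held by DOE
```

Each resale shrinks DOE's exposure, which is how the agency would recoup its investment once the line is built and real customers materialize.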

First-of-a-kind projects often struggle to secure offtakers due to the unproven nature of their technology and the lack of a pre-existing market. For example, H2DI was designed to complement the Hydrogen Hubs program by directly supporting demand for select hydrogen producers and also helping establish a transparent strike price for the nascent market that would benefit all hydrogen producers. Other demonstration programs (e.g. IDP) would have also benefited from DOE support for demand and market formation.

Lastly, the development of new industrial capacity for producing energy technologies and their inputs can also face demand challenges because while there may be a pre-existing global market, the domestic market may be small or nonexistent, and existing offtakers may not be willing to reroute their supply chains without market or policy pressure to do so. This was most obvious with the critical minerals and battery supply chain projects that DOE tried to support.

One successful model from the IRA was the HALEU availability program. DOE set up indefinite delivery, indefinite quantity contracts with companies developing HALEU production capacity and set aside $1 billion to procure HALEU from the five fastest movers. The purchase commitment created demand certainty, while the competitive model incentivized faster project development and ensured that DOE’s funding would only go towards the most viable projects. More programs like this would be transformative for domestic supply chain development.

In designing demand-side support programs for these latter two categories, DOE must tailor the programs to the unique challenges of different technologies or commodities, and whether or not there are additional goals of domestic market formation and/or market stabilization. For example, auctions are a great tool for price discovery, while contracts-for-difference can help projects hedge against price volatility and overcome domestic price premiums.
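To make the hedging role of a contract-for-difference concrete, here is a minimal sketch of the settlement arithmetic. It assumes a two-sided contract in which the producer is topped up when the market price falls below the strike price and pays back the difference when the market price rises above it; the function names and all prices and volumes are hypothetical.

```python
def cfd_payment(strike_price: float, market_price: float, volume: float) -> float:
    """Payment to the producer under a two-sided contract-for-difference.

    Positive: the counterparty tops the producer up to the strike price.
    Negative: the producer pays back revenue earned above the strike price.
    """
    return (strike_price - market_price) * volume


def effective_revenue(strike_price: float, market_price: float, volume: float) -> float:
    """Market sales plus the CfD settlement for the same volume."""
    return market_price * volume + cfd_payment(strike_price, market_price, volume)


# Hypothetical numbers: strike of 100 per unit, 1,000 units sold per period.
for market in (70.0, 100.0, 130.0):
    payment = cfd_payment(100.0, market, 1000)
    revenue = effective_revenue(100.0, market, 1000)
    print(f"market={market}: payment={payment}, revenue={revenue}")
```

Whatever the market price does, the producer's effective revenue in this sketch stays at the strike price times volume, which is the certainty lenders look for. A one-sided design, where the producer keeps any upside, would change only the negative branch.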

There are also double-sided market maker programs where DOE serves as an intermediary between producers and buyers, entering into long-term offtake commitments with project developers up front to provide demand certainty, and then reselling the product to buyers on a shorter-term basis when the project comes online. This helps make supply chain connections and address mismatches between project developer and buyer timelines. For example, for low-carbon cement and concrete, buyers typically procure building materials on a short-term basis as needed for each project, but developers of first-of-a-kind production facilities require long-term offtake commitments in order to secure project financing.

Authorizing language and/or appropriations can be a barrier to DOE using demand-pull mechanisms. To address this issue, Congress should factor the following considerations into the design of legislation:

- Flexible Authorities. Due to the variety of demand-pull mechanisms and the need to tailor them to the unique market challenges of different technologies or commodities, they are best implemented using OT agreements. Statutory language that prescribes the exact type(s) of assistance (e.g., grants) for a program can prevent DOE from using demand-pull. Instead, Congress should provide clear goals for a program to achieve and leave DOE with the flexibility to determine the best type of assistance mechanism.

- Budget Scoring and Timelines. Demand-pull mechanisms often involve multi-year advance commitments of funding, but the exact amount and timing of transactions may be uncertain, since it is conditional upon project performance and overall market conditions (e.g. contracts-for-difference payments are based on the market price at the time of the transaction). This results in budget scoring issues. Legally binding commitments of money can typically only be made if the agency has enough funding to obligate the full amount of the contract when it is signed, even if that funding probably won’t be paid out until much later.6 This results in the need for a significant amount of upfront funding, which can be difficult to obtain from Congress, and long timelines before the outcome of that funding is fully realized, which can make it difficult to manage congressional expectations. These long timelines also mean that no-year funding is ideal for DOE to be able to run demand-pull programs without the funding expiring.7

- Revenue Management. Some demand-pull mechanisms are designed with the potential for revenue generation, so legislation should ideally be designed to include the authorization of a revolving fund to allow revenue to be reused for program costs. Alternatively, DOE may contract with an external entity to manage the program funds, as it did with H2DI, so that the revenue can stay with the partner entity and be reused.
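The budget scoring constraint in the list above can be illustrated with a short calculation. This sketch assumes, for simplicity, that the amount to be obligated at signing equals the contract's maximum annual payment times its length in years; the function names and dollar figures are hypothetical.

```python
def upfront_obligation(max_annual_payment: float, contract_years: int) -> float:
    """Funding that must be on hand at signing: the full contract ceiling."""
    return max_annual_payment * contract_years


def can_sign(available_budget: float, max_annual_payment: float, contract_years: int) -> bool:
    """A binding commitment is possible only if the full amount can be obligated."""
    return available_budget >= upfront_obligation(max_annual_payment, contract_years)


# Hypothetical program: $500M available, payments capped at $40M per year.
budget = 500e6
print(can_sign(budget, 40e6, 10))  # ceiling of $400M fits within the budget
print(can_sign(budget, 40e6, 15))  # ceiling of $600M does not
```

Even if actual payments in any single year are far below the cap, the whole ceiling counts against the budget on day one, which is why longer contracts quickly require large upfront appropriations.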

Program Design: Prizes

Unlike most financial assistance, which operates on a cost-reimbursement basis and requires cost-share, prizes reward performance and are awarded after activities are completed and criteria have been met. This means there are no strings attached to the funding and no IP requirements, making these programs easier for applicants to work with.8 Prizes are also of a fixed amount, which incentivizes innovators to find least-cost solutions in order to maximize revenue from the award. On the flip side, innovators are responsible for any cost overruns, and DOE is not required to shoulder that risk.

In the past, DOE has sometimes misused prizes as a way to reach potential applicants that struggle with the application process for traditional assistance. It’s important to keep in mind the best use cases for prize programs. For example, prize programs rely on clear milestones, but are agnostic on the approach, making them great for interdisciplinary innovation. They can be beneficial for incentivizing new innovators to get involved with problem areas that don’t have many pre-existing solvers. They are also well-suited for small dollar amount awards that otherwise may not be worth the administrative overhead, since the overhead costs for prize programs are lower than traditional assistance programs once they have been designed.

Moving forward, DOE should keep in mind best practices for designing equitable prize programs. Prize programs should ideally be designed as stage-gated competitions with incremental prize payments for each phase, rather than one big payment at the end, so that innovators with fewer financial resources can participate. For example, the first stage could be the submission of a whitepaper with a proposed plan for developing and testing the technology, then the second stage could be lab work, and so on. Participants would be whittled down between stages to home in on the most competitive projects.
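A stage-gated prize structure like the one described above can be sketched as a simple loop that pays each stage's winners and narrows the field. Everything specific here is hypothetical: the third "field test" stage, the prize amounts, the number of teams advancing, and the judged scores.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    prize_per_winner: float  # fixed payment to each team that passes the stage
    winners: int             # number of teams that advance


def run_competition(scores: dict[str, list[float]], stages: list[Stage]) -> dict[str, float]:
    """Pay incremental prizes stage by stage, narrowing the field each time.

    scores[team][i] is the team's (hypothetical) judged score in stage i.
    """
    payouts = {team: 0.0 for team in scores}
    remaining = list(scores)
    for i, stage in enumerate(stages):
        ranked = sorted(remaining, key=lambda team: scores[team][i], reverse=True)
        remaining = ranked[: stage.winners]
        for team in remaining:
            payouts[team] += stage.prize_per_winner
    return payouts


stages = [
    Stage("whitepaper", 50_000, winners=3),
    Stage("lab work", 200_000, winners=2),
    Stage("field test", 1_000_000, winners=1),
]
scores = {
    "A": [9, 7, 8],
    "B": [8, 9, 9],
    "C": [7, 6, 5],
    "D": [5, 8, 9],
}
payouts = run_competition(scores, stages)
print(payouts)
```

In this run, team C still receives the whitepaper-stage payment despite being eliminated at the lab stage, which is the point of incremental payments: teams with fewer resources are compensated for early work rather than carrying all costs until a single final award.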

Program Design: Loans

DOE 4.0’s loan programs could be improved by setting clearer expectations on risk, clearer guidance on State Energy Financing Institution (SEFI) projects, and a strategy for using additional tools such as equity.

Risk Tolerance. Discrepancies between statutory language and congressional oversight for DOE’s loan programs have historically made it difficult for the agency to determine the right balance of risk. For example, Title 1703 is designed by legislation to fund innovative, higher-risk, hard infrastructure projects that the private sector is typically reluctant to fund. A high-risk, high-reward program should, by nature, be allowed to have some failed projects and still be considered a success. However, Congress has historically been extremely critical of any defaulted loans, making DOE hesitant to use Title 1703 and ATVM to its full potential.

DOE 3.0 made some attempts to improve communications on its approach to risk management, but the agency could do more to communicate the success of its loan programs. Congressional authorizers should help the agency by building risk tolerance into the statutes and budgets of DOE’s loan programs and by better managing the expectations of oversight members.

State Energy Financing Institution (SEFI) Projects. Another area of reform that DOE 4.0 should tackle is the carveout for SEFI-supported projects under Title 17. Authorized by BIL, the carveout allows DOE to finance any energy project that also receives “meaningful financial support” from a SEFI, such as a state energy office or green bank. However, ambiguity in the statute behind this new carveout has caused confusion among states about how exactly to partner with DOE’s loan program. What counts as meaningful financial support? What qualifies as a SEFI? To answer these questions, either DOE 4.0 should issue model SEFI guidance or Congress should amend the statute with clear definitions.

Equity and Other Financing Tools. The Trump administration’s restructuring of the Lithium Americas Thacker Pass loan to include an equity warrant, which gives DOE the right to acquire equity in the company at a set price in the future, has raised questions about what DOE’s role should be if it were to become an equity owner in a company and what guardrails and visibility are needed in such a scenario.9 Policymakers may also want to consider the risks and benefits of expanding DOE’s loan program authorities to include direct equity investments and other financing tools that agencies like the International Development Finance Corporation (DFC) have access to.10

Program Design: Technical Assistance

DOE 4.0 should expand its technical assistance offerings in three primary ways: technical advising and verification, navigating federal funding, and talent and workforce needs.

Technical Advising and Verification. DOE’s in-house scientific and engineering expertise is a major draw for funding applicants. For example, according to FAS conversations with former agency staff, the project developers behind Vogtle Units 3 and 4, which received a loan guarantee from DOE, would seek advice from LPO engineers when they had engineering questions. Private investors, who may lack the expertise needed for technical due diligence, often use DOE awards as a proxy for assessing project risk. As a result, some project developers will apply for DOE funding to prove their credibility to private financiers and negotiate lower financing rates.

In the face of potential budget cuts, DOE 4.0 could leverage this strength by offering project certifications that would entail the same technical support and verification as a demonstration award or loan, without the funding support. This would send a similar market signal to private investors at a much lower cost to DOE, limited mainly to staff time. And since DOE would not be taking on any project risk, the application and negotiation process could also be simplified and streamlined to align better with private-sector timelines.

Navigating Federal Funding. DOE should dedicate more resources to conducting outreach to underserved communities, small businesses, innovators, and new applicants about funding opportunities and to shepherding them through the application process. For example, despite being aware of available funding opportunities, some Native American tribal organizations in Alaska were unable to pursue them because they lacked the bandwidth or expertise to participate in resource-intensive (and oftentimes confusing) application processes, and the award sizes were too small to justify the cost of hiring external private consultants for support. Community Navigator Programs and other forms of technical assistance could help communities overcome these barriers to accessing federal support. PIAs can also help reach small businesses and new applicants and encourage them to apply for programs.

Talent and Workforce Needs. Through the Energy Innovator Fellowship, DOE has had success placing talent at state energy offices and other critical energy organizations, such as public utility commissions, to embed expertise in under-resourced offices. DOE should consider expanding this program or establishing new programs to place experts at other institutions, such as grid operators, investor-owned utilities, and local governments, to advise and support them in adopting new energy technologies and accelerating infrastructure deployment.

Program Design: Community Benefit Plans

For all of its demonstration and deployment programs, DOE 3.0 introduced a new requirement that awardees create community benefit plans (CBPs) to ensure that communities would share in the benefits of local clean energy projects. CBPs have been both lauded and criticized by community and labor organizations, which praised the intent of CBPs but expressed frustration over limited influence on companies’ plans and over allowable cost limits that constrained what could be included in awards. Where CBPs were most effective, they encouraged developers to consider local communities and jobs, though this often required significant internal coordination to use DOE’s funding contracts as leverage. At the same time, CBPs were seen as an additional administrative burden on program implementation, contributing to delays. Under the Trump administration, CBPs will no longer be enforced and are no longer required for future funding opportunities.

DOE 4.0 presents an opportunity to restore and improve CBPs as a mechanism for both distributing the benefits of federally funded projects and improving project quality. To maximize impact, DOE 4.0 should focus on a smaller set of high-priority outcomes with clear, measurable success metrics. DOE 3.0’s broad mandate, which spanned jobs, justice, climate, and deployment across multiple programs, sometimes diluted effectiveness and created confusion for staff managing both program design and operations. In DOE 4.0, these outcomes should be closely linked to actual project success, whether by building social license to ease permitting or by supporting workforce development to train and retain workers, a link that developers themselves emphasized when aligning with program goals. Providing actionable guidance, including templates and real-world examples of successful community benefit plans, can further improve project outcomes. The advocacy community can help lay the groundwork for DOE 4.0 by documenting successful case studies and model agreement language. Congress could help by embedding key priorities in statute, providing clear, practical guidance that reflects DOE’s administrative capacity and enhances the likelihood of successful implementation.

Additionally, it is critical that future CBP mechanisms account for community preferences, including local prohibitions on certain technologies and other expressions of community priorities. By proactively respecting local concerns, DOE can foster trust and strengthen the long-term impact of projects. DOE 4.0 will also need to navigate tensions around labor preferences. While the department cannot explicitly require union labor, questions about labor practices may signal preferences that vary across states, including right-to-work contexts. This underscores the importance of sensitivity to local norms and expectations.

Where resources allow, DOE 4.0 should hire and dedicate staff with expertise in labor engagement and community partnerships to review applications and provide technical assistance, supporting applicants in navigating the CBP process and designing high-quality, community-centered projects. Technical assistance must be delivered carefully, however, to avoid both perceptions of bias and undue influence on the award selection process.

Lastly, clear and consistent guidance across DOE offices is essential. For example, applicants have reported a lack of clarity about what activities qualify as “allowable costs” in CBPs, and different offices have applied inconsistent standards. Establishing a unified, expansive approach to allowable costs, including activities that indirectly support clean energy workforce development (such as community child care programs), can unlock transformative opportunities for local communities. The same standardization should extend to other aspects of CBP requirements. In general, official guidance needs to find a better middle ground between the overly technical, lengthy documents and the vague webinars produced by DOE 3.0, so that applicants can ideally understand requirements without staff intervention.

Conclusion

Good program design is fundamental to engaging effectively with researchers, industry, state and local governments, and communities in order to realize the full potential of DOE funding. Though much of the real-world impact of BIL and IRA is yet to come, DOE can already begin learning from the challenges and successes of program design and implementation under the Biden administration. The recommendations in this report are just as applicable to the remaining BIL and IRA funding that DOE has yet to implement as they are to future programs. Moving forward, Congress has the opportunity to reconsider the way that programs are designed in future legislation, especially those targeting demonstration and deployment activities, and to make sure that DOE has clear direction and the right authorities and flexibility to maximize the impact of federal funding.

Acknowledgements

The authors would like to thank Arjun Krishnaswami for originating the idea of DOE 4.0 and for his insightful feedback throughout the development and execution of this project. The authors would also like to thank Kelly Fleming for her leadership of the clean energy team while she was at FAS. Additional gratitude goes to Claire Cody at Clean Tomorrow, Gene Rodrigues, Keith Boyea, Kyle Winslow, Raven Graf, and all the other individuals and organizations who helped inform this report by participating in workshops and interviews and by reviewing an earlier draft.

Appendix A. Acronyms

Appendix B. BIL and IRA Funding Distribution Methodology

The funding distribution heat map at the beginning of the report includes all of the BIL and IRA programs with funding authorized and/or appropriated directly to DOE, excluding loan programs. The following were not included in this table:

- Loan programs, which are funded differently than traditional programs;

- Tax credits that DOE helped design (e.g., 45X), which are also funded through a different mechanism; and

- Programs implemented by DOE, but funded by other agencies’ appropriations, such as the Methane Emissions Reduction Program funded by the Environmental Protection Agency.

Programs were tagged according to their sector or technology area, their activity area, and type of assistance based on key words in their statutory language. Programs could be tagged with multiple sectors/technologies, activity areas, and/or types of assistance.

To determine the amount of funding for each sector/technology and activity area combination, all of the programs with the corresponding tags were included in the sum. Because of this duplicative counting, the sum of the dollar amounts in the table exceeds the total amount of funding for all of these programs. Sector/technology totals were calculated without this duplication, which is why those amounts are less than what one would obtain by summing all of the activity area amounts for a sector/technology.
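The counting rule described above can be sketched in a few lines of Python. This is a minimal illustration rather than the tooling actually used for the report: the program names, tags, and dollar figures below are hypothetical, and the sketch assumes that a sector/technology total counts each program once per sector it is tagged with.

```python
from collections import defaultdict

# Hypothetical programs and amounts (in $ millions), invented for illustration only.
programs = [
    {"name": "Program A", "funding": 100,
     "sectors": {"Grid Infrastructure"},
     "activities": {"Demonstration", "Deployment"}},
    {"name": "Program B", "funding": 50,
     "sectors": {"Grid Infrastructure", "Critical Minerals"},
     "activities": {"R&D"}},
    {"name": "Program C", "funding": 30,
     "sectors": {"Critical Minerals"},
     "activities": {"R&D", "Demonstration"}},
]


def heat_map_cells(programs):
    """Sum each program's full funding into every (sector, activity) pair it is tagged with."""
    cells = defaultdict(int)
    for program in programs:
        for sector in program["sectors"]:
            for activity in program["activities"]:
                cells[(sector, activity)] += program["funding"]
    return dict(cells)


def sector_totals(programs):
    """Count each program once per sector, regardless of how many activity tags it has."""
    totals = defaultdict(int)
    for program in programs:
        for sector in program["sectors"]:
            totals[sector] += program["funding"]
    return dict(totals)


cells = heat_map_cells(programs)
totals = sector_totals(programs)

# Summing the activity cells for one sector double-counts Program A,
# which carries two activity tags.
grid_cell_sum = sum(
    amount for (sector, _), amount in cells.items()
    if sector == "Grid Infrastructure"
)
print(grid_cell_sum, totals["Grid Infrastructure"])
```

In this made-up example, the activity cells for grid infrastructure sum to more than the sector total because Program A is counted under both of its activity tags, which mirrors the gap between cell sums and sector/technology totals described above.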

Activity area categories:

- “R&D” includes funding for programs covering research, development, and/or pre-commercial pilots.

- “Demonstration” includes funding for demonstration and commercial pilot programs using innovative technologies.

- “Deployment” includes funding for project planning, financing, and offtake; siting and permitting; and the development of standards or codes.

- “Workforce” includes educational outreach, training programs, apprenticeships, and other workforce development programs.

- “Energy Access and Affordability” refers to funding to lower the cost of energy for low-income households and to support the development or improvement of energy infrastructure for rural and remote areas and tribal nations. Some of these programs involve multiple sectors/technologies (see below).

- “National Labs Infrastructure” refers to programs funding construction and facility upgrades at national labs.

Sector/technology categories:

- “Grid infrastructure” refers to programs focused on any part of the transmission network between power generation facilities and consumer households or facilities, plus the planning, operations, and management of the grid and the interconnection of new generation or loads to the grid. These were primarily programs implemented by the former Grid Deployment Office or the Office of Electricity.

- “Power: Technology Neutral” refers to programs supporting power generation or storage for which any zero-emission generation technology was eligible.

- “Power: Solar Energy”, “Power: Wind Energy”, “Power: Hydro and Marine Energy”, “Power: Geothermal Energy”, “Power: Nuclear Energy”, and “Power: Energy Storage Systems” refer to programs focused on the underlying technologies and on the demonstration and deployment of power generation and storage facilities using these technologies. Demonstration and deployment programs for the manufacturing of these energy technologies and their input materials and components (e.g., battery manufacturing or HALEU fuel) were not included in these categories, but rather under the “Manufacturing and Supply Chains” category.

- “EV Charging Infrastructure” refers to programs supporting charging infrastructure for EVs of any weight class.

- “Critical Minerals” refers to programs supporting the mining, processing, recycling, and recovery from non-traditional sources of materials on either DOE’s Critical Materials List or the U.S. Geological Survey’s Critical Minerals List.

- “Manufacturing and Supply Chains” refers to programs supporting the manufacturing of energy technologies and their input components and materials, as well as advanced manufacturing technologies in general. This category can overlap with both “Critical Minerals” (e.g., the Battery Materials Processing Program) and “Industrial Decarbonization & Efficiency” (e.g., the Advanced Energy Manufacturing & Recycling Grants Program).

- “Industrial Decarbonization & Efficiency” refers to programs supporting the reduction or elimination of carbon emissions from manufacturing industries.