Creating Auditing Tools for AI Equity

The unregulated use of algorithmic decision-making systems (ADS)—systems that crunch large amounts of personal data and derive relationships between data points—has negatively affected millions of Americans. These systems impact equitable access to education, housing, employment, and healthcare, with life-altering effects. For example, commercial algorithms used to guide health decisions for approximately 200 million people in the United States each year were found to systematically discriminate against Black patients, reducing, by more than half, the number of Black patients who were identified as needing extra care.

One way to combat algorithmic harm is by conducting system audits, yet there are currently no standards for auditing AI systems at the scale necessary to ensure that they operate legally, safely, and in the public interest. According to one research study examining the ecosystem of AI audits, only one percent of AI auditors believe that current regulation is sufficient.

To address this problem, the National Institute of Standards and Technology (NIST) should invest in the development of comprehensive AI auditing tools, and federal agencies with the charge of protecting civil rights and liberties should collaborate with NIST to develop these tools and push for comprehensive system audits.

These auditing tools would help the enforcement arms of these federal agencies save time and money while fulfilling their statutory duties. Additionally, there is a pressing need to develop these tools now, with Executive Order 13985 instructing agencies to “focus their civil rights authorities and offices on emerging threats, such as algorithmic discrimination in automated technology.”

Challenge and Opportunity

The use of AI systems across all aspects of life has become commonplace as a way to improve decision-making and automate routine tasks. However, their unchecked use can perpetuate historical inequities, such as discrimination and bias, while also potentially violating American civil rights.

Algorithmic decision-making systems are often used in prioritization, classification, association, and filtering tasks in a way that is heavily automated. ADS become a threat when people uncritically rely on the outputs of a system, use them as a replacement for human decision-making, or use systems with no knowledge of how they were developed. These systems, while extremely useful and cost-saving in many circumstances, must be created in a way that is equitable and secure.

Ensuring the legal and safe use of ADS begins with recognizing the challenges that the federal government faces. On the one hand, the government wants to avoid devoting excessive resources to managing these systems. With new AI systems released every day, it is becoming unreasonable to oversee every system closely. On the other hand, we cannot blindly trust all developers and users to make appropriate choices with ADS.

This is where tools for the AI development lifecycle come into play, offering a third alternative between constant monitoring and blind trust. By implementing auditing tools and signatory practices, AI developers will be able to demonstrate compliance with preexisting and well-defined standards while enhancing the security and equity of their systems.

Due to the extensive scope and diverse applications of AI systems, it would be difficult for the government to create a centralized body to oversee all systems or to demand that each agency develop solutions on its own. Instead, some responsibility should be shifted to AI developers and users, as they possess the specialized knowledge and motivation to maintain properly functioning systems. This allows the enforcement arms of federal agencies tasked with protecting the public to focus on what they do best: safeguarding citizens’ civil rights and liberties.

Plan of Action

To ensure security and verification throughout the AI development lifecycle, a suite of auditing tools is necessary. These tools should help enable the outcomes we care about: fairness, equity, and legality. The results of these audits should be reported, for example, in an immutable ledger that is accessible only to authorized developers and enforcement bodies, or through a verifiable code-signing mechanism. We leave the specifics of reporting and documenting the process to the stakeholders involved, as each agency may have different reporting structures and needs. Other possible options, such as manual audits or audits conducted without the use of tools, may not provide the same level of efficiency, scalability, transparency, accuracy, or security.
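One way to picture the ledger option is as a hash chain, where each audit record stores the hash of the record before it. The sketch below is illustrative only: the record fields (`stage`, `audit`, `passed`) and the function names are assumptions, not part of any NIST tool, and access control for authorized parties is not shown.

```python
import hashlib
import json


def record_hash(body: dict) -> str:
    """Hash a record deterministically (sorted keys give a stable encoding)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(ledger: list, audit_result: dict) -> dict:
    """Append an audit result, linking it to the hash of the previous entry."""
    prev_hash = ledger[-1]["hash"] if ledger else "0" * 64
    body = {"index": len(ledger), "prev_hash": prev_hash, "result": audit_result}
    entry = dict(body, hash=record_hash(body))
    ledger.append(entry)
    return entry


def verify_ledger(ledger: list) -> bool:
    """Return True only if every entry still matches its hash and its link."""
    prev_hash = "0" * 64
    for index, entry in enumerate(ledger):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["index"] != index or entry["prev_hash"] != prev_hash:
            return False
        if record_hash(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical audit records, for illustration only.
ledger = []
append_entry(ledger, {"stage": "data", "audit": "representation check", "passed": True})
append_entry(ledger, {"stage": "model", "audit": "fairness metrics", "passed": True})
print(verify_ledger(ledger))  # True

ledger[0]["result"]["passed"] = False  # simulate tampering with an old record
print(verify_ledger(ledger))  # False
```

Because each entry commits to the one before it, editing an earlier record invalidates the chain. A production ledger would also need to guard against truncation of the most recent entries, which this sketch does not detect.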

The federal government’s role is to provide the necessary tools and processes for self-regulatory practices. Heavy-handed regulations or excessive government oversight are not well-received in the tech industry, which argues that they tend to stifle innovation and competition. AI developers also have concerns about safeguarding their proprietary information and users’ personal data, particularly in light of data protection laws.

Auditing tools provide a solution to this challenge by enabling AI developers to share and report information in a transparent manner while still protecting sensitive information. This allows for a balance between transparency and privacy, providing the necessary trust for a self-regulating ecosystem.

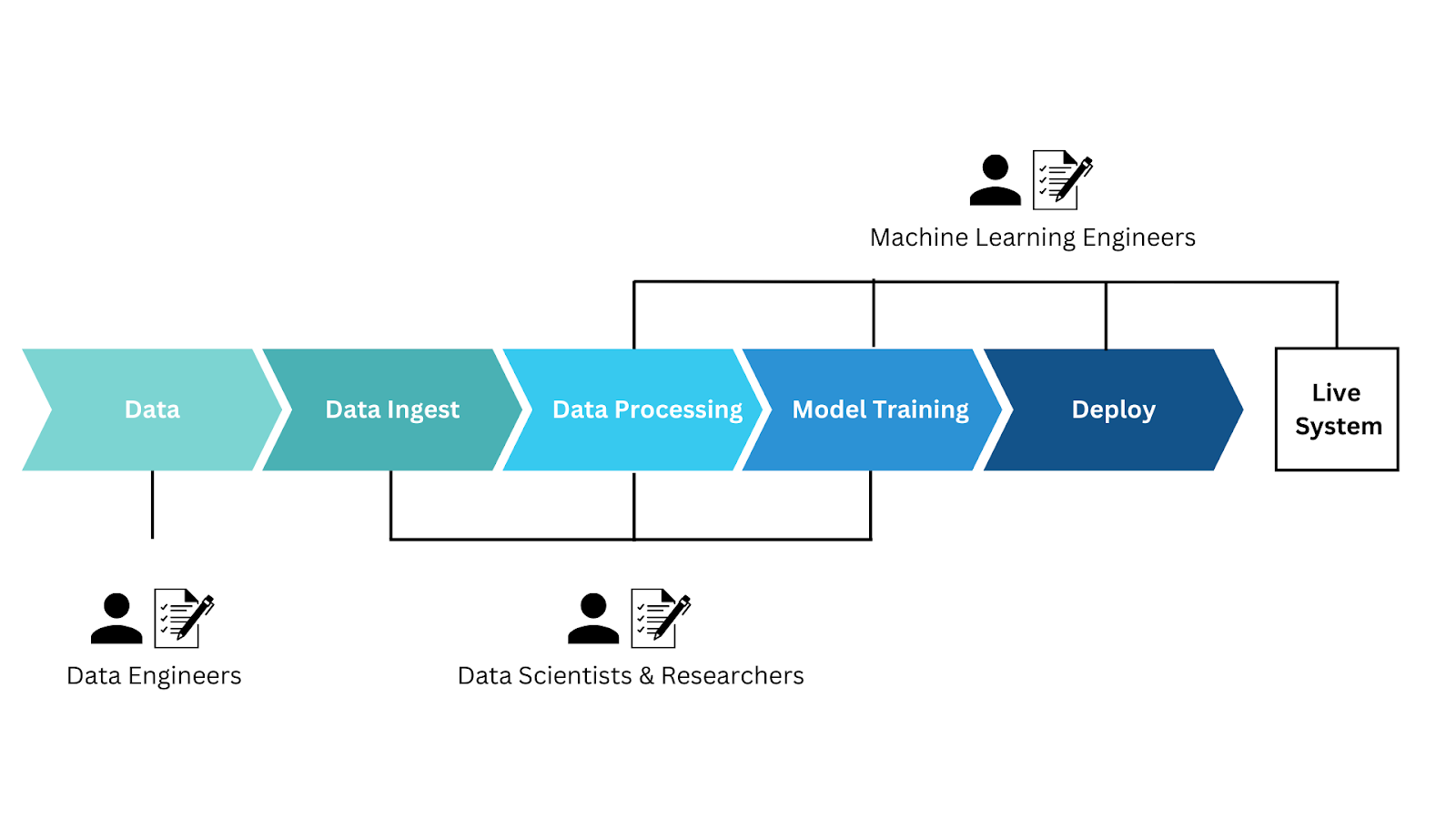

A general machine learning lifecycle, with examples of which system developers at each stage would be responsible for signing off on the use of the security and equity tools. These developers represent companies, teams, or individuals.

The equity tool and process, funded and developed by government agencies such as NIST, would consist of a combination of (1) AI auditing tools for security and fairness (which could be based on or incorporate open source tools such as AI Fairness 360 and the Adversarial Robustness Toolbox), and (2) a standardized process and guidance for integrating these checks (which could be based on or incorporate guidance such as the U.S. Government Accountability Office’s Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities).
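To make the fairness-auditing component concrete, the sketch below computes two widely used group metrics, statistical parity difference and disparate impact ratio, in plain Python. Toolkits such as AI Fairness 360 report metrics by these names, but this sketch does not use that library's API; the function names, group labels, and toy data are assumptions chosen for illustration.

```python
from collections import defaultdict


def selection_rates(outcomes, groups):
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable) per group."""
    favorable = defaultdict(int)
    total = defaultdict(int)
    for outcome, group in zip(outcomes, groups):
        total[group] += 1
        favorable[group] += outcome
    return {g: favorable[g] / total[g] for g in total}


def statistical_parity_difference(rates, unprivileged, privileged):
    """Difference in selection rates; 0 means both groups are selected equally often."""
    return rates[unprivileged] - rates[privileged]


def disparate_impact_ratio(rates, unprivileged, privileged):
    """Ratio of selection rates; 1 means both groups are selected equally often."""
    if rates[privileged] == 0:
        raise ValueError("privileged group has no favorable outcomes")
    return rates[unprivileged] / rates[privileged]


# Hypothetical toy data: ten decisions across two groups, "a" and "b".
outcomes = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["a"] * 5 + ["b"] * 5

rates = selection_rates(outcomes, groups)
print(rates)
print(round(statistical_parity_difference(rates, "b", "a"), 2))
print(round(disparate_impact_ratio(rates, "b", "a"), 2))
```

Which metric is appropriate, and what value counts as acceptable, depends on the legal standard an agency enforces; that mapping is the kind of guidance the proposal asks NIST and agencies to develop.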

Dioptra, a recent effort between NIST and the National Cybersecurity Center of Excellence (NCCoE) to build machine learning testbeds for security and robustness, is an excellent example of the type of lifecycle management application that would ideally be developed. Failure to protect civil rights and ensure equitable outcomes must be treated as seriously as security flaws, as both impact our national security and quality of life.

Equity considerations should be applied across the entire lifecycle; training data is not the only possible source of problems. Inappropriate data handling, model selection, algorithm design, and deployment also contribute to unjust outcomes. This is why tools combined with specific guidance are essential.

As some scholars note, “There is currently no available general and comparative guidance on which tool is useful or appropriate for which purpose or audience. This limits the accessibility and usability of the toolkits and results in a risk that a practitioner would select a sub-optimal or inappropriate tool for their use case, or simply use the first one found without being conscious of the approach they are selecting over others.”

Companies utilizing the various packaged tools on their ADS could sign off on the results using code signing. This would create a record that these organizations ran these audits along their development lifecycle and received satisfactory outcomes.
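The sign-off described above could look roughly like the following sketch. Real code signing relies on asymmetric key pairs and certificates, so that anyone can verify a signature without holding a secret; to stay within the Python standard library, this sketch substitutes a keyed hash (HMAC-SHA256) as a stand-in. The report fields, function names, and key are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(report: dict) -> bytes:
    """Serialize the report the same way every time so signatures are stable."""
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_report(report: dict, key: bytes) -> str:
    """Produce a hex tag binding the signer's key to the report contents."""
    return hmac.new(key, canonical_bytes(report), hashlib.sha256).hexdigest()


def verify_report(report: dict, signature: str, key: bytes) -> bool:
    """Check the tag in constant time; any change to the report fails."""
    return hmac.compare_digest(sign_report(report, key), signature)


# Hypothetical key and audit report, for illustration only.
key = b"hypothetical-demo-key"
report = {
    "system": "example-ads",
    "stage": "model evaluation",
    "audit": "fairness metrics",
    "passed": True,
}

signature = sign_report(report, key)
print(verify_report(report, signature, key))  # True

altered = dict(report, passed=False)
print(verify_report(altered, signature, key))  # False
```

With an asymmetric scheme in place of HMAC, an enforcement body could check a developer's sign-off using only the developer's public key.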

We envision a suite of auditing tools, each tool applying to a specific agency and enforcement task. Precedents for this type of technology already exist. Much like security became a part of the software development lifecycle with guidance developed by NIST, equity and fairness should be integrated into the AI lifecycle as well. NIST could spearhead a government-wide initiative on auditing AI tools, leading guidance, distribution, and maintenance of such tools. NIST is an appropriate choice considering its history of evaluating technology and providing guidance around the development and use of specific AI applications such as the NIST-led Face Recognition Vendor Test (FRVT).

Areas of Impact & Agencies / Departments Involved

Security & Justice

The U.S. Department of Justice, Civil Rights Division, Special Litigation Section; Department of Homeland Security; U.S. Customs and Border Protection; U.S. Marshals Service

Public & Social Sector

The U.S. Department of Housing and Urban Development’s Office of Fair Housing and Equal Opportunity

Education

The U.S. Department of Education

Environment

The U.S. Department of Agriculture, Office of the Assistant Secretary for Civil Rights; the Federal Energy Regulatory Commission; the Environmental Protection Agency

Crisis Response

Federal Emergency Management Agency

Health & Hunger

The U.S. Department of Health and Human Services, Office for Civil Rights; Centers for Disease Control and Prevention; the Food and Drug Administration

Economic

The Equal Employment Opportunity Commission; the U.S. Department of Labor, Office of Federal Contract Compliance Programs

Infrastructure

The U.S. Department of Transportation, Office of Civil Rights; the Federal Aviation Administration; the Federal Highway Administration

Information Verification & Validation

The Federal Trade Commission; the Federal Communications Commission; the Securities and Exchange Commission

Many of these tools are open source and free to the public. A first step could be combining these tools with agency-specific standards and plain language explanations of their implementation process.

Benefits

These tools would provide several benefits to federal agencies and developers alike. First, they allow organizations to protect their data and proprietary information while performing audits. Any audits, whether on the data, model, or overall outcomes, would be run and reported by the developers themselves. Developers of these systems are the best choice for this task since ADS applications vary widely, and the particular audits needed depend on the application.

Second, while many developers may opt to use these tools voluntarily, standardizing and mandating their use would make it easy to evaluate any system thought to be in violation of the law. In this way, the federal government will be able to manage standards more efficiently and effectively.

Third, although this tool would be designed for the AI lifecycle that results in ADS, it can also be applied to traditional auditing processes. Metrics and evaluation criteria will need to be developed based on existing legal standards and evaluation processes; once these metrics are distilled for incorporation into a specific tool, this tool can be applied to non-ADS data as well, such as outcomes or final metrics from traditional audits.

Fourth, we believe that a strong signal from the government that equity considerations in ADS are important and easily enforceable will impact AI applications more broadly, normalizing these considerations.

Example of Opportunity

An agency that might use this tool is the Department of Housing and Urban Development (HUD), whose purpose is to ensure that housing providers do not discriminate based on race, color, religion, national origin, sex, familial status, or disability.

To enforce these standards, HUD, which is responsible for 21,000 audits a year, investigates and audits housing providers to assess compliance with the Fair Housing Act, the Equal Credit Opportunity Act, and other related regulations. During these audits, HUD may review a provider’s policies, procedures, and records, as well as conduct on-site inspections and tests to determine compliance.

Using an AI auditing tool could streamline and enhance HUD’s auditing processes. In cases where ADS were used and suspected of harm, HUD could ask for verification that an auditing process was completed and specific metrics were met, or require that such a process be undergone and reported to them.
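As a rough illustration of checking that "specific metrics were met," the sketch below compares a developer's reported audit metrics against required ranges and lists any that are missing or out of range. The metric names and threshold values are hypothetical placeholders; actual requirements would have to be derived from the legal standards the agency enforces.

```python
def unmet_requirements(reported: dict, required: dict) -> list:
    """Names of metrics that are missing or fall outside the required range."""
    failures = []
    for name, (low, high) in required.items():
        value = reported.get(name)
        if value is None or not (low <= value <= high):
            failures.append(name)
    return failures


# Hypothetical thresholds and reported values, for illustration only.
required = {
    "disparate_impact_ratio": (0.8, 1.25),
    "statistical_parity_difference": (-0.1, 0.1),
}
reported = {
    "disparate_impact_ratio": 0.72,
    "statistical_parity_difference": -0.05,
}

print(unmet_requirements(reported, required))  # ['disparate_impact_ratio']
```

An empty list would indicate that every required metric was reported and fell within range; it would not by itself establish legal compliance.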

Noncompliance with legal standards of nondiscrimination would apply to ADS developers as well, and we envision the enforcement arms of protection agencies would apply the same penalties in these situations as they would in non-ADS cases.

R&D

To make this approach feasible, NIST will require funding and policy support to implement this plan. The recent CHIPS and Science Act has provisions to support NIST’s role in developing “trustworthy artificial intelligence and data science,” including the testbeds mentioned above. Research and development can be partially contracted out to universities and other national laboratories or through partnerships/contracts with private companies and organizations.

The first iterations will need to be developed in partnership with an agency interested in integrating an auditing tool into its processes. The specific tools and guidance developed by NIST must be applicable to each agency’s use case.

The auditing process would include auditing data, models, and other information vital to understanding a system’s impact and use, informed by existing regulations/guidelines. If a system is found to be noncompliant, the enforcement agency has the authority to impose penalties or require changes to be made to the system.

Pilot program

NIST should develop a pilot program to test the feasibility of AI auditing. It should be conducted on a smaller group of systems to test the effectiveness of the AI auditing tools and guidance and to identify any potential issues or areas for improvement. NIST should use the results of the pilot program to inform the development of standards and guidelines for AI auditing moving forward.

Collaborative efforts

Achieving a self-regulating ecosystem requires collaboration. The federal government should work with industry experts and stakeholders to develop the necessary tools and practices for self-regulation.

A multistakeholder team from NIST, federal agency issue experts, and ADS developers should be established during the development and testing of the tools. Collaborative efforts will help delineate responsibilities, with AI creators and users responsible for implementing and maintaining compliance with the standards and guidelines, and agency enforcement arms responsible for ensuring continued compliance.

Regular monitoring and updates

The enforcement agencies will continuously monitor and update the standards and guidelines to keep them up to date with the latest advancements and to ensure that AI systems continue to meet the legal and ethical standards set forth by the government.

Transparency and record-keeping

Code-signing technology can be used to provide transparency and record-keeping for ADS. This can be used to store information on the auditing outcomes of the ADS, making reporting easy and verifiable and providing a level of accountability to users of these systems.

Conclusion

Creating auditing tools for ADS presents a significant opportunity to enhance equity, transparency, accountability, and compliance with legal and ethical standards. The federal government can play a crucial role in this effort by investing in the research and development of tools, developing guidelines, gathering stakeholders, and enforcing compliance. By taking these steps, the government can help ensure that ADS are developed and used in a manner that is safe, fair, and equitable.

Code signing is used to establish trust in code that is distributed over the internet or other networks. By digitally signing the code, the code signer is vouching for its identity and taking responsibility for its contents. When users download code that has been signed, their computer or device can verify that the code has not been tampered with and that it comes from a trusted source.

Code signing can be extended to all parts of the AI lifecycle as a means of verifying the authenticity, integrity, and function of a particular piece of code or a larger process. After each step in the auditing process, code signing enables developers to leave a well-documented trail for enforcement bodies/auditors to follow if a system were suspected of unfair discrimination or unsafe operation.

Code signing is not essential for this project’s success, and we believe that the specifics of the auditing process, including documentation, are best left to individual agencies and their needs. However, code signing could be a useful piece of any tools developed.

Additionally, there may be pushback on the tool design. It is important to remember that currently, engineers often use fairness tools at the end of a development process, as a last box to check, instead of as an integrated part of the AI development lifecycle. These concerns can be addressed by emphasizing the comprehensive approach taken and by developing the necessary guidance to accompany these tools—which does not currently exist.

Example #1: Healthcare

New York regulators are calling on UnitedHealth Group to either stop using a company-made algorithm that researchers say exhibited significant racial bias or prove there is no problem with it. This algorithm, which UnitedHealth Group sells to hospitals for assessing the health risks of patients, assigned similar risk scores to white patients and Black patients despite the Black patients being considerably sicker.

In this case, researchers found that changing just one parameter could generate “an 84% reduction in bias.” If we had specific information on the parameters going into the model and how they are weighted, we would have a record-keeping system to see how certain interventions affected the output of this model.

Bias in AI systems used in healthcare could potentially violate the Constitution’s Equal Protection Clause, which prohibits discrimination on the basis of race. If the algorithm is found to have a disproportionately negative impact on a certain racial group, this could be considered discrimination. It could also potentially violate the Due Process Clause, which protects against arbitrary or unfair treatment by the government or a government actor. If an algorithm used by hospitals, which are often funded by the government or regulated by government agencies, is found to exhibit significant racial bias, this could be considered unfair or arbitrary treatment.

Example #2: Policing

A UN panel on the Elimination of Racial Discrimination has raised concern over the increasing use of technologies like facial recognition in law enforcement and immigration, warning that it can exacerbate racism and xenophobia and potentially lead to human rights violations. The panel noted that while AI can enhance performance in some areas, it can also have the opposite effect as it reduces trust and cooperation from communities exposed to discriminatory law enforcement. Furthermore, the panel highlights the risk that these technologies could draw on biased data, creating a “vicious cycle” of overpolicing in certain areas and more arrests. It recommends more transparency in the design and implementation of algorithms used in profiling and the implementation of independent mechanisms for handling complaints.

A case study on the Chicago Police Department’s Strategic Subject List (SSL) discusses an algorithm-driven technology used by the department to identify individuals at high risk of being involved in gun violence and inform its policing strategies. However, a study by the RAND Corporation on an early version of the SSL found that it was not successful in reducing gun violence or reducing the likelihood of victimization, and that inclusion on the SSL only had a direct effect on arrests. The study also raised significant privacy and civil rights concerns. Additionally, findings reveal that more than one-third of individuals on the SSL have never been arrested or been a victim of a crime, yet approximately 70% of that cohort received a high-risk score. Furthermore, 56% of Black men under the age of 30 in Chicago have a risk score on the SSL. This demographic has also been disproportionately affected by the CPD’s past discriminatory practices and issues, including torturing Black men between 1972 and 1994, performing unlawful stops and frisks disproportionately on Black residents, engaging in a pattern or practice of unconstitutional use of force, poor data collection, and systemic deficiencies in training and supervision, accountability systems, and conduct disproportionately affecting Black and Latino residents.

Predictive policing, which uses data and algorithms to try to predict where crimes are likely to occur, has been criticized for reproducing and reinforcing biases in the criminal justice system. This can lead to discriminatory practices and violations of the Fourth Amendment’s prohibition on unreasonable searches and seizures, as well as the Fourteenth Amendment’s guarantee of equal protection under the law. Additionally, bias in policing more generally can also violate these constitutional provisions, as well as potentially violating the Fourth Amendment’s prohibition on excessive force.

Example #3: Recruiting

ADS in recruiting crunch large amounts of personal data and, given some objective, derive relationships between data points. The aim is to use systems capable of processing more data than a human ever could to uncover hidden relationships and trends that will then provide insights for people making all types of difficult decisions.

Hiring managers across different industries use ADS every day to aid in the decision-making process. In fact, a 2020 study reported that 55% of human resources leaders in the United States use predictive algorithms across their business practices, including hiring decisions.

For example, employers use ADS to screen and assess candidates during the recruitment process and to identify best-fit candidates based on publicly available information. Some systems even analyze facial expressions during interviews to assess personalities. These systems promise organizations a faster, more efficient hiring process. ADS do theoretically have the potential to create a fairer, qualification-based hiring process that removes the effects of human bias. However, they also possess just as much potential to codify new and existing prejudice across the job application and hiring process.

The use of ADS in recruiting could potentially violate several federal laws, including antidiscrimination laws such as Title VII of the Civil Rights Act of 1964 and the Americans with Disabilities Act. These laws prohibit discrimination on the basis of race, gender, and disability, among other protected characteristics, in the workplace. Additionally, these systems could potentially violate the right to privacy and the due process rights of job applicants. If the systems are found to be discriminatory or to violate these laws, they could result in legal action against the employers.
