Successful Pooled Hiring Starts With Diving Into the Deep End

The Office of Personnel Management has been busily reversing course on federal workforce reductions with some splashy hiring announcements. In December, it launched Tech Force, a pooled recruitment effort targeting 1,000 early-career technologists to be placed across agencies for two-year stints. In March, it stood up a cross-government shared certificate for project managers. It launched an Early Career Talent Network spanning five job categories. Two weeks ago, it expanded Tech Force into cybersecurity. OPM Director Scott Kupor has been explicit about his ambition: this is a “model for more centralized, efficient hiring across government.”

I’ll bite: yes, there’s a lot of promise in that! The instinct behind all of these actions builds on years of initiatives meant to create efficiencies out of the hundreds of thousands of hires made across the federal government each year. Pooled hiring, which should include one well-designed announcement, one shared assessment, and many agencies drawing from the same pool of qualified candidates, is exactly the kind of tool the federal government should be using. I saw this up close when I was at OMB and I fully drank this Kool-Aid. The logic is compelling: in a typical year, the federal government processes over 22 million applications and hires over 350,000 people into public service. No private employer operates anywhere near that scale, which I still believe can be an asset, and pooled hiring is the entry point to making it one.

But pooled hiring has a track record (going back several administrations), and it’s uneven. Most recently, the Biden administration championed it, most ambitiously during the infrastructure surge, when OPM partnered with seven agencies and hired roughly 5,000 employees, including USDA hiring 39 HR specialists off a single certificate (if this sounds underwhelming to you, trust me when I say it’s mindblowing to your average hiring manager; more on why shortly). But the same period produced plenty of pooled actions that generated duplicative work, agency foot-dragging, and candidates who aged off certificates before anyone made them an offer. FAS and others have been studying these challenges in the context of the permitting workforce surge, and the problems are structural, predictable, and repeating. Also? Solvable.

The concept has promise, but implementation has kept breaking in the same places. This piece is about why, and about how to get it right, now, while there’s political will and active momentum to use it.

The Design Error at the Center of Everything

First, a quick explainer on how this actually works, because “pooled hiring” gets used loosely and the mechanics matter. A pooled hiring action is a competitive job announcement run either by OPM centrally or by a lead agency on behalf of multiple agencies, and intended to fill multiple open positions in multiple agencies. Instead of each agency posting its own announcement, recruiting its own applicants, and running its own assessment, one announcement goes out, one applicant pool forms, and one assessment process screens candidates into a shared certificate of eligibles (government-speak for a ranked list of candidates that agencies can choose from). Agencies that have signed on to participate can then make selections from that certificate without having to run their own action from scratch. OPM-run actions (like the current Tech Force or the project manager cert) work the same way, just with OPM as the lead rather than a single agency. Either way, the cert is the output: a ranked list of candidates who have been assessed as qualified, available to any participating agency to hire from without having to solicit new resumes, review qualifications, administer assessments, or handle the other tedious parts of the hiring process.

That’s the theory.

The shared certificate is where most implementations stop. Agencies get a screened list and then do their own thing — their own interviews, on their own timelines, with their own offer processes. Or maybe they don’t, even when they said they would! The coordination ends at the cert. Everything downstream remains fully siloed at each agency.

This is far from the ideal that most policymakers have in mind, and from what many private employers do. A genuine pooled hiring action pools the whole pipeline: recruitment, assessment, interviewing, and offers, all coordinated and all running in parallel across participating agencies. That doesn’t work for every role, but in surge situations, or for roles where agencies make dozens of hires into the same position every year, it’s great. Agencies don’t just agree to draw from the same pool. They show up on the same interviewing days. They make offers on the same compressed timeline. Candidates who applied once get considered by many agencies simultaneously, instead of by each agency in turn running its own slow-motion version of the process.

Almost nothing the federal government currently calls “pooled hiring” actually does this. The new OPM actions are no exception. Tech Force is better marketed than previous efforts, and the private-sector partnerships are genuinely new. But the selection and offer stages remain siloed at each agency, and I’ll be very curious to see whether agencies actually make selections. That’s the design flaw everything else flows from.

What Breaks When You Don’t Fix the Design

When I was at OMB, we saw these failure modes up close, in what were probably deeply frustrating meetings with the valiant program team as we learned where the seams were. Some things we saw:

Pooled hiring worked when it was a clear administration priority and had OPM and OMB support. Early indicators suggest that Tech Force is succeeding because the administration, the OPM director, and OPM staff are all clearly giving it attention and smoothing implementation behind the scenes. That’s good for proof of concept, but it doesn’t reveal the weaknesses that can emerge when the administration isn’t holding agencies accountable for delivering on innovative hiring methods.

Agencies didn’t trust screening they didn’t run. OPM’s own guidance requires agencies making selections from another agency’s certificate to verify that the original qualification and assessment criteria are appropriate for their position. That verification step becomes a second screening, which defeats the efficiency rationale entirely. Agencies that double- and triple-screened candidates created more work than if each had run its own action from scratch. The fix isn’t better guidance; it’s building trust into the design upfront by ensuring that the people with the most relevant subject-matter expertise help design the assessment in the first place.

Demand didn’t stay put. Agencies raised their hands, agencies or OPM ran a resource-intensive recruitment action, and then agencies were slow to hire — or circumstances changed before they did. The August 2024 OMB/OPM hiring memo specifically directed agencies to review available shared certificates before launching new hiring actions — a discipline that, if actually followed, would force better demand alignment upfront. It mostly didn’t happen and, absent the sort of prompting we talk about later, is hard to enforce. Partly this is a culture problem, but it’s also a structural one: agencies that don’t plan for talent surges find that new hiring needs don’t align with their existing workforce plans or their capacity to recruit, assess, and onboard. You can’t opt into a pooled action and then be surprised when the pool fills.

We struggled to tell the right people, and the system didn’t either. There’s a more fundamental problem sitting underneath the demand-alignment failure: hiring managers and HR specialists often don’t hear about pooled hiring announcements at all, and when they do, it’s generally not with enough lead time to actually prepare. Pooled actions get announced through OPM memos and Chief Human Capital Officers (CHCO) Council communications that circulate at the leadership level (and boy howdy did we circulate!), but that information doesn’t reliably travel to the hiring manager who is already three weeks into drafting a job announcement for the exact role sitting in a shared cert. And when it does arrive, it arrives as information: there’s no deadline attached, no checklist triggered, no reason to stop what they’re already doing. Of the 200,000+ federal hiring managers, most make very few hires a year, or even over an entire career, so learning a process with barriers to entry is a real challenge.

Nothing interrupts the default action. The deeper problem is that nothing in the hiring workflow itself cues anyone to look. When a hiring manager initiates a new action in the hiring system, they’re not pushed or incentivized in any systematic way to check for an existing cert. When an HR specialist begins drafting a job announcement, no flag surfaces to say: a shared certificate for this position series already exists, do you want to use it? The system simply lets them proceed. This means that even when an agency or OPM has done the work of running a pooled action and producing a cert, agencies duplicate that effort anyway, less out of indifference than because the path of least resistance is to do what they’ve always done, and nothing in the process interrupts that default.

The fix here is partly cultural but largely technical. The Agency Talent Portal and USA Staffing need to surface available shared certificates at the moment a hiring manager or HR specialist initiates a new action for a covered position: as a required check embedded in the workflow itself. If you’re about to post a GS-12 data scientist announcement and there’s an active governmentwide cert for that exact series and grade, the system should tell you, right then, before you proceed. Opt-out, not opt-in. The current design assumes awareness that doesn’t exist and motivation that isn’t reliable.
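To make the proposed check concrete, here is a minimal sketch of the logic described above, under stated assumptions: when a new action is initiated, match it against active shared certificates by occupational series and grade, and block the default path to a new announcement until the user either selects from a matching cert or records a reason to opt out. Everything in it is hypothetical, including the `SharedCert` record, the `check_for_shared_certs` function, the opt-out reason field, and the example cert IDs and series codes. It is not how USA Staffing or the Agency Talent Portal actually work, and real certificate-sharing rules (location, hire type, appointment authority) are more involved than a series-and-grade match.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class SharedCert:
    """Hypothetical record for an active governmentwide shared certificate."""
    cert_id: str
    series: str     # occupational series code (values below are illustrative)
    grade: int      # GS grade covered by the cert
    expires: date   # last day agencies can select from the cert


@dataclass
class CheckResult:
    matches: List[SharedCert]
    may_proceed: bool   # may the user go on to draft a new announcement?
    message: str


def check_for_shared_certs(series: str, grade: int, today: date,
                           certs: List[SharedCert],
                           opt_out_reason: Optional[str] = None) -> CheckResult:
    """Hypothetical required check run when a new hiring action is initiated.

    Opt-out, not opt-in: if an unexpired cert matches the series and grade,
    the user cannot proceed to a new announcement without a recorded reason.
    """
    matches = [c for c in certs
               if c.series == series and c.grade == grade and c.expires >= today]
    if not matches:
        return CheckResult([], True, "No active shared certificate; proceed.")
    if opt_out_reason and opt_out_reason.strip():
        return CheckResult(matches, True,
                           f"Opt-out recorded: {opt_out_reason.strip()}")
    ids = ", ".join(c.cert_id for c in matches)
    return CheckResult(matches, False,
                       f"Active shared certificate(s) for this series and grade: {ids}. "
                       "Select from a certificate or record an opt-out reason.")


if __name__ == "__main__":
    certs = [
        SharedCert("CERT-001", "1560", 12, date(2026, 12, 31)),  # hypothetical, active
        SharedCert("CERT-002", "2210", 12, date(2025, 1, 31)),   # hypothetical, expired
    ]
    blocked = check_for_shared_certs("1560", 12, date(2026, 6, 1), certs)
    print(blocked.may_proceed, blocked.message)
    allowed = check_for_shared_certs("1560", 12, date(2026, 6, 1), certs,
                                     opt_out_reason="Field office hire; cert covers DC only")
    print(allowed.may_proceed, allowed.message)
```

The design choice worth noticing is the default: the sketch does not forbid a new announcement, it only makes the shared cert the path of least resistance and requires a recorded reason to bypass it. Those recorded reasons could also show where certs fall short, for example the location and hire-type limits described later in this piece.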

Pooled actions were expensive for the “owner” and the experts. While cost-saving overall, running a pooled action could be resource- and time-consuming for the agency that owned it, and especially for the subject matter experts brought in for assessment, particularly when hires were not ultimately made.

The position description bottleneck. Pooled hiring inherits whatever good and bad planning exists in agencies’ position description (PD) libraries. Even for commonly hired roles, position descriptions are not always readily accessible and, likewise, standard assessments often don’t exist at every grade level. But it’s a bigger challenge than that: the whole GS system presumes (through competencies, job task analyses, and more) that every job is highly specialized, not generalizable for cross-agency pools. FAS documented this directly: OPM and the Permitting Council collaborated to create a pooled, cross-government announcement for Environmental Protection Specialists, one job announcement producing a candidate list many agencies could use. But the assessment became a bottleneck because standard assessments didn’t exist for each grade level in the announcement, requiring significant additional development time. This isn’t an edge case; it’s a Tuesday. Breaking! OPM Director Kupor just announced a new AI tool to generate PDs! We’ll follow with interest.

Hiring managers couldn’t get access without a permission chain. For a new hiring innovation to be adopted, you’d think that all the barriers, incentives, and opt-in/out dynamics would be aligned. You’d be wrong. Pooled hiring has a “mother may I” architecture: system passwords and access, coordinators, gating processes, intermediaries between hiring managers and shared certificates. It’s a design flaw dressed up as compliance. The same 2024 memo had to explicitly direct agencies to update hiring manager permissions in the Agency Talent Portal. That it needed to be said tells you everything about how poorly the access question had been handled. As FAS and the Niskanen Center jointly documented in their analysis of the current OPM hiring memos, the toughest tasks are also the most crucial: changing the culture around hiring to empower managers, and actually letting line managers be managers.

Talent teams are a good idea that keeps getting launched without the authority or resources to actually work. Every administration for the past decade has called for empowered agency talent teams: small, specialized units charged with driving hiring innovation, adopting new tools like SME-QA, and coordinating participation in pooled actions. M-24-16 explicitly called for agencies to create and sustain these teams, and the current OPM Merit Hiring Plan has stood one up at the central level as well. The concept has potential, but execution has been consistently undercut by the same failure mode: no committed resources, no authority to intervene, no access, and no product mindset. In understaffed agency HR offices that were not empowered to “get to yes,” the function hasn’t meshed well; moreover, it has arrived in a system that already lacks strong strategic workforce planning, a key enabler of its potential success.

As FAS and the Niskanen Center documented, agency talent teams, OPM communications and education support, and the necessary systems changes all require people, money, and IT investment that hasn’t materialized. Announcing a mandate is not the same as funding its execution.

But underfunding isn’t the only problem. Even well-resourced talent teams have struggled when they lacked the institutional standing to actually change agency behavior. The core failure mode is assuming that having good people in the building is enough — that talent solves problems on its own, without a clear theory of change about authority, access, and how decisions get made. An agency talent team that is advisory in nature, without a direct line to hiring managers and HR decision-makers, without leadership backing when they push back against entrenched process habits, and without metrics that create accountability for adoption, is not going to move the needle on pooled hiring participation. It’s going to produce reports and hold workshops and then watch agencies do what they were already going to do.

Veterans preference created confusion that nobody addressed proactively. Preference applies differently in delegated examining versus merit promotion contexts. When agencies share certificates across those lanes, legal ambiguity creates real hesitation. This is genuinely solvable — but only if OPM issues targeted guidance with each pooled action as a standard part of the launch package. Stepping back, it’s necessary to state that any type of absolute preference is going to make pooled hiring challenging. Clarifying guidance is a Band-Aid.

Small technical barriers compound the problem. One underreported friction point: shared certificate policies can constrain agencies from sharing certs across different geographic locations designated in the original announcement, or across different hire types (temporary versus permanent). An agency running a pooled action for DC-based positions can’t easily extend that cert to field office hires. A cert issued for permanent positions doesn’t smoothly cover term appointments. These are solvable technical problems that OPM and OMB could fix through policy revision, but they require someone to actually map the barriers before designing the action.

And when agencies go it alone anyway, the burden multiplies for everyone. This is the part that gets lost in discussions that treat siloed hiring as merely inefficient rather than actively harmful. When agencies that are already understaffed — particularly permitting and HR teams — don’t leverage opportunities to work together, bottlenecks compound. Pooled hiring isn’t just a convenience for well-resourced agencies. For teams that are already stretched, it’s the difference between a manageable workload and an impossible one.

Agency HR leads without the skills or network to work across agencies. Like so much else, pooled hiring depends on relationships. OPM and agencies have not carefully selected HR managers who not only understand the potential policy barriers to working across agencies but also have the collaboration skills and networks to solve problems quickly.

The Assessment Question: Use the Right Tool, Not the Easy One

If you’ve read this far, you’ve probably heard of things like SME-QA, the greatest acronym in the hiring world. Let’s talk assessments.

The default federal hiring assessment — the self-assessment questionnaire — is effectively worthless for identifying technical talent. As Jennifer Pahlka has put it, the system has been built so that the most important knowledge is how the hiring process works instead of the knowledge needed to do the job. A nationally recognized programmer once applied to the Department of Defense and was initially rejected because their resume described real expertise in language that didn’t match OPM’s classification keywords. Meanwhile, someone who understood the system could mark themselves “expert” across every self-assessment category with no verification at all.

The Subject Matter Expert Qualification Assessment, or SME-QA, was one of the skills-based hiring toolkits developed to fix this: real experts screen for real skills, with HR ensuring merit principles hold. SMEs independently review every resume. Candidates who clear the initial bar then go through further steps like structured interviews, coding exercises, or written assessments, administered by other practitioners in the field rather than generalist HR staff. For technical roles going into a pooled action (data scientists, cybersecurity professionals, engineers), SME-QA paired with a shared certificate is close to the ideal design. Build the assessment once with governmentwide SME input, share the cert, and every agency draws from a pool that was actually screened by people who know the field.

But any skills-based hiring practice has a scaling problem that’s been documented since the first USDS pilots. The work is resource-intensive for federal agencies not used to dedicating so much SME time to a hiring process. As Niskanen’s recent analysis of the Chance to Compete Act makes clear, new written assessments developed by industrial-organizational psychologists are extremely resource-intensive to produce, likely prohibitively expensive at the scale needed to cover broad swaths of the federal workforce. But there are roles and moments where such dedicated investment makes sense.

The design principle that should govern this: pooled hiring should be an opportunity to concentrate assessment burden at the enterprise level, not multiply it at the agency level. Build the assessment once, or maximize use of SME-QA time, governmentwide, for roles where it genuinely matters. Then actually use those assessments consistently rather than rebuilding them from scratch at each agency. And as Niskanen argues, transform OPM’s role from compliance monitor to assessment engine: a marketplace of vetted, shared tools agencies can pull from rather than commission independently.

There’s a trust dividend here too. Agencies that contribute subject-matter experts to the assessment design have far more reason to trust the resulting certificate. Skin in the game at the assessment stage translates directly to confidence at the hiring stage.

A Note On Listening

Many successful pooled actions worked because OMB and OPM (or other senior White House offices) gave attention, capacity, authority and accountability to the process, bolstering agencies who were being asked to execute hiring with unusual flexibility and competence.

Overall, however, when agencies told OPM and OMB that pooled hiring was hard for them to execute alone, the response from the center was too often some version of: the guidance is out there, the instructions are online, that’s how the process works. Agencies described a cascade of rigidities that made implementation genuinely difficult, and we weren’t always responsive. We treated compliance problems as communication problems. If agencies weren’t doing it right, they must not have understood it correctly, so the answer was more guidance, clearer FAQs, better webinars.

That’s the wrong diagnosis. What they were telling us was that the process didn’t fit their reality, and that the gap between what the policy assumed and what their operations actually looked like was wide enough that no amount of additional instruction was going to close it. When the people responsible for carrying out a policy are consistently telling you it’s hard in specific, consistent ways, the right response is to ask what’s broken in the design. The people designing these systems need to hear that feedback as signal instead of as resistance to be overcome.

This is why the recommendations in this piece are about structural changes to how pooled hiring is designed, not about better outreach or clearer communications. Agencies don’t need another memo explaining how shared certificates work. They need a system that works in the conditions they’re actually operating in.

How to Actually Do This Right

The current OPM actions are a real opportunity. Here’s what would make them work, stated as plainly as possible.

Lock in real demand before you launch. Not expressions of interest: actual hiring commitments with funded billets and named positions. The failure mode is OPM building a pool that agencies shop from slowly or not at all. Require agencies to submit hiring forecasts before they’re included in a pooled action, and hold them to those forecasts with visible accountability.

Build assessment infrastructure before the announcement goes up. Standardized PDs, validated assessments, and clear SME selection criteria that agencies trust need to exist before the action launches. The centralized position description library called for in M-24-16 is the right vehicle. Critically, assessments need to exist at every grade level included in the announcement.

Build the awareness and the system prompt together. Communicate pooled hiring announcements directly to hiring managers and HR specialists, not just to agency leadership. But communication alone won’t fix this. The Agency Talent Portal and USA Staffing need to surface available shared certificates at the moment a hiring manager or HR specialist initiates a new action for a covered position series and grade. This should be a required check embedded in the workflow itself, before they proceed with drafting a new announcement. If you’re about to post a GS-12 data scientist announcement and an active governmentwide cert exists for that series and grade, the system should tell you right then. The current design assumes awareness that doesn’t exist and motivation that isn’t reliable.

Pool the interviewing, not just the screening. Coordinated interviewing days. Same-day or 48-hour offer authority for hiring managers. Agencies competing for the same candidates simultaneously, not sequentially. Cross-agency onboarding cohorts that start together and build peer networks from day one. This is what actually compresses time-to-hire.

Fund and empower talent teams as implementation infrastructure. Every idea in this piece requires someone inside each major agency whose job it is to make that happen. That’s what a talent team is for. But talent teams need three things that they rarely get: a dedicated budget line, direct access to the hiring managers and HR leadership they’re supposed to influence, and metrics that hold them accountable for adoption rates and actual hiring outcomes rather than process activity. A talent team of one person with a shared budget and no senior sponsor is not an implementation strategy.

Give hiring managers direct access. Update the Agency Talent Portal permissions. Eliminate the intermediary layers between a hiring manager and a cert they’re authorized to use. Hold managers accountable for whether they hire. Culture change here is real but it follows structural change: when managers have direct access and clear authority, behavior shifts.

Make follow-through a metric with teeth. Agencies that opt in and don’t hire should have to explain why, publicly, to the President’s Management Council. The voluntary participation problem doesn’t get solved with please-and-thank-you memos.

Run continuous pooled actions for common roles. HR specialists, contracting officers, environmental specialists, IT managers — these aren’t surge needs, they’re permanent ones. A cert that’s always open, with agencies drawing from it as needs emerge, is far more useful than a prestige program that runs once a year and then goes quiet.

The Bigger Lens

(with thanks to Gabe Menchaca and Peter Bonner for making the stronger argument)

Pooled hiring is a microcosm of a question the federal government seesaws on constantly: what does it mean to govern as an enterprise rather than as several hundred agencies that happen to share a payroll source?

This requires admitting something those of us who have worked in the center don’t always say plainly: agencies and their leaders are protecting their turf for understandable reasons. They are accountable for their missions, their budgets, and their outcomes. When a pooled hiring action asks them to trust a cert they didn’t design, coordinate interviews around a shared calendar, and accept that they won’t get every single thing they want, that’s a big ask! The trade may be worth making, but it doesn’t happen automatically, and the center has not historically done a good job making the case for why, or building the conditions under which agencies can actually say yes.

That’s a collective action problem, and it’s harder than it looks. It requires genuine leadership alignment across all the agencies involved, and a center that has made the benefit of cooperation concrete and visible rather than just asserting it in guidance. Too often the response to non-participation has been more documentation rather than an honest look at what the actual barrier was. That’s compounded by a structural problem worth naming: agencies are accountable for their HR outcomes but OPM holds much of the compliance authority over how hiring gets done. Accountability without authority produces exactly the behavior you’d expect.

The federal government has demonstrated it can operate differently. The BIL surge, the data scientist certs, USDA’s HR specialists (and maybe Tech Force) worked because the conditions were right: shared design, locked-in demand, leadership alignment, enough urgency to overcome the default toward agency autonomy. The question is whether we can build those conditions deliberately rather than stumbling into them during a crisis. That requires a solid theory of change about how cross-agency infrastructure actually gets adopted: one that takes agency self-interest seriously as a design constraint rather than an obstacle to be overcome by memo. Get that right, and pooled hiring becomes a model for how the federal government decides what to do together and what to do apart. That’s a bigger prize than faster hiring. It’s a more functional government.

Strengthening the Federal Cycle of Learning and Adaptation by Closing the Loops

The federal government has a feedback-loop problem.

Regularly generated information, including evidence, performance information, and qualitative insights from implementation, too often fails to shape decisions. Evidence may be reviewed without changing priorities; performance data may be tracked without clarity about what it informs; and implementation feedback may reach leadership without surfacing what works, for whom, and why, or suggesting next steps. The components of a cyclical learning system linking priorities, questions, evidence, decisions, and implementation information exist in theory and on paper, but the connective tissue that turns all of these components into a functioning cycle of learning and adjustment is lacking. Information and artifacts alone don’t necessarily facilitate learning and adaptation; strengthening federal feedback loops requires embedding translation and use into decision-making from the start.

This memo is not a case for new infrastructure. The Evidence Act, learning agendas, evaluation plans, performance frameworks, and customer experience authorities already exist; what they do not yet add up to is a learning system. The translation this memo proposes is turning the infrastructure we have into the learning system we need, and it’s addressed to federal program leaders, policy officials, evaluation and evidence staff, performance officers, and strategic planning teams who already sit inside it and are best positioned to make it function as intended.

Challenge and Opportunity

The federal government already operates within a broad cycle of goal-setting, evidence generation, performance review, implementation, and reporting. On paper and in principle, this cycle should allow for learning, adjustment, and improvement to federal programs over time. In practice, however, agencies vary in how consistently they translate such information into planning, decision-making, or course-correction. Federal agencies have made progress in building and using evidence, but translating that information into timely operational or policy revisions remains uneven.

The core problem isn’t production; it’s translation, and the translation failure shows up as “so what” gaps on both sides of the information pipeline. On the input side, receivers of information are often left asking what they’re supposed to do; on the output side, a second question appears: “Is it my job to act on this, and if so, how?” Research findings are often too slow, too caveated, or too disconnected from immediate policy and management questions. Performance data may show quantitative changes in outputs, costs, or enrollment without revealing the mechanisms behind them or the practical implications for implementation, or cueing the design apparatus that could apply these insights. Feedback from frontline service providers and affected users might reach leadership mainly through quantitative indicators, dashboards, or status updates, which don’t always capture lived experience, causal explanation, or informed suggestions for course correction. Without named owners and defined next steps, even the most actionable information tends to circulate rather than convert.

Three gaps sit behind this pattern. First, a context gap: decision-makers often lack the full picture, because qualitative indicators and customer experience research arrive separately from, or later than, quantitative evidence, leaving them with only a partial view of what’s working well or driving implementation problems. Second, an action gap: even with a complete view of the picture, it’s not always obvious which lever applies, on what timeline, or with what tradeoff. Third, an ownership gap: it’s often unclear who is responsible for translating any given signal into a decision, and this ambiguity means that insights can be observed without being acted on. Together, these three gaps leave evidence and feedback insufficiently integrated into decision-making routines.

The problem is also structural: decision-makers face turnover, competing priorities, time limits, and management pressures, so evidence needs a more robust pathway. Without clear translation, trusted messengers, and defined or mandated use points, even the most relevant information can arrive too late, at the wrong moment, or in language too jargon-heavy to influence decisions, resulting in missed adaptation opportunities.

The federal government doesn’t need an entirely new learning architecture. It needs to make the one it already has more usable. Agencies can do this in a few ways. First, by building stronger translation functions that create space for “knowledge brokers” (people or teams whose core function is to translate evidence into decision-relevant language and maintain the relationships that make the translation trusted). Second, by incorporating the use of evidence, performance, and implementation feedback into policy and program work from the start. Third, by creating better pathways for implementation and lived-experience feedback to reach leadership in ways that resonate with them and support action.

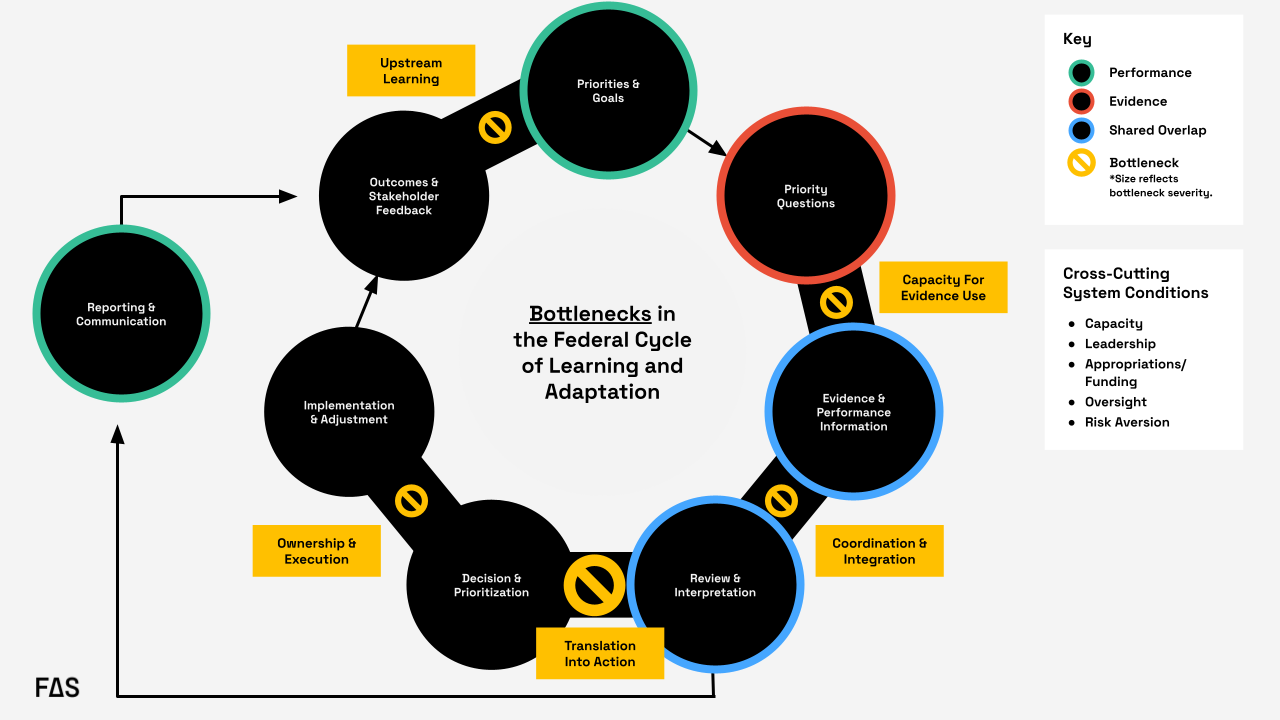

Formal federal guidance envisions a closed “loop” linking goals, priority questions, evidence, and performance information, along with review, decision-making, implementation, reporting, and feedback. In practice, the loop often degrades at key handoffs: evidence-use capacity, coordination and integration, translation into action, ownership and execution, and upstream learning from outcomes. The most significant recurring bottleneck occurs during the transition from review and interpretation to decision and prioritization, where information is generated and reviewed but does not reliably translate into action.

Plan of Action

We need to shift from a system that collects information to a system that uses it.

Agencies should create or strengthen embedded translation functions that connect evidence, performance information, implementation experience, and policy levers at the moment decisions are being made. The key is to move from a dissemination model to a utilization model. Instead of “produce, disseminate, and hope for uptake”, agencies should do the following:

Recommendation 1. Designate a knowledge broker to facilitate regular decision briefs…

or routines that create structured opportunities to clarify what’s known, what’s uncertain, and what actions are available, beginning with a defined set of high-priority issues rather than every single decision the agency needs to make.

This recommendation targets the translation-into-action bottleneck in Figure 1: the handoff from review and interpretation to decision and prioritization, arguably the most significant recurring point of failure in the federal cycle of learning and adaptation. In practice, this means assigning the function to a role or small team housed in an existing performance, evaluation, strategy, or program office and requiring that group to support recurring decision points with short decision briefs. Those briefs should identify the decision; synthesize relevant evidence, performance trends, and implementation feedback; and specify available actions, tradeoffs, and owners.

For example, in the rollout of the FAFSA Simplification Act, a knowledge broker tied specifically to that initiative could have translated readiness indicators, beneficiary feedback, and information from financial aid administrators into a decision-ready synthesis for the officials with decision authority who were attempting to course-correct in real time. Instead, significant delays turned the rollout into a high-profile implementation problem.

Crucially, this function should start narrow. Rather than positioning a knowledge broker as an all-purpose translator for an agency’s full decision load, agencies could scope the initial portfolio to a small set of priority issues. Starting narrow lets the broker build the credibility and relationships that make translation trusted, and refine the routine and its outputs, before the portfolio expands. This gives agencies a defined mechanism for turning reviewed information into decisions rather than leaving that handoff informal. Within the federal government, the Office of Evaluation Sciences has modeled how an embedded team of evidence translators can work alongside program offices rather than in a silo.

Recommendation 2. Start policy initiatives and evaluation planning with a real question…

or decision, identify the user, specify the lever, and clarify in advance what different findings would imply. Incorporating this thinking upstream changes the role of evidence from a retrospective input to an operational tool.

This recommendation mainly addresses the translation-into-action bottleneck and, secondarily, the evidence-use capacity bottleneck. Agencies can operationalize it by building decision framing into existing learning agenda and evaluation planning processes, both of which are already required under the Evidence Act. Before an evidence product is commissioned or a performance indicator is selected, program offices can be required to answer four questions on the record: What decision is this for? Who will use it? What lever would change as a result? What finding would lead to what action?

In a hypothetical example, say that USDA’s Food and Nutrition Service (FNS) wants to commission a new evaluation or analysis of SNAP redetermination churn (the pattern of households losing SNAP benefits at recertification and then re-enrolling, often for procedural reasons rather than loss of eligibility). The four questions above can be answered on the record before the work begins. The decision is whether to issue new guidance to state agencies on recertification practices, and what that guidance should encourage. The user is the FNS administrator and the relevant policy office, with state-level SNAP directors as the implementing audience. The lever is subregulatory guidance. The finding-to-action mapping would specify, in advance, which patterns or insights would trigger which response. That way, when the findings arrive, USDA isn’t starting from scratch with the “what do we do with this?” question; the decision architecture is already in place.

Pre-specifying these conditions in a short decision-framing memo that travels with the work turns evidence from a retrospective deliverable into a tool scoped to a specific decision or policy window. The same logic extends to decision memo templates themselves, which can include standing prompts such as “what evidence informed this decision?” and “how will we learn about this in real time?”, so that utilization is built in.

Recommendation 3. Create pathways for easier access to mixed methods evidence and insights from lived experience.

Recommendation 3 targets the coordination-and-integration and upstream-learning bottlenecks: the gap where evaluation, performance, administrative, qualitative, and customer experience data move at different speeds, live in different places, and reach decision-makers as parallel streams. Insights from lived experience (what programs actually look and feel like to the people using them) are particularly likely to be separated from the information that reaches leadership, arriving as anecdotes if they arrive at all. Because these problems are distinct, the recommendation can be broken into the components below, each of which can be addressed at the agency level.

Recommendation 4. Standardize decision-ready formats that consolidate quantitative and qualitative evidence.

Agencies should build standardized decision templates and briefs that present quantitative indicator-level data alongside narrative summaries of lived experience and implementation conditions, so decision-makers aren’t expected to synthesize across disparate sources on their own timelines. The resulting artifacts should be tied to recurring decision moments (budgeting, guidance revisions, program reauthorization) so that they can be used in real time.

In the FAFSA Simplification Act rollout, Department of Education leadership faced exactly this problem: application data, technical readiness indicators, information from financial aid administrators, and user feedback existed separately and moved at different speeds. A standardized decision-ready format could have pulled those streams together, pairing completion trend data with brief narratives or illustrative quotes about what applicants and financial aid offices were actually encountering, rather than leaving leaders to assemble the picture on their own in real time.

Recommendation 5. Actively use existing generic clearance mechanisms for rapid qualitative and user experience research.

Agencies should make use of standing generic clearance mechanisms that allow them to fast-track small qualitative and user experience studies (for example, up to 100 respondents, completed within a fixed time window) when unexpected findings need rapid explanation. This would let agencies run a tightly scoped evaluation in weeks rather than months, the operational timescale at which decisions frequently move. Without it, the qualitative evidence needed to explain a performance anomaly often arrives after the decision or policy window has closed.

For SNAP redetermination churn, this would let FNS turn around a short, scoped evaluation of why participants are dropping off at a specific step in the recertification cycle in weeks rather than months. The insights could then inform the next round of guidance rather than coming in after the fact.

Recommendation 6. Build customer-first indicators into existing federal reporting requirements.

Beneficiary and frontline experience should become part of the evidence base by default rather than by exception. Most federal programs already have reporting infrastructure, and layering in a modest set of customer-first indicators that use the existing infrastructure rather than building new information collection requirements ensures that user perspectives are consistently available as routine inputs.

Within the federal government, the customer experience and life experience work coordinated through OMB and performance.gov has demonstrated that lived experience can be collected and used at scale within existing authorities, which can be considered a foundation to build from rather than reinvent. At the state level, Minnesota’s Story Collective, housed within Minnesota Management and Budget (MN MMB), pairs administrative and performance data with qualitative, lived-experience narratives to give decision-makers a richer view of what their programs are actually producing.

These recommendations also address a common weakness in the federal system: evidence and performance information sit within the same broad ecosystem but move at different speeds, use different tools, and often reach different audiences. This is all the more reason to create a translation layer that can synthesize across them. Agencies need staff and routines that can connect evaluation, administrative data, performance indicators, qualitative input, and implementation realities into decision-relevant guidance. Without that connective tissue, agencies are left with parallel streams of information that don’t consistently converge at the point where action occurs.

Conclusion

The federal government already generates a great deal of information about what it’s doing and how it’s performing, but information isn’t the same as learning, and learning isn’t the same as adaptation. The gap between them is where the “so what” goes unanswered, and where the federal feedback loop breaks down.

Closing these loops doesn’t require new infrastructure or authority. It requires three shifts in how the existing system is used: designating knowledge brokers to carry translation across the handoff from review to decision-making; building decision framing into policy and evaluation work from the start, so that evidence is scoped to the decisions it’s meant to inform; and creating pathways that move mixed-methods and lived-experience evidence into decision-makers’ hands in formats and timeframes that match how decisions actually happen in the federal environment. Whether the question is USDA responding to SNAP redetermination churn or the Department of Education learning from an application rollout in real time, the underlying pattern is the same: the signals exist, but the translation that turns them into actionable insight doesn’t reliably happen.

If the government wants a system of learning and adaptation that improves results in real time, it has to treat translation, utilization, and adaptation as core functions of governance rather than as afterthoughts.

Outcome-Based Contracting Reorients Government IT Acquisition Around Public Value and Mission Results

The effectiveness of federal programs is increasingly determined by the technology that powers them. Yet decades of oversight and research have documented persistent challenges in large-scale IT modernization. The Government Accountability Office has repeatedly designated federal IT management as high risk, citing cost overruns, schedule delays, weak requirements management, and inadequate oversight. Bent Flyvbjerg’s research shows that large public-sector technology and infrastructure programs are especially prone to failure due to scope creep and cumulative risk. The Defense Innovation Board similarly concluded in Software Is Never Done that long development cycles and early requirement lock-in expose missions to unacceptable risk.

Across these analyses, the pattern is consistent: requirements are defined too early and too rigidly; performance is measured too late; incentives reward milestone completion rather than operational outcomes; and risk accumulates until deployment. These failures reflect several structural challenges—fragmented funding, leadership turnover, legacy system complexity, and acquisition models that delay validation and limit adaptation.

Traditional acquisition approaches assume stable requirements and predictable environments. Software-intensive systems do not behave this way. Requirements evolve, dependencies emerge during implementation, and technology ecosystems shift over the life of the contract. In this context, specification-driven models can increase risk by delaying feedback and limiting course correction.

This paper examines Outcome-Based Contracting (OBC) as a model for aligning acquisition with the realities of modern IT delivery. OBC reframes procurement around the staged achievement of measurable mission outcomes rather than the delivery of predefined technical artifacts. OBC ties funding, evaluation, and continuation decisions to mission outcomes and pairs naturally with iterative delivery practices that surface and reduce risk early.

Outcome-Based Contracting

Federal acquisition models have evolved over time in response to changing technologies and risks. Early approaches emphasized detailed specification and cost control, with contracts structured around defined requirements and priced either by reimbursing inputs (cost-plus) or by setting a fixed price for specified deliverables (fixed-price). As systems grew more complex, performance-based contracting emerged to shift the focus from activities to measurable outputs and service levels. However, in complex and dynamic environments, even performance-based models often remain tied to predefined deliverables and intermediate metrics, which limits their ability to adapt as conditions, requirements, and understanding evolve.

Outcome-based contracting (OBC) represents a further evolution. It structures the government–contractor relationship around shared accountability for mission results rather than delivery of predefined outputs. Its defining feature is not a pricing model, but the alignment of incentives, governance, and performance measurement around measurable mission outcomes.

As Allan Burman notes, building on performance-based contracting, OBC shifts accountability from activities and milestones to mission outcomes. In practice, it establishes a structured process in which government and contractor jointly deliver measurable results, with contracts defining decision rights, evaluation mechanisms, and adaptive processes.

Key features include:

- Shared accountability: success is defined in operational terms, not artifact delivery

- Collaborative outcome definition: the government defines the problem to be solved; contractors propose and refine approaches as evidence emerges

- Adaptive performance management: metrics guide decisions, not just compliance

- Joint problem solving: governance supports rapid adjustment when performance diverges

A useful way to understand outcome-based contracting is as a managed performance relationship rather than a one-time procurement transaction. As research from the IBM Center for The Business of Government emphasizes, effective outcome-based models require clearly defined desired results, measurable indicators of success, and ongoing performance management processes that allow both parties to assess progress and adjust course. This includes establishing baseline performance, continuously monitoring results, and linking financial incentives, contract options, and governance decisions to demonstrated improvement. Critically, these models depend on sustained collaboration and transparency: agencies must be able to interpret performance data and engage in joint problem-solving with vendors, rather than relying solely on compliance reviews. In this sense, OBC is not simply a different way to write requirements—it is a different way to manage delivery, in which measurement, incentives, and decision-making are continuously aligned to achieving mission outcomes.

Applying Outcome-Based Contracting to IT Modernization

Applying OBC to IT modernization requires three shifts: defining measurable outcomes, structuring decision rights, and organizing contracts around incremental delivery.

Defining outcomes

Mission objectives must be translated into measurable operational indicators—such as transaction completion rates, time to resolution, system availability, or error reduction. These indicators must be precise enough for evaluation while reflecting real-world service performance.

Effective models distinguish between:

- Mission-level outcomes (stable): e.g., reducing time to receive benefits

- Implementation metrics (adaptive): e.g., response times or interim system thresholds

For example, a call center contract might set a mission outcome of reducing resolution time by 30 percent, supported by metrics such as speed of answer, first-contact resolution, and callback completion time.
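To make the split between a stable mission outcome and its supporting metrics concrete, here is a minimal Python sketch of how progress toward the 30 percent reduction target might be tracked across delivery increments. The function name, the baseline, and the interim figures are hypothetical and chosen only for illustration; they are not drawn from any actual contract. The single number it returns (the fraction of the targeted reduction achieved so far) is the kind of figure that could inform evaluation, incentive, or option decisions, while the supporting implementation metrics stay free to change.

```python
def outcome_progress(baseline: float, current: float, target_reduction_pct: int = 30) -> float:
    """Fraction of the targeted reduction achieved so far (1.0 means the target is met).

    Hypothetical helper: `baseline` and `current` are average resolution times
    measured in the same unit (e.g., days).
    """
    target = baseline * (100 - target_reduction_pct) / 100  # e.g., 30 percent below baseline
    needed = baseline - target    # total reduction the contract is aiming for
    achieved = baseline - current # reduction observed so far
    return achieved / needed


# Hypothetical figures: baseline resolution time of 10.0 days.
print(outcome_progress(10.0, 8.5))  # 0.5  (halfway to the 30 percent reduction)
print(outcome_progress(10.0, 7.0))  # 1.0  (target met)
```

Keeping the outcome calculation this simple is deliberate in the sketch: the mission-level anchor stays fixed, and debates about speed of answer or callback handling happen at the level of the supporting metrics.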

A central design question is how outcomes are embedded in the contract. Outcomes can function as binding accountability anchors, linked to evaluation, incentives, and option decisions, but not as rigid end-states. This approach is only effective when supported by governance structures that allow agencies to interpret performance and adjust delivery.

Critically, outcomes and the underlying problem definition must be treated as testable and subject to refinement. Initial problem framing is often incomplete in complex systems. Contracts and governance models should therefore include regular check-ins, using data, user research, and operational feedback to assess whether the problem is being solved as intended. Where necessary, agencies and vendors must be jointly empowered to restate or refine the problem to ensure continued alignment with mission needs.

Structuring decision rights

OBC requires clear decision-making authority over priorities and tradeoffs. In software delivery, this centers on a strong government Product Owner (PO) role. The PO is responsible for backlog prioritization, acceptance criteria, and aligning delivery with mission outcomes. The PO must be empowered to continuously adjust priorities based on user needs and performance data without requiring contract modifications. Contractors are accountable for delivering measurable progress, but do not control mission priorities.

Governance must reflect both agency maturity and the nature of the initiative. More mature organizations can rely on PO-driven execution and adaptive metrics, using contract outcomes as high-level anchors. Even in less mature agencies, OBC principles can be applied in targeted ways, particularly in user-facing systems or components where outcomes can be clearly measured. In some cases, especially large enterprise system implementations, hybrid approaches may be required. These may combine clearly defined objectives and outcome metrics with more structured implementation phases for core platform rollout. The key is not strict adherence to a single methodology, but aligning decision rights, outcomes, and delivery approach to the realities of the system being implemented.

Structuring incremental delivery

Contracts must support incremental, evidence-based delivery. Large, multi-year programs defer risk discovery until late in the lifecycle. Iterative delivery reduces this risk by shortening feedback loops: capabilities are deployed incrementally, evaluated under real conditions, and adjusted early. Incremental delivery provides disciplined mechanisms for iteratively paying down risk.

OBC complements this model by tying funding and continuation decisions to demonstrated performance. Agile practices surface risk; OBC aligns accountability and resources to its mitigation.

This has direct implications for funding models. Effective OBC implementations require upfront decisions about how much funding is allocated to a product or service, with mechanisms to adjust that funding over time based on performance. Budgeting should support iterative scaling—expanding or contracting investment based on whether outcomes are being achieved. This, in turn, requires financial flexibility, such as capability-based budgeting, and the ability to reallocate funds or leverage working capital-like mechanisms.

In practice, appropriations constraints can limit this flexibility. For example, agencies operating under single-year appropriations may struggle to dynamically adjust funding in response to performance signals. Addressing this requires coordination between acquisition, product, and financial management functions to ensure that funding structures align with the adaptive nature of outcome-based delivery.

Outcome-Based Contracting In Practice

Outcome-oriented approaches are not new but remain underutilized in IT acquisition. Existing models demonstrate the value of aligning funding to measurable performance.

Within government, the Department of the Navy’s World Class Alignment Metrics (WAM) evaluates IT investments based on outcomes such as resilience, customer satisfaction, and cost per user. Similarly, the Department of Defense’s Performance-Based Logistics ties compensation to readiness outcomes, and NASA’s Commercial Crew program links payments to demonstrated capability.

These examples share a core principle: funding follows validated performance rather than predefined inputs. Applied to IT modernization, this requires pairing mission outcomes with iterative delivery, clear decision rights, and sustained technical engagement. Without these elements, outcomes risk becoming abstract goals rather than operational tools.

Despite its advantages, outcome-based contracting is not the default in federal IT acquisition. In practice, existing incentives continue to favor specification-driven models: funding structures are rigid, oversight emphasizes compliance with predefined requirements, and procurement processes reward detailed up-front definition over adaptive execution. The following case illustrates how these dynamics shape real-world outcomes—and how leadership, governance, and delivery choices ultimately determine whether programs succeed or fail.

Case Study: SSA Call Center Modernization

The Social Security Administration (SSA) operates one of the largest public-facing service platforms in the federal government, serving approximately 70 million Americans through its national 800-number network and field offices and handling a high volume of calls. In 2017, SSA faced growing problems with its aging, complex telephone infrastructure and rising wait times for the tens of millions of Americans who rely on the 800-number for help with benefits, Social Security numbers, and other services. To address these issues, SSA launched the Next Generation Telephony Project (NGTP), a large IT modernization effort intended to replace legacy telephone systems and unify call handling across the agency.

NGTP emerged from a traditional acquisition model: a detailed, waterfall-style specification, a large systems-integrator contract, and milestone-based progress tied to predefined technical requirements. In February 2020, SSA awarded an IDIQ contract to Verizon to design, implement, test, transition, operate, and maintain the new telephony platform, including procurement of hardware, software, and services. Implementation faced challenges from the beginning: Verizon’s award was contested, delaying the start of work. SSA’s team didn’t realize that the solution Verizon proposed, reinforced by SSA’s own contract requirements, relied on architectural components a generation behind leading contact center systems. NGTP’s 10-year planning horizon also meant any solution would likely be obsolete before full deployment.

In 2020, with the project still in early development, the COVID-19 pandemic forced SSA call center agents to work remotely, a capability the legacy system lacked. Verizon scrambled to assemble a custom stopgap solution, but it was plagued by problems. From May 2021 to December 2022, more than 40 service disruptions caused dropped calls, long wait times, and outages. At times, more than half of calls went unanswered because the team capped incoming calls to keep the system stable.

Meanwhile, NGTP suffered further delays and technical hurdles. SSA executives were frustrated but assumed they were contractually stuck. The system finally launched in December 2023 for the 800-number only, delivering just part of the promised functionality, and it experienced ongoing performance issues, including longer wait times and disconnected or unanswered calls that hindered the agency’s ability to serve the public. On August 22, 2024, after only about 10 months of operation, SSA moved the 800-number network off the NGTP platform to a different telephony solution. NGTP cost SSA over $160 million and was abandoned within a year of deployment.

The failure was not attributable to a single cause. Interviews and oversight findings point instead to a combination of over-specification, missing mission outcomes, weak accountability mechanisms, long planning horizons, and an acquisition structure that made adaptation difficult.

It is also important to recognize the scale and complexity of SSA’s operating environment. The agency’s service delivery depends on hundreds of interdependent systems, many of which encode decades of policy and operational logic. Modernization efforts must contend not only with outdated technology, but with deeply embedded business rules and integration dependencies that are not always fully visible at the outset. These conditions increase the difficulty of both specification and implementation, regardless of acquisition approach.

Specificity Did Not Produce Control

A central lesson of NGTP is that specificity in requirements does not necessarily translate into control over outcomes. The solicitation and technical requirements were extensive and highly prescriptive. They incorporated staff input but lacked sustained user-centered validation and focused heavily on defining technical components rather than the operational outcomes the system was intended to achieve. In several cases, the contract mandated architectural approaches that constrained flexibility and effectively locked the program into solutions already lagging prevailing commercial practice.

The NGTP contract required the development of significant custom telephony capabilities in a market where mature commercial Contact-Center-as-a-Service (CCaaS) platforms already existed. Custom software and hardware development inherently carries greater risk than configuring established commercial platforms: the first buyer bears the cost of defects, scaling problems, and design errors that mature products have already identified and resolved. As a result, the program assumed substantial technical risk without clear evidence that SSA’s mission required a bespoke system.

The decision to pursue a custom telephony architecture also introduced structural technical risks. The system was intended to function as a “single enterprise contact center” capable of routing calls across SSA’s national network. In practice, however, the implemented solution consisted of six separate contact centers operating as independent queues rather than a unified system. According to the SSA Office of Inspector General, this configuration prevented calls from being dynamically rerouted between queues, limited agents to answering calls from a single queue, and could disconnect calls when agents logged out of one queue even if capacity existed elsewhere in the system. These limitations increased wait times and created operational inefficiencies. Efforts to resolve the architectural mismatch led to the development of a custom routing “brain” intended to connect the six queues—effectively reinventing load-balancing technologies that have been widely used and commercially mature for decades. The need to retrofit this architecture required multiple contract modifications and created ongoing operational challenges. As one SSA leader later observed, “Some people on the project might have known that load balancers had been mature for 30 years, but managers weren’t listening to them.”
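The effect the Inspector General describes, callers waiting in one queue while agents sit idle in another, can be illustrated with a deliberately simplified snapshot model. This Python sketch is hypothetical: the queue names, agent counts, and call counts are invented, and it ignores real-world factors such as skills-based routing, call duration, and arrival timing. It compares how many callers are left waiting when six queues can draw only on their own agents versus when a single pool routes any waiting call to any idle agent, which is the basic idea behind load balancing.

```python
from dataclasses import dataclass


@dataclass
class Queue:
    name: str
    idle_agents: int    # agents logged into this queue and currently free
    waiting_calls: int  # callers currently holding in this queue


def unanswered_siloed(queues: list[Queue]) -> int:
    """Calls left waiting when each queue can use only its own idle agents."""
    return sum(max(q.waiting_calls - q.idle_agents, 0) for q in queues)


def idle_unused_siloed(queues: list[Queue]) -> int:
    """Agents left idle in their own queue while callers may be waiting elsewhere."""
    return sum(max(q.idle_agents - q.waiting_calls, 0) for q in queues)


def unanswered_pooled(queues: list[Queue]) -> int:
    """Calls left waiting when any idle agent can take any waiting call."""
    total_idle = sum(q.idle_agents for q in queues)
    total_waiting = sum(q.waiting_calls for q in queues)
    return max(total_waiting - total_idle, 0)


# Hypothetical snapshot of six independent queues (illustrative numbers only).
snapshot = [
    Queue("A", idle_agents=0, waiting_calls=12),
    Queue("B", idle_agents=5, waiting_calls=0),
    Queue("C", idle_agents=4, waiting_calls=1),
    Queue("D", idle_agents=0, waiting_calls=6),
    Queue("E", idle_agents=6, waiting_calls=2),
    Queue("F", idle_agents=3, waiting_calls=0),
]

print(unanswered_siloed(snapshot), idle_unused_siloed(snapshot))  # 18 15
print(unanswered_pooled(snapshot))                                # 3
```

In this invented snapshot, 18 callers keep waiting under siloed queues while 15 agents elsewhere are free; pooling the same staff leaves only 3 callers waiting. The arithmetic is trivial, which is the point: this is a long-solved routing problem rather than one that needed a custom “brain.”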

The contract’s prescriptive structure also undermined the flexibility typically associated with its contract vehicle. Although NGTP was structured as an IDIQ, the narrowly defined solution space meant that many necessary adjustments required formal work orders or contract modifications. In practice, the program combined the administrative rigidity of traditional contracting with the technical risk of custom system development.

The detailed specifications also locked the implementation into outdated architectural assumptions. For example, certain components were required to be compatible with an old, unspecified version of Internet Explorer, a browser Microsoft formally retired in 2022 in favor of Microsoft Edge. In rapidly evolving technology environments, highly specific requirements can become obsolete before systems are delivered. At the same time, the extensive technical detail did not fully address practical operational considerations, such as ensuring that existing SSA call center staff could easily access and use the system in their day-to-day workflows.

Missing Mission Outcomes

The NGTP case also illustrates the limits of operator-focused metrics. SSA understandably focused on call volume and the ability of the system to handle surges in demand. Previous infrastructure could “top out” during predictable spikes, such as cost-of-living adjustment periods. Capacity therefore became a central concern.

But throughput alone is not the same as service performance. For beneficiaries, the meaningful outcomes include how long it takes to reach a representative, whether the issue is resolved on the first contact, how many interactions are required, and how long it takes to complete a request. Those mission outcomes were not adequately embedded in the contract’s performance framework.

Metrics such as average speed of answer did not fully capture the user experience, particularly when calls were dropped, when they were handled initially by automated systems, or when callbacks were counted in ways that reduced reported wait times without necessarily reducing the time beneficiaries needed to obtain help.
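How a reported metric can diverge from what callers experience can be shown with a small hypothetical sketch in Python. It assumes a simplified reporting rule in which dropped calls are excluded from average speed of answer and a callback counts as “answered” when it is scheduled. That rule, the function names, and all call data are illustrative assumptions, not a description of SSA’s actual metric definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Call:
    minutes_to_answer: Optional[float]  # when the system logs the call as answered; None if dropped
    callback: bool = False              # caller chose a callback instead of holding
    minutes_to_callback: float = 0.0    # additional wait until the callback reached the caller


def reported_asa(calls: list[Call]) -> float:
    """Assumed reported metric: dropped calls excluded, callbacks counted when scheduled."""
    answered = [c.minutes_to_answer for c in calls if c.minutes_to_answer is not None]
    return sum(answered) / len(answered)


def caller_time_to_help(calls: list[Call]) -> tuple[float, int]:
    """Caller-centered view: add callback waits back in and count dropped calls separately."""
    helped = [
        c.minutes_to_answer + (c.minutes_to_callback if c.callback else 0.0)
        for c in calls
        if c.minutes_to_answer is not None
    ]
    dropped = sum(1 for c in calls if c.minutes_to_answer is None)
    return sum(helped) / len(helped), dropped


# Invented sample of six calls, for illustration only.
calls = [
    Call(10.0),
    Call(20.0),
    Call(2.0, callback=True, minutes_to_callback=120.0),
    Call(2.0, callback=True, minutes_to_callback=90.0),
    Call(None),  # dropped before reaching anyone
    Call(None),  # dropped before reaching anyone
]

print(reported_asa(calls))         # 8.5
print(caller_time_to_help(calls))  # (61.0, 2)
```

With these invented calls, the reported average is 8.5 minutes, while the average time until callers actually reached someone is 61 minutes, and the two dropped callers appear in neither average. A caller-centered outcome measure would track time to resolution and drop rates directly rather than relying on the reported figure alone.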

The deeper problem was architectural as well as contractual. SSA’s call center is best understood as a front-end interface to a much larger, deeply complex service delivery system involving eligibility determination, identity verification, claims processing, and payments. Yet the contract largely treated telephony modernization as a standalone technical problem rather than as part of an integrated operating model. This narrow framing also limited foresight into how the capability could evolve over time, for example by adopting emerging technologies or integrating with other agency systems to support an omnichannel service model. Defined primarily as a technical infrastructure effort, the project optimized for telephony components rather than positioning customer service as a strategic, cross-agency capability.

Accountability Was Weak Where It Mattered Most

Federal acquisition frameworks already provide multiple mechanisms for vendor accountability, including service level agreements (SLAs), financial incentives and penalties, option periods tied to demonstrated progress, and formal performance reviews. In the private sector, large IT and service contracts routinely embed operational standards such as uptime guarantees, response-time thresholds, incident-resolution timelines, and financial penalties for missing them, to ensure that vendors remain accountable for system performance under real operating conditions. In the NGTP case, however, these mechanisms were not sufficiently embedded in the contract structure or tied to mission outcomes and enforceable operational standards.

The SSA Office of Inspector General found that the NGTP contract lacked sufficient performance-based quality standards and incentives to ensure accountability for resolving system-performance issues. The practical result was limited leverage for the government even when the system failed to meet technical and operational needs.

The most striking example came at termination. When SSA stopped work on the NGTP effort, the agency still paid the vendor the remaining portion of the full $125M contract amount. Whatever the legal and operational considerations behind that decision, the message to the market was problematic: poor performance did not produce a proportionate financial consequence.

SSA’s Course Correction

SSA’s response illustrates an alternative approach. Rather than pursuing another large, fully specified replacement effort, the agency adopted a more incremental strategy using cloud-native technology and more flexible contract mechanisms. A proof-of-concept deployment of Amazon Connect at a Pennsylvania call center allowed SSA to test the platform in live operating conditions before scaling further.

This approach introduced several disciplines that had been missing from NGTP. It reduced dependence on bespoke infrastructure, created an opportunity to measure performance under real conditions, and allowed the agency to collect operational evidence before broader rollout. Critically, assumptions were tested incrementally rather than embedded upfront. The agency also adopted Product Operating Model best practices: it stood up a cross-functional product team with a product manager, a technical lead, a design lead, and an SME lead who was responsible for state-specific launches, training, and key metrics.

Early results suggested improvement. SSA’s Office of Inspector General reported that the agency’s telephone service handled substantially more callers in fiscal year 2025 and that reported average speed of answer improved. The subsequent administration leveraged the scalable platform to expand deployment across all field offices. At the same time, oversight and public reporting also highlighted the importance of careful metric design. Some reported gains did not fully reflect the total time beneficiaries waited for callbacks or to resolve their issues. That distinction is key: better performance frameworks depend not simply on more metrics, but on the right metrics.

Lessons for Outcome-Based Acquisition

The SSA case highlights several lessons:

- Complex systems cannot be fully specified in advance. Over-specification increases risk and can lock programs into the wrong solution.

- Iterative delivery is a risk management tool. It surfaces integration, usability, security, and performance problems early enough to address them.

- Accountability must be tied to mission outcomes. Operational and customer experience results matter more than intermediate artifacts.

- Governance matters as much as contract structure. Strong product ownership and leadership are essential. Critical to the successful turnaround was having a cross-functional “product quad” of product management, engineering, design, and domain expertise. In the NGTP case, requirements were largely defined within an infrastructure-oriented telecommunications function, leading to a solution optimized for technical components rather than end-to-end service outcomes. This organizational starting point constrained problem framing and limited the program’s ability to align delivery with user needs and mission performance.

An outcome-based model would have defined mission metrics such as first-contact resolution and total time to complete transactions, incorporated discovery phases, and tied continuation decisions to demonstrated performance. It also would have set a precedent for early adoption of the monitoring practices that leaders relied on during the course correction, such as integrating real-time customer experience telemetry into daily operations. That telemetry enabled continuous monitoring of user outcomes and rapid reprioritization of features as issues emerged.

Finally, contract structure alone is not sufficient. Successful implementation depends on sustained leadership, technical judgment, and the institutional willingness to act on evidence. Several interviewees noted that meaningful progress accelerated only after leadership with prior agile and product delivery experience assumed responsibility for the effort. Acquisition structure can enable better outcomes, but it cannot substitute for leadership capable of making informed technical and operational decisions in complex environments.

Conclusion

Large-scale IT modernization is central to federal mission delivery. Traditional acquisition models remain effective in stable, well-defined environments but are poorly matched to software-intensive systems characterized by uncertainty, interdependence, and continuous change.

Outcome-based contracting provides a more effective framework for these conditions. It strengthens accountability by tying funding and continuation decisions to measurable performance, improves risk management through iterative delivery, and reorients acquisition toward public value. Rather than asking whether a contractor delivered what was specified, it asks whether the government achieved the mission results it needed.

Realizing this shift requires more than changes to contract structure. The authorities to pursue outcome-based approaches largely already exist, but incentives, funding constraints, and workforce capabilities continue to reinforce specification-driven models. Appropriations structures limit flexibility, oversight mechanisms emphasize compliance over performance, and many agencies lack the product management and data capabilities needed to define and act on outcome metrics. Addressing these constraints will require coordinated changes across budgeting, oversight, acquisition practice, and workforce development.

In the near term, IT modernization progress should be visible in concrete ways: contracts that tie option decisions and incentives to mission outcomes; programs operating with empowered Product Owners and real-time performance data; and evaluation frameworks that prioritize whether services are improving, not just whether requirements were met. Over time, this would mark a broader shift from managing compliance with plans to managing performance against outcomes.

For technology and IT modernization efforts, the success of outcome-based contracting depends on alignment with product operating model practices, technical expertise, and sustained leadership. The central proposition of OBC is not less discipline but better discipline: discipline organized around measurable outcomes, empirical evidence, and the continuous identification and reduction of technical and operational risk.

Who Governs Government AI? The Challenge of Federal Implementation

Public Trust and the Stakes of Federal AI Regulation

Americans are skeptical that their government can regulate artificial intelligence. A Pew Research Center study from October 2025 found that while large majorities in countries like India (89%), Indonesia (74%), and Israel (72%) trust their governments to regulate AI effectively, only 44% of Americans say the same, and a greater number, 47%, express distrust. Globally, more people trust the European Union (53%) to regulate AI than the United States (37%). Americans will only realize the benefits of AI if they have confidence that these systems are used safely, fairly, and in ways that improve their lives.

Trust is not a soft concern: it is the foundation for the adoption, legitimacy, and long-term success of any technology. When people doubt that AI systems are governed responsibly, they are less likely to accept their use in sensitive domains like healthcare, education, public benefits, or national security. Public skepticism can slow innovation, undermine compliance, and deepen polarization around emerging technologies. Encouragingly, this is not a partisan issue. Republicans and Democrats alike have emphasized that trustworthy AI use is a prerequisite for public adoption and lasting legitimacy. If the U.S. is going all-in on AI, then building and maintaining that trust is not simply a communications challenge; it is a governance imperative.

The federal government plays a starring role in meeting that imperative, not only as a regulator but also as a model user of AI. It deploys some of the most consequential and high-risk AI systems, including those that shape access to benefits, guide law enforcement priorities, manage immigration processes, and support national security decisions. The federal approach to deploying these systems does more than affect service delivery or cost savings; it sets expectations for industry standards, academic research, and public perception of the technology. In effect, the federal government serves as a societal-level proving ground for AI governance. Because it uses AI in high-risk contexts, it must demonstrate that these systems can be governed effectively through transparency, oversight, accountability, and meaningful safeguards. Failure to do so would not only diminish confidence in AI as an economic and societal asset but also weaken the already tenuous trust the public has in government as a manager of risk and opportunity.

Two use cases illustrate this point. One existing high-potential but high-risk application is the Department of Veterans Affairs’ (VA) REACH VET program, which uses predictive models to identify veterans at elevated suicide risk so clinicians can proactively reach out. Because the model draws on health records and includes explicit race coding, it raises concerns about opaque modeling choices and the possibility of inequitable or incorrect flags. The stakes are high. If veterans feel that an algorithm is driving interventions without transparency, clinical guardrails, and accountability, or if the model misses veterans who need intervention, trust can erode not only in REACH VET but also in the VA’s broader use of AI and in its mental health screening and treatment programs.

Planned uses of AI in the current administration are also concerning. CMS’s planned Medicare WISeR Model would test whether “enhanced technologies,” including AI, can “expedite the prior authorization processes for select items and services that have been identified as particularly vulnerable to fraud, waste, and abuse, or inappropriate use.” In practice, this could result in automated systems delaying or denying coverage for medically necessary prescriptions or treatments if a model incorrectly flags them as suspicious. The trust risk is immediate: prior authorization already feels like a barrier to care, and adding AI without appropriate guardrails or adjudication can make delays or denials seem more automated, less explainable, and harder to challenge, especially for older or medically complex beneficiaries. If people perceive AI as prioritizing cost control over care, that perception will quickly undermine confidence in Medicare and in government AI more broadly.

These two use cases show that setting parameters for federal AI governance is not an abstract compliance exercise; it directly shapes whether people experience AI as a helpful tool or as an unaccountable gatekeeper in some of the most sensitive and consequential interactions they have with the government. Federal guidance on incorporating elements like risk assessments, inventory documentation, and recourse processes into agency deployments plays an outsized role in fostering trust in government use of AI.

Attempting to meet this challenge, both the Biden and Trump administrations have issued major federal guidance on how agencies should govern their use of AI. In 2024, the Biden administration’s Office of Management and Budget released OMB Memorandum M-24-10: Advancing Governance, Innovation, and Risk Management for Agency Use of Artificial Intelligence as part of its role in establishing how federal agencies operate and implement government-wide regulations. This memorandum set forth a government-wide framework for the responsible use of AI, including requirements for risk assessments, transparency, safeguards for high-impact systems, and clear waiver processes. However, we previously found that the growing body of AI-specific guidance, layered on top of existing procurement rules such as the Federal Acquisition Regulation (FAR), can be difficult for agencies and vendors to navigate, particularly when determining at what stage in the acquisition process risk and impact assessments should occur.

Last year, the Trump administration’s OMB superseded OMB M-24-10 with new guidance: M-25-21: Accelerating Federal Use of AI through Innovation, Governance, and Public Trust. This memo includes elements similar to the Biden administration guidance, but its more flexible, agency-driven model also makes consistent implementation more challenging. The shift toward greater agency discretion likely reflects the administration’s emphasis on accelerating AI adoption and reducing centralized compliance requirements that could slow experimentation or deployment. Agencies now shoulder greater responsibility for building their own governance and compliance structures, a task that depends heavily on available resources and technical capacity. Well-funded agencies may be positioned to meet these expectations, while smaller or resource-constrained agencies, including those whose tools have the greatest impact on low-income or marginalized communities, may struggle to develop and implement the same safeguards. The result is a growing risk of fragmented governance across the federal landscape, with uneven protections for the people most affected by AI systems.