Federation of American Scientists, Future of Life Institute Present Converging Risks Report, AI Impact Awards at Gala

FAS AI Impact Awards Presented to Advocates, Civil Society Entrepreneurs, Industry Experts, and Policymakers

Washington, D.C. – May 20, 2026 – Tonight at the International Spy Museum in downtown Washington, D.C., the Federation of American Scientists (FAS), a non-partisan, nonprofit science and technology policy organization, in partnership with the Future of Life Institute, the world’s oldest and largest AI think tank, conclude an 18 month project to investigate the implications of artificial intelligence on global risk.

FAS and FLI partnered to build a series of convenings and reports across the intersections of artificial intelligence (AI) with biosecurity, cybersecurity, nuclear command and control, military integration, and frontier AI governance. This project brought together leaders across these areas and created a space that was rigorous, transpartisan, and solutions-oriented to approach how we should think about how AI is rapidly changing global risks. Adapting to this reality will demand that policy entrepreneurs take action; scientific and technological expertise is a must for successful policymaking.

“FAS is dedicated to developing evidence-based policies to address national challenges, and the technical advances of artificial intelligence are already outpacing our expectations. We recognized an urgency in convening expertise across disciplines to better understand how we can reduce risk and increase societal rewards,” says FAS CEO Daniel Correa.

“AI is no longer a single-domain challenge. It is a force multiplier reshaping the risk landscape across nuclear, biological, cyber, and military systems simultaneously, and it is doing so faster than our institutions can adapt,” says Future of Life President and CEO, Anthony Aguirre. “That is precisely why this partnership with FAS has mattered so much. The report gives decision-makers a clear-eyed map of how these threats are compounding, and what we can do about it. The window to put sensible guardrails in place is open, but it is closing quickly. The leaders we are honoring show that rigorous, bipartisan action on the most consequential technology of our era is both necessary and possible.”

The AI x Global Risk Gala, moderated by Ashley Gold, Senior Technology Policy Reporter at Axios, will highlight a capstone report and present awards in recognition of AI policy leaders. Bloomberg‘s cyber and emerging tech reporter, Katrina Manson will host a discussion panel about the report. The panel will include FAS board member and former Acting Under Secretary for Science and Technology at the Department of Homeland Security, Dr. Daniel Gerstein.

‘Converging Risks’ Report

The primary report, Converging Risks: AI and the Future of Global Security, is the synthesis of sector-specific investigations into nuclear policy, cyber policy, biotechnology, defense, and critical infrastructure. Increasingly, AI cuts across all of them simultaneously.

The FAS team evaluated risks through the “Threat, Vulnerability, and Consequence” or “TVC” framework, a powerful acknowledgement of how stakes rise alongside introduction and interaction with multiple factors.

The report illustrates how AI is complicating the risk calculus, adding complexity to systems and events, changing the speed at which we need to respond, and often increasing the scale of the risk.

“Despite the very real risks artificial intelligence presents, our report is not fatalistic,” says Dr. Jedidah Isler, FAS’s Chief Science Officer. “We know that productive conversations and proactive policy cannot happen if we operate from a state of hype, fear or ignorance. As scientists, we must use all of the tools at our disposal to reckon with what is very likely to be one of the most consequential technologies of this era. It’s innately a sociotechnical problem: it’s not just the technology, but what we think about it and how we collaborate in the face of tremendous change. We must begin by building government capacity, coordination, and translation infrastructure now.”

FAS AI Impact Award Winners

FAS will also present four awards at the Gala: the AI Advocacy Award, AI Impact Award for Civil Society, AI Impact Award for Industry, and the AI Policy Award.

Joseph Gordon Levitt, AI Impact Award for Advocacy

Joseph Gordon Levitt, the UN’s first global advocate for “human-centric digital governance”, will receive the AI Impact Award for Advocacy for his work raising awareness of AI risks to non-technical audiences using his skills as a writer, director, communicator, and educator.

Mr. Levitt’s recent advocacy includes speaking out about Meta’s AI chatbots endangering children (September, 2025) and supporting an AI and child safety bill in Utah (January 2026).

Mr. Levitt and his organization, HITRECORD, explore the intersection of technology and society through both his creative work and advocacy around digital governance.

Sneha Revanur, AI Impact Award for Civil Society

Sneha Revanur will receive the AI Impact Award for Civil Society for her work founding a civil society organization, Encode, that works to influence federal AI policy that unifies pro-AI, pro-human perspectives.

Ms. Revanur began her activism work at age 15 when she learned that California was considering replacing its cash bail system with a risk-based algorithm and that the algorithm had serious racial bias baked into it. She organized a statewide coalition of high school students, fought the ballot measure, and helped defeat it by 13 percentage points.

Today, Ms. Revanur continues her activism work in AI regulation to ensure that trust and fairness are built into the often invisible systems that can have enormous impact on daily life.

Chris Meserole, AI Impact Award for Industry

Chris Meserole, Executive Director of the Frontier Model Forum, will receive the AI Impact Award for Industry for his work examining the security risks associated with artificial intelligence. He’s working to determine best practices to ensure strong interconnection between industry, research, and government.

Prior to the Frontier Model Forum, Chris served as Director of the AI and Emerging Technology Initiative at the Brookings Institution and a fellow in its Foreign Policy program.

Today, Mr. Meserole works extensively on safeguarding large-scale AI systems against the risks of accidental or malicious use.

Senator Blackburn (R-TN) and Senator Blumenthal (D-CT),

AI Impact Awards for Policy Leadership

How we govern AI’s impact on society is of utmost importance. Decisions made today will drive outcomes for years, and potentially decades, to come. FAS is presenting two AI Impact Award for Policy Leadership to honor work that anticipates and addresses future risks presented by artificial intelligence.

Senator Marsha Blackburn (R-TN), Senator Richard Blumenthal (D-CT) will be presented with the AI Impact Awards for Policy Leadership for their respective leadership navigating fast-moving technology and its implications.

Senator Blackburn of Tennessee has been a bold and consequential leader on AI policy. Last summer she successfully fought to remove a provision from federal legislation that would have blocked states from protecting their own citizens from AI harms for a decade. In December, she put forward a comprehensive national framework for AI governance that requires companies to conduct real risk assessments and establishes concrete rules on training data and deepfakes. Senator Blackburn also leads the Transparency and Responsibility for Artificial Intelligence Networks (TRAIN) Act, a bipartisan bill aimed at helping musicians, artists, writers, and other copyright holders determine whether their work has been used to train generative artificial intelligence models.

Senator Blackburn’s forward thinking on AI has driven leadership on quantum computing development. She is advancing bipartisan legislation like the National Quantum Initiative Reauthorization Act to provide necessary infrastructure for future AI capabilities.

Senator Blackburn serves on the Senate Committee on Commerce, Science, and Transportation, of which she is Chairman of the Consumer Protection, Technology, and Data Privacy Subcommittee, as well as on the Senate Judiciary Committee, of which she is Chairman of the Privacy, Technology, and the Law Subcommittee.

Senator Blumenthal of Connecticut has been one of the earliest and most consistent voices on Capitol Hill regarding technology and its implications for society. He has been using his voice to demand that Congress show up for this moment. He brought Sam Altman to Congress for the first time back in 2023 to help educate lawmakers and urge them to act. He has since pushed for his AI Accountability and Personal Data Protection Act, bipartisan legislation to hold AI companies accountable for how they use copyrighted material to train their models. He also introduced the bipartisan AI Risk Evaluation Act which would create a dedicated AI risk-evaluation program within the Department of Energy focused specifically on national security, civil liberties, and labor protections. Senator Blumenthal co-leads the bipartisan Guidelines for User Age-verification and Responsible Dialogue (GUARD) Act to protect children against harms from AI bots, and this legislation is advancing in the Senate.

Senator Blumenthal serves on Senate Committees on Armed Services, Judiciary, and Homeland Security and Government Affairs.

Two senators. Different parties. Different states. Different politics. Same conclusion: Congress cannot afford to sit this one out.

Policymakers in Attendance

Additional policymakers invited to the Gala have demonstrated leadership in advancing evidence-based artificial intelligence legislation, including:

Congressman Jim Himes (D-CT) serves as Ranking Member on the House Permanent Select Committee on Intelligence, has deep experience and unique insights into how U.S. intelligence agencies and the national security apparatus integrate artificial intelligence models, including how models could be used for hacking and cyberdefense. He will be a panelist at the gala.

Senator Elissa Slotkin (D-MI) serves on the Senate Armed Services Committee as Ranking Member of the Subcommittee on Emerging Threats and Capabilities, and introduced the AI Guardrails Act to address AI use around lethal force, spying on Americans and nuclear weapons. The bill seeks to codify two existing Defense Department guidelines into law: that AI cannot autonomously decide to kill a target and that the technology cannot be used to conduct mass surveillance on Americans. It would also ban the use of artificial intelligence for launching or detonating a nuclear weapon.

Congressman Don Bacon (R-NE) serves on the House Armed Services Committee as Chairman of the Subcommittee on Cyber, Information Technology and Innovation. Congressman Bacon has championed and overseen the passage of numerous provisions pertaining to AI and risk in the FY26 NDAA. Bacon joined the Congressional probe into Elon Musk’s Grok AI over allegations of antisemitism and ‘deeply alarming messages’ (July 2025).

Congressman Bill Foster (D-IL), Congress’s only member holding a PhD in physics, introduced the bipartisan Responsible and Ethical AI Labeling (REAL) Act, which would mandate a “clear, conspicuous, and prominently displayed” disclaimer notifying readers or viewers that content was created with or manipulated by AI.

Congressman Rich McCormick (R-GA) serves on the House Armed Services Committee and as the chairman of the Subcommittee on Oversight and Investigations. He also serves on the Armed Services Committee, Oversight and Government Reform Committee, and is a former member of the bipartisan Task Force on Artificial Intelligence.

###

About the Federation of American Scientists (FAS)

The Federation of American Scientists (FAS) works to advance progress on a broad suite of contemporary issues where science, technology, and innovation policy can deliver transformative impact, and seeks to ensure that scientific and technical expertise have a seat at the policymaking table. Established in 1945 by scientists in response to the atomic bomb, FAS continues to bring scientific rigor and analysis to address national challenges. More information about FAS’s work at fas.org.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefiting life and away from extreme large-scale risks since its founding in 2014. Find out more at futureoflife.org.

RESOURCES

AI x Global Risk Nexus Project

Converging Risks: AI and the Future of Global Security (and briefing booklet)

- AI and Nuclear Command, Control, and Communications

- AI and Military Integration

- AI and Biological Risks

- AGI and Global Risk

- AI, Cyber, and Global Risk

- AI and Nuclear Risks

FAS AI Impact Award Winners

More on AI Advocacy Award winner Joseph Gordon Levitt

More on AI Impact Award for Civil Society winner Sneha Revanur and Encode

More on AI Impact Award winner Chris Meserole and Frontier Model Forum

More on AI Impact Awards for Policy winners Senator Marsha Blackburn (R-TN) and Senator Richard Blumenthal (D-CT)

Face Recognition Performance, Bias, and the Limits of Technical Fixes

Christopher Gatlin was arrested for a brutal assault he didn’t commit after AI Face Recognition Technology (FRT) said he matched the suspect. He spent 17 months behind bars, and clearing his name took two years. As of March 2026, there were at least nine documented U.S. wrongful arrests tied to face recognition misidentification, mostly involving Black people. From 2012 to 2020 Rite Aid customers, disproportionately in non-white neighborhoods, were flagged by FRT as shoplifters, confronted, and sometimes expelled, including the searching of an 11 year old girl, all on the basis of bad matches.

Errors made by FRT are one cause of these harms, and these systems are known to make more errors on certain populations, including Black people, women, East Asians, and older people. But the way these systems are used by humans is a key component of these errors. Christopher Gatlin was identified based on a grainy photo of a hooded, partially obscured face, which could not be expected to lead to reliable identification. Moreover, police arrested him despite a lack of corroborating evidence. Harms caused by Rite Aid were due in part to a decision to mainly deploy face recognition in disproportionately non-white communities, as well as a lack of proper user training and the use of poor quality photos.

At the same time, face recognition does provide real benefits. In controlled, cooperative settings such as unlocking phones, banking apps, or passport verification, modern systems can be highly accurate. NIST evaluations show dramatic improvement over time, with errors occurring about one time in 1,000, depending on conditions. Millions of Americans use face recognition daily for convenience and security.

In tasks involving uncontrolled settings with uncooperative subjects however, such as identifying people from surveillance images, accuracy is much lower and more difficult to measure. Law enforcement and child-protection organizations have still used face recognition to identify suspects, locate missing children, and support trafficking investigations, but the potential from harms from inaccurate results in high stakes settings is much greater. Furthermore, the effect of biased performance is magnified in these uncontrolled settings, in which the number of errors seems to be much greater for some subpopulations. This report focuses on the causes of this bias, its potential harms and possible steps to reduce these harms. The use of face recognition in mass surveillance obviously raises other serious potential concerns, but these are outside the scope of this report.

Harms from FRT result both from technical errors and flaws in the ways humans use these systems. This suggests two parallel strategies for reducing the negative effects of biased face recognition. One approach is to reduce the bias in face recognition systems directly. Bias can occur due to training FRT using biased datasets that do not accurately reflect the demographics of the overall population. This can be difficult to eliminate due to the massive scale of data used to train FRT, which makes it difficult to control or even understand the demographics of the data. But further efforts can be made to reduce demographic bias in the data. Numerous other external factors that are more difficult to control may also create biased performance. Consequently, in the near term it may be practical to reduce, but not to completely eliminate biased performance.

A complementary approach to reducing harms from biased face recognition is to ensure that FRT are used appropriately by human operators. This solution is much easier to implement in the near term than the previous technical solution. It is not sufficient, however, simply to ensure there is a human in the loop confirming the results of FRT, since often FRT are more accurate than humans, their errors occur on challenging cases, and people may be unable to correct these errors. Behavioral policy interventions range from research aimed at better measuring bias and understanding when FRT results are not trustworthy to clear standards for how human operators use and interpret the results of FRT and restricting the use of FRT when potential harms outweigh the benefits.

In this report we provide an overview of face recognition performance and differential performance between different demographic groups. We summarize results from the National Institute of Standards and Technology assessing performance of numerous commercial face recognition systems. And we provide an overview of potential policies to reduce harms from face recognition bias.

Acknowledgements

Our understanding of this topic has benefitted greatly from conversations with Kevin Bowyer, Leah Frazier, Patrick Grother, Anil Jain, Brendan Klare, Alice O’Toole, Jonathan Phillips, Jay Stanley, and Nathan Wessler. We also received insightful comments and suggestions from Clara Langevin and Caroline Siegal Singh. Any failure in understanding is due to the authors.

Contents

Introduction

Face Recognition Technology Has Caused Significant Harms

Improper development or use of face recognition technology (FRT) can lead to serious harms. One such example occurred in 2020 when Christopher Gatlin was arrested for a brutal assault he didn’t commit after a face recognition system proposed him as a possible match for the suspect. He spent 17 months behind bars, and clearing his name took two years. Porcha Woodruff, eight months pregnant, spent 11 hours in detention for a carjacking after another bad match, even though surveillance footage showed the suspect was not pregnant. As of March 2026, there are at least nine documented U.S. wrongful arrests tied to face recognition misidentification.

In another example of this dynamic, Rite Aid, a major pharmacy chain, deployed face recognition technology widely in stores to spot alleged serial shoplifters. Impacted customers, disproportionately in non-white neighborhoods, were flagged, confronted, and sometimes banned from stores, including searching an 11 year old girl, all on the basis of bad facial recognition matches. Federal regulators later banned the company from deploying facial recognition technology in stores for five years, noting higher false-positive rates in stores serving predominantly Black and Asian communities and improper pre-deployment safeguards (more details here).

These instances of incorrect matching and arrests have mostly involved non-white people. But, while errors may be more prevalent among these populations, as FRT use grows it can increasingly affect all people. For example, police recently released a white Tennessee grandmother who had been wrongly jailed for nearly six months based on FRT results. She was arrested while babysitting four children, accused of committing bank fraud in North Dakota, although she had never been there. Unable to pay her bills, she lost her home.

Figure 1. On the left is a surveillance photo taken at a crime scene. On the right is the image of Robert Williams that was incorrectly matched to this photo by an automatic face recognition system.

The harms described above were instigated by flawed matches produced by FRT—computational models that perform face recognition. However, these models always form part of a larger system in which humans apply FRT to some task. The failures were not just the product of a bad model, but of human failure to follow effective procedures. In many cases, face recognition searches are performed using low resolution images, with faces partially obscured. Figure 1 shows the surveillance photo used to identify Robert Williams, who was wrongly arrested for theft on the basis of this image. He later stated, “My daughters can’t unsee me being handcuffed and put into a police car.” In some cases, police have violated accepted practice with suggestive remarks that prompt witnesses to confirm the results of automatic face recognition technology. In the Rite Aid case, poor employee training, the use of low quality images, and many other deployment decisions contributed to a large number of mistaken identifications.

Face Recognition Technology is Increasingly Widely Used

Face recognition technology has become increasingly accurate and widely adopted. It is estimated that 131 million Americans use face recognition on a daily basis for applications such as unlocking their phones or banking apps, providing convenience and improving security. FRT usage is especially prevalent in applications in which the person being recognized cooperates with the system. In controlled, cooperative settings, face recognition systems have improved rapidly, with error rates roughly halving every two years in some evaluations. Under ideal conditions, top-performing systems may make a mistake only once in several hundred attempts.

Face recognition is also increasingly used by law enforcement agencies to identify uncooperative subjects, identify criminal suspects, and find missing children. Its use in surveillance is also growing. For example, Immigration and Customs Enforcement (ICE) is using FRT to identify people and determine their immigration status. In these applications, FRT often successfully identifies individuals, but their accuracy is not as high, and the potential for harmful errors increases. An incorrect match in this instance can potentially result in wrongful detention or deportation of American citizens. As face recognition use grows, so will its benefits and harms, making it an urgent matter to understand its properties, impact, and effective policy interventions.

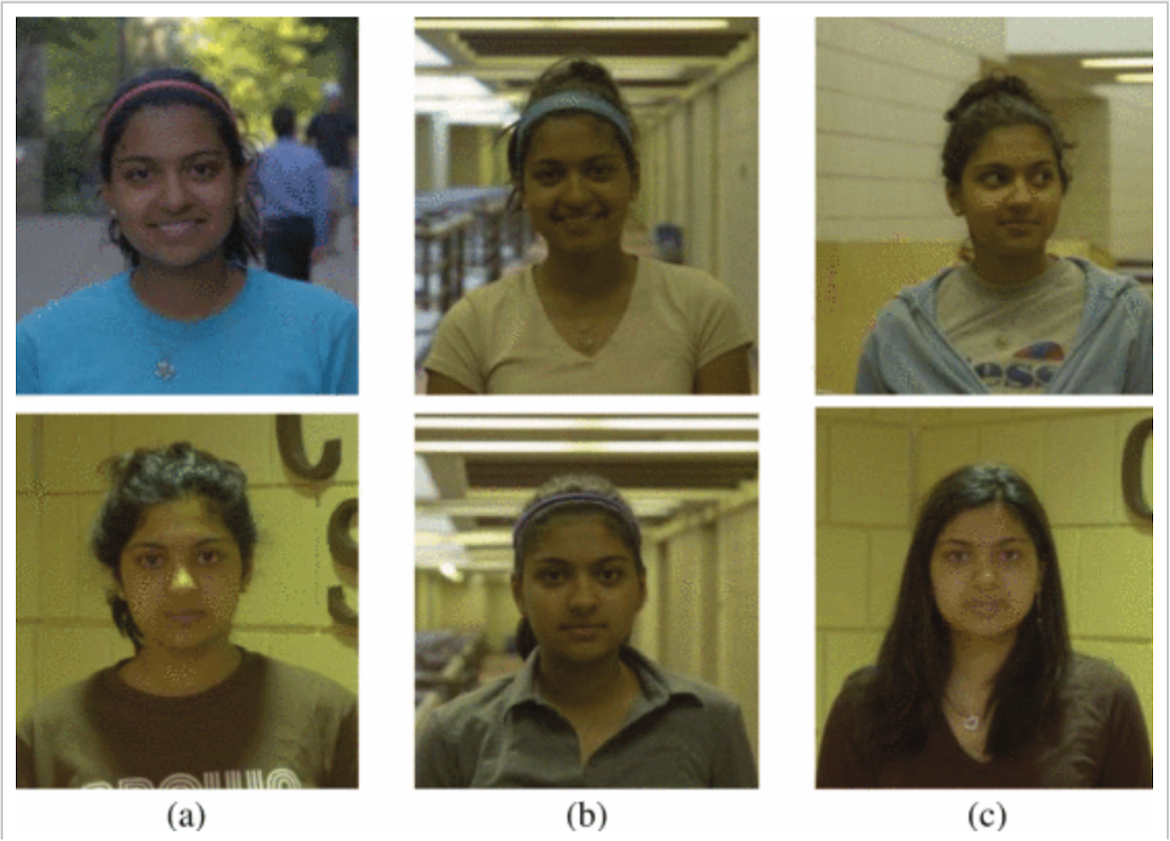

Figure 2. Each column shows a pair of images of the same person. Experimental subjects find the images on the left easiest to match, while it is most difficult to determine that the images on the right come from the same individual.

Face Recognition Difficulty Varies Significantly

The difficulty of face recognition problems varies tremendously depending on the setting. Figure 1 has already shown a difficult operational setting, in which a poor quality surveillance image must be matched. A human examining these images has a hard time telling whether they are of the same person. Figure 2 shows that even when images are of good quality, it is not always easy to tell whether they come from the same person, due to changes in things like hairstyle.

What Do We Mean by Bias in Face Recognition?

Bias in face recognition has been the subject of significant public concern and extensive research over the past decade, particularly as these systems have been deployed in high-stakes settings such as law enforcement and surveillance. This report examines the nature, causes, and consequences of this bias, and in this introduction we begin with a brief discussion of what we mean by “bias”.

Face recognition is meant to solve a problem that has an objectively correct solution; do these two images come from the same person? We say the system displays bias against certain demographic groups if it makes more errors on these groups than on the general population. We will use the terms “bias” and “differential performance” interchangeably.

FRT have consistently shown worse performance on women than men and worse performance on Black people than on white people, and many FRT display worse performance on East Asian people than white Americans. One way that bias can occur is through training FRT models using unbalanced data that better represents some groups. When this occurs, bias can be mitigated by augmenting the training set to represent different groups more equally.

However, defining demographic subgroups exactly can be difficult, making it hard to balance data. Studies that compare performance on men and women generally ignore subtleties of gender identity. Groups of Black or white people used in studies certainly contain many individuals of mixed race and, for example, Black people in the United States might have a different distribution of traits than Black people from East Africa. Different studies sample demographic subgroups in different ways, and therefore may not be evaluating exactly the same questions.

Moreover, it is unclear how best to define demographic subgroups. For example, is it more fruitful to measure differential performance between white and Black people, or between light-skinned and dark-skinned people? Black people can differ from white people not just in skin tone but also in structural properties of their face. At this time, it is unclear which aspects of appearance account for differential performance and how this would align with all possible subgroups. Most studies have been limited to a few broad demographic categories and it is not known, for example, whether performance would differ between specific nationality groups within a similar region such as Vietnamese and Korean people.

Outline of the Rest of the Report

This article aims to provide necessary background to assess the trajectory and risks of bias in face recognition technology. We do not address other important concerns about FRT, such as maintenance of privacy and the use of FRT in mass surveillance.

In the next section we will briefly describe how face recognition systems work. We will then discuss the world-wide scope of face recognition. Next we summarize the accuracy of FRT and how this has progressed. We then discuss the nature of bias in FRT, and consider the causes of this bias. Next we consider FRT as part of a socio-technical system, and the impact of human users on FRT harms. Finally, we suggest possible policy interventions to reduce these harms.

This report makes the following points:

1. Improvements in accuracy have not eliminated bias.

Face recognition systems have become significantly more accurate in recent years, but they continue to exhibit differential performance across demographic groups.

2. Bias is difficult to measure and difficult to fully eliminate.

In real-world, uncontrolled settings, bias is harder to quantify and may be larger than benchmark results suggest. While technical interventions can reduce disparities, there is no simple or complete solution.

3. Harms arise from both technical errors and how systems are used.

Errors in face recognition can lead to significant harms, including wrongful arrests and other adverse outcomes. These harms are often amplified by deployment decisions, such as where systems are used and how results are interpreted.

4. Face recognition should be understood as a sociotechnical system.

Bias and harm arise not only from the underlying models, but also from human judgment and organizational practices. Inappropriate use of face recognition results can be more significant than technical error.

5. Policy interventions can reduce harms even without perfect technical solutions.

Effective policies include improving transparency and evaluation, supporting research on real-world performance. Furthermore, just having humans check the results of FRT is not sufficient to avoid errors; this requires establishing clear, detailed protocols governing when and how face recognition may be used.

6. Governance of use is as important as improving the technology.

Auditing data and system outputs, developing tools that signal when results are unreliable, and enforcing strict use protocols can significantly reduce the risk that errors lead to harmful outcomes.

Glossary

How Face Recognition Works

Face recognition is based on machine learning, and highly dependent on the use of large-scale data sets. This data is difficult to carefully control or characterize.

Face Recognition refers to the process of automatically identifying a person from a photo. It is divided into two tasks. In verification (or one-to-one matching), two images of faces are compared to provide a yes/no answer to the question of whether they come from the same person. This is used, for example, in border control, when a live image of someone may be compared to their passport photo. In identification (or one-to-many matching), a single probe face image is compared to a potentially large gallery of images to determine which, if any faces in the gallery match the probe image. The gallery might contain, for example, mug shot images of people who have been arrested, driver’s license photos, images of people who have been barred from access to casinos, or a large collection of images scraped from the internet. A system performing identification might declare that it finds no match, return a single match, or return a potentially large collection of images that might resemble the probe image. In the latter case it is expected that these potential matches will be assessed by the user to identify valid matches. FRT may also return a confidence level about the correctness for each match, although these may not correspond to the true probability that the match is right.

A Brief History of Face Recognition

The first fully automatic face recognition system was developed 50 years ago as the subject of the PhD thesis of Takeo Kanade, who went on to become one of the pioneers in the field of computer vision. It identified landmarks on the face, such as the corner of the mouth, and used their position to compare images. Early methods like this, based on face geometry, had limited effectiveness. Scientists began to develop more useful and accurate face recognition systems through the growing use of machine learning, beginning in the late 1990s. These methods are trained with numerous face images, called a training set, to automatically extract representations of faces that can be used to compare them more robustly.

Progress accelerated rapidly as researchers began to appreciate the power of using an approach known as neural networks, which allowed them to leverage massive datasets of faces to “teach” the computer how to recognize new faces. While neural networks were used by FRT by the late ’90s, their use became dominant in the mid-2010s after further breakthroughs in machine learning with large neural networks, a technique known as deep learning. Since the mid-2010s, improvements in model architectures, training methods, and data scale have driven substantial gains in measured accuracy, especially on standardized benchmarks. At the same time, these advances have enabled rapid adoption of face recognition across a range of applications, from smartphone authentication to large-scale identification systems used by governments and private firms, even as performance in real-world settings remains highly dependent on context.

How Face Recognition Models Are Trained

To perform accurately, an FRT must be able to determine that two images of the same person are similar, even if the images are taken at different times, from different viewpoints, under different lighting conditions. This is done by training the machine learning model to extract a representation that captures facial properties that can distinguish one person from another, but that are not significantly affected by viewing conditions or even some aging. The similarity between two faces can be given a numerical score that represents the degree of difference between the representation of each face.

In its simplest form, training occurs by incrementally adjusting the parameters of a neural network. In most current publicly available systems these parameters consist of tens of millions of numbers that control the network’s behavior. If it is shown two images of the same person, the parameters are adjusted to increase the similarity score. If the images are of two different people, parameters are changed to lower the score. Once the model is trained, if two images produce a similarity score above a chosen number, known as the cutoff, the system declares the two images to be the same person; if it falls below that cutoff, the system says they are different.

Once the model has been trained, it can perform identification using a gallery of faces by comparing a representation of the probe to representations of the gallery images. That is, it can verify or identify images of people who were not in the training set, because it has learned a general representation that should apply to any faces.

The large data sets used in training are typically scraped from the internet. For example, one influential early data set, Labeled Faces in the Wild, made use of face images detected in Yahoo! news stories, with identifying captions. A number of large scale datasets containing millions of images have been developed using photos of celebrities available on the internet. Some companies, such as Meta and Google have made use of internal data that users have uploaded and labeled; these training data sets may contain more than 100 million images. Clearview, a face recognition company, claims to use data sets of more than 70 billion face images scraped from the internet. Given the high cost and diminishing returns of training with so many images it is unlikely that all of these images are used for training, and this large corpus is more likely to be used to form the gallery.

Academic FRT generally train on datasets of images of public figures, such as the MS-Celeb-1M dataset, which contains ten million images of about 100,000 individuals. These massive datasets capture how a person’s appearance can vary with age, lighting, viewpoint, expression, and other conditions, which helps improve accuracy of systems trained on the datasets. Commercial systems do not generally provide details of their training sets, but it is expected that they include similarly large sets of images scraped from the internet, or provided by users, as in the case of Google and Meta. However, because these data sets are assembled at enormous scale—often from uncontrolled sources—they are difficult to audit, regulate, or correct when they embed systematic biases.

Face Recognition in Use Today

Face recognition use is increasing rapidly, becoming more prevalent in numerous high-stakes applications.

The global face recognition market was almost nine billion dollars in 2025, with projected growth to over 30 billion by 2034. Over a third of this market is in the U.S., but there is wide adoption of FRT around the world. One of the primary applications of face recognition is to efficiently and reliably identify people. This can make access to financial systems more secure, potentially preventing identity theft. It can also make hospital admissions quicker and more accurate, and speed up passport verification. In these applications, a human subject opts-in to using the FRT, cooperating to allow consistency in viewpoint, avoiding unusual facial expressions, and enabling controlled lighting. This leads to highly accurate systems. In many cases, such as using FRT to unlock cell phones, users opt-in to the technology for added convenience and device security. When entering the country, U.S. citizens may opt-in to face recognition systems, and their photos are deleted after 12 hours, while non-citizens are required to participate, with photos retained for 75 years.

Face recognition is also widely used in surveillance and law enforcement. Ten percent of U.S. police departments use FRT. The NYPD made 2,878 arrests resulting from FRT in the first five years of its use. The Metropolitan Police in London report 100 arrests using FRT in conjunction with mounted security cameras, including a suspect accused of kidnapping. Police in New Delhi used FRT to identify almost 3,000 missing children, and FRT has been used to identify refugee children who have been separated from their family. The National Center for Missing & Exploited Children (NCMEC) has used a tool called Spotlight, which makes use of FRT, to identify children who are victims of sex trafficking. In 2023, the FBI worked with NCMEC to identify or arrest 68 suspects of trafficking. A large number of retail stores use FRT to track customers to understand traffic patterns, and despite the Rite Aid case, retailers such as Wegmans still use FRT to spot accused shoplifters. Immigration and Customs Enforcement (ICE) is using FRT to identify people and determine their immigration status.

Face recognition has been widely used for surveillance of the Uyghur population by the Chinese government., FRT are used by the Israeli government to track and surveil Palestinians.

These applications of face recognition can solve crimes, enhance security and make access more convenient, but also raise troubling concerns about mass surveillance, repression of civil liberties, and high-stakes errors which materially harm people. In surveillance and criminal investigations, subjects are not cooperative, and probe images used are often of poor quality, as illustrated in Figure 1, which produces much higher error rates. An awareness of mass surveillance can also have a chilling effect on people’s ability and willingness to participate in Constitutionally protected activities such as protest or dissent.

As face recognition has grown more practical, a large number of companies have developed and marketed FRT. This includes large tech companies such as Amazon, Microsoft, Toshiba, NEC and Apple, and smaller companies that focus more narrowly on face recognition, biometrics and security, such as Clearview, Idemia, and Rank One Computing. Clearview is one of the most widely used by federal and local law enforcement in the U.S.

Early in the development of face recognition technology, the best performing systems were produced by academics and used openly available architectures and data. However, with its rapid commercial growth, state of the art FRT are generally developed by companies that provide little transparency about how they work or what data they use. As we will discuss in more detail, the National Institute of Standards and Technology evaluates the performance of some of these systems, but this evaluation is voluntary and not all companies participate.

Face Recognition Performance Across Different Conditions

Face recognition performance has improved rapidly, but recognition can still be quite difficult in many settings.

Two types of errors can occur in face recognition. With false positives, a FRT incorrectly states that two images come from the same individual. With false negatives, the system incorrectly states that two images do not come from the same individual. The cutoff is what determines the balance between false positives and false negatives. Tightening it makes the system more cautious about declaring a match (reducing false positives) but also more likely to miss legitimate matches (increasing false negatives).

Figure 3. The ACLU found that Amazon’s face recognition system matched 28 members of Congress to mugshots of other people.

The significance of this cutoff is illustrated well by the American Civil Liberty Union’s (ACLU’s) evaluation of Amazon’s FR system, “Rekognition” and the subsequent controversy. The ACLU reported that they had tested Rekognition, and that it incorrectly identified 28 members of Congress with people who had committed crimes (Figure 3). A significantly disproportionate number of these false matches were people of color. Amazon responded by arguing that although the ACLU had used the default cutoff, or confidence threshold, of 80% for Rekognition, this was more appropriate for finding celebrities on social media, and that their documentation recommended a much more stringent cutoff of 99% for use in high stakes applications such as law enforcement. Amazon also pointed out that the bias in the results may have been due to bias in the gallery of images used by the ACLU. If the ACLU compared images to a gallery that disproportionately contained people of color it would be more likely to produce false matches for people of color in congress. The ACLU replied by stressing the dangers of a system that was inaccurate with default thresholds and a lack of guidance for the system’s use.

One lesson from the Amazon Rekognition controversy is that the potential harms of an FRT depend not just on its technical accuracy but also on how users apply these systems. It also provides some indication that Rekognition was more prone to false positive errors when applied to people of color, at least at one significant cutoff threshold.

Figure 4. Three images of a researcher at the National Institute of Standards and Technology. The left image simulates a passport or similar photo, the middle image simulates images that might be taken while going through immigration, the right image simulates an image taken by a kiosk.

Figure 5. Two pairs of images, each pair shows the same person under identical imaging conditions except for a change in lighting (images from the Multi-PIE dataset).

Challenges in Real-World Face Recognition

The most rigorous experiments measuring face recognition accuracy are conducted under tightly controlled conditions. As a result, reported performance often overstates how systems perform in real-world settings, where error rates can be much higher.

The difficulty of face recognition tasks can vary widely. Frequently, identification is performed by performing verification between the probe image and all gallery images. Identification becomes more difficult as the gallery size grows and the number of opportunities for false positive matches increases. The difficulty of face recognition tasks also depends very much on the conditions under which images were taken. For example, in border control, the subject can be required to face the camera with their face fully visible, lighting can be controlled, and camera quality can be ensured.

Figure 4 shows that even images taken at a kiosk can be much harder to match, due, for example, to changes in viewpoint. Figure 5 illustrates the effect that a change of lighting can have on the difficulty of matching faces. As previously shown in Figure 1, when images come from surveillance cameras, the subject may not be facing the camera, they may not be close to the camera, so image resolution can be low, and their hair or hand or another object may obscure part of the face. Identification with poor imaging conditions may have many orders of magnitude more errors than verification under tightly controlled conditions.

By all metrics, there seems to be little doubt that face recognition accuracy has been improving rapidly. The National Institute of Standards and Technology (NIST) Face Recognition Vendor Test (FRVT) evaluations illustrate this increase (most recent results here). NIST evaluates verification performance on two high quality images of frontal facing individuals. From 2020 to 2025 the error rate fell by a factor of three. (They set a threshold for matching to achieve a false positive rate of 0.003%, so about one false identification in 33,000 attempted matches. They then measure the false negative rate, the number of correct matches missed. The best performing system as of January 2025 achieved a false negative rate of 0.13%, a little more than one correct match missed in 800.) Similarly, the error rate on an identification task that matched a mug shot probe image to a large gallery of mugshots fell by a factor of 5 during the same period. (The best performing method, when using a threshold to produce a false positive identification rate of 0.3%, had a false negative error rate of 0.05%. This means that the system would falsely identify a probe image in the gallery (of 1,600,000 mugshots) one time in about 300, while missing a correct match about one time in 2,000.) Some results are shown in Figure 6, as of March 2025. Over a period of decades, NIST has found that errors have generally fallen by about a factor of two every two years. Under controlled conditions, FRT are now much more accurate. For example, on the best performer as of March 30, 2026, when performing verification on two mugshots, using a cutoff set to make a false positive match one time in a million, a false negative failure to find a match will occur one time in 500. This sharp increase in accuracy in a short period has happened alongside widespread adoption in applications like border control or unlocking a phone.

These experiments represent relatively ideal conditions. FRT in the real world may face much higher failure rates. This can occur due to more challenging imaging conditions, such as using a surveillance image as a probe, instead of a mugshot, or other factors such as changes in the subject’s appearance. For example, when the best performing system at mugshot identification is applied in a scenario in which the gallery contains visa images and the probe is taken from a kiosk, the error rate increases by a factor of about 18 with a false negative error about one time in 30 instead of one time in 500. This is a fairly typical increase, and still represents relatively idealized conditions compared to the most challenging ones.

Defining and Measuring Bias in Face Recognition

Face recognition performs with different levels of accuracy on different demographic groups. As face recognition becomes more accurate, this may limit the effects of this disparity in some applications, but it can still be quite significant in high-stakes applications.

Going back more than 30 years, researchers have observed different rates of accuracy in face recognition systems depending on demographic properties of the subject, including race, gender and age. For example, in 2011 a study showed that Western face recognition algorithms performed better on Caucasian faces than East Asian faces, while East Asian face recognition systems performed better on East Asian faces than Caucasian ones. In 2018, the influential Gender Shades paper examined differential performance not in face recognition, but in a related facial analysis problem of determining gender from a face, showing much poorer performance on images of dark skinned females than light skinned males.

Absolute vs. Relative Error

In considering differential performance, it is important to distinguish between absolute and relative differences in performance. We define the absolute difference in two error rates as the difference between the larger and smaller error. For example, if an FRT produces 2% error on male faces and 4% error on female faces, we would say that the absolute difference is 4% – 2% = 2%. We describe the relative error as the ratio between the larger and smaller value, which in this case would be 4%/2% = 2. As overall performance improves, the absolute error tends to decrease, while the relative error rate might or might not decrease. For example, if a new generation of FRT reduces error on male faces to 1% and reduces error on female faces to 2%, absolute error decreases from 2% to 1%, while relative error remains constant.

Whether absolute or relative error is more important depends on the operational considerations and use of the system. When performance is very high, absolute error will tend to shrink. If this translates into operational settings, then relative error may become unimportant. For example, if an FRT makes a mistake once in a billion queries on one population, and twice in a billion on another, errors for either population may be so rare that they are insignificant. In practice, the impact of absolute error also depends on how widely deployed a system is. As systems become more accurate, they may become more widely deployed, which can paradoxically result in more accurate systems producing more errors.

Even though current FRT achieve quite low error rates under ideal conditions, these error rates tend to grow much higher under more challenging conditions, and errors can be quite common. Although it is difficult to study error rates accurately under the most challenging conditions, high relative error under ideal conditions may predict relative error that is just as high or higher under challenging conditions that also have high absolute error. That is, while absolute error in operational contexts is of greatest importance, relative error in highly controlled conditions may predict high absolute error in less controlled conditions. Consequently, it is premature to think that FRT are so accurate that relative error is no longer important. A more nuanced view would hold that continuingly high relative error rates may be less important for some applications, such as unlocking phones, and still be quite important in other applications, such as criminal investigations.

NIST Experiments on Demographic Variation

Since 2019 NIST has performed extensive evaluations of demographic variations in performance on hundreds of face recognition systems. They have access to large collections of non-public images that they use to evaluate FRT submitted by companies. The large size and private nature of the dataset makes it especially unlikely that models are overfit to the data by, for example, selecting parameters that boost their performance on this particular data. NIST computes false negative rates using over a million pairs of images, comparing one high quality image of an individual to a medium quality image of the same person. False positive rates are computed using over a billion pairs of high quality images from different individuals. Image quality reflects applications such as passport checks at airports, but does not include more challenging problems such as police investigations using surveillance footage. All images come with demographic information, including the age, gender and country of origin of the subject. Country of origin is used as a proxy for race, focusing on countries that are less racially diverse, but this is not a perfect proxy.

NIST finds a relatively small demographic variation in false negative rates, in which a correct match is missed, and a much larger variation in false positive rates, in which an incorrect match is accepted. For example, the top performing FRT as of March 2025 produced 358 times as many false positives for West African females over 65 as for Eastern European males aged 35-50, with the false match rate increasing from about one in 15,000 to about one in 50. Among the top ten performing systems, the false positive rate for all West Africans was about 23 times higher, on average, than the rate for Eastern Europeans. The false positive rate for these performers on average is about 4.6 times higher for females than males, and about 2.9 times higher for people over 65 compared to people aged 20-35. The evaluations also show poorer performance on people from South or East Asia, relative to Eastern Europeans. Many additional studies have also found that FRT generally perform better on white people than people from other racial groups, and on males compared to females.

These studies do have important limitations. More narrowly defined groups (e.g. West African women over 65) will have less data, leading to noisy estimates, and when we take the ratio of two noisy estimates we amplify the noise. Also, images taken in different countries may differ in ways beyond the race of the subject, such as in the types of cameras or lighting used. Also, incorrect labels may have a significant effect on accuracy. If a visa photo is associated with the wrong name, this can lead to a false match, and these incorrect labels may be more prevalent in some countries than others. Finally, measures of bias may vary depending on the specific ways in which performance is measured. The chief scientist of a leading face recognition company has stated that in practice they find differential performance between racial groups of a factor of approximately 1.5, rather than the higher numbers found in NIST studies. (Brendan Klare, personal communication.)

Challenges in Measuring Bias in Face Recognition

There is decades of evidence of differential performance of face recognition between demographic groups, particularly affecting non-white people and females. However, these studies generally make use of relatively high quality images, and may not accurately reflect the degree of differential performance in challenging operational cases, such as the use of surveillance footage in criminal investigations or in identifying people on a watch list. This is due to the fact that it is quite difficult to accurately characterize and sample images from challenging environments. And while large scale photo collections with known identities and some demographic information exist, such as passport photos, we do not have large scale collections of photos taken in challenging conditions that have this information. While this problem is elusive, there is some evidence that differential performance increases with the difficulty of the recognition task.

Another limitation occurs because races are not well-defined biological categories but social constructs. It is not clear how to systematically divide a population into different races, especially in the case of multi-racial individuals. This is particularly challenging when images are scraped from the internet, and need to be labeled by race. Some studies have focused on skin darkness rather than race, but this is also difficult to determine accurately from photos due to the effect of unknown lighting conditions on apparent skin color. In spite of these limitations, there is a clear consensus among researchers that differences in FRT performance exist between racial groups.

An important question is how differential performance in face recognition is evolving over time. Is this a problem that was initially ignored, but is now being effectively addressed, or one that is recalcitrant? While there is no question that absolute differences in accuracy are shrinking over time, as FRT become more accurate, the behavior of relative differences is less clear. This is difficult to judge, since new test sets come out frequently, and experimental performance is generally measured over an ever changing landscape of conditions. Perhaps the most stable evaluation framework is NIST’s, which has consistently evaluated new FRT under the same conditions including systems developed from 2018 to 2026. Some of the top performing FRT have evolved, with multiple versions being released over this time period. When we examine these, we see that some have significantly reduced the amount of bias over time, while others have not, and have even seen increased bias. This suggests that it may be possible to reduce systematic bias through model design. More details can be found in the appendix.

Sources of Bias in Face Recognition Systems

Bias in face recognition systems arises from a combination of imbalanced training data, differences in image quality and gallery composition, and other technical and operational factors that are difficult to fully control or eliminate.

False negatives often arise when image quality is poor or facial features are obscured, while false positives are more likely when different individuals appear similar to the system, which can be exacerbated by limitations in training data or representation. For example, if we compare two images of the same person, and one of these images is blurry or has bad lighting or low resolution, the images may appear dissimilar due to these effects. FRT are trained to be somewhat robust to changes in viewing condition, but they are still likely to make errors when these changes are large. On the other hand, if a system is trained using few images of one demographic group, the system may not learn representations that distinguish between a wide range of appearances within that group. For example, if one trained an FRT using images of only one Black person, the system would likely learn to associate dark skin with that individual, and would not learn features that effectively distinguish between different Black people. This is an extreme example, but it is generally found that deep neural networks become more effective as the amount of relevant data increases.

We focus on false positive errors, as these show the greatest differences across demographic groups and are most closely associated with documented harms, such as wrongful arrests. In this section, we will discuss two key points. First, while it may be straightforward to improve demographic balance in datasets, completely eliminating demographic bias is complex and difficult. Second, while demographic bias in the data may be responsible for some bias in false positives, it is not necessarily the only source of these differences. Various research results present conflicting evidence of the importance of dataset bias in practice.

The Contribution of Dataset Bias

Face datasets collected in the last 15-20 years have generally consisted of images scraped from the internet. This enables the creation of large scale datasets that capture a wide range of variations in viewing conditions. These datasets often used well-known people with many online photos, without specific regard to accurately representing the distribution of people of different races or genders in the population as a whole. For example, an early and very influential dataset, Labeled Faces in the Wild (LFW), consisted of 77.5% images of men and 22.5% images of women. LFW was based on people who had appeared in Yahoo! news stories that were identified in captions, making it easier to build a large dataset of known people. However, these people were obviously not representative of the overall population.

Some more recent datasets pay closer attention to capturing the true distribution of people in the world. However, creating unbiased datasets can sometimes be a subtle and difficult problem. For example, the BUPT-Balancedface (BUPT) dataset was constructed to have equal numbers of images of Caucasian, Indian, Asian and African faces. However, subsequent analysis revealed that the Asian and Indian faces consistently appeared as a larger size in the dataset. So although the number of images was balanced, the viewing conditions of the images could still vary significantly. This discrepancy might, for example, lead to biased performance at test time.

The reason for systematic biases in datasets is often not well understood, but it is plausible that when scraping images from the internet, photos from different countries might follow different conventions, use different cameras, or differ in myriad other ways. Therefore, to judge whether a dataset is biased is not as simple as counting the number of images from each population.

A deeper difficulty is even defining what it means to have an unbiased dataset. BUPT represented four demographics equally. But it is unclear what should count as a racial category. For example, should Asian faces be counted as one category? Should Chinese and Japanese people be considered two separate racial categories? What about multiracial individuals? The concept of race is not biological, but a social construct that is not well defined. It is also problematic to correctly label the racial origins of large scale datasets, which may contain images of millions of people. It seems clear that paying attention to demographic diversity will produce less biased datasets than building datasets based on arbitrary selection of celebrities. However, it is also clear that creating completely unbiased datasets is an ill-defined problem. Even with a given definition of “unbiased” it remains very challenging and beyond current technology.

There is certainly strong evidence that dataset bias can produce differential performance, and bias can be reduced through improving the training data balance. It has been found that while Western face recognition algorithms perform better on Caucasian faces than on East Asian faces, algorithms developed in East Asia perform better on East Asian faces, a result that is likely due to dataset bias. After the Gender Shades paper demonstrated that Microsoft’s gender identification algorithm performed much more poorly on Black women than white men, Microsoft quickly improved performance dramatically on Black women by balancing its datasets.

Differential performance can also occur because of biases in the gallery data or probe data. When the gallery is formed from images scraped from the internet, the properties and number of these images may vary drastically from individual to individual, or even from group to group. It has been shown, for example, that if one group is more highly represented in the gallery, this will lead to more false positives among that group because there is greater potential for the gallery to contain faces similar to the probe. As another example, if one group, such as women, frequently have longer hair that covers more of their face in the probe image, this can also lead to higher error rates. Also, if a gallery image is of low quality, not showing a clear image of the face, it may be matched to a similar low quality probe image of a different person. Rite Aid’s use of low-quality images in its gallery is believed to have contributed to the large number of false matches it produced, which in turn led to customers—disproportionately in non-white neighborhoods—being wrongly flagged, confronted, and sometimes expelled from stores. When companies such as Clearview make use of billions of images scraped from the internet it is extremely challenging to balance these datasets or ensure uniformity in their quality.

Assessing dataset bias in commercial systems is complicated further by the fact that companies generally do not make their datasets publicly available or disclose many details about them. Moreover, NIST experiments on dataset bias do not make use of the galleries used by commercial systems. Therefore any bias due to galleries would not be detected.

Sources of Bias Beyond the Data

Other factors besides data may also significantly influence differential performance. Some experiments have shown that even balanced datasets do not produce equal performance on men and women, or between races, and that sometimes more biased datasets produce less biased and better results. Furthermore, demographic groups may have properties that make them easier or harder to recognize. For example, there may be greater variation in hairstyle in one gender than another, and males in different countries may have different trends in facial hair. If someone has an unusual beard, for example, this may make him easier to recognize, or harder to recognize if he shaves his beard. It is difficult to determine the effects on differential performance of social conventions affecting appearance. It has also been noted that darker skin may require different types of lighting to bring out the facial structure. This could result in more recognition errors for people with darker skin when lighting is not controlled.

In summary, it is clear that extreme dataset bias produces biased results. It is quite challenging to produce perfectly unbiased datasets, and less clear to what extent the differential performance observed in modern face recognition systems may be due to dataset bias, especially since these systems are built with proprietary data that is not open to public examination.

Reductions in Bias Over Time

From a policy perspective, perhaps the most important question is whether companies have the ability to produce less biased FRT. To address this question we examined NIST measurements of the performance of models produced by leading companies. NIST has assessed the degree of bias in multiple models produced over time by some companies, allowing us to see how their performance has evolved. Based on NIST reports, we find that some companies have significantly reduced the absolute and relative bias in their systems in two or three years after initial evaluation, while other companies have not reduced relative bias, and in some cases it has increased, even while absolute bias decreases due to improved overall accuracy. Details of this analysis may be found in the appendix.

These results suggest that companies are capable of reducing bias, although this is certainly not definitive. In a conversation with one of the authors, the chief scientist at a leading face recognition company confirmed that NIST evaluations have helped them identify certain variants of differential performance between racial groups, enabling them to take effective steps to proactively identify and reduce bias whenever the company becomes aware of it. (Brendan Klare, personal communication.)

The Human Factor: Face Recognition Systems as part of a Socio-Technical System

Many errors in face recognition are due not just to mistakes by the technology, but to the way in which people make use of it.

The preceding sections focused on the technical properties of face recognition systems. However, these systems do not operate in isolation. They are embedded in what researchers call a sociotechnical system, in which the technology interacts with human judgment and organizational practices. The real-world effects of face recognition therefore depend not only on technical FRT performance, but also on how human users interpret and act on its results. In practice, this interaction can create distinctive failure modes. For example, users may rely too heavily on algorithmic matches without considering other evidence or fail to appreciate how image quality and threshold choices affect reliability.

Limitations of Human Oversight

Some authors argue that these human factors can be structured to correct for technical weaknesses in face recognition systems. One commentator contends that: “it is stunningly easy to build protocols around face recognition that largely wash out the risk of discriminatory impacts…. A simple policy requiring additional confirmation before relying on algorithmic face matches would probably do the trick… one has to wonder why so few researchers who identify bias in artificial intelligence ever go on to ask whether the bias they’ve found could be controlled with such measures.”

However, empirical evidence suggests that this confidence in human oversight may be misplaced. First, FRT tends to make errors on difficult cases, in which humans also make errors. Studies show that humans are unable to identify many of the errors made by automatic systems. Furthermore, human performance on face recognition suffers from similar differential performance as machine learning systems. Dubbed the other-’race’ effect, it has long been known that humans are more accurate in recognizing faces from their own race than from others (it has been posited that this also stems from dataset bias, in that people encounter more individuals of their own race than of others). Some work indicates that current automated systems recognize faces more accurately than the typical person, and that in some cases, combining a less effective human judgement with an automatic system may actually lead to lower accuracy than simply using the results of the automatic system. Human judgements can in some cases be used to improve algorithmic accuracy but it may be difficult to determine when that is the case. In general, we cannot assume that human judgements will be accurate or that human oversight can be counted on to correct errors made by automatic systems.

Figure 7. Christopher Gaitlin, right, was identified using the security photo on the left.

User Errors

Consistent with these findings, many of the known cases of false arrests due to FRT errors involved questionable practices by investigators. Christopher Gatlin was arrested for the brutal assault of a security guard, after an FRT flagged him as a possible suspect, based on a low quality image (Figure 7). Police steered the security guard to identify Gatlin, in what they later admitted was improper behavior.

Robert Williams was arrested for burglary one year after the crime, based on applying FRT to a surveillance video. Lacking witnesses, police showed the surveillance video to an employee of the store’s insurance company, who identified Williams from a photo array, although the video was of poor quality and his face was obscured by a shadow (Figure 1). The police failed to take basic steps such as investigating Williams’ alibi. The police chief at the time, James Craig, said that “this was clearly sloppy, sloppy investigative work.” In other cases, police have shown a single suspect’s photo to a witness, violating best practices by being unduly suggestive. This led to an arrest despite the suspect’s convincing alibi.

In cases where FRT lead to false arrests, it seems that police may in fact give undue weight to the results of FRT, rather than catching their errors, an example of “automation bias”. In another case in which recommended procedures were not followed, police were unable to obtain face recognition results due to the low quality of the surveillance image. A detective felt that the surveillance image resembled the actor Woody Harrelson, and used a picture of him to search for matches, rather than the suspect’s photo.

Failures in the use of FRT occur not only in police investigations. In the Rite Aid case mentioned in the introduction, the FTC’s complaint highlighted not just algorithmic errors but significant governance failures in how the system was operated by store employees. The commission found that Rite Aid did not take reasonable steps to train or oversee store employees who were responsible for acting on match alerts, including failing to teach staff how to interpret alerts or warn them that false positives could occur. The company also failed to test or monitor the technology’s accuracy once deployed, enforce image-quality standards, or implement any procedure for tracking false positive alerts and employee responses. As a result, employees in hundreds of stores routinely followed, confronted, searched, or even called police on customers based solely on system alerts—actions taken without meaningful training on the system’s limitations or appropriate safeguards. These shortcomings in training, oversight, and procedural controls were central to the FTC’s determination that Rite Aid had failed to prevent foreseeable consumer harm from the technology’s use.

In summary, it may be difficult for humans to correct mistakes made by algorithms, and in some cases they may place undue confidence on FRT results that are questionable and based on low quality images. In many applications, such as drug stores that are looking for known shop lifters, the people making use of FRT may not be expert investigators or well trained in the appropriate use of these systems.

Policy Interventions to Address Bias in Face Recognition Systems

Many errors can be addressed by better understanding and regulation of the way in which the technology is used.

A wide variety of policy interventions are available to deal with potential harms caused by bias in FRT. These include research, transparency in documenting bias, voluntary or mandatory guidelines governing the use of face recognition, and outright bans on the use of face recognition in certain contexts. As noted above, FRT make positive contributions in law enforcement and other applications, and these positives must be weighed against potential harms in crafting policy. Numerous institutions have suggested policy changes to address bias in FRT, including a comprehensive set of proposals in a recent report from the National Academies.

Research

Federal agencies already support substantial research on face recognition. NIST conducts ongoing evaluations of performance and demographic disparities, and agencies such as the Office of the Director of National Intelligence (ODNI) and the Intelligence Advanced Research Projects Activity (IARPA) have funded foundational research in face recognition systems. However, important gaps remain, particularly in understanding how these systems perform under operational conditions and how human users interact with their outputs. Additional federal funding could expand independent research in these areas, either by strengthening NIST’s evaluation programs or by supporting academic and nonprofit research focused specifically on bias mitigation and real-world deployment risks.

Two research priorities are especially important. First, evaluation frameworks should better reflect real-world conditions. Current large-scale benchmarks often rely on relatively high-quality images, whereas many high-stakes uses—such as criminal investigations—depend on low-resolution or poorly lit surveillance images. While efforts such as the IARPA Janus Surveillance Video Benchmark (IJB-S) dataset have begun to address this issue, broader and more systematic testing under operational conditions would provide policymakers with a clearer understanding of real-world risk.

Second, research is needed to develop tools that help human operators interpret and appropriately limit their reliance on face recognition results. For example, systems could assess probe image quality, estimate the likelihood that a reliable match can be produced, and warn users when results are unlikely to be dependable. Such tools could reduce the risk that investigators or retail employees draw strong conclusions from low-quality, unreliable inputs.

Measure and Reduce Bias

A better understanding of the bias in FRT can inform the procurement decisions of potential customers and encourage companies to take steps to reduce bias. Transparency in bias can be promoted in a number of ways. NIST is already conducting regular and impactful evaluations of bias in FRT, which can be thought of as an application of the Common Task Method (such evaluations have long been common in the computer vision community). This can be continued and potentially expanded. Regulations or government procurement guidelines can be used to incentivize or require companies to participate in evaluations and make these results public. Since criminal investigations are conducted by the government, procurement guidelines are a strong potential lever in promoting transparency. In addition to transparency in performance, these approaches could also be used to promote transparency in the data used to train FR systems. Making training data public may raise significant privacy concerns, but the government could incentivize the release of information describing the data and the steps taken to enhance the demographic balance of these data sets.

Regulate Sociotechnical use of Face Recognition

If we view FR as part of a sociotechnical system, it makes sense also to govern the way in which face recognition is applied, not just the technical performance of the underlying algorithm. In practice, “responsible use” protocols need to specify who can run searches, what minimum image-quality standards apply, what form results can take, and what documentation and oversight are required. They should also define the permissible purposes for which searches may be conducted, restrict access to trained and certified personnel, require supervisory approval for high-stakes uses, and mandate that face recognition results be treated only as investigative leads rather than as dispositive evidence. Protocols can require minimum similarity thresholds below which no candidate match is returned, prohibit the use of face recognition on images that fall below objective quality metrics, and require contemporaneous documentation explaining why a search was initiated and how results were interpreted.

Additional safeguards could include audit trails of all searches and outcomes, periodic independent audits of performance and demographic disparities, disclosure requirements when face recognition contributed to an arrest or charging decision, and exclusionary consequences if required procedures are not followed. Agencies could also be required to collect and publish aggregate statistics on the number of searches conducted, the rate at which matches lead to arrests, and the frequency of erroneous identifications.

As an example of governance procedures, the FBI has established guidelines on the use of face recognition. These include limiting situations in which it can be used and the type of probe images used. They require that all face queries be evaluated by trained examiners and mandate that face recognition be used for investigative leads that must be corroborated.

As another example, the New York City police department (N.Y.P.D.) has spelled out a detailed protocol for the use of FRT. This requires investigators to submit face images to a special facial identification section of the department (the Real Time Crime Center, Facial Identification Section) that will, for example, ensure that image quality is sufficient and that use of FRT is warranted. The section can reject unsuitable probe images and reviews matches. Critically, a “possible match candidate” is meant to be “treated as an investigative lead only” and does not establish probable cause to make an arrest. The unit also retains records of searches and results. It has been reported that in other localities, investigating officers have accessed FRT directly, without supervision. Specific requirements could be mandated, with legal consequences if they are not followed, such as disallowing evidence produced in subsequent investigation.