To tune in to the action on the ground, we convened practitioners, state and local officials, advocates, and policy experts to discuss what it will actually take to deploy clean energy faster, modernize electricity systems, and lower costs for households.

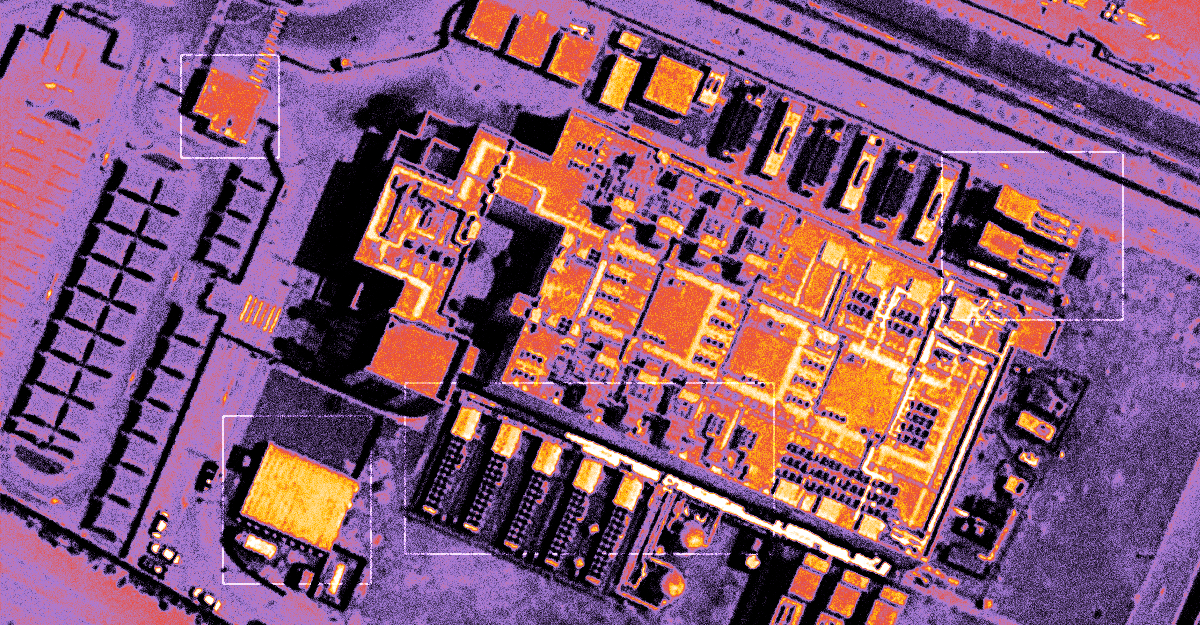

From grassroots community impacts to global geopolitical dynamics, understanding how data center capacity is developing is emerging as a critical analytical challenge.

Over the past few months, the Trump administration has been laying the foundation to expand the use of the Defense Production Act (DPA) for energy infrastructure and supply chains.

Get it right, and pooled hiring becomes a model for how the federal government decides what to do together and what to do apart. That’s a bigger prize than faster hiring. It’s a more functional government.